Large language models (LLMs) like GPT-4, LaMDA, PaLM, and others have taken the world by storm with their remarkable ability to understand and generate human-like text on a seemingly unlimited range of topics. These models are pre-trained on massive datasets comprising billions of words from the web, books, and other sources.

This pre-training phase imbues the models with extensive general knowledge about language and many topics, reasoning abilities, and even certain biases present in the training data. However, despite their impressive breadth, these pre-trained LLMs lack specialized expertise for specific domains or tasks.

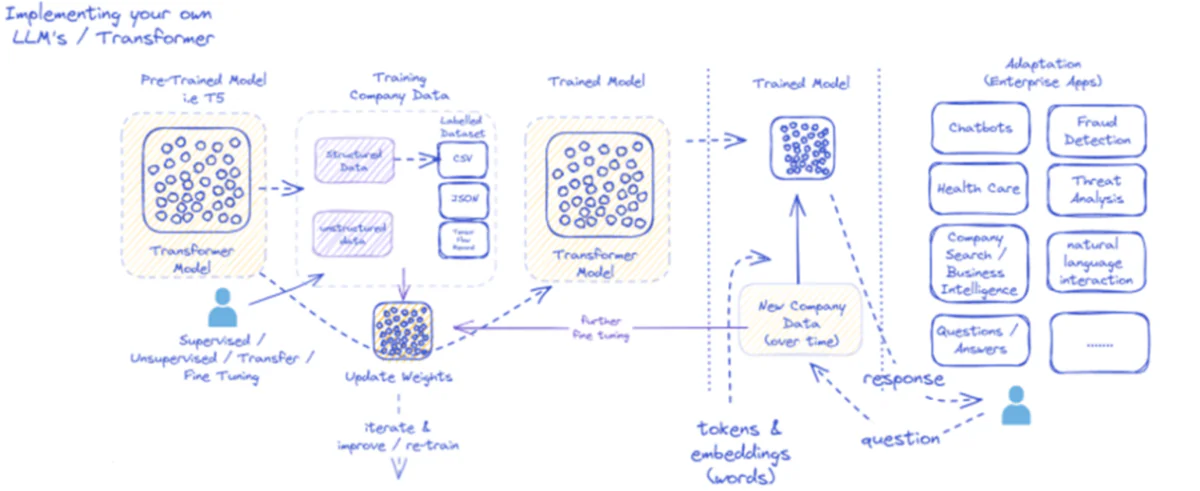

That is where fine-tuning comes in: the process of adapting a pre-trained LLM to excel at a specific application or use case. By further training the model on a smaller, task-specific dataset, we can tune its capabilities to align with the nuances and requirements of that domain.

Fine-tuning is analogous to taking a highly educated generalist with wide-ranging knowledge and training them into a subject matter expert in a particular field. In this guide, we’ll explore the whats, whys, and hows of fine-tuning LLMs.

Fine-tuning Large Language Models

What’s Fine-Tuning?

At its core, fine-tuning involves taking a large pre-trained model and updating its parameters in a second training phase on a dataset tailored to your target task or domain. This enables the model to learn and internalize the nuances, patterns, and objectives specific to that narrower area.

While pre-training captures broad language understanding from an enormous and diverse text corpus, fine-tuning specializes that general competency. It’s akin to taking a Renaissance man and molding them into an industry expert.

The pre-trained model’s weights, which encode its general knowledge, are used as the starting point (initialization) for the fine-tuning process. The model is then trained further, but this time on examples directly relevant to the end application.

By exposing the model to this specialized data distribution and tuning the model parameters accordingly, we make the LLM more accurate and effective for the target use case, while still benefiting from its broad pre-trained capabilities as a foundation.
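To make the idea of pre-trained weights as a starting point concrete, here is a deliberately tiny sketch in plain Python. It is an illustration under stated assumptions, not how LLMs are actually trained. The “model” is a one-dimensional linear predictor. The “pre-trained” weights and the task data are made-up numbers. Fine-tuning is plain gradient descent on mean squared error.

```python
# Toy illustration of fine-tuning: start from "pre-trained" weights and keep
# training them with gradient descent on a small task-specific dataset.
# The model is a 1-D linear predictor y = w * x + b; all numbers are made up.

def mse_and_grads(w, b, data):
    """Mean squared error and its gradients with respect to w and b."""
    n = len(data)
    loss, grad_w, grad_b = 0.0, 0.0, 0.0
    for x, y in data:
        err = (w * x + b) - y
        loss += err * err / n
        grad_w += 2 * err * x / n
        grad_b += 2 * err / n
    return loss, grad_w, grad_b


def fine_tune(w_init, b_init, data, lr=0.05, steps=200):
    """Initialize from pre-trained weights, then run plain gradient descent."""
    w, b = w_init, b_init
    for _ in range(steps):
        _, gw, gb = mse_and_grads(w, b, data)
        w -= lr * gw
        b -= lr * gb
    return w, b


# Hypothetical "pre-trained" weights learned on broad data (roughly y = 2x).
w_pre, b_pre = 2.0, 0.0
# Hypothetical task-specific data drawn from y = 2.5x + 1.
task_data = [(x, 2.5 * x + 1.0) for x in [0.0, 0.5, 1.0, 1.5, 2.0]]

loss_before, _, _ = mse_and_grads(w_pre, b_pre, task_data)
w_ft, b_ft = fine_tune(w_pre, b_pre, task_data)
loss_after, _, _ = mse_and_grads(w_ft, b_ft, task_data)
print(f"loss before: {loss_before:.4f}, after: {loss_after:.6f}")
```

The only thing that distinguishes this from training from scratch is the initialization: `fine_tune` starts from `w_pre` and `b_pre` instead of random values. With an LLM, the same idea applies across billions of parameters and a language-modeling or task-specific loss.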

Why Fine-Tune LLMs?

There are several key reasons why you may want to fine-tune a large language model:

- Domain Customization: Every field, from law to medicine to software engineering, has its own nuanced language conventions, jargon, and contexts. Fine-tuning lets you customize a general model to understand and produce text tailored to a particular domain.

- Task Specialization: LLMs can be fine-tuned for various natural language processing tasks such as text summarization, machine translation, and question answering. This specialization boosts performance on the target task.

- Data Compliance: Highly regulated industries like healthcare and finance have strict data privacy requirements. Fine-tuning allows training LLMs on proprietary organizational data while protecting sensitive information.

- Limited Labeled Data: Obtaining large labeled datasets for training models from scratch can be difficult. Fine-tuning can achieve strong task performance from a limited number of supervised examples by leveraging the pre-trained model’s capabilities.

- Model Updating: As new data becomes available in a domain over time, you can fine-tune models further to incorporate the latest knowledge and capabilities.

- Mitigating Biases: LLMs can pick up societal biases from broad pre-training data. Fine-tuning on curated datasets can help reduce and correct these undesirable biases.

In essence, fine-tuning bridges the gap between a general, broad model and the focused requirements of a specialized application. It enhances the accuracy, safety, and relevance of model outputs for targeted use cases.


Fine-Tuning Approaches

There are two primary strategies when it comes to fine-tuning large language models:

1) Full Model Fine-tuning

In the full fine-tuning approach, all of the parameters (weights and biases) of the pre-trained model are updated during the second training phase. The model is exposed to the task-specific labeled dataset, and the standard training process optimizes the entire model for that data distribution.

This enables the model to make more comprehensive adjustments and adapt holistically to the target task or domain. However, full fine-tuning has some downsides:

- It requires significant computational resources and time to train, because every training step must compute gradients and optimizer state for all parameters, much as in the pre-training phase.

- The storage requirements are high, as you need to maintain a separate fine-tuned copy of the model for every task.

- There’s a risk of “catastrophic forgetting”, where fine-tuning causes the model to lose some general capabilities learned during pre-training.
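The cost and storage downsides of full fine-tuning can be put into rough numbers. The sketch below uses several illustrative assumptions:

- a hypothetical 7-billion-parameter model;
- 16-bit weights on disk (2 bytes per parameter);
- a common rule of thumb of about 16 bytes per parameter for mixed-precision training with Adam. That figure covers 16-bit weights and gradients, 32-bit master weights, and two 32-bit moment estimates.

Activations are ignored, and real footprints depend on the framework and training setup.

```python
# Back-of-the-envelope resource estimates for full fine-tuning.
# Assumptions (illustrative only): 2 bytes per parameter for stored fp16/bf16
# weights, and ~16 bytes per parameter of training state for mixed-precision
# Adam (2 weights + 2 grads + 4 fp32 master weights + 4 + 4 Adam moments).
# Activation memory is ignored.

def checkpoint_storage_gb(num_params, num_tasks, bytes_per_param=2):
    """Disk space to keep one fully fine-tuned copy of the model per task."""
    return num_params * bytes_per_param * num_tasks / 1e9


def training_state_gb(num_params, bytes_per_param=16):
    """GPU memory for weights, gradients and Adam state, excluding activations."""
    return num_params * bytes_per_param / 1e9


num_params = 7e9  # hypothetical 7B-parameter model
for tasks in (1, 5, 20):
    print(f"{tasks:>2} task(s): {checkpoint_storage_gb(num_params, tasks):.0f} GB of checkpoints")
print(f"training state: about {training_state_gb(num_params):.0f} GB before activations")
```

Under these assumptions, each additional task adds another full checkpoint of roughly 14 GB. That duplication is what the parameter-efficient methods below avoid.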

Despite these limitations, full fine-tuning remains a powerful and widely used technique when resources permit and the target task diverges significantly from general language.

2) Efficient Fine-Tuning Methods

To overcome the computational challenges of full fine-tuning, researchers have developed efficient strategies that update only a small subset of the model’s parameters (or a small number of newly added ones) during fine-tuning. These parameter-efficient techniques strike a balance between specialization and reduced resource requirements.

Some popular efficient fine-tuning methods include:

Prefix-Tuning: Here, a small number of task-specific vectors or “prefixes” are introduced and trained to condition the pre-trained model’s attention for the target task. Only these prefixes are updated during fine-tuning.
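Below is a minimal sketch of the prefix idea in plain Python with made-up numbers. It uses a single attention head for one query vector, where trainable prefix key and value vectors are prepended to the frozen model’s keys and values. This is a simplification: prefix-tuning as originally proposed adds prefixes at every layer of the network. The function names and example vectors are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, k) / scale for k in keys])
    return [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]


def attend_with_prefix(query, keys, values, prefix_keys, prefix_values):
    """Prefix-tuning: prepend trainable key/value vectors to the frozen ones.

    Only prefix_keys and prefix_values would be updated during fine-tuning;
    the query, keys and values still come from the frozen pre-trained model.
    """
    return attend(query, prefix_keys + keys, prefix_values + values)


# Frozen model activations for a toy two-token sequence (made-up numbers).
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0]]

# One hypothetical learned prefix key/value pair.
prefix_keys = [[2.0, 0.0]]
prefix_values = [[0.0, 5.0]]

plain = attend(query, keys, values)
steered = attend_with_prefix(query, keys, values, prefix_keys, prefix_values)
print(plain, steered)
```

The prefix vectors sit on the key/value side of attention, so they steer what every real token attends to without touching any pre-trained weight.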

LoRA (Low-Rank Adaptation): LoRA injects trainable low-rank matrices alongside selected weight matrices in each layer of the pre-trained model (commonly the attention projections) during fine-tuning. These low-rank updates help specialize the model with far fewer trainable parameters than full fine-tuning.

Let’s look at LoRA (Low-Rank Adaptation) in more detail, including its mathematical formulation and a code example. LoRA is a popular parameter-efficient fine-tuning (PEFT) technique that has gained significant traction in the field of large language model (LLM) adaptation.

What’s LoRA?

LoRA is a fine-tuning method that introduces a small number of trainable parameters to the pre-trained LLM. This allows efficient adaptation to downstream tasks while preserving most of the original model’s knowledge. Instead of fine-tuning all of the parameters of the LLM, LoRA injects task-specific low-rank matrices into the model’s layers, enabling significant computational and memory savings during the fine-tuning process.

Mathematical Formulation

LoRA (Low-Rank Adaptation) is a fine-tuning method for large language models (LLMs) that introduces a low-rank update to the weight matrices. For a pre-trained weight matrix $W_{0} \in \mathbb{R}^{d \times k}$, LoRA adds a low-rank matrix $BA$, with $B \in \mathbb{R}^{d \times r}$ and $A \in \mathbb{R}^{r \times k}$, where the rank $r \ll \min(d, k)$. During fine-tuning, $W_{0}$ stays frozen and only $A$ and $B$ are trained. This approach significantly reduces the number of trainable parameters, enabling efficient adaptation to downstream tasks with minimal computational resources. The updated weight matrix is given by $W = W_{0} + BA$.

This low-rank update can be interpreted as modifying the original weight matrix $W_{0}$ by adding a low-rank matrix $BA$. The key advantage of this formulation is that instead of updating all $d \times k$ parameters in $W_{0}$, LoRA only needs to optimize $r \times (d + k)$ parameters in $A$ and $B$. This significantly reduces the number of trainable parameters.
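The formulation can be checked with a small pure-Python sketch that assumes no deep-learning library. The sketch computes $h = W_{0}x + \frac{\alpha}{r}BAx$. The extra scaling factor $\alpha / r$ follows the original LoRA paper and corresponds to the `lora_alpha` setting in the peft library. With $\alpha = r$, it reduces to simply adding $BA$ to $W_{0}$, as described above. The matrices are made-up examples, and the helper names are hypothetical.

```python
def matvec(M, v):
    """Matrix (list of rows) times vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def matmul(X, Y):
    """Matrix product of two matrices given as lists of rows."""
    cols = list(zip(*Y))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in X]


def lora_forward(W0, A, B, x, alpha, r):
    """h = W0 x + (alpha / r) * B (A x). W0 is frozen; only A and B are trained.

    A (r x k) projects the input down to rank r and B (d x r) projects back up,
    so the full d x k update BA never has to be materialized.
    """
    base = matvec(W0, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [h0 + scale * u for h0, u in zip(base, update)]


def merge_lora(W0, A, B, alpha, r):
    """Fold the update into a single matrix W = W0 + (alpha / r) * B A."""
    scale = alpha / r
    BA = matmul(B, A)
    return [[w + scale * u for w, u in zip(w_row, u_row)] for w_row, u_row in zip(W0, BA)]


def lora_param_counts(d, k, r):
    """Trainable parameters: full update (d * k) versus LoRA factors r * (d + k)."""
    return d * k, r * (d + k)


# Toy example with d = 3, k = 2, r = 1 (made-up numbers).
W0 = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
A = [[0.5, -1.0]]            # r x k
B = [[1.0], [0.0], [2.0]]    # d x r
x = [1.0, 1.0]

h = lora_forward(W0, A, B, x, alpha=1, r=1)
h_merged = matvec(merge_lora(W0, A, B, alpha=1, r=1), x)

# With B initialized to zeros, the adapted layer starts out identical to W0.
B_zero = [[0.0] for _ in range(3)]
h_init = lora_forward(W0, A, B_zero, x, alpha=16, r=1)

full, lora = lora_param_counts(4096, 4096, 8)
print(h, h_merged, h_init, full, lora, full // lora)
```

The merge step reflects a practical property noted in the LoRA paper: after training, $BA$ can be added into $W_{0}$, so inference needs no extra matrix multiplications. Consider a 4096 × 4096 projection with $r = 8$. Full fine-tuning would train 16,777,216 parameters, while LoRA trains 65,536, a 256× reduction for that matrix.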

Here’s an example in Python using the peft library to apply LoRA to a pre-trained LLM for text classification:

In this example, we load a pre-trained BERT model for sequence classification and define a LoRA configuration. The r parameter specifies the rank of the low-rank update, and lora_alpha is a scaling factor for the update. The target_modules parameter indicates which layers of the model should receive the low-rank updates. After creating the LoRA-enabled model, we can proceed with fine-tuning using the standard training procedure.

Adapter Layers: Similar to LoRA, but instead of low-rank updates, thin “adapter” layers are inserted within each transformer block of the pre-trained model. Only the parameters of these few new compact layers are trained.
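A bottleneck adapter can be sketched in a few lines of plain Python. This follows the common design of a down-projection, a nonlinearity, an up-projection, and a residual connection. Several details are simplifying assumptions: the exact placement inside the transformer block, the choice of ReLU, and the omission of bias terms. The weights are made up.

```python
def matvec(M, v):
    """Matrix (list of rows) times vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def relu(v):
    return [max(0.0, x) for x in v]


def adapter(h, W_down, W_up):
    """Bottleneck adapter: h + W_up @ relu(W_down @ h).

    W_down (m x d) squeezes the hidden state to a small width m and
    W_up (d x m) expands it back. Only W_down and W_up are trained; the
    surrounding transformer weights stay frozen.
    """
    z = relu(matvec(W_down, h))
    return [a + b for a, b in zip(h, matvec(W_up, z))]


def adapter_param_count(d, m):
    """Weights in one adapter (biases omitted)."""
    return 2 * d * m


h = [1.0, -2.0, 0.5, 3.0]                      # hidden state, d = 4
W_down = [[1.0, 0.0, 0.0, 0.0],                # bottleneck width m = 2
          [0.0, 1.0, 0.0, 0.0]]
W_up = [[0.5, 0.0], [0.0, 0.0], [0.0, 0.0], [0.0, 0.0]]
W_up_zero = [[0.0, 0.0] for _ in range(4)]

print(adapter(h, W_down, W_up))       # adapter nudges the hidden state
print(adapter(h, W_down, W_up_zero))  # zero up-projection: identity mapping
print(adapter_param_count(4096, 64))
```

Because of the residual connection, an adapter whose up-projection starts near zero behaves almost like the identity. The pre-trained model’s behavior is therefore preserved until training moves the adapter weights.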

Prompt Tuning: This approach keeps the pre-trained model completely frozen. Instead, trainable “prompt” embeddings are introduced as input to activate the model’s pre-trained knowledge for the target task.
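The following plain-Python sketch illustrates the mechanics under heavy simplification. The frozen stand-in “model” mean-pools its input vectors and scores them against a fixed weight vector. A frozen embedding table supplies the token vectors, and two trainable soft-prompt vectors are prepended to them. Gradient descent updates only the soft prompt. The model, the squared-error loss, and all numbers are hypothetical.

```python
def frozen_score(inputs, w):
    """Stand-in for a frozen model: mean-pool input vectors, then dot with w."""
    n = len(inputs)
    pooled = [sum(vec[j] for vec in inputs) / n for j in range(len(w))]
    return sum(p * wj for p, wj in zip(pooled, w))


def train_soft_prompt(token_ids, table, soft_prompt, w, target, lr=0.5, steps=100):
    """Gradient descent on (score - target)^2 with respect to the prompt only."""
    prompt = [list(p) for p in soft_prompt]  # trainable copy; table and w stay fixed
    n = len(prompt) + len(token_ids)
    for _ in range(steps):
        inputs = prompt + [table[t] for t in token_ids]
        err = frozen_score(inputs, w) - target
        # d loss / d prompt[i][j] = 2 * err * w[j] / n for every prompt vector i
        for p in prompt:
            for j in range(len(p)):
                p[j] -= lr * 2 * err * w[j] / n
    return prompt


table = [[0.1, 0.2], [0.3, -0.1], [-0.2, 0.4]]  # frozen token embeddings
w = [1.0, -0.5]                                  # frozen model weights
tokens = [0, 1, 2]
init_prompt = [[0.0, 0.0], [0.0, 0.0]]           # two soft-prompt vectors

learned = train_soft_prompt(tokens, table, init_prompt, w, target=1.0)
before = frozen_score(init_prompt + [table[t] for t in tokens], w)
after = frozen_score(learned + [table[t] for t in tokens], w)
print(f"score before: {before:.3f}, after: {after:.3f}")
```

Only four numbers are trained here. In a real model, the soft prompt is a short sequence of vectors with the model’s embedding width, while all pre-trained weights stay fixed.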

These efficient methods can reduce the number of trainable parameters by orders of magnitude compared with full fine-tuning, along with substantial memory savings, while still achieving competitive performance on many tasks. They also reduce storage needs by avoiding full model duplication.

However, their performance may lag behind full fine-tuning for tasks that are vastly different from general language or that require more holistic specialization.

The Fine-Tuning Process

Regardless of the fine-tuning strategy, the overall process for specializing an LLM follows a general framework:

- Dataset Preparation: You need to acquire or create a labeled dataset that maps inputs (prompts) to desired outputs for your target task. For text generation tasks like summarization, these would be pairs of input text and summarized output.

- Dataset Splitting: Following best practices, split your labeled dataset into train, validation, and test sets. This separates data for model training, hyperparameter tuning, and final evaluation.

- Hyperparameter Tuning: Hyperparameters like learning rate, batch size, and training schedule need to be tuned for the most effective fine-tuning on your data. This usually involves a small validation set.

- Model Training: Using the tuned hyperparameters, run the fine-tuning optimization process on the full training set until the model’s performance on the validation set stops improving (early stopping).

- Evaluation: Assess the fine-tuned model’s performance on the held-out test set, ideally comprising real-world examples for the target use case, to estimate real-world efficacy.

- Deployment and Monitoring: Once performance is satisfactory, the fine-tuned model can be deployed for inference on new inputs. It’s crucial to monitor its performance and accuracy over time to detect concept drift.
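Two of the steps above, dataset splitting and early stopping, can be sketched in plain Python. The 80/10/10 split fractions, the fixed random seed, the `patience` value, and the validation-loss numbers are illustrative assumptions. In practice, the losses would come from evaluating the model on the validation set after each epoch.

```python
import random


def train_val_test_split(examples, val_frac=0.1, test_frac=0.1, seed=0):
    """Shuffle once with a fixed seed, then carve off test and validation sets."""
    items = list(examples)
    random.Random(seed).shuffle(items)
    n_test = int(len(items) * test_frac)
    n_val = int(len(items) * val_frac)
    test = items[:n_test]
    val = items[n_test:n_test + n_val]
    train = items[n_test + n_val:]
    return train, val, test


def early_stopping_epoch(val_losses, patience=2):
    """Index of the best epoch, stopping once validation loss has failed to
    improve for `patience` consecutive epochs."""
    best_epoch, best_loss, bad_epochs = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss, bad_epochs = epoch, loss, 0
        else:
            bad_epochs += 1
            if bad_epochs >= patience:
                break  # later epochs are never run
    return best_epoch


# Hypothetical (input, output) pairs for a summarization task.
pairs = [(f"document {i}", f"summary {i}") for i in range(100)]
train, val, test = train_val_test_split(pairs)
print(len(train), len(val), len(test))

# Hypothetical per-epoch validation losses.
print(early_stopping_epoch([1.0, 0.8, 0.7, 0.75, 0.72, 0.6]))
```

With `patience=2`, this run stops after the fifth epoch (index 4) and never reaches the lower loss at index 5. A larger patience trades extra compute for a lower chance of stopping too early.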

While this outlines the general process, many nuances can impact fine-tuning success for a specific LLM or task. Strategies like curriculum learning, multi-task fine-tuning, and few-shot prompting can further boost performance.

Moreover, efficient fine-tuning methods involve extra considerations. For example, LoRA requires choosing which weight matrices receive low-rank updates, along with the rank and scaling of those updates, whose outputs are combined with those of the frozen pre-trained layers. Prompt tuning needs carefully designed (or carefully initialized) prompts to activate the right behaviors.

Advanced Fine-Tuning: Incorporating Human Feedback

While standard supervised fine-tuning using labeled datasets is effective, an exciting frontier is training LLMs directly using human preferences and feedback. This human-in-the-loop approach leverages techniques from reinforcement learning:

PPO (Proximal Policy Optimization): Here, the LLM is treated as a reinforcement learning agent, with its outputs being “actions”. A reward model is trained to predict human rankings or quality scores for these outputs. PPO then optimizes the LLM to generate outputs that maximize the reward model’s scores.

RLHF (Reinforcement Learning from Human Feedback): This is the broader framework in which PPO is typically used, with human feedback incorporated directly into the training process. Rather than relying on a fixed reward model, the rewards can be refreshed with iterative human evaluations of the LLM’s outputs during fine-tuning.
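The core quantities can be sketched per sample in plain Python. Each of the three pieces below is a simplified assumption rather than a full implementation:

- a pairwise (Bradley-Terry style) loss commonly used to train reward models from human preference comparisons;
- a KL-penalized reward that keeps the fine-tuned policy close to a reference model, where the `beta` coefficient is a hypothetical value;
- PPO’s clipped surrogate objective.

A real setup batches these over tokens, estimates advantages with a value function, and backpropagates through the LLM.

```python
import math


def reward_model_pairwise_loss(r_chosen, r_rejected):
    """-log sigmoid(r_chosen - r_rejected): low when the human-preferred
    response gets the higher reward score."""
    margin = r_chosen - r_rejected
    # softplus(-margin), written to avoid overflow for large |margin|
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def kl_penalized_reward(rm_score, logp_policy, logp_ref, beta=0.1):
    """Reward-model score minus a per-sample KL estimate to a reference model."""
    return rm_score - beta * (logp_policy - logp_ref)


def ppo_clipped_objective(logp_new, logp_old, advantage, eps=0.2):
    """PPO's clipped surrogate for one action (to be maximized)."""
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = min(max(ratio, 1.0 - eps), 1.0 + eps)
    return min(ratio * advantage, clipped_ratio * advantage)


print(reward_model_pairwise_loss(2.0, -1.0))
print(kl_penalized_reward(1.5, logp_policy=-2.0, logp_ref=-2.5))
print(ppo_clipped_objective(math.log(1.5), 0.0, advantage=1.0))
```

The clipping is what makes PPO “proximal”. Once the probability ratio moves outside [1 - eps, 1 + eps] in the direction the advantage favors, the objective stops rewarding further movement. This limits how far each update can push the LLM away from its previous behavior.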

While computationally intensive, these methods allow shaping LLM behavior more precisely based on desired characteristics evaluated by humans, beyond what can be captured in a static dataset.

Companies like Anthropic have used RLHF to imbue their language models, such as Claude, with improved truthfulness, ethics, and safety awareness beyond just task competence.

Potential Risks and Limitations

While immensely powerful, fine-tuning LLMs is not without risks that must be carefully managed:

Bias Amplification: If the fine-tuning data incorporates societal biases around gender, race, age, or other attributes, the model can amplify these undesirable biases. Curating representative and de-biased datasets is crucial.

Factual Drift: Even after fine-tuning on high-quality data, language models can “hallucinate” incorrect facts or produce outputs inconsistent with the training examples over longer conversations or prompts. Fact retrieval methods may be needed.

Scalability Challenges: Full fine-tuning of giant models like GPT-3 requires immense compute resources, which may be infeasible for many organizations. Efficient fine-tuning partially mitigates this but has trade-offs.

Catastrophic Forgetting: During full fine-tuning, models can experience catastrophic forgetting, where they lose some general capabilities learned during pre-training. Multi-task learning may be needed.

IP and Privacy Risks: Proprietary data used for fine-tuning can leak into publicly released language model outputs, posing risks. Differential privacy and information hazard mitigation techniques are active areas of research.

Overall, while exceptionally useful, fine-tuning is a nuanced process. It requires care around data quality, ethical considerations, and risk mitigation, as well as balancing performance-efficiency trade-offs based on use case requirements.

The Future: Language Model Customization At Scale

Looking ahead, advancements in fine-tuning and model adaptation techniques will be crucial for unlocking the full potential of large language models across diverse applications and domains.

More efficient methods enabling fine-tuning even larger models like PaLM with constrained resources could democratize access. Automating dataset creation pipelines and prompt engineering could streamline specialization.

Self-supervised techniques to fine-tune from raw data without labels may open up new frontiers. And compositional approaches that combine fine-tuned sub-models trained on different tasks or data could allow building highly tailored models on demand.

Ultimately, as LLMs become more ubiquitous, the ability to customize and specialize them seamlessly for every conceivable use case will be critical. Fine-tuning and related model adaptation strategies are pivotal steps in realizing the vision of large language models as flexible, safe, and powerful AI assistants augmenting human capabilities across every domain and endeavor.
