Denoising Diffusion Models are generative AI frameworks that synthesize images from noise through an iterative denoising process. They’re celebrated for his or her exceptional image generation capabilities and variety, largely attributed to text- or class-conditional guidance methods, including classifier guidance and classifier-free guidance. These models have been notably successful in creating diverse, high-quality images. Recent studies have shown that guidance techniques like class captions and labels play a vital role in enhancing the standard of images these models generate.

Nevertheless, diffusion models and guidance methods face limitations under certain external conditions. The Classifier-Free Guidance (CFG) method, which uses label dropping, adds complexity to the training process, while the Classifier Guidance (CG) method necessitates additional classifier training. Each methods are somewhat constrained by their reliance on hard-earned external conditions, limiting their potential and confining them to conditional settings.

To deal with these limitations, developers have formulated a more general approach to diffusion guidance, generally known as Self-Attention Guidance (SAG). This method leverages information from intermediate samples of diffusion models to generate images. We’ll explore SAG in this text, discussing its workings, methodology, and results in comparison with current state-of-the-art frameworks and pipelines.

Denoising Diffusion Models (DDMs) have gained popularity for his or her ability to create images from noise via an iterative denoising process. The image synthesis prowess of those models is essentially attributable to the employed diffusion guidance methods. Despite their strengths, diffusion models and guidance-based methods face challenges like added complexity and increased computational costs.

To beat the present limitations, developers have introduced the Self-Attention Guidance method, a more general formulation of diffusion guidance that doesn’t depend on the external information from diffusion guidance, thus facilitating a condition-free and versatile approach to guide diffusion frameworks. The approach opted by Self-Attention Guidance ultimately helps in enhancing the applicability of the normal diffusion-guidance methods to cases with or without external requirements.

Self-Attention Guidance relies on the easy principle of generalized formulation, and the idea that internal information contained inside intermediate samples can function guidance as well. On the premise of this principle, the SAG method first introduces Blur Guidance, an easy and simple solution to enhance sample quality. Blur guidance goals to use the benign properties of Gaussian blur to remove fine-scale details naturally by guiding intermediate samples using the eliminated information consequently of Gaussian blur. Although the Blur guidance method does boost the sample quality with a moderate guidance scale, it fails to copy the outcomes on a big guidance scale because it often introduces structural ambiguity in entire regions. In consequence, the Blur guidance method finds it difficult to align the unique input with the prediction of the degraded input. To boost the soundness and effectiveness of the Blur guidance method on a bigger guidance scale, the Self-Attention Guidance attempts to use the self-attention mechanism of the diffusion models as modern diffusion models already contain a self-attention mechanism inside their architecture.

With the idea that self-attention is important to capture salient information at its core, the Self-Attention Guidance method uses self-attention maps of the diffusion models to adversarially blur the regions containing salient information, and in the method, guides the diffusion models with required residual information. The tactic then leverages the eye maps during diffusion models’ reverse process, to spice up the standard of the pictures and uses self-conditioning to scale back the artifacts without requiring additional training or external information.

To sum it up, the Self-Attention Guidance method

- Is a novel approach that uses internal self-attention maps of diffusion frameworks to enhance the generated sample image quality without requiring any additional training or counting on external conditions.

- The SAG method attempts to generalize conditional guidance methods right into a condition-free method that will be integrated with any diffusion model without requiring additional resources or external conditions, thus enhancing the applicability of guidance-based frameworks.

- The SAG method also attempts to display its orthogonal abilities to existing conditional methods and frameworks, thus facilitating a lift in performance by facilitating flexible integration with other methods and models.

Moving along, the Self-Attention Guidance method learns from the findings of related frameworks including Denoising Diffusion Models, Sampling Guidance, Generative AI Self-Attention methods, and Diffusion Models’ Internal Representations. Nevertheless, at its core, the Self-Attention Guidance method implements the learnings from DDPM or Denoising Diffusion Probabilistic Models, Classifier Guidance, Classifier-free Guidance, and Self-Attention in Diffusion frameworks. We might be talking about them in-depth within the upcoming section.

Self-Attention Guidance : Preliminaries, Methodology, and Architecture

Denoising Diffusion Probabilistic Model or DDPM

DDPM or Denoising Diffusion Probabilistic Model is a model that uses an iterative denoising process to get better a picture from white noise. Traditionally, a DDPM model receives an input image and a variance schedule at a time step to acquire the image using a forward process generally known as the Markovian process.

Classifier and Classifier-Free Guidance with GAN Implementation

GAN or Generative Adversarial Networks possess unique trading diversity for fidelity, and to bring this ability of GAN frameworks to diffusion models, the Self-Attention Guidance framework proposes to make use of a classifier guidance method that uses a further classifier. Conversely, a classifier-free guidance method can be implemented without using a further classifier to realize the identical results. Although the tactic delivers the specified results, it remains to be not computationally viable because it requires additional labels, and in addition confines the framework to conditional diffusion models that require additional conditions like a text or a category together with additional training details that adds to the complexity of the model.

Generalizing Diffusion Guidance

Although Classifier and Classifier-free Guidance methods deliver the specified results and help with conditional generation in diffusion models, they’re depending on additional inputs. For any given timestep, the input for a diffusion model comprises a generalized condition and a perturbed sample without the generalized condition. Moreover, the generalized condition encompasses internal information throughout the perturbed sample or an external condition, and even each. The resultant guidance is formulated with the utilization of an imaginary regressor with the idea that it might probably predict the generalized condition.

Improving Image Quality using Self-Attention Maps

The Generalized Diffusion Guidance implies that it is possible to offer guidance to the reverse strategy of diffusion models by extracting salient information within the generalized condition contained within the perturbed sample. Constructing on the identical, the Self-Attention Guidance method captures the salient information for reverse processes effectively while limiting the risks that arise consequently of out-of-distribution issues in pre-trained diffusion models.

Blur Guidance

Blur guidance in Self-Attention Guidance relies on Gaussian Blur, a linear filtering method through which the input signal is convolved with a Gaussian filter to generate an output. With a rise in the usual deviation, Gaussian Blur reduces the fine-scale details throughout the input signals, and leads to locally indistinguishable input signals by smoothing them towards the constant. Moreover, experiments have indicated an information imbalance between the input signal, and the Gaussian blur output signal where the output signal incorporates more fine-scale information.

On the premise of this learning, the Self-Attention Guidance framework introduces Blur guidance, a method that intentionally excludes the data from intermediate reconstructions throughout the diffusion process, and as a substitute, uses this information to guide its predictions towards increasing the relevancy of images to the input information. Blur guidance essentially causes the unique prediction to deviate more from the blurred input prediction. Moreover, the benign property in Gaussian blur prevents the output signals from deviating significantly from the unique signal with a moderate deviation. In easy words, blurring occurs in the pictures naturally that makes the Gaussian blur a more suitable method to be applied to pre-trained diffusion models.

Within the Self-Attention Guidance pipeline, the input signal is first blurred using a Gaussian filter, and it’s then diffused with additional noise to supply the output signal. By doing this, the SAG pipeline mitigates the side effect of the resultant blur that reduces Gaussian noise, and makes the guidance depend on content fairly than being depending on random noise. Although blur guidance delivers satisfactory results on frameworks with moderate guidance scale, it fails to copy the outcomes on existing models with a big guidance scale because it gets susceptible to produce noisy results as demonstrated in the next image.

These results is likely to be a results of the structural ambiguity introduced within the framework by global blur that makes it difficult for the SAG pipeline to align the predictions of the unique input with the degraded input, leading to noisy outputs.

Self-Attention Mechanism

As mentioned earlier, diffusion models often have an in-build self-attention component, and it’s considered one of the more essential components in a diffusion model framework. The Self-Attention mechanism is implemented on the core of the diffusion models, and it allows the model to concentrate to the salient parts of the input throughout the generative process as demonstrated in the next image with high-frequency masks in the highest row, and self-attention masks in the underside row of the finally generated images.

The proposed Self-Attention Guidance method builds on the identical principle, and leverages the capabilities of self-attention maps in diffusion models. Overall, the Self-Attention Guidance method blurs the self-attended patches within the input signal or in easy words, conceals the data of patches that’s attended to by the diffusion models. Moreover, the output signals in Self-Attention Guidance contain intact regions of the input signals meaning that it doesn’t end in structural ambiguity of the inputs, and solves the issue of world blur. The pipeline then obtains the aggregated self-attention maps by conducting GAP or Global Average Pooling to aggregate self-attention maps to the dimension, and up-sampling the nearest-neighbor to match the resolution of the input signal.

Self-Attention Guidance : Experiments and Results

To guage its performance, the Self-Attention Guidance pipeline is sampled using 8 Nvidia GeForce RTX 3090 GPUs, and is built upon pre-trained IDDPM, ADM, and Stable Diffusion frameworks.

Unconditional Generation with Self-Attention Guidance

To measure the effectiveness of the SAG pipeline on unconditional models and display the condition-free property not possessed by Classifier Guidance, and Classifier Free Guidance approach, the SAG pipeline is run on unconditionally pre-trained frameworks on 50 thousand samples.

As it might probably be observed, the implementation of the SAG pipeline improves the FID, sFID, and IS metrics of unconditional input while lowering the recall value at the identical time. Moreover, the qualitative improvements consequently of implementing the SAG pipeline is obvious in the next images where the pictures on the highest are results from ADM and Stable Diffusion frameworks whereas the pictures at the underside are results from the ADM and Stable Diffusion frameworks with the SAG pipeline.

Conditional Generation with SAG

The mixing of SAG pipeline in existing frameworks delivers exceptional leads to unconditional generation, and the SAG pipeline is able to condition-agnosticity that enables the SAG pipeline to be implemented for conditional generation as well.

Stable Diffusion with Self-Attention Guidance

Regardless that the unique Stable Diffusion framework generates prime quality images, integrating the Stable Diffusion framework with the Self-Attention Guidance pipeline can enhance the outcomes drastically. To guage its effect, developers use empty prompts for Stable Diffusion with random seed for every image pair, and use human evaluation on 500 pairs of images with and without Self-Attention Guidance. The outcomes are demonstrated in the next image.

Moreover, the implementation of SAG can enhance the capabilities of the Stable Diffusion framework as fusing Classifier-Free Guidance with Self-Attention Guidance can broaden the range of Stable Diffusion models to text-to-image synthesis. Moreover, the generated images from the Stable Diffusion model with Self-Attention Guidance are of upper quality with lesser artifacts because of the self-conditioning effect of the SAG pipeline as demonstrated in the next image.

Current Limitations

Although the implementation of the Self-Attention Guidance pipeline can substantially improve the standard of the generated images, it does have some limitations.

Considered one of the most important limitations is the orthogonality with Classifier-Guidance and Classifier-Free Guidance. As it might probably be observed in the next image, the implementation of SAG does improve the FID rating and prediction rating that signifies that the SAG pipeline incorporates an orthogonal component that will be used with traditional guidance methods concurrently.

Nevertheless, it still requires diffusion models to be trained in a selected manner that adds to the complexity in addition to computational costs.

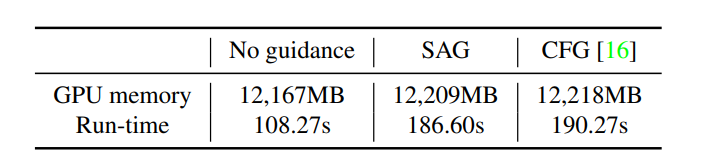

Moreover, the implementation of Self-Attention Guidance doesn’t increase the memory or time consumption, a sign that the overhead resulting from the operations like masking & blurring in SAG is negligible. Nevertheless, it still adds to the computational costs because it includes a further step in comparison to no guidance approaches.

Final Thoughts

In this text, we now have talked about Self-Attention Guidance, a novel and general formulation of guidance method that makes use of internal information available throughout the diffusion models for generating high-quality images. Self-Attention Guidance relies on the easy principle of generalized formulation, and the idea that internal information contained inside intermediate samples can function guidance as well. The Self-Attention Guidance pipeline is a condition-free and training-free approach that will be implemented across various diffusion models, and uses self-conditioning to scale back the artifacts within the generated images, and boosts the general quality.