LG has released its latest model, ‘EXAONE 3.0’, as open source.

LG emphasized that the small language model (sLM), with 7.8 billion parameters, outperforms similarly sized global open source models such as ‘Llama 3.1 8B’, ‘Qwen 2 7B’, and ‘Mistral 7B’. It is effectively the first time that a domestic open source model has been able to compete with the performance of popular global models.

LG AI Research (President Bae Kyung-hoon) announced on the 7th that it would release the lightweight model, the most widely usable of the EXAONE 3.0 models, as open source to contribute to the ‘development of the AI research ecosystem’.

At the same time, it also introduced the beta version of ‘ChatEXAONE’, an AI agent service based on EXAONE 3.0 for executives and employees.

In particular, LG AI Research simultaneously released a technical report covering the model's parameters, training data tokens, training methods, and performance evaluation results. This is notable not only because the model is open source, but also because it is effectively the first time in Korea that a technical report has been released at the same time as a model.

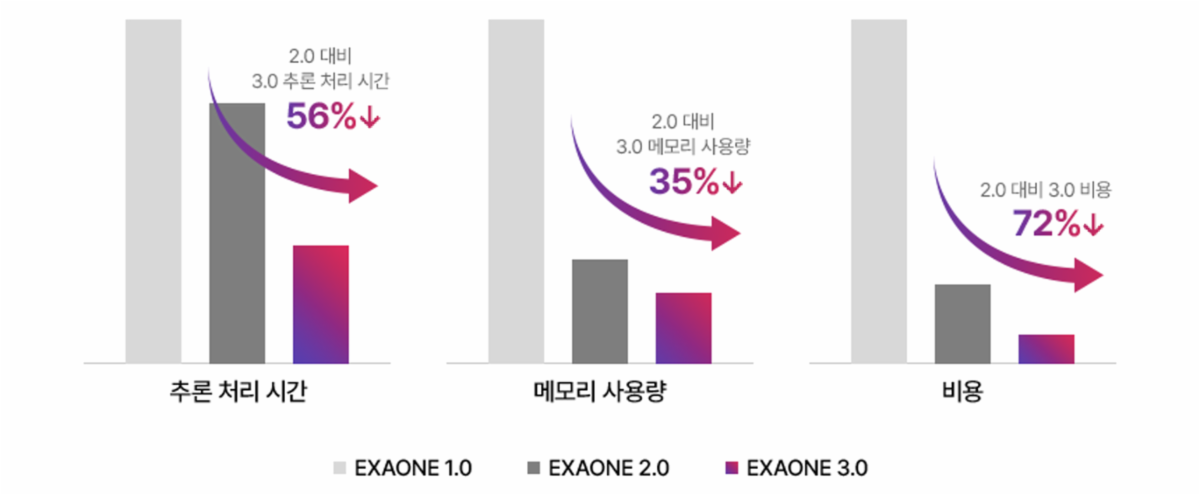

Through the report, LG emphasized that performance and cost efficiency are the key features of this model. Compared with ‘EXAONE 2.0’, released in July of last year, it reduced inference processing time by 56%, memory usage by 35%, and operating costs by 72%.

LG also said that it had solved the power consumption problem. By focusing on research into lightweighting and optimization technologies, it succeeded in increasing the model’s performance while shrinking it to roughly 3% of the original size: EXAONE 1.0 had 300 billion parameters (300B), compared with 7.8 billion for the newly released model.

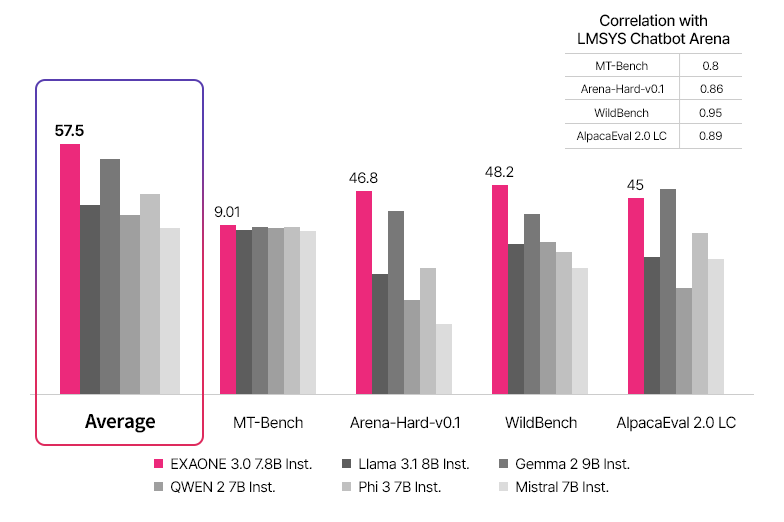

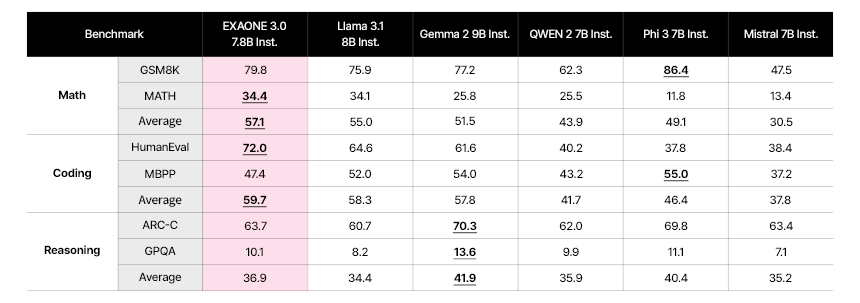

Benchmark scores were also released. In addition to major indices such as MT-Bench, AlpacaEval-2.0, Arena-Hard, and WildBench, details on all 25 benchmarks used for evaluation were disclosed. The comparison targets are Meta’s ‘Llama 3.1 8B’, Alibaba’s ‘Qwen 2 7B’, Google’s ‘Gemma 2 9B’, Microsoft’s ‘Phi 3 7B’, and Mistral AI’s ‘Mistral 7B’, which are representative global open source sLMs.

As a result, it ranked first in 13 benchmarks, including coding and math. It not only recorded the best performance in all Korean-language areas but also recorded an average score in English reasoning (ARC), demonstrating its potential as a multilingual model.

LG explained that this was the result of training on over 60 million pieces of domestic and international specialized data, including patents, software code, mathematics, and chemistry. It also revealed plans to further improve the performance of ‘EXAONE 3.0’ by the end of the year, expanding the fields to include law, bio, medicine, education, and foreign languages and increasing the amount of training data to over 100 million pieces.

In addition, LG emphasized that it is continuing ‘red team’ activities, in which AI models are intentionally attacked in order to verify and improve the technology and fix vulnerabilities.

LG said that from the second half of the year the model will also be available in the form of LG services. The process of applying ‘EXAONE 3.0’ to LG affiliate services is underway.

In particular, the model sizes were designed differently depending on the purpose, such as ▲an ultra-lightweight model for on-device AI ▲a lightweight model for general purposes ▲a high-performance model that can be used in specialized fields. Affiliates plan to fine-tune ‘EXAONE 3.0’ with their own data.

Bae Kyung-hoon, head of LG AI Research, said, “It is important to create AI that can be used in actual industrial settings, so we plan to strengthen partnerships between LG affiliates and external companies and organizations with EXAONE, which offers specialized performance and cost efficiency.” He added, “In particular, we plan to open source our self-developed AI model for the first time in Korea, thereby contributing to the revitalization of the open AI research ecosystem and the enhancement of the nation’s AI competitiveness.”

Meanwhile, LG executives and employees can use the AI assistant ‘ChatEXAONE’ starting today. Based on EXAONE 3.0, it is a generative AI service that offers various functions that can increase work convenience and efficiency, such as Q&A based on real-time web information, Q&A based on documents and images, coding, and database management.

This allows AI to be used for everything from search to summarization, translation, data analysis, report writing, and coding. In particular, it applies retrieval-augmented generation (RAG) technology to understand the context of the prompts entered by employees and provide answers that reflect the latest information.
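As a rough illustration of the general RAG idea mentioned here, the minimal Python sketch below retrieves the most relevant passages for a question and places them in the prompt before it would be sent to a language model. It is not LG’s implementation: the function names, the sample documents, and the simple word-overlap scoring are all assumptions made for illustration, and production systems typically use learned embeddings and a vector index instead.

```python
import math
import re
from collections import Counter

# Hypothetical sample documents; a real service would index internal or web data.
DOCS = [
    "The cafeteria menu changes every Monday.",
    "Expense reports are due on the fifth business day of each month.",
    "The VPN client must be updated before remote login.",
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query (retrieval step)."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query, documents, k=2):
    """Prepend the retrieved passages to the question (augmentation step)."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )

if __name__ == "__main__":
    # The resulting prompt would then be passed to a language model (generation step).
    print(build_prompt("When are expense reports due?", DOCS, k=1))
```

Because the retrieved passages are looked up at question time, the answer can reflect information that was added after the model was trained, which is the property the article attributes to RAG.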

LG added that it can also be used by software developers and data analysis experts. With just natural language input, it can generate code in 22 programming languages, including Python, Java, and C++, as well as SQL queries that can be used for database management.

The ChatEXAONE beta service will be available until the end of the year, and the official service and mobile app will be rolled out sequentially according to the readiness of each LG affiliate. For affiliates that require training on in-house documents and secure data management, LG plans to build a separate specialized service.

More details are available on the blog.

Reporter Jang Se-min semim99@aitimes.com