A guest blog post by Hugging Face fellow Stas Bekman

As recent Machine Learning models have been growing much faster than the amount of GPU memory added to newly released cards, many users are unable to train or even just load some of those huge models onto their hardware. While there is an ongoing effort to distill some of those huge models to a more manageable size, that effort isn’t producing models small enough soon enough.

In the autumn of 2019 Samyam Rajbhandari, Jeff Rasley, Olatunji Ruwase and Yuxiong He published a paper:

ZeRO: Memory Optimizations Toward Training Trillion Parameter Models, which contains a plethora of ingenious new ideas on how one could make their hardware do much more than what was thought possible before. A short time later DeepSpeed was released, giving the world an open source implementation of most of the ideas in that paper (a few ideas are still in the works), and in parallel a team from Facebook released FairScale, which also implemented some of the core ideas from the ZeRO paper.

If you use the Hugging Face Trainer, as of transformers v4.2.0 you have experimental support for DeepSpeed’s and FairScale’s ZeRO features. The new --sharded_ddp and --deepspeed command line Trainer arguments provide FairScale and DeepSpeed integration respectively. Here is the full documentation.

This blog post will describe how you can benefit from ZeRO regardless of whether you own just a single GPU or a whole stack of them.

Huge Speedups with Multi-GPU Setups

Let’s do a small finetuning experiment on a translation task, using a t5-large model and the finetune_trainer.py script which you can find under examples/seq2seq in the transformers GitHub repo.

We have 2x 24GB (Titan RTX) GPUs to test with.

This is just a proof of concept benchmark, so surely things can be improved further. We will benchmark on a small sample of 2000 items for training and 500 items for evaluation to perform the comparisons. Evaluation does by default a beam search of size 4, so it’s slower than training with the same number of samples, which is why 4x fewer eval items were used in these tests.

Here are the key command line arguments of our baseline:

export BS=16
python -m torch.distributed.launch --nproc_per_node=2 ./finetune_trainer.py \
--model_name_or_path t5-large --n_train 2000 --n_val 500 \
--per_device_eval_batch_size $BS --per_device_train_batch_size $BS \
--task translation_en_to_ro [...]

We are only using DistributedDataParallel (DDP) and nothing else to boost the performance for the baseline. I was able to fit a batch size (BS) of 16 before hitting an Out of Memory (OOM) error.

Note that for simplicity and to make it easier to understand, I have only shown the command line arguments important for this demonstration. You will find the complete command line at this post.

Next, we are going to re-run the benchmark, each time adding one of the following:

1. --fp16
2. --sharded_ddp (fairscale)
3. --sharded_ddp --fp16 (fairscale)
4. --deepspeed without cpu offloading
5. --deepspeed with cpu offloading

Since the key optimization here is that each technique uses GPU RAM more efficiently, we will try to continually increase the batch size and expect the training and evaluation to complete faster (while keeping the metrics steady or even improving some, but we won’t focus on these here).

Remember that training and evaluation stages are very different from each other, because during training model weights are being modified, gradients are being calculated, and optimizer states are stored. During evaluation, none of these happen, but in this particular task of translation the model will try to search for the best hypothesis, so it actually has to do multiple runs before it’s satisfied. That’s why it’s not fast, especially when a model is large.

Let’s look at the results of these six test runs:

| Method | max BS | train time | eval time |
|---|---|---|---|
| baseline | 16 | 30.9458 | 56.3310 |
| fp16 | 20 | 21.4943 | 53.4675 |
| sharded_ddp | 30 | 25.9085 | 47.5589 |
| sharded_ddp+fp16 | 30 | 17.3838 | 45.6593 |
| deepspeed w/o cpu offload | 40 | 10.4007 | 34.9289 |
| deepspeed w/ cpu offload | 50 | 20.9706 | 32.1409 |
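To make the table easier to compare at a glance, here is a small sketch that turns the timings into speedups relative to the baseline. The numbers are copied from the table; the `results` dictionary and the `speedups` helper are illustrative names of mine and are not part of the benchmark scripts.

```python
# Each entry: method -> (max batch size, train time, eval time),
# copied from the benchmark table (same units as the table).
results = {
    "baseline": (16, 30.9458, 56.3310),
    "fp16": (20, 21.4943, 53.4675),
    "sharded_ddp": (30, 25.9085, 47.5589),
    "sharded_ddp+fp16": (30, 17.3838, 45.6593),
    "deepspeed w/o cpu offload": (40, 10.4007, 34.9289),
    "deepspeed w/ cpu offload": (50, 20.9706, 32.1409),
}


def speedups(results, baseline="baseline"):
    """Return {method: (train_speedup, eval_speedup)} relative to the baseline."""
    _, base_train, base_eval = results[baseline]
    return {
        name: (base_train / train, base_eval / evaluation)
        for name, (_, train, evaluation) in results.items()
    }


if __name__ == "__main__":
    for name, (train_x, eval_x) in speedups(results).items():
        print(f"{name:28s} train {train_x:4.2f}x  eval {eval_x:4.2f}x")
```

With these numbers, DeepSpeed without CPU offload comes out close to 3x faster than the baseline for training, and the two DeepSpeed runs show the largest evaluation gains. Keep in mind that each run uses its own maximum batch size, so this compares whole configurations rather than a single isolated setting.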

It’s easy to see that both FairScale and DeepSpeed provide great improvements over the baseline, in the total train and evaluation time, but also in the batch size. DeepSpeed implements more magic as of this writing and seems to be the short term winner, but Fairscale is easier to deploy. For DeepSpeed you need to write a simple configuration file and change your command line’s launcher, while with Fairscale you only need to add the --sharded_ddp command line argument, so you may want to try it first as it’s the most low-hanging fruit.

Following the 80:20 rule, I have only spent a few hours on these benchmarks and I haven’t tried to squeeze every MB and second by refining the command line arguments and configuration, since it’s pretty obvious from the simple table what you’d want to try next. When you face a real project that will be running for hours and perhaps days, definitely spend more time to make sure you use the most optimal hyper-parameters to get your job done faster and at a minimal cost.

If you would like to experiment with this benchmark yourself or want to know more details about the hardware and software used to run it, please refer to this post.

Fitting A Huge Model Onto One GPU

While Fairscale gives us a boost only with multiple GPUs, DeepSpeed has a gift even for those of us with a single GPU.

Let’s try the impossible: let’s train t5-3b on a 24GB RTX-3090 card.

First let’s try to finetune the huge t5-3b using the normal single GPU setup:

export BS=1
CUDA_VISIBLE_DEVICES=0 ./finetune_trainer.py \
--model_name_or_path t5-3b --n_train 60 --n_val 10 \
--per_device_eval_batch_size $BS --per_device_train_batch_size $BS \
--task translation_en_to_ro --fp16 [...]

No cookie, even with BS=1 we get:

RuntimeError: CUDA out of memory. Tried to allocate 64.00 MiB (GPU 0; 23.70 GiB total capacity; 21.37 GiB already allocated; 45.69 MiB free; 22.05 GiB reserved in total by PyTorch)

Note that, as earlier, I’m showing only the important parts; the full command line arguments can be found here.

Now update your transformers to v4.2.0 or higher, then install DeepSpeed:

pip install deepspeed

and let’s try again, this time adding DeepSpeed to the command line:

export BS=20
CUDA_VISIBLE_DEVICES=0 deepspeed --num_gpus=1 ./finetune_trainer.py \
--model_name_or_path t5-3b --n_train 60 --n_val 10 \
--per_device_eval_batch_size $BS --per_device_train_batch_size $BS \
--task translation_en_to_ro --fp16 --deepspeed ds_config_1gpu.json [...]

et voila! We get a batch size of 20 trained just fine. I could probably push it even further. The program failed with OOM at BS=30.

Here are the relevant results:

2021-01-12 19:06:31 | INFO | __main__ | train_n_objs = 60

2021-01-12 19:06:31 | INFO | __main__ | train_runtime = 8.8511

2021-01-12 19:06:35 | INFO | __main__ | val_n_objs = 10

2021-01-12 19:06:35 | INFO | __main__ | val_runtime = 3.5329

We can’t compare these to the baseline, since the baseline won’t even start and immediately fails with OOM.

Simply amazing!

I used only a tiny sample since I was primarily interested in being able to train and evaluate with this huge model that normally won’t fit onto a 24GB GPU.

If you would like to experiment with this benchmark yourself or want to know more details about the hardware and software used to run it, please refer to this post.

The Magic Behind ZeRO

Since transformers only integrated these fabulous solutions and wasn’t part of their invention, I will share the resources where you can discover all the details for yourself. But here are a few quick insights that may help you understand how ZeRO manages these amazing feats.

The key feature of ZeRO is adding distributed data storage to the quite familiar concept of data parallel training.

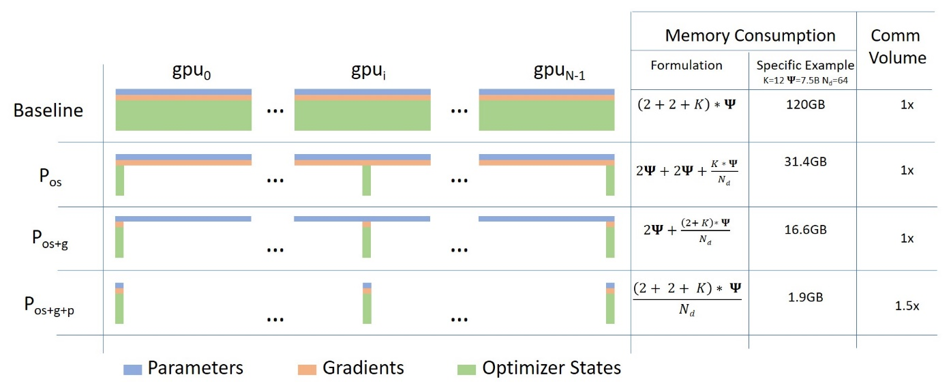

The computation on each GPU is strictly the identical as data parallel training, however the parameter, gradients and optimizer states are stored in a distributed/partitioned fashion across all of the GPUs and fetched only when needed.

The next diagram, coming from this blog post illustrates how this works:

ZeRO’s ingenious approach is to partition the params, gradients and optimizer states equally across all GPUs and give each GPU just a single partition (also referred to as a shard). This leads to zero overlap in data storage between GPUs. At runtime each GPU builds up each layer’s data on the fly by asking participating GPUs to send the information it’s lacking.
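The partition-then-gather idea can be sketched in a few lines of plain Python. This is only a toy model under my own assumptions: real implementations work on GPU tensors and use collective communication, whereas here the "GPUs" are list entries and `partition` and `all_gather` are hypothetical helper names.

```python
def partition(flat_values, world_size):
    """Split a flat list into world_size equal, non-overlapping shards.

    The tail is padded with zeros so that every rank holds a shard
    of the same length.
    """
    shard_len = -(-len(flat_values) // world_size)  # ceiling division
    padding = shard_len * world_size - len(flat_values)
    padded = list(flat_values) + [0.0] * padding
    return [padded[rank * shard_len:(rank + 1) * shard_len]
            for rank in range(world_size)]


def all_gather(shards, original_len):
    """Rebuild the full list from every rank's shard.

    Stand-in for a collective operation: each rank contributes its
    shard and the padding is dropped afterwards.
    """
    full = [value for shard in shards for value in shard]
    return full[:original_len]


if __name__ == "__main__":
    layer_params = [float(i) for i in range(10)]   # pretend layer weights
    shards = partition(layer_params, world_size=4)
    for rank, shard in enumerate(shards):
        print(f"rank {rank} stores {shard}")
    # A rank that needs the whole layer asks the others for their shards.
    assert all_gather(shards, len(layer_params)) == layer_params
```

Each rank permanently stores only its own shard, so nothing is duplicated, and the full layer exists only temporarily on a rank while it is needed.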

This idea could be difficult to grasp, and you will find my attempt at an explanation here.

As of this writing FairScale and DeepSpeed only perform Partitioning (Sharding) for the optimizer states and gradients. Model parameters sharding is supposedly coming soon in DeepSpeed and FairScale.
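To get a feel for what partitioning the optimizer states and gradients buys, here is a rough estimate based on the accounting used in the ZeRO paper for mixed-precision Adam: 2 bytes per parameter for fp16 weights, 2 bytes for fp16 gradients, and K=12 bytes for optimizer states (an fp32 copy of the weights, momentum and variance). The function name, the `stage` numbering convention and the ~3 billion parameter count for t5-3b are my own assumptions for illustration, and activations and temporary buffers are ignored entirely.

```python
GB = 1e9  # decimal gigabytes, good enough for a rough estimate


def model_state_bytes(num_params, num_gpus, stage, k=12):
    """Approximate per-GPU bytes for model states with mixed-precision Adam.

    stage 0: plain data parallel, everything replicated on every GPU
    stage 1: optimizer states partitioned
    stage 2: optimizer states and gradients partitioned
    stage 3: optimizer states, gradients and parameters partitioned
    """
    if stage not in (0, 1, 2, 3):
        raise ValueError("stage must be 0, 1, 2 or 3")
    params = 2 * num_params   # fp16 weights
    grads = 2 * num_params    # fp16 gradients
    optim = k * num_params    # fp32 weights copy + momentum + variance
    if stage >= 1:
        optim /= num_gpus
    if stage >= 2:
        grads /= num_gpus
    if stage >= 3:
        params /= num_gpus
    return params + grads + optim


if __name__ == "__main__":
    t5_3b_params = 3e9  # ASSUMPTION: rounded from the model name
    for gpus in (1, 2):
        for stage in (0, 2):
            gb = model_state_bytes(t5_3b_params, gpus, stage) / GB
            print(f"{gpus} GPU(s), stage {stage}: ~{gb:.1f} GB of model states")
```

Under these assumptions a model of about 3 billion parameters needs roughly 48GB for model states alone on one GPU, which is consistent with the baseline running out of memory on a 24GB card. With a single GPU there is nothing to partition across, so the savings there have to come from elsewhere, such as offloading to the CPU.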

The other powerful feature is ZeRO-Offload (paper). This feature offloads some of the processing and memory needs to the host’s CPU, thus allowing more to be fit onto the GPU. You saw its dramatic impact in the success at running t5-3b on a 24GB GPU.

One other problem that a lot of people complain about on pytorch forums is GPU memory fragmentation. One often gets an OOM error that may look like this:

RuntimeError: CUDA out of memory. Tried to allocate 1.48 GiB (GPU 0; 23.65 GiB total capacity; 16.22 GiB already allocated; 111.12 MiB free; 22.52 GiB reserved in total by PyTorch)

The program wants to allocate ~1.5GB and the GPU still has some 6-7GBs of unused memory (22.52 GiB reserved minus 16.22 GiB allocated), but it reports having only ~100MB of contiguous free memory and it fails with the OOM error. This happens as chunks of different sizes get allocated and de-allocated again and again, and over time holes get created, leading to memory fragmentation, where there is a lot of unused memory but no contiguous chunks of the desired size. In the example above the program could probably allocate 100MB of contiguous memory, but clearly it can’t get 1.5GB in a single chunk.
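A toy model makes the effect easy to see. The sketch below is not how PyTorch’s allocator works internally; it just treats memory as a row of equally sized slots (an assumption for illustration, with hypothetical helper names) and shows that plenty of free slots in total does not guarantee a long enough contiguous run.

```python
def free_runs(occupied):
    """Return the length of every maximal run of free slots.

    `occupied` is a list of booleans, True meaning the slot is in use.
    """
    runs, current = [], 0
    for used in occupied:
        if used:
            if current:
                runs.append(current)
            current = 0
        else:
            current += 1
    if current:
        runs.append(current)
    return runs


def can_allocate(occupied, size):
    """A request succeeds only if one contiguous free run is big enough."""
    return any(run >= size for run in free_runs(occupied))


if __name__ == "__main__":
    # Repeated allocate/free cycles left one free slot after every two used.
    memory = [True, True, False] * 8
    runs = free_runs(memory)
    print("total free slots:   ", sum(runs))
    print("largest contiguous: ", max(runs))
    print("can allocate 2 slots?", can_allocate(memory, 2))
```

Here a third of the memory is free, yet a request for just two adjacent slots fails, which is the same situation as in the error message above at a much smaller scale.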

DeepSpeed attacks this problem by managing GPU memory by itself and ensuring that long term memory allocations don’t mix with short-term ones, and thus there is much less fragmentation. While the paper doesn’t go into details, the source code is available, so it’s possible to see how DeepSpeed accomplishes that.

As ZeRO stands for Zero Redundancy Optimizer, it’s easy to see that it lives up to its name.

The Future

Besides the anticipated upcoming support for model params sharding in DeepSpeed, it has already released new features that we haven’t explored yet. These include DeepSpeed Sparse Attention and 1-bit Adam, which are supposed to decrease memory usage and dramatically reduce inter-GPU communication overhead, which should lead to even faster training and support even larger models.

I trust we are going to see new gifts from the FairScale team as well. I think they are working on ZeRO stage 3 too.

Even more exciting, ZeRO is being integrated into pytorch.

Deployment

If you found the results shared in this blog post enticing, please proceed here for details on how to use DeepSpeed and FairScale with the transformers Trainer.

You can, of course, modify your own trainer to integrate DeepSpeed and FairScale, based on each project’s instructions, or you can “cheat” and see how we did it in the transformers Trainer. If you go for the latter, to find your way around grep the source code for deepspeed and/or sharded_ddp.

The good news is that ZeRO requires no model modification. The only required modifications are in the training code.

Issues

If you encounter any issues with the integration part of either of these projects please open an Issue in transformers.

But if you have problems with DeepSpeed and FairScale installation, configuration and deployment, you need to ask the experts in their domains; therefore, please use DeepSpeed Issue or FairScale Issue instead.

Resources

While you don’t really need to understand how any of these projects work and you can just deploy them via the transformers Trainer, should you want to figure out the whys and hows please refer to the following resources.

Gratitude

We were quite astonished at the amazing level of support we received from the FairScale and DeepSpeed developer teams while working on integrating those projects into transformers.

In particular I’d like to thank:

from the FairScale team and:

from the DeepSpeed team for your generous and caring support and prompt resolution of the issues we have encountered.

And HuggingFace for providing access to the hardware the benchmarks were run on.

Sylvain Gugger @sgugger and Stas Bekman @stas00 worked on the integration of these projects.