In lots of Machine Learning applications, the quantity of accessible labeled data is a barrier to producing a high-performing model. The newest developments in NLP show you could overcome this limitation by providing a couple of examples at inference time with a big language model – a method often called Few-Shot Learning. On this blog post, we’ll explain what Few-Shot Learning is, and explore how a big language model called GPT-Neo, and the 🤗 Accelerated Inference API, will be used to generate your individual predictions.

What’s Few-Shot Learning?

Few-Shot Learning refers back to the practice of feeding a machine learning model with a really small amount of coaching data to guide its predictions, like a couple of examples at inference time, as opposed to plain fine-tuning techniques which require a comparatively great amount of coaching data for the pre-trained model to adapt to the specified task with accuracy.

This method has been mostly utilized in computer vision, but with among the latest Language Models, like EleutherAI GPT-Neo and OpenAI GPT-3, we are able to now use it in Natural Language Processing (NLP).

In NLP, Few-Shot Learning will be used with Large Language Models, which have learned to perform a large variety of tasks implicitly during their pre-training on large text datasets. This permits the model to generalize, that’s to know related but previously unseen tasks, with just a couple of examples.

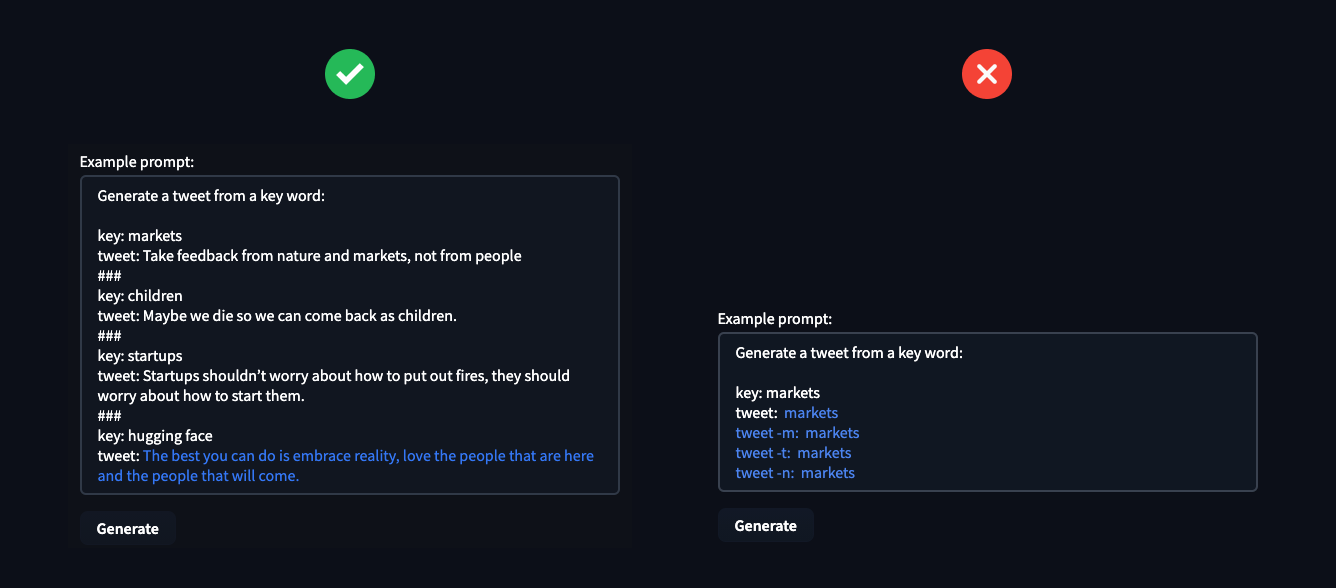

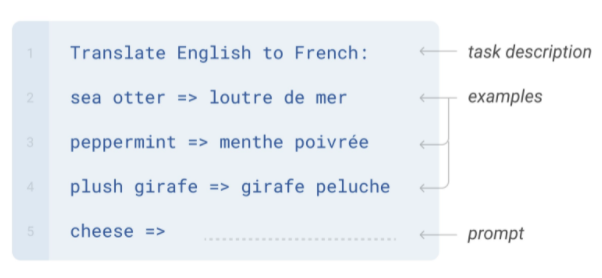

Few-Shot NLP examples consist of three primary components:

- Task Description: A brief description of what the model should do, e.g. “Translate English to French”

- Examples: A number of examples showing the model what it is anticipated to predict, e.g. “sea otter => loutre de mer”

- Prompt: The start of a brand new example, which the model should complete by generating the missing text, e.g. “cheese => “

Image from Language Models are Few-Shot Learners

Creating these few-shot examples will be tricky, since you want to articulate the “task” you wish the model to perform through them. A typical issue is that models, especially smaller ones, are very sensitive to the way in which the examples are written.

An approach to optimize Few-Shot Learning in production is to learn a typical representation for a task after which train task-specific classifiers on top of this representation.

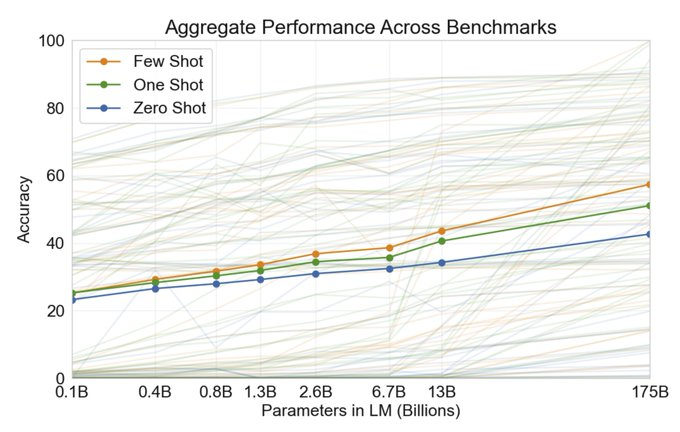

OpenAI showed within the GPT-3 Paper that the few-shot prompting ability improves with the variety of language model parameters.

Image from Language Models are Few-Shot Learners

Let’s now take a take a look at how at how GPT-Neo and the 🤗 Accelerated Inference API will be used to generate your individual Few-Shot Learning predictions!

What’s GPT-Neo?

GPT-Neo is a family of transformer-based language models from EleutherAI based on the GPT architecture. EleutherAI‘s primary goal is to coach a model that’s equivalent in size to GPT-3 and make it available to the general public under an open license.

The entire currently available GPT-Neo checkpoints are trained with the Pile dataset, a big text corpus that’s extensively documented in (Gao et al., 2021). As such, it is anticipated to operate higher on the text that matches the distribution of its training text; we recommend keeping this in mind when designing your examples.

🤗 Accelerated Inference API

The Accelerated Inference API is our hosted service to run inference on any of the ten,000+ models publicly available on the 🤗 Model Hub, or your individual private models, via easy API calls. The API includes acceleration on CPU and GPU with as much as 100x speedup in comparison with out of the box deployment of Transformers.

To integrate Few-Shot Learning predictions with GPT-Neo in your individual apps, you should use the 🤗 Accelerated Inference API with the code snippet below. You could find your API Token here, if you happen to haven’t got an account you possibly can start here.

import json

import requests

API_TOKEN = ""

def query(payload='',parameters=None,options={'use_cache': False}):

API_URL = "https://api-inference.huggingface.co/models/EleutherAI/gpt-neo-2.7B"

headers = {"Authorization": f"Bearer {API_TOKEN}"}

body = {"inputs":payload,'parameters':parameters,'options':options}

response = requests.request("POST", API_URL, headers=headers, data= json.dumps(body))

try:

response.raise_for_status()

except requests.exceptions.HTTPError:

return "Error:"+" ".join(response.json()['error'])

else:

return response.json()[0]['generated_text']

parameters = {

'max_new_tokens':25,

'temperature': 0.5,

'end_sequence': "###"

}

prompt="...."

data = query(prompt,parameters,options)

Practical Insights

Listed here are some practical insights, which aid you start using GPT-Neo and the 🤗 Accelerated Inference API.

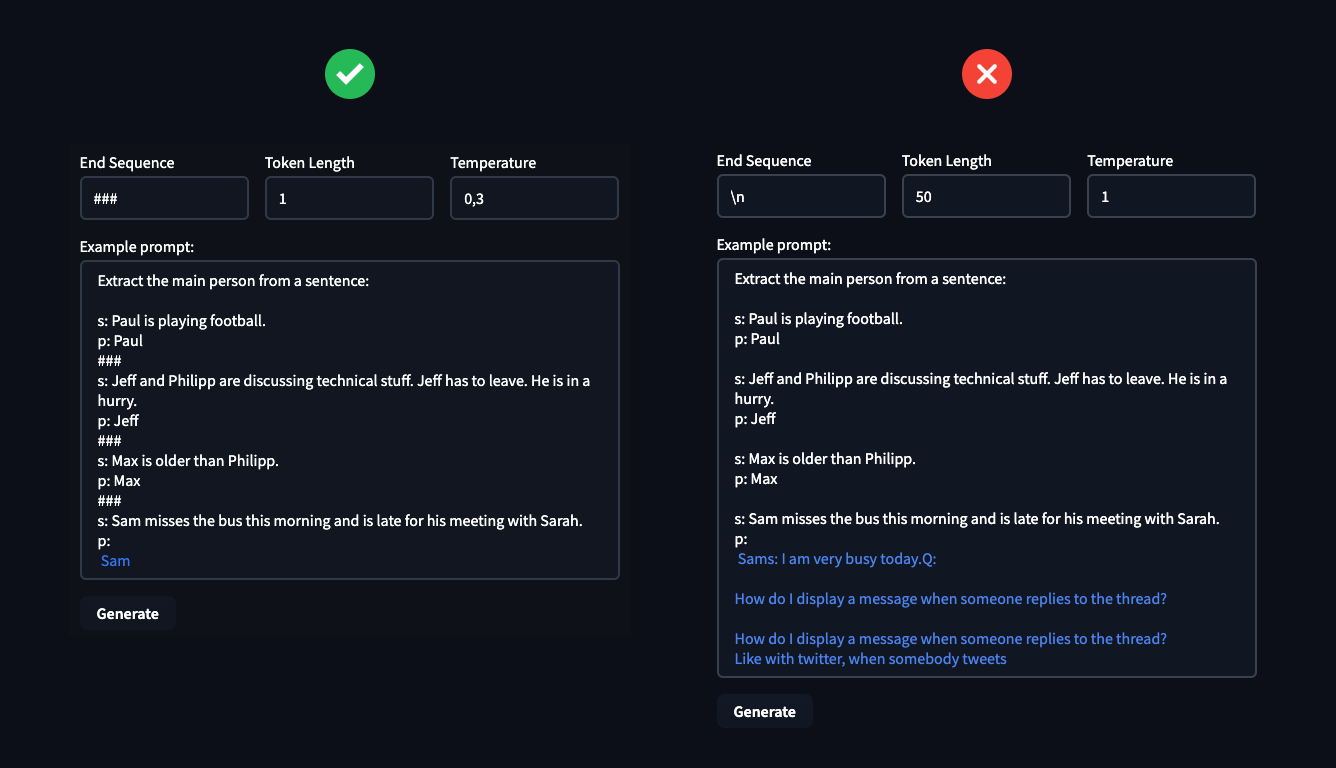

Since GPT-Neo (2.7B) is about 60x smaller than GPT-3 (175B), it doesn’t generalize as well to zero-shot problems and desires 3-4 examples to attain good results. If you provide more examples GPT-Neo understands the duty and takes the end_sequence under consideration, which allows us to regulate the generated text pretty much.

The hyperparameter End Sequence, Token Length & Temperature will be used to regulate the text-generation of the model and you should use this to your advantage to resolve the duty you wish. The Temperature controlls the randomness of your generations, lower temperature ends in less random generations and better temperature ends in more random generations.

In the instance, you possibly can see how vital it’s to define your hyperparameter. These could make the difference between solving your task or failing miserably.

Responsible Use

Few-Shot Learning is a robust technique but in addition presents unique pitfalls that have to be taken under consideration when designing uses cases.

As an example this, let’s consider the default Sentiment Evaluation setting provided within the widget. After seeing three examples of sentiment classification, the model makes the next predictions 4 times out of 5, with temperature set to 0.1:

Tweet: “I’m a disabled joyful person”

Sentiment: Negative

What could go fallacious? Imagine that you simply are using sentiment evaluation to aggregate reviews of products on an internet shopping website: a possible end result may very well be that items useful to individuals with disabilities can be routinely down-ranked – a type of automated discrimination. For more on this specific issue, we recommend the ACL 2020 paper Social Biases in NLP Models as Barriers for Individuals with Disabilities. Because Few-Shot Learning relies more directly on information and associations picked up from pre-training, it makes it more sensitive to one of these failures.

minimize the chance of harm? Listed here are some practical recommendations.

Best practices for responsible use

- Ensure people know which parts of their user experience rely upon the outputs of the ML system

- If possible, give users the power to opt-out

- Provide a mechanism for users to offer feedback on the model decision, and to override it

- Monitor feedback, especially model failures, for groups of users that could be disproportionately affected

What needs most to be avoided is to make use of the model to routinely make decisions for, or about, a user, without opportunity for a human to supply input or correct the output. Several regulations, resembling GDPR in Europe, require that users be provided an evidence for automatic decisions made about them.

To make use of GPT-Neo or any Hugging Face model in your individual application, you possibly can start a free trial of the 🤗 Accelerated Inference API.

If you happen to need assistance mitigating bias in models and AI systems, or leveraging Few-Shot Learning, the 🤗 Expert Acceleration Program can offer your team direct premium support from the Hugging Face team.