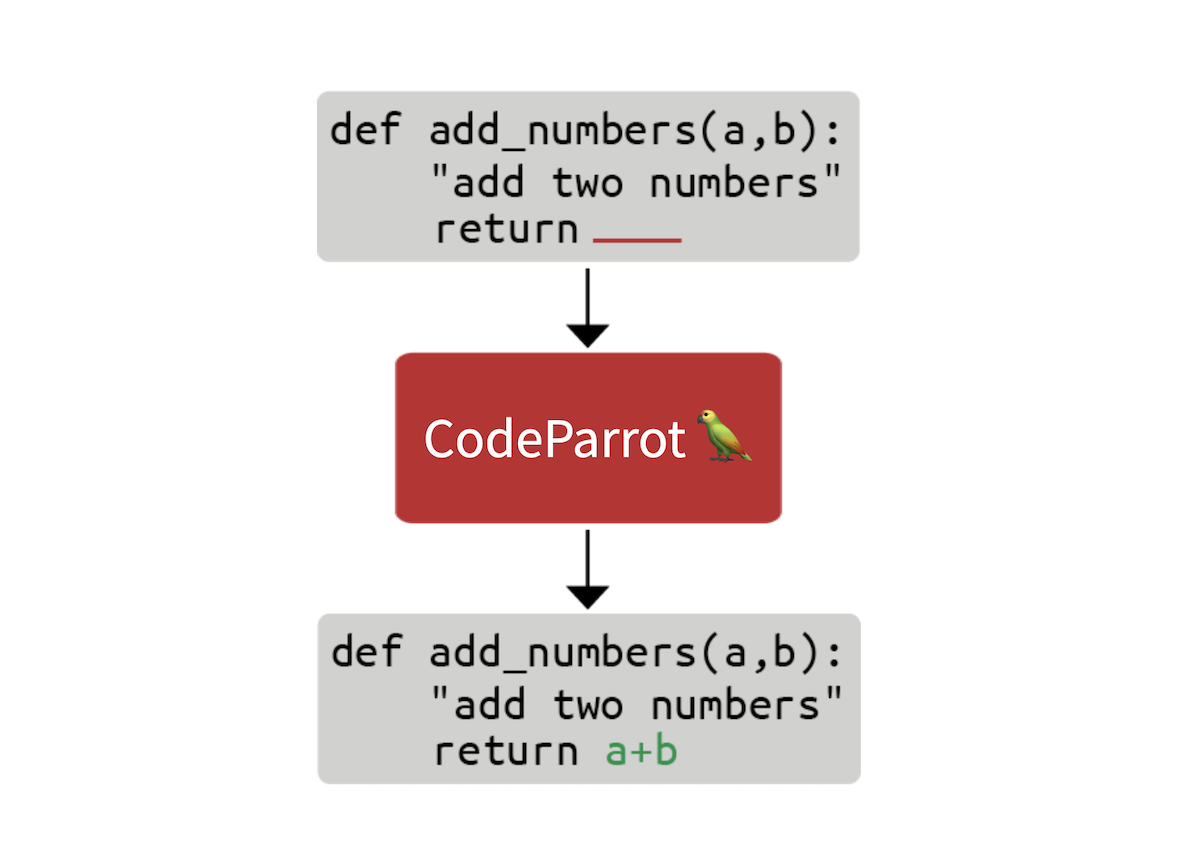

In this blog post we’ll take a look at what it takes to build the technology behind GitHub Copilot, an application that provides suggestions to programmers as they code. In this step-by-step guide, we’ll learn how to train a large GPT-2 model called CodeParrot 🦜, entirely from scratch. CodeParrot can auto-complete your Python code – give it a spin here. Let’s get to building it from scratch!

Making a Large Dataset of Source Code

The first thing we need is a large training dataset. With the goal of training a Python code generation model, we accessed the GitHub dump available on Google’s BigQuery and filtered for all Python files. The result is a 180 GB dataset with 20 million files (available here). After initial training experiments, we found that the duplicates in the dataset severely impacted the model performance. Further investigating the dataset, we found that:

- 0.1% of the unique files make up 15% of all files

- 1% of the unique files make up 35% of all files

- 10% of the unique files make up 66% of all files

You can learn more about our findings in this Twitter thread. We removed the duplicates and applied the same cleaning heuristics found in the Codex paper. Codex is the model behind Copilot and is a GPT-3 model fine-tuned on GitHub code.
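To make the deduplication and cleaning step more concrete, here is a minimal sketch. It assumes that duplicates are detected by hashing each file with all whitespace removed, and it uses line-length thresholds in the spirit of the Codex paper (mean line length above 100 or maximum line length above 1000). The function names and the toy files are hypothetical and are not taken from our preprocessing script.

```python
import hashlib
import re

WHITESPACE = re.compile(r"\s+")


def content_hash(source: str) -> str:
    """Hash a file with all whitespace removed (an assumed normalization)."""
    return hashlib.sha256(WHITESPACE.sub("", source).encode("utf-8")).hexdigest()


def deduplicate(files):
    """Keep only the first file seen for every distinct content hash."""
    seen, unique = set(), []
    for source in files:
        digest = content_hash(source)
        if digest not in seen:
            seen.add(digest)
            unique.append(source)
    return unique


def passes_line_filters(source: str, max_mean_len=100, max_line_len=1000) -> bool:
    """Line-length heuristics; the thresholds are assumptions for this sketch."""
    lines = source.splitlines() or [""]
    mean_len = sum(len(line) for line in lines) / len(lines)
    return mean_len <= max_mean_len and max(len(line) for line in lines) <= max_line_len


# Toy corpus: a near-duplicate pair, a regular file and a file with one huge line.
files = ["x = 1\n", "x  =  1\n\n", "print('hello')\n", "y = '" + "a" * 2000 + "'\n"]
cleaned = [f for f in deduplicate(files) if passes_line_filters(f)]
print(len(files), "->", len(cleaned))
```

Hashing the whitespace-stripped content catches files that differ only in formatting, which a plain byte-level comparison would miss.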

The cleaned dataset is still 50GB and is available on the Hugging Face Hub: codeparrot-clean. With that we can set up a new tokenizer and train a model.

Initializing the Tokenizer and Model

First we need a tokenizer. Let’s train one specifically on code so it splits code tokens well. We can take an existing tokenizer (e.g. GPT-2) and directly train it on our own dataset with the train_new_from_iterator() method. We then push it to the Hub. Note that we omit imports, argument parsing and logging from the code examples to keep the code blocks compact. But you’ll find the full code including preprocessing and downstream task evaluation here.

# Iterator that yields batches of raw file contents for tokenizer training
def batch_iterator(batch_size=10):
    for _ in tqdm(range(0, args.n_examples, batch_size)):
        yield [next(iter_dataset)["content"] for _ in range(batch_size)]

# Base tokenizer: train_new_from_iterator() is only available on fast tokenizers
tokenizer = AutoTokenizer.from_pretrained("gpt2")
base_vocab = list(bytes_to_unicode().values())

# Stream the dataset instead of downloading it
dataset = load_dataset("lvwerra/codeparrot-clean", split="train", streaming=True)
iter_dataset = iter(dataset)

# Train the new tokenizer and save it (optionally pushing it to the Hub)
new_tokenizer = tokenizer.train_new_from_iterator(batch_iterator(),
                                                  vocab_size=args.vocab_size,
                                                  initial_alphabet=base_vocab)
new_tokenizer.save_pretrained(args.tokenizer_name, push_to_hub=args.push_to_hub)

Learn more about tokenizers and how to build them in the Hugging Face course.

See that inconspicuous streaming=True argument? This small change has a big impact: instead of downloading the full (50GB) dataset, this will stream individual samples as needed, saving a lot of disk space! Check out the Hugging Face course for more information on streaming.
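The idea behind streaming can be sketched without any library: samples are produced lazily and only one batch is held in memory at a time. The example below is a simplified stand-in, not the implementation inside datasets; fake_stream and the n_examples argument are hypothetical. It uses itertools.islice so that the iterator stops cleanly when the stream is shorter than expected, whereas calling next() on an exhausted iterator inside a generator surfaces as a RuntimeError.

```python
from itertools import islice


def batch_iterator(samples, batch_size=10, n_examples=None):
    """Yield lists of up to `batch_size` texts from any (possibly lazy) iterable."""
    iterator = iter(samples) if n_examples is None else islice(samples, n_examples)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def fake_stream(n):
    """Stand-in for a streamed dataset: each sample is created only when requested."""
    for i in range(n):
        yield f"def f{i}(): return {i}"


batches = list(batch_iterator(fake_stream(25), batch_size=10))
print([len(b) for b in batches])
```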

Now, we initialize a new model. We’ll use the same hyperparameters as GPT-2 large (1.5B parameters) and adjust the embedding layer to fit our new tokenizer, also adding some stability tweaks. The scale_attn_by_inverse_layer_idx flag makes sure we scale the attention by the inverse of the layer index, and reorder_and_upcast_attn mainly makes sure that we compute the attention in full precision to avoid numerical issues. We push the freshly initialized model to the same repo as the tokenizer.

# Load the codeparrot tokenizer trained for Python code tokenization
tokenizer = AutoTokenizer.from_pretrained(args.tokenizer_name)

# Configuration overrides: match the new vocabulary and enable the stability tweaks
config_kwargs = {"vocab_size": len(tokenizer),
                 "scale_attn_by_inverse_layer_idx": True,
                 "reorder_and_upcast_attn": True}

# Load the model configuration and initialize a model with random weights
config = AutoConfig.from_pretrained('gpt2-large', **config_kwargs)
model = AutoModelForCausalLM.from_config(config)

# Save the model (optionally pushing it to the Hub)
model.save_pretrained(args.model_name, push_to_hub=args.push_to_hub)
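To see why the vocabulary size matters for the embedding layer, the following sketch counts the parameters of a GPT-2 style decoder by hand. It assumes the standard GPT-2 block layout with tied input and output embeddings, uses the published GPT-2 small configuration purely as a reference point, and compares it with a hypothetical vocabulary of 32768 tokens that is not the value used for CodeParrot.

```python
def gpt2_parameter_count(vocab_size, n_positions, n_embd, n_layer):
    """Count parameters of a GPT-2 style decoder with tied input/output embeddings."""
    d = n_embd
    embeddings = vocab_size * d + n_positions * d  # token + position embeddings
    attention = (d * 3 * d + 3 * d) + (d * d + d)  # fused QKV projection + output projection
    mlp = (d * 4 * d + 4 * d) + (4 * d * d + d)    # two feed-forward projections
    layer_norms = 2 * (2 * d)                      # two LayerNorms per block
    final_norm = 2 * d
    return embeddings + n_layer * (attention + mlp + layer_norms) + final_norm


# Published GPT-2 small configuration, used here only as a reference point.
small = dict(n_positions=1024, n_embd=768, n_layer=12)
base = gpt2_parameter_count(vocab_size=50257, **small)

# Hypothetical smaller code vocabulary, to show the effect on the embedding matrix.
smaller = gpt2_parameter_count(vocab_size=32768, **small)
print(base, base - smaller)
```

Only the token embedding matrix depends on the vocabulary, so the difference between the two counts is exactly the number of removed tokens times the hidden size.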

Now that we have an efficient tokenizer and a freshly initialized model we can start with the actual training loop.

Implementing the Training Loop

We train with the 🤗 Accelerate library, which allows us to scale the training from our laptop to a multi-GPU machine without changing a single line of code. We just create an accelerator and do some argument housekeeping:

accelerator = Accelerator()
acc_state = {str(k): str(v) for k, v in accelerator.state.__dict__.items()}

parser = HfArgumentParser(TrainingArguments)
args = parser.parse_args()
args = Namespace(**vars(args), **acc_state)
samples_per_step = accelerator.state.num_processes * args.train_batch_size
set_seed(args.seed)

We are now ready to train! Let’s use the huggingface_hub client library to clone the repository with the new tokenizer and model. We will check out a new branch for this experiment. With that setup, we can run many experiments in parallel and in the end we just merge the best one into the main branch.

# Clone the model repository
if accelerator.is_main_process:
    hf_repo = Repository(args.save_dir, clone_from=args.model_ckpt)

# Check out a new branch on the repository
if accelerator.is_main_process:
    hf_repo.git_checkout(run_name, create_branch_ok=True)

We can directly load the tokenizer and model from the local repository. Since we are dealing with big models we might want to turn on gradient checkpointing to decrease the GPU memory footprint during training.

# Load the model and tokenizer
model = AutoModelForCausalLM.from_pretrained(args.save_dir)
if args.gradient_checkpointing:
    model.gradient_checkpointing_enable()
tokenizer = AutoTokenizer.from_pretrained(args.save_dir)

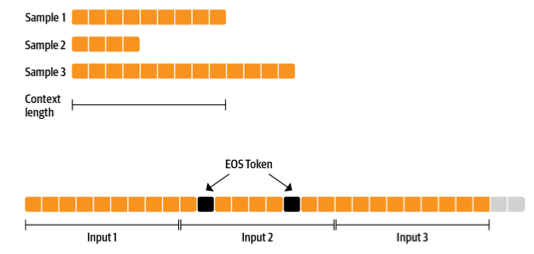

Next up is the dataset. We make training simpler with a dataset that yields examples with a fixed context size. To not waste too much data (some samples are too short or too long) we can concatenate many examples with an EOS token and then chunk them.

The more sequences we prepare together, the smaller the fraction of tokens we discard (the grey ones in the previous figure). Since we want to stream the dataset instead of preparing everything in advance we use an IterableDataset. The full dataset class looks as follows:

class ConstantLengthDataset(IterableDataset):
    def __init__(
        self, tokenizer, dataset, infinite=False, seq_length=1024, num_of_sequences=1024, chars_per_token=3.6
    ):
        self.tokenizer = tokenizer
        self.concat_token_id = tokenizer.bos_token_id
        self.dataset = dataset
        self.seq_length = seq_length
        self.input_characters = seq_length * chars_per_token * num_of_sequences
        self.epoch = 0
        self.infinite = infinite

    def __iter__(self):
        iterator = iter(self.dataset)
        more_examples = True
        while more_examples:
            buffer, buffer_len = [], 0
            while True:
                if buffer_len >= self.input_characters:
                    break
                try:
                    buffer.append(next(iterator)["content"])
                    buffer_len += len(buffer[-1])
                except StopIteration:
                    if self.infinite:
                        iterator = iter(self.dataset)
                        self.epoch += 1
                        logger.info(f"Dataset epoch: {self.epoch}")
                    else:
                        more_examples = False
                        break
            tokenized_inputs = self.tokenizer(buffer, truncation=False)["input_ids"]
            all_token_ids = []
            for tokenized_input in tokenized_inputs:
                all_token_ids.extend(tokenized_input + [self.concat_token_id])
            for i in range(0, len(all_token_ids), self.seq_length):
                input_ids = all_token_ids[i : i + self.seq_length]
                if len(input_ids) == self.seq_length:
                    yield torch.tensor(input_ids)

Texts in the buffer are tokenized in parallel and then concatenated. Chunked samples are then yielded until the buffer is empty and the process starts again. If we set infinite=True the dataset iterator restarts at its end.
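The concatenate-and-chunk step can be illustrated on toy data. The sketch below is a simplified version of what the class does once the buffer is tokenized. The helper pack_sequences and the toy token ids are hypothetical, and plain lists stand in for tensors.

```python
def pack_sequences(tokenized_texts, seq_length, concat_token_id):
    """Concatenate token lists with a separator and cut them into fixed-size chunks."""
    all_token_ids = []
    for token_ids in tokenized_texts:
        all_token_ids.extend(token_ids + [concat_token_id])
    n_chunks = len(all_token_ids) // seq_length
    chunks = [all_token_ids[i * seq_length:(i + 1) * seq_length] for i in range(n_chunks)]
    discarded = len(all_token_ids) - n_chunks * seq_length
    return chunks, discarded


# Toy "tokenized" files of length 3, 5 and 2; 0 plays the role of the separator token.
texts = [[11, 12, 13], [21, 22, 23, 24, 25], [31, 32]]
chunks, discarded = pack_sequences(texts, seq_length=4, concat_token_id=0)
print(chunks, discarded)

# Character budget of the buffer with the default arguments of the class above.
input_characters = 1024 * 3.6 * 1024
print(input_characters)
```

Only the incomplete chunk at the end of the buffer is dropped, which is why a larger buffer wastes a smaller fraction of tokens.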

def create_dataloaders(args):
    # Stream both splits instead of downloading them
    ds_kwargs = {"streaming": True}
    train_data = load_dataset(args.dataset_name_train, split="train", **ds_kwargs)
    train_data = train_data.shuffle(buffer_size=args.shuffle_buffer, seed=args.seed)
    valid_data = load_dataset(args.dataset_name_valid, split="train", **ds_kwargs)
    train_dataset = ConstantLengthDataset(tokenizer, train_data, infinite=True, seq_length=args.seq_length)
    valid_dataset = ConstantLengthDataset(tokenizer, valid_data, infinite=False, seq_length=args.seq_length)
    train_dataloader = DataLoader(train_dataset, batch_size=args.train_batch_size)
    eval_dataloader = DataLoader(valid_dataset, batch_size=args.valid_batch_size)
    return train_dataloader, eval_dataloader

train_dataloader, eval_dataloader = create_dataloaders(args)

Before we start training we need to set up the optimizer and learning rate schedule. We don’t want to apply weight decay to biases and LayerNorm weights so we use a helper function to exclude those.

def get_grouped_params(model, args, no_decay=["bias", "LayerNorm.weight"]):
    params_with_wd, params_without_wd = [], []
    for n, p in model.named_parameters():
        if any(nd in n for nd in no_decay):
            params_without_wd.append(p)
        else:
            params_with_wd.append(p)
    return [{"params": params_with_wd, "weight_decay": args.weight_decay},
            {"params": params_without_wd, "weight_decay": 0.0},]

optimizer = AdamW(get_grouped_params(model, args), lr=args.learning_rate)
lr_scheduler = get_scheduler(name=args.lr_scheduler_type, optimizer=optimizer,
                             num_warmup_steps=args.num_warmup_steps,
                             num_training_steps=args.max_train_steps,)
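The scheduler type is left to args.lr_scheduler_type above. As an illustration, here is a minimal sketch of one common choice, linear warmup followed by cosine decay, written as a plain multiplier on the base learning rate. The schedule type and the numbers below are assumptions made for this example, not the settings of our runs.

```python
import math


def cosine_with_warmup(step, num_warmup_steps, num_training_steps):
    """Learning-rate multiplier: linear warmup from 0 to 1, then cosine decay to 0."""
    if step < num_warmup_steps:
        return step / max(1, num_warmup_steps)
    progress = (step - num_warmup_steps) / max(1, num_training_steps - num_warmup_steps)
    return max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


# Hypothetical values, chosen only for illustration.
base_lr, warmup, total = 5e-4, 100, 1000
for step in (0, 50, 100, 550, 1000):
    print(step, base_lr * cosine_with_warmup(step, warmup, total))
```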

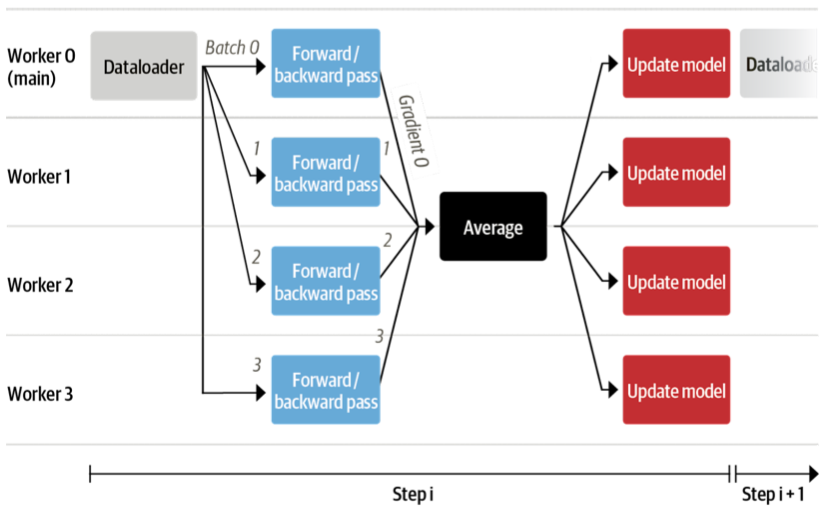

A big question that remains is how all the data and models will be distributed across several GPUs. This sounds like a complex task but actually only requires a single line of code with 🤗 Accelerate.

model, optimizer, train_dataloader, eval_dataloader = accelerator.prepare(
    model, optimizer, train_dataloader, eval_dataloader)

Under the hood it will use DistributedDataParallel, which means a batch is sent to each GPU worker, which has its own copy of the model. There the gradients are computed and then aggregated to update the model on each worker.
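The aggregation step can be illustrated with a toy example in plain Python. This is a conceptual sketch and not how DistributedDataParallel is implemented: a one-parameter linear model stands in for the network, two list slices stand in for the per-GPU batches, and averaging the local gradients stands in for the all-reduce.

```python
def gradient(weight, batch):
    """Gradient of the mean squared error of y = weight * x over a batch of (x, y) pairs."""
    return sum(2 * (weight * x - y) * x for x, y in batch) / len(batch)


weight = 0.5
full_batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]

# Each "worker" holds its own copy of the weight and sees an equally sized shard.
shards = [full_batch[:2], full_batch[2:]]
local_grads = [gradient(weight, shard) for shard in shards]

# Averaging the local gradients gives every worker the same update.
averaged = sum(local_grads) / len(local_grads)
print(averaged, gradient(weight, full_batch))
```

With equally sized shards the averaged gradient matches the gradient of the full batch, so all model copies stay in sync after the optimizer step.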

We also want to evaluate the model from time to time on the validation set, so let’s write a function to do just that. This is done automatically in a distributed fashion and we just need to gather all the losses from the workers. We also want to report the perplexity.

def evaluate(args):
    model.eval()
    losses = []
    for step, batch in enumerate(eval_dataloader):
        with torch.no_grad():
            outputs = model(batch, labels=batch)
        # Repeat the scalar batch loss so that gather() returns one value per sample
        loss = outputs.loss.repeat(args.valid_batch_size)
        losses.append(accelerator.gather(loss))
        if args.max_eval_steps > 0 and step >= args.max_eval_steps:
            break
    loss = torch.mean(torch.cat(losses))
    try:
        perplexity = torch.exp(loss)
    except OverflowError:
        # Keep a tensor here so that .item() below still works
        perplexity = torch.tensor(float("inf"))
    return loss.item(), perplexity.item()
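The relation between loss and perplexity is simple enough to check by hand: perplexity is the exponential of the mean cross-entropy loss. The sketch below uses plain Python floats instead of tensors and a hypothetical helper name. Note that math.exp raises OverflowError for very large inputs, whereas torch.exp returns inf.

```python
import math


def perplexity_from_losses(losses):
    """Perplexity is the exponential of the mean cross-entropy loss (in nats)."""
    mean_loss = sum(losses) / len(losses)
    try:
        return math.exp(mean_loss)
    except OverflowError:
        # Unlike torch.exp, math.exp raises instead of returning inf.
        return float("inf")


# A mean loss of log(2) means the model is as uncertain as a fair coin flip per token.
print(perplexity_from_losses([math.log(2.0)] * 4))
print(perplexity_from_losses([1000.0]))
```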

We are now ready to write the main training loop. It will look pretty much like a normal PyTorch training loop. Here and there you can see that we use the accelerator functions rather than native PyTorch. Also, we push the model to the branch after each evaluation.

# Train the model
model.train()
completed_steps = 0
for step, batch in enumerate(train_dataloader, start=1):
    loss = model(batch, labels=batch, use_cache=False).loss
    loss = loss / args.gradient_accumulation_steps
    accelerator.backward(loss)
    if step % args.gradient_accumulation_steps == 0:
        accelerator.clip_grad_norm_(model.parameters(), 1.0)
        optimizer.step()
        lr_scheduler.step()
        optimizer.zero_grad()
        completed_steps += 1
    if step % args.save_checkpoint_steps == 0:
        eval_loss, perplexity = evaluate(args)
        accelerator.wait_for_everyone()
        unwrapped_model = accelerator.unwrap_model(model)
        unwrapped_model.save_pretrained(args.save_dir, save_function=accelerator.save)
        if accelerator.is_main_process:
            hf_repo.push_to_hub(commit_message=f"step {step}")
        model.train()
    if completed_steps >= args.max_train_steps:
        break

When we call wait_for_everyone() and unwrap_model() we make sure that all workers are ready and any model layers that have been added by prepare() earlier are removed. We also use gradient accumulation and gradient clipping, which are easily implemented. Lastly, after training is complete we run a final evaluation, save the final model and push it to the Hub.

# Evaluate and save the last checkpoint
logger.info("Evaluating and saving model after training")
eval_loss, perplexity = evaluate(args)
log_metrics(step, {"loss/eval": eval_loss, "perplexity": perplexity})
accelerator.wait_for_everyone()
unwrapped_model = accelerator.unwrap_model(model)
unwrapped_model.save_pretrained(args.save_dir, save_function=accelerator.save)
if accelerator.is_main_process:
    hf_repo.push_to_hub(commit_message="final model")
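The gradient accumulation used in the training loop can be checked on a toy problem: summing the gradients of several micro-batches, each scaled by the number of accumulation steps, gives the same gradient as one large batch. The sketch below is an illustration in plain Python. The one-parameter model, the data and the helper samples_per_optimizer_step are all hypothetical.

```python
def mse_gradient(weight, batch):
    """Gradient of the mean squared error of y = weight * x over a batch of (x, y) pairs."""
    return sum(2 * (weight * x - y) * x for x, y in batch) / len(batch)


weight = 0.0
data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0), (4.0, 12.0), (5.0, 15.0), (6.0, 18.0)]
micro_batch_size, accumulation_steps = 2, 3

accumulated = 0.0
for step in range(accumulation_steps):
    micro_batch = data[step * micro_batch_size:(step + 1) * micro_batch_size]
    # Dividing by the number of accumulation steps mirrors `loss / gradient_accumulation_steps`.
    accumulated += mse_gradient(weight, micro_batch) / accumulation_steps

print(accumulated, mse_gradient(weight, data))


def samples_per_optimizer_step(num_processes, train_batch_size, accumulation_steps):
    """Sequences that contribute to one optimizer update across all workers."""
    return num_processes * train_batch_size * accumulation_steps
```

The helper at the end shows how the effective batch size grows with the number of processes and accumulation steps, for example samples_per_optimizer_step(16, 2, 16) gives 512 sequences per update for these made-up settings.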

Done! That’s all the code to train a full GPT-2 model from scratch with as little as 150 lines. We did not show the imports and logs of the scripts to make the code a little bit more compact. Now let’s actually train it!

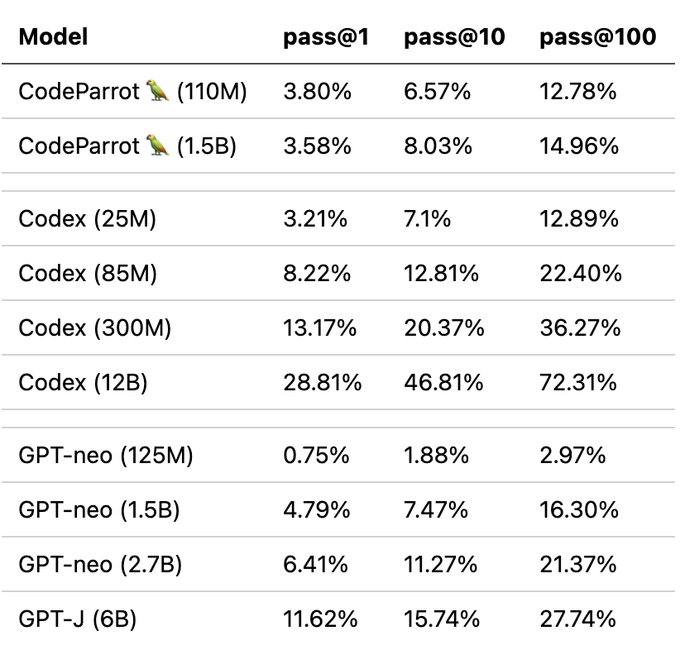

With this code we trained models for our upcoming book on Transformers and NLP: a 110M and 1.5B parameter GPT-2 model. We used a 16 x A100 GPU machine to train these models for 1 day and 1 week, respectively. Enough time to get a coffee and read a book or two!

Evaluation

This is still a relatively short training time for pretraining, but we can already observe good downstream performance compared to similar models. We evaluated the models on OpenAI’s HumanEval benchmark, which was introduced in the Codex paper. It measures the performance of code generation models on almost 200 coding challenges.

Note that we trained CodeParrot on roughly 25-30B tokens whereas GPT-neo was trained on 300B tokens and Codex on 300B (GPT-3 checkpoint) + 100B (code) tokens. So you definitely get a good bang for your buck here.

But what is this pass@k metric exactly? Simply put, it measures the probability of at least one program passing the unit tests of a programming problem given k candidate generations from the model. Naturally, the metric increases with k as more candidates can potentially solve the coding challenge. You can use the metric with datasets:

import os
from datasets import load_metric

# code_eval executes model-generated code, so it has to be enabled explicitly
os.environ["HF_ALLOW_CODE_EVAL"] = "1"

code_eval = load_metric("code_eval")
test_cases = ["assert add(2,3)==5"]
candidates = [["def add(a,b): return a*b", "def add(a, b): return a+b"]]
pass_at_k, results = code_eval.compute(references=test_cases, predictions=candidates, k=[1, 2])

print(pass_at_k)
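For a single problem, the Codex paper estimates pass@k from n generated samples, of which c pass the tests, as 1 - C(n - c, k) / C(n, k). The sketch below implements that formula directly with math.comb. It is a minimal illustration and not the code used inside the metric, and the function name is our own.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n generated samples of which c pass the tests."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)


# Two candidates, one of which is correct, as in the add() example above.
print(pass_at_k(n=2, c=1, k=1), pass_at_k(n=2, c=1, k=2))
```

With two candidates and one correct solution, a single draw succeeds half of the time, while drawing both candidates always includes the correct one.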

You can also load OpenAI’s HumanEval dataset with datasets:

from datasets import load_dataset

dataset = load_dataset("openai_humaneval")

Now let’s have a look at what we get after anxiously waiting for so long!

Results

Let’s start slow and check if we can prompt the model to write a function to return the size of a file given its path:

Prompt:

def get_files_size(filename):

Completion:

    """
    Returns the file size of a file.

    :param filepath: The path to the file.
    :type filepath: str
    :return: The file size.
    :rtype: int
    """
    return os.path.getsize(filepath)

So far so good, but let’s take this a step further and try something a bit more interesting. One of the less exciting aspects of building production-ready code is writing unit tests. Let’s see if we can use CodeParrot to do this for us!

Prompt:

def is_even(value):
    """Returns True if value is an even number."""
    return value % 2 == 0

import unittest

Completion:

class TestIsEven(unittest.TestCase):
    """Tests for is_even function."""

    def test_is_even(self):
        """Test is_even function."""
        self.assertTrue(is_even(0))
        self.assertTrue(is_even(1))
        self.assertTrue

Although the test condition itself does not look quite right, the model has built all the boilerplate code for us. This alone could already be quite useful and save us a couple of minutes!
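For comparison, here is what the generated boilerplate could look like once the assertions are fixed by hand. This is our own manual correction and not model output; the chosen test values are arbitrary.

```python
import unittest


def is_even(value):
    """Returns True if value is an even number."""
    return value % 2 == 0


class TestIsEven(unittest.TestCase):
    """Tests for is_even function."""

    def test_even_numbers(self):
        for value in (0, 2, -4):
            self.assertTrue(is_even(value))

    def test_odd_numbers(self):
        # The generated test asserted is_even(1), which is the opposite of what we want.
        for value in (1, 3, -5):
            self.assertFalse(is_even(value))


suite = unittest.defaultTestLoader.loadTestsFromTestCase(TestIsEven)
result = unittest.TextTestRunner(verbosity=0).run(suite)
print(result.wasSuccessful())
```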

But what we really want to do is build better transformer models. Let’s use CodeParrot to help us do that:

Prompt:

from transformers import AutoTokenizer, AutoModelForSequenceClassification

Completion:

tokenizer = AutoTokenizer.from_pretrained('bert-base-uncased')

model = AutoModelForSequenceClassification.from_pretrained('bert-base-uncased')

Looking at a few examples is nice, but by now you probably want to try it yourself. We prepared a few online demos just for that!

Demos

You can play with the models in two demos we added to Hugging Face Spaces. With the first you can quickly generate code with the model and with the second you can highlight your code with the model to spot bugs!

You can also directly use the models from the transformers library:

from transformers import pipeline

pipe = pipeline('text-generation', model='lvwerra/codeparrot')

pipe('def hello_world():')

Summary

In this short blog post we walked through all the steps involved in training a large GPT-2 model called CodeParrot 🦜 for code generation. Using 🤗 Accelerate we built a training script with less than 200 lines of code that we can effortlessly scale across many GPUs. With that you can now train your own GPT-2 model!

This post gives a brief overview of CodeParrot 🦜, but if you are interested in diving deeper into how to pretrain these models, we recommend reading its dedicated chapter in the upcoming book on Transformers and NLP. This chapter provides many more details around building custom datasets, design considerations when training a new tokenizer, and architecture choice.