In this tutorial, you will learn how to pre-train BERT-base from scratch using a Habana Gaudi-based DL1 instance on AWS to take advantage of the cost-performance benefits of Gaudi. We will use the Hugging Face Transformers, Optimum Habana and Datasets libraries to pre-train a BERT-base model using masked-language modeling, one of the two original BERT pre-training tasks. Before we get started, we need to set up the deep learning environment.


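You will learn how to:

1. Prepare the dataset
2. Train a Tokenizer
3. Preprocess the dataset
4. Pre-train BERT on Habana Gaudi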

Note: Steps 1 to 3 can/should be run on a different instance size since those are CPU-intensive tasks.

Requirements

Before we start, make sure you meet the following requirements.

Helpful Resources

What’s BERT?

BERT, short for Bidirectional Encoder Representations from Transformers, is a Machine Learning (ML) model for natural language processing. It was developed in 2018 by researchers at Google AI Language and serves as a Swiss Army knife solution to 11+ of the most common language tasks, such as sentiment analysis and named entity recognition.

Read more about BERT in our BERT 101 🤗 State Of The Art NLP Model Explained blog.

What is Masked Language Modeling (MLM)?

MLM enables/enforces bidirectional learning from text by masking (hiding) a word in a sentence and forcing BERT to bidirectionally use the words on either side of the covered word to predict the masked word.

Masked Language Modeling Example:

“Dang! I’m out fishing and an enormous trout just [MASK] my line!”
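BERT's actual masking scheme is a little more nuanced than hiding a single word. As described in the BERT paper, 15% of the token positions are selected for prediction. Of those, 80% are replaced with [MASK], 10% with a random token, and 10% are left unchanged. The sketch below shows this rule in plain Python on whitespace-split words. That is an assumption made for readability: real training works on token ids, and in transformers this logic is provided by DataCollatorForLanguageModeling. The mask_tokens helper is hypothetical.

```python
import random

MASK_TOKEN = "[MASK]"

def mask_tokens(tokens, vocab, mlm_probability=0.15, seed=None):
    """Hypothetical helper: BERT-style masking on a list of string tokens.

    Returns (inputs, labels). labels holds the original token at positions
    selected for prediction and None everywhere else.
    """
    rng = random.Random(seed)
    inputs = list(tokens)
    labels = [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mlm_probability:
            continue  # position not selected, nothing to predict here
        labels[i] = token  # the model has to recover the original token
        roll = rng.random()
        if roll < 0.8:
            inputs[i] = MASK_TOKEN  # 80% of selected positions: [MASK]
        elif roll < 0.9:
            inputs[i] = rng.choice(vocab)  # 10%: a random token
        # remaining 10%: keep the original token unchanged
    return inputs, labels

tokens = "dang i am out fishing and a huge trout just pulled my line".split()
inputs, labels = mask_tokens(tokens, vocab=["the", "a", "fish"], seed=42)
print(inputs)
print(labels)
```

Positions whose label is None do not contribute to the loss. In transformers, the equivalent label for such positions is -100.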

Read more about Masked Language Modeling here.

Let’s start. 🚀

Note: Steps 1 to 3 were run on an AWS c6i.12xlarge instance.

1. Prepare the dataset

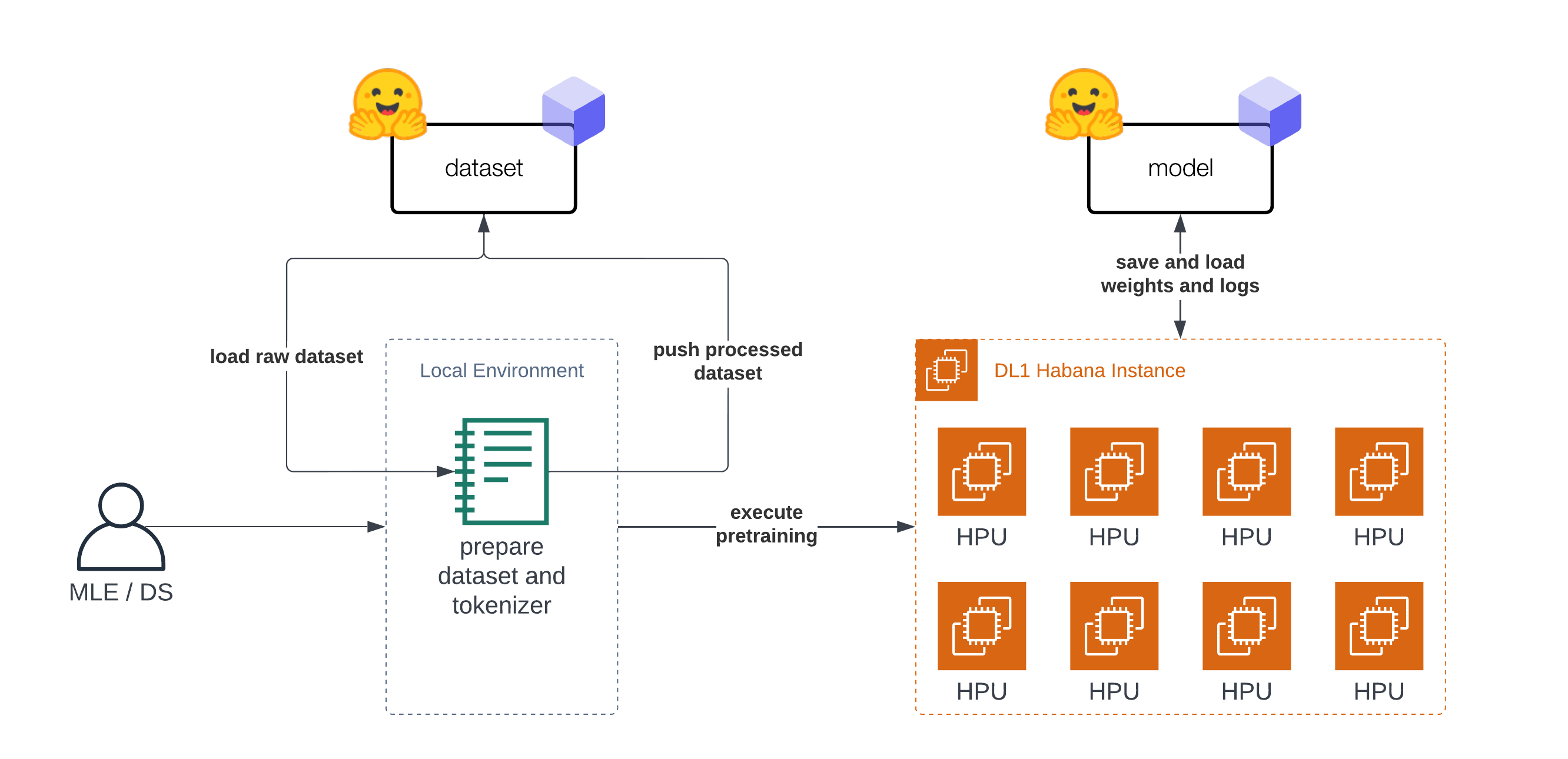

The tutorial is split into two parts. The first part (steps 1-3) is about preparing the dataset and tokenizer. The second part (step 4) is about pre-training BERT on the prepared dataset. Before we can start with the dataset preparation, we need to set up our development environment. As mentioned in the introduction, you don't need to prepare the dataset on the DL1 instance and can use your notebook or desktop computer instead.

First, we install transformers and datasets, plus git-lfs to push our tokenizer and dataset to the Hugging Face Hub for later use.

```python
!pip install transformers datasets
!sudo apt-get install git-lfs
```

To complete our setup, let's log into the Hugging Face Hub so we can push our dataset, tokenizer, model artifacts, logs and metrics to the Hub during and after training.

To be able to push our model to the Hub, you need to register on the Hugging Face Hub.

We will use the notebook_login util from the huggingface_hub package to log into our account. You can get your token in the settings under Access Tokens.

```python
from huggingface_hub import notebook_login

notebook_login()
```

Since we are now logged in, let's get the user_id, which will be used to push the artifacts.

```python
from huggingface_hub import HfApi

user_id = HfApi().whoami()["name"]

print(f"user id '{user_id}' will be used during the example")
```

The original BERT was pre-trained on the Wikipedia and BookCorpus datasets. Both datasets are available on the Hugging Face Hub and can be loaded with datasets.

Note: For Wikipedia we will use the 20220301 dump, which is different from the original split.

As a first step, we load the datasets and merge them into one big dataset.

```python
from datasets import concatenate_datasets, load_dataset

bookcorpus = load_dataset("bookcorpus", split="train")
wiki = load_dataset("wikipedia", "20220301.en", split="train")
# keep only the "text" column so both datasets share the same schema
wiki = wiki.remove_columns([col for col in wiki.column_names if col != "text"])

# concatenate_datasets requires identical features
assert bookcorpus.features.type == wiki.features.type
raw_datasets = concatenate_datasets([bookcorpus, wiki])
```

We are not going to do any advanced dataset preparation, like de-duplication, filtering or any other pre-processing. If you are planning to use this notebook to train your own BERT model from scratch, I highly recommend including those data preparation steps in your workflow. This will help you improve your language model.
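As a starting point for such a step, here is a minimal sketch of exact de-duplication. It assumes that documents differing only in case or whitespace count as duplicates. The normalize and deduplicate helpers are hypothetical and only catch exact copies after normalization. Detecting near-duplicates requires more elaborate techniques that are not shown here.

```python
import hashlib

def normalize(text):
    # lowercase and collapse whitespace so trivial variants hash identically
    return " ".join(text.lower().split())

def deduplicate(texts):
    """Hypothetical helper: keep the first occurrence of each normalized text."""
    seen = set()
    unique = []
    for text in texts:
        digest = hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # an identical (normalized) document was already kept
        seen.add(digest)
        unique.append(text)
    return unique

docs = ["Hello  world", "hello world", "Other text", "Hello world"]
print(deduplicate(docs))
```

To apply the same idea to raw_datasets, you could wrap the check in Dataset.filter. Keep it single-process, though: with num_proc > 1, every worker would have its own seen set and would miss duplicates held by the other workers.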

2. Train a Tokenizer

To be able to train our model, we need to convert our text into a tokenized format. Most Transformer models come with a pre-trained tokenizer, but since we are pre-training our model from scratch, we also need to train a tokenizer on our data. We can train a tokenizer on our data with transformers and the BertTokenizerFast class.

More information about training a new tokenizer can be found in our Hugging Face Course.

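```python
from tqdm import tqdm
from transformers import BertTokenizerFast

# repository id for the new tokenizer on the Hugging Face Hub
tokenizer_id = "bert-base-uncased-2022-habana"

# create a python generator that yields the raw text in batches
def batch_iterator(batch_size=10000):
    for i in tqdm(range(0, len(raw_datasets), batch_size)):
        yield raw_datasets[i : i + batch_size]["text"]

# load the original tokenizer; its configuration and special tokens are reused
tokenizer = BertTokenizerFast.from_pretrained("bert-base-uncased")
```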

We can start training the tokenizer with train_new_from_iterator().

```python
bert_tokenizer = tokenizer.train_new_from_iterator(text_iterator=batch_iterator(), vocab_size=32_000)
bert_tokenizer.save_pretrained("tokenizer")
```

We push the tokenizer to the Hugging Face Hub so that we can use it later when training our model.

```python
bert_tokenizer.push_to_hub(tokenizer_id)
```
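To build intuition for how the trained vocabulary is used, the sketch below shows the greedy longest-match-first lookup that WordPiece (the model behind BertTokenizerFast) applies to each word. It takes the longest piece found in the vocabulary, marks continuation pieces with ##, and falls back to [UNK] when nothing matches. This is a simplified illustration with a made-up toy vocabulary. The real tokenizer also normalizes the text (e.g. lowercasing), splits on whitespace and punctuation first, and caps word length.

```python
def wordpiece_tokenize(word, vocab, unk_token="[UNK]", prefix="##"):
    """Simplified greedy longest-match-first WordPiece split of a single word."""
    tokens = []
    start = 0
    while start < len(word):
        end = len(word)
        piece = None
        # try the longest remaining substring first, then shrink it from the right
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = prefix + candidate  # continuation pieces carry "##"
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return [unk_token]  # no piece matched, so the whole word is unknown
        tokens.append(piece)
        start = end
    return tokens

toy_vocab = {"hab", "##ana", "##an", "##a", "gaudi"}  # hypothetical toy vocabulary
print(wordpiece_tokenize("habana", toy_vocab))
print(wordpiece_tokenize("xyz", toy_vocab))
```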

3. Preprocess the dataset

Before we can start training our model, the last step is to pre-process/tokenize our dataset. We will use our trained tokenizer to tokenize the dataset and then push it to the Hub so we can load it easily later during training. The tokenization process is kept pretty simple: documents longer than 512 tokens are truncated and not split into several documents.

```python
import multiprocessing

from transformers import AutoTokenizer

# load the tokenizer trained in step 2
tokenizer = AutoTokenizer.from_pretrained("tokenizer")
num_proc = multiprocessing.cpu_count()
print(f"The max length for the tokenizer is: {tokenizer.model_max_length}")

def tokenize_function(examples):
    # documents longer than model_max_length are truncated, not split
    tokenized_inputs = tokenizer(
        examples["text"], return_special_tokens_mask=True, truncation=True, max_length=tokenizer.model_max_length
    )
    return tokenized_inputs

# tokenize in parallel and drop the raw text column
tokenized_datasets = raw_datasets.map(tokenize_function, batched=True, remove_columns=["text"], num_proc=num_proc)
tokenized_datasets.features
```

As a data processing function, we concatenate all texts from our dataset and generate chunks of tokenizer.model_max_length (512) tokens.

```python
from itertools import chain

# concatenate all texts of a batch and split them into chunks of model_max_length
def group_texts(examples):
    # concatenate all texts
    concatenated_examples = {k: list(chain(*examples[k])) for k in examples.keys()}
    total_length = len(concatenated_examples[list(examples.keys())[0]])
    # drop the small remainder that does not fill a whole chunk
    if total_length >= tokenizer.model_max_length:
        total_length = (total_length // tokenizer.model_max_length) * tokenizer.model_max_length
    # split into chunks of model_max_length
    result = {
        k: [t[i : i + tokenizer.model_max_length] for i in range(0, total_length, tokenizer.model_max_length)]
        for k, t in concatenated_examples.items()
    }
    return result

tokenized_datasets = tokenized_datasets.map(group_texts, batched=True, num_proc=num_proc)
# shuffle the dataset
tokenized_datasets = tokenized_datasets.shuffle(seed=34)

print(f"the dataset contains in total {len(tokenized_datasets)*tokenizer.model_max_length} tokens")
```
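To see what group_texts does to a batch, here is the same concatenate-and-chunk logic with the block size passed in explicitly, so it can run on toy data without a tokenizer. The chunk_examples name and the toy batch are illustrative only.

```python
from itertools import chain

def chunk_examples(examples, block_size):
    """Same logic as group_texts, with an explicit block size (illustrative)."""
    # flatten each column (input_ids, attention_mask, ...) into one long list
    concatenated = {k: list(chain(*examples[k])) for k in examples.keys()}
    total_length = len(concatenated[list(examples.keys())[0]])
    # drop the remainder that does not fill a whole block
    if total_length >= block_size:
        total_length = (total_length // block_size) * block_size
    return {
        k: [t[i : i + block_size] for i in range(0, total_length, block_size)]
        for k, t in concatenated.items()
    }

batch = {
    "input_ids": [[1, 2, 3], [4, 5, 6, 7], [8, 9]],
    "attention_mask": [[1, 1, 1], [1, 1, 1, 1], [1, 1]],
}
# input_ids become [[1, 2, 3, 4], [5, 6, 7, 8]]; token 9 is dropped
print(chunk_examples(batch, block_size=4))
```

Because map(batched=True) passes batches to the function (1,000 examples by default), the leftover tokens are dropped once per batch, not once for the whole dataset.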

The last step before we can start our training is to push our prepared dataset to the Hub.

```python
dataset_id = f"{user_id}/processed_bert_dataset"
tokenized_datasets.push_to_hub(dataset_id)
```

4. Pre-train BERT on Habana Gaudi

In this example, we use Habana Gaudi on AWS via the DL1 instance to run the pre-training. We will use the Remote Runner toolkit to easily launch our pre-training on a remote DL1 instance from our local setup. You can check out Deep Learning setup made easy with EC2 Remote Runner and Habana Gaudi if you want to know more about how this works.

```python
!pip install rm-runner
```

When using GPUs, you would use the Trainer and TrainingArguments. Since we are going to run our training on Habana Gaudi, we leverage the optimum-habana library and use the GaudiTrainer and GaudiTrainingArguments instead. The GaudiTrainer is a wrapper around the Trainer that allows you to pre-train or fine-tune a transformer model on Habana Gaudi instances.

```diff
-from transformers import Trainer, TrainingArguments
+from optimum.habana import GaudiTrainer, GaudiTrainingArguments

 # define the training arguments
-training_args = TrainingArguments(
+training_args = GaudiTrainingArguments(
+    use_habana=True,
+    use_lazy_mode=True,
+    gaudi_config_name=path_to_gaudi_config,
     ...
 )

 # Initialize our Trainer
-trainer = Trainer(
+trainer = GaudiTrainer(
     model=model,
     args=training_args,
     train_dataset=train_dataset,
     ... # other arguments
 )
```

The DL1 instance we use has 8 available HPU cores, meaning we can leverage distributed data-parallel training for our model.
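The idea behind distributed data-parallel training can be illustrated with a toy example. Each worker computes the gradient on its own shard of the batch, and averaging those gradients (the job of an all-reduce across devices) gives the same result as a single large batch. The sketch below uses a one-parameter linear model in plain Python, with 8 workers of 32 examples each to mirror the 8 HPU cores and the per_device_train_batch_size used later in this post. It is a conceptual illustration that assumes equal-size shards, not how optimum-habana or PyTorch implement it.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to the scalar weight w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)

def data_parallel_grad(w, xs, ys, world_size):
    """Average of per-worker gradients; assumes len(xs) divides evenly."""
    shard = len(xs) // world_size
    local_grads = [
        mse_grad(w, xs[r * shard:(r + 1) * shard], ys[r * shard:(r + 1) * shard])
        for r in range(world_size)
    ]
    # stands in for the all-reduce that averages gradients across devices
    return sum(local_grads) / world_size

per_device_batch = 32
world_size = 8
global_batch = per_device_batch * world_size  # 256 examples per optimizer step
xs = [i / global_batch for i in range(global_batch)]
ys = [3.0 * x + 1.0 for x in xs]
print(data_parallel_grad(0.5, xs, ys, world_size), mse_grad(0.5, xs, ys))
```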

To run our training as distributed training, we need to create a training script that can be launched with multiprocessing to run on all HPUs.

We have created a run_mlm.py script implementing masked-language modeling using the GaudiTrainer. To execute our distributed training, we use the DistributedRunner from optimum-habana and pass our arguments. Alternatively, you can check out gaudi_spawn.py in the optimum-habana repository.

Before we can start our training, we need to define the hyperparameters we want to use. We leverage the Hugging Face Hub integration of the GaudiTrainer to automatically push our checkpoints, logs and metrics to a repository during training.

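```python
from huggingface_hub import HfFolder

# hyperparameters, which are passed to run_mlm.py as command-line arguments
hyperparameters = {
    "model_config_id": "bert-base-uncased",
    "dataset_id": "philschmid/processed_bert_dataset",  # replace with your dataset from step 3
    "tokenizer_id": "philschmid/bert-base-uncased-2022-habana",  # replace with your tokenizer from step 2
    "gaudi_config_id": "philschmid/bert-base-uncased-2022-habana",
    "repository_id": "bert-base-uncased-2022",
    "hf_hub_token": HfFolder.get_token(),  # used to push checkpoints to the Hub
    "max_steps": 100_000,
    "per_device_train_batch_size": 32,
    "learning_rate": 5e-5,
}
# turn the dictionary into a string of "--key value" flags
hyperparameters_string = " ".join(f"--{key} {value}" for key, value in hyperparameters.items())
```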
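The hyperparameters_string simply turns the dictionary into command-line flags. The sketch below shows how a training script could read a subset of those flags back with argparse. The parser is hypothetical; the actual run_mlm.py may define its arguments differently (for example via transformers' HfArgumentParser).

```python
import argparse

# subset of the hyperparameters above (the token is left out on purpose)
hyperparameters = {
    "model_config_id": "bert-base-uncased",
    "max_steps": 100_000,
    "per_device_train_batch_size": 32,
    "learning_rate": 5e-5,
}
hyperparameters_string = " ".join(f"--{key} {value}" for key, value in hyperparameters.items())

# hypothetical parser: the real run_mlm.py may parse its arguments differently
parser = argparse.ArgumentParser()
parser.add_argument("--model_config_id", type=str)
parser.add_argument("--max_steps", type=int)
parser.add_argument("--per_device_train_batch_size", type=int)
parser.add_argument("--learning_rate", type=float)

args = parser.parse_args(hyperparameters_string.split())
print(hyperparameters_string)
print(args)
```

Because the string is split on spaces, a value that contains a space would break the flags. In that case, wrap each value in shlex.quote. Also keep in mind that hf_hub_token ends up in plain text in the launch command.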

We can start our training by creating an EC2RemoteRunner and then launching it. This will start our AWS EC2 DL1 instance and run our run_mlm.py script on it using the huggingface/optimum-habana container (pinned to the 4.21.1-pt1.11.0-synapse1.5.0 tag in the code).

```python
from rm_runner import EC2RemoteRunner

# create the EC2 remote runner
runner = EC2RemoteRunner(
    instance_type="dl1.24xlarge",
    profile="hf-sm",
    region="us-east-1",
    container="huggingface/optimum-habana:4.21.1-pt1.11.0-synapse1.5.0",
)

# launch the distributed training on all 8 HPUs
runner.launch(
    command=f"python3 gaudi_spawn.py --use_mpi --world_size=8 run_mlm.py {hyperparameters_string}",
    source_dir="scripts",
)
```

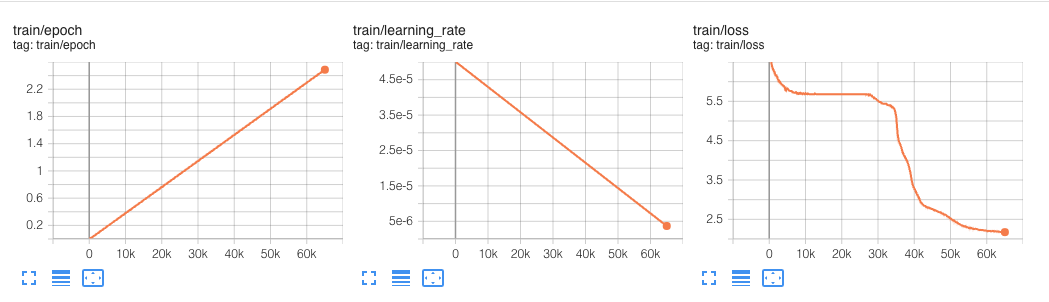

This experiment ran for 60k steps.

In our hyperparameters we defined a max_steps property, which limited the pre-training to 100,000 steps. The 100,000 steps with a global batch size of 256 took around 12.5 hours.

BERT was originally pre-trained for 1 million steps with a global batch size of 256:

We train with batch size of 256 sequences (256 sequences * 512 tokens = 128,000 tokens/batch) for 1,000,000 steps, which is approximately 40 epochs over the 3.3 billion word corpus.

This means that a full pre-training would take around 125 hours (12.5 hours * 10) and would cost us around $1,650 using Habana Gaudi on AWS, which is extremely cheap.
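The extrapolation above is simple arithmetic, reproduced here with the numbers from this post. It assumes throughput stays constant for the whole 1M-step run. The implied_hourly_rate is derived from the ~$1,650 estimate, not taken from AWS pricing.

```python
# numbers taken from this post
steps_run = 100_000       # max_steps used in this tutorial
hours_run = 12.5          # wall-clock time for those steps
steps_full = 1_000_000    # steps used for the original BERT pre-training
gaudi_total_cost = 1_650  # approximate cost of a full run on Gaudi, in $
seq_len = 512             # tokens per sequence

global_batch = 32 * 8                  # per_device_train_batch_size * 8 HPU cores
tokens_per_batch = global_batch * seq_len
full_hours = hours_run * steps_full / steps_run
implied_hourly_rate = gaudi_total_cost / full_hours

print(global_batch, tokens_per_batch, full_hours, round(implied_hourly_rate, 2))
```

Here tokens_per_batch comes out at 131,072; the BERT paper rounds this to 128,000.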

For comparison, the DeepSpeed team, which holds the record for the fastest BERT pre-training, reported that pre-training BERT on 1 DGX-2 (powered by 16 NVIDIA V100 GPUs with 32GB of memory each) takes around 33.25 hours.

To compare the cost, we can use the p3dn.24xlarge as a reference, which comes with 8x NVIDIA V100 32GB GPUs and costs ~$31.22/h. We would need two of these instances to match the setup DeepSpeed reported. For now we are ignoring any overhead introduced by the multi-node setup (I/O, network, etc.).

This would bring the cost of the DeepSpeed GPU-based training on AWS to around $2,075, which is 25% more than what Habana Gaudi currently delivers.

Something to note here is that using DeepSpeed usually improves performance by a factor of ~1.5-2. This means that the same pre-training job without DeepSpeed would likely take 1.5 to 2 times as long and cost 1.5 to 2 times as much, or ~$3-4k.
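The same kind of check works for the GPU comparison. It uses the p3dn.24xlarge price, the 33.25 hours reported by DeepSpeed, and the ~$1,650 Gaudi estimate from above. Like the text, it ignores multi-node overhead.

```python
# numbers taken from this post
p3dn_hourly = 31.22     # $/h for one p3dn.24xlarge (8x V100 32GB)
instances = 2           # two instances to match one DGX-2 (16x V100)
deepspeed_hours = 33.25 # DeepSpeed's reported BERT pre-training time
gaudi_cost = 1_650      # estimated full pre-training cost on Gaudi, in $

gpu_cost = instances * p3dn_hourly * deepspeed_hours
extra = gpu_cost / gaudi_cost - 1      # how much more the GPU run costs
savings = 1 - gaudi_cost / gpu_cost    # the same gap seen from the Gaudi side
# DeepSpeed speedup of ~1.5-2x, so a run without it costs roughly this much
no_ds_low, no_ds_high = gpu_cost * 1.5, gpu_cost * 2.0

print(round(gpu_cost), f"{extra:.0%}", f"{savings:.0%}", round(no_ds_low), round(no_ds_high))
```

The GPU run costs roughly 25% more than Gaudi. Expressed as a saving from the Gaudi side, the same gap is roughly 20%.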

We are looking forward to repeating the experiment once the Gaudi DeepSpeed integration is more widely available.

Conclusion

That's it for this tutorial. Now you know the basics of how to pre-train BERT from scratch using Hugging Face Transformers and Habana Gaudi. You also saw how easy it is to migrate from the Trainer to the GaudiTrainer.

We compared our implementation with the fastest BERT pre-training results and saw that Habana Gaudi still delivers a cost reduction of roughly 20% (equivalently, the GPU-based run costs ~25% more) and allows us to pre-train BERT for ~$1,650.

These results are impressive, since they would allow companies to adapt their pre-trained models to their language and domain to improve accuracy by up to 10% compared to general BERT models.

If you are interested in training your own BERT or other Transformers models from scratch to reduce cost and improve accuracy, contact our experts to learn about our Expert Acceleration Program. To learn more about Habana solutions, read about our partnership and how to contact them.

Code: pre-training-bert.ipynb

Thanks for reading! If you have any questions, feel free to contact me through GitHub or on the forum. You can also connect with me on Twitter or LinkedIn.