The number of AI Officers tripled between 2019 and 2024, according to LinkedIn data. Roughly half of the largest companies in countries like the UK have now appointed a CAIO. The goal is straightforward: speed up growth and reduce costs with AI.

The impact of AI on the largest companies in the world is unquestionable. Companies like Atlassian have let go of hundreds of employees (the stock is down 50% in the last 12 months). Block did the same, and generally speaking vanilla SaaS stocks are suffering because of the perceived risk of AI making it easier to build alternatives.

Meanwhile, developer productivity tools such as Claude Code are taking the world by storm. Claude Code crossed $1bn in revenue in December 2025, equivalent to 10,000 companies spending $100,000 on average, or about a quarter of Databricks/Snowflake’s revenues.

In this guide we’ll outline a framework for evaluating the various avenues Chief Data and AI Officers have for advancing AI in their companies.

Understanding the goals of the business and the likeness of AI to automation as a whole is critical. Opportunity cost is also fundamental: AI allows companies that would always have been “too slow” or “too inefficient” to break through this glass ceiling and reinvent themselves.

In this article we’ll lay out an evaluation framework for CDAOs to understand the opportunity in their organisations. The framework categorizes the opportunity into different productivity areas. The article also covers cost, timing, and opportunity cost considerations when evaluating AI initiatives.

The second part of the article focuses on real-world examples of AI evaluated within this framework, as well as Data Team-specific examples based on interviews with hundreds of data professionals in the past 12 months.

By the end of the article, you will have a clear framework for assessing the potential impact of AI in your organisation, practical next steps, and clear examples of where AI is significantly benefiting companies and data teams.

Section 1: AI Evaluation Framework

What AI Enables: Automation and Productivity

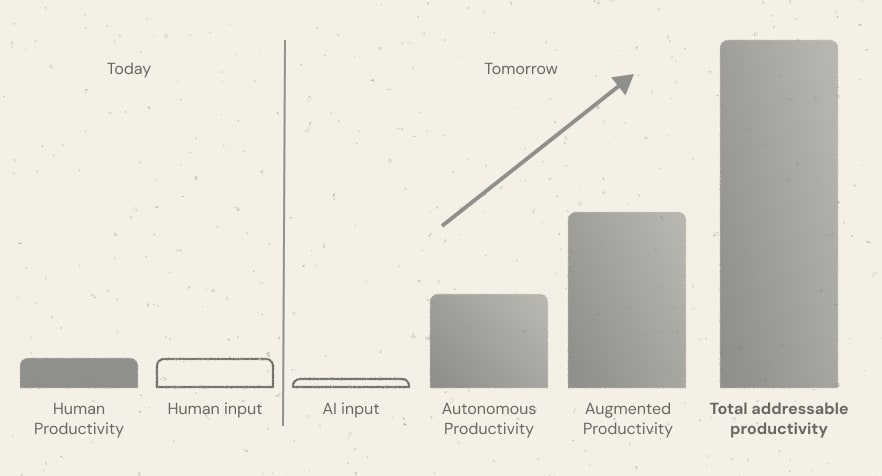

We define seven key metrics of productivity for AI and Data Officers to determine:

- Human Productivity: the total amount of output currently produced by the workforce

- Human input: the cost required to achieve the current level of Human Productivity

- AI input: the cost required to close the full Productivity Gap

- Autonomous Productivity: the amount of work that can be reliably carried out by agents or automations

- Human-automatable Productivity: the amount of work that the workforce could do with AI

- Total Addressable Productivity (“TAP”) and Productivity Gap: TAP = Autonomous work + Human-automatable work. Productivity Gap = TAP – Human Productivity

- ROI Gap: (TAP / AI input) – 1. A measure of the increase in productivity AI can facilitate

Examples

- A call centre company running 100,000 calls a year could feasibly automate all of these with AI; therefore the autonomous work would be roughly equal to the Human Work. The Human-automatable Productivity is minimal, but with some AI there is maybe a 20% uplift. The TAP is therefore about 1.2 × Human Productivity, and the Productivity Gap about 0.2 × Human Productivity. The AI input is significantly lower than the human input because of the reduced number of staff required to take calls.

- A software engineering company with 100 developers has a 10-person SRE team. The SRE process can be automated with AI agents by 50%. This reduces the AI input by 5%. The Autonomous Productivity makes up the shortfall in Human Productivity.

- Developers become 100% more productive with tools like Claude Code. The Augmented Productivity is equivalent to having another 95 developers

- The TAP is roughly double the Human Productivity
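The arithmetic behind the software company example can be sketched in a few lines of Python. This is only an illustration, not a definitive model: it measures productivity in FTE-equivalents and assumes the 10 SREs are counted within the 100 developers. The function and key names are invented for the example.

```python
def software_company_tap(developers: int, sre_team: int,
                         sre_automation: float, uplift: float) -> dict:
    """Return FTE-equivalent productivity figures for the example above.

    Assumption: the SRE team is part of the developer headcount, and the
    uplift applies to everyone whose work is not handed over to agents.
    """
    autonomous = sre_team * sre_automation        # work handed to agents
    remaining_humans = developers - autonomous    # people still doing the work
    augmented = remaining_humans * (1 + uplift)   # their output with AI tools
    return {
        "autonomous_fte": autonomous,
        "augmented_fte": augmented,
        "extra_developer_equivalents": augmented - remaining_humans,
        "tap_fte": autonomous + augmented,
    }


result = software_company_tap(developers=100, sre_team=10,
                              sre_automation=0.5, uplift=1.0)
print(result)
```

Under these assumptions the TAP comes out at 195 FTE-equivalents against a starting point of 100, which is where "roughly double" comes from.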

Autonomous Productivity is very similar to automation. With automation, there is always an opportunity cost. In principle most things can be automated, but what makes AI different is that there are now more things that can be automated faster and more cheaply. AI isn’t a panacea for every form of automation, however.

Augmented Productivity fits nicely into AI use-cases like coding assistants. Much of Anthropic’s success is due to making good on its promise to make developers faster and more efficient.

AI Input also includes the cost of AI Credits.

AI Constraints: opportunity costs and time

Implementing AI inevitably incurs opportunity cost. Companies may not be able to implement AI in the short term because it requires an investment and a reallocation of headcount. If you’re reading this, you’re likely the result of that trade-off: rather than repurposing existing resources, companies can introduce new headcount to tackle AI implementation.

There’s an opportunity cost of implementing now. Companies undergoing significant transformation activities or corporate activity may not be able to spare additional resources for AI and automation initiatives.

The second component is time: reaching a steady state where the entire AI input and TAP are realised will take time. For small companies, this duration may be short. For large multinational enterprises, a radical change in the way things are done will inevitably take longer, as historical patterns are modified and existing customer SLAs force the standard of AI implementation to be much higher.

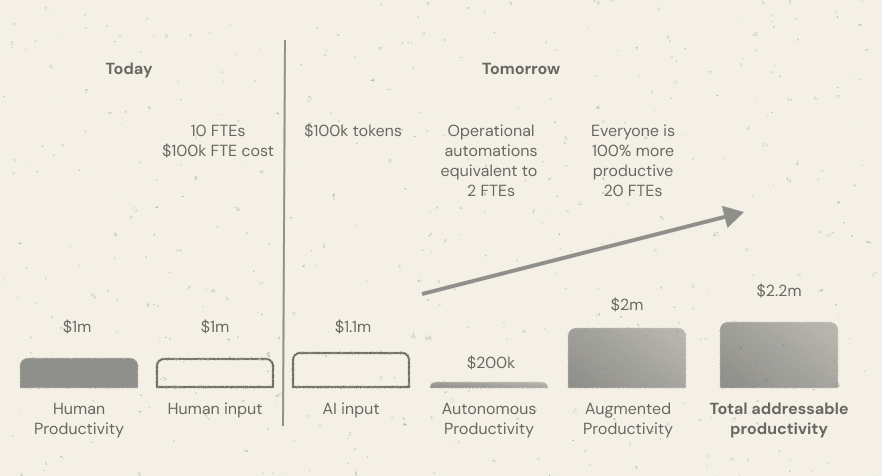

Here is an example for a small software company.

- The company employs 10 FTEs at $100k cost each

- The company spends $100k on tokens

- Automations / autonomous agents automate key operational activities that would have taken 2 FTEs

- Everybody in the company is writing code, so everyone ships twice as much

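- Valuing output at labour cost, the TAP is $2.2m ($2m of augmented output plus $0.2m of autonomous work). The AI input is $1.1m ($1m of labour plus $100k of tokens) and the Productivity Gap is $1.2m ($2.2m less the $1m of current Human Productivity). The ROI is $2.2m / $1.1m – 1 = 100%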

This assumes an instant implementation time and essentially zero opportunity cost of implementation. In reality, leveraging Claude Code or similar tools for complex software development or data engineering use-cases won’t be quick.
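To see how much the "instant implementation" assumption matters, here is a rough Python sketch of the small software company example. It values output at labour cost and, purely as an assumption for illustration, lets productivity ramp linearly from today's level to the TAP over a chosen number of months while the full AI input is paid from day one. All names are invented for the example.

```python
FTE_COST = 100_000
HEADCOUNT = 10
TOKEN_SPEND = 100_000

human_productivity = HEADCOUNT * FTE_COST   # output valued at labour cost (assumption)
autonomous = 2 * FTE_COST                   # automations replacing 2 FTEs of work
augmented = 2 * human_productivity          # everyone ships twice as much
tap = autonomous + augmented
ai_input = HEADCOUNT * FTE_COST + TOKEN_SPEND


def roi(output: float, cost: float) -> float:
    """ROI as defined in the framework: output / cost - 1."""
    return output / cost - 1


def first_year_output(months_to_steady_state: int) -> float:
    """Output over 12 months with a linear ramp towards the TAP (assumed shape)."""
    total = 0.0
    for month in range(1, 13):
        if months_to_steady_state <= 0:
            progress = 1.0
        else:
            progress = min(1.0, month / months_to_steady_state)
        total += (human_productivity + (tap - human_productivity) * progress) / 12
    return total


steady_state_roi = roi(tap, ai_input)
ramped_roi = roi(first_year_output(12), ai_input)
print(f"steady state: {steady_state_roi:.0%}, 12-month ramp: {ramped_roi:.0%}")
```

With an instant rollout the ROI is 100%; with a 12-month linear ramp the first-year figure falls to roughly 50%, before any opportunity cost is counted.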

Summary

In this section we outlined a simple framework for evaluating the potential uplift from AI. We saw that there are two main areas of benefit: Autonomous Productivity and Augmented Productivity. Autonomous Productivity relates to processes that take up human time and could be fully automated with agents. Augmented Productivity relates to work that still requires humans to action, such as writing code.

We saw that implementation times and the opportunity costs of implementation are major factors when considering whether or not to implement AI. This framework doesn’t have to be AI-specific, but what is different about AI is that the scale of the benefits and costs can differ from regular automation initiatives.

ROI can be driven by both Total Addressable Productivity and AI Input. In some industries, you may be under more of a cost-reduction mandate. In others, hopefully most, Chief Data and AI Officers should look to understand how existing resources can be repurposed to achieve greater levels of productivity.

This means that, in general, AI is unlikely to result in a reduction in inputs but rather an increase in output, and therefore growth.

This framework is simple and has inherent limitations. The nature of work, makeup of labour, company goals, company activities, and market forces could all impact the quantum and feasibility of the TAP.

One interesting upside to consider is the value of achieving the goals of Autonomous Productivity and Augmented Productivity combined. The value of the former is roughly unbounded. The value of the latter is labour-constrained, but enables speed. A company that, in a year, can move twice as fast as it used to and do three times as much potentially drives growth in other areas.

For example, a supermarket chain looking to aggressively expand and win market share could gain a clear external benefit from implementing AI if it allows the chain to open stores faster than it would otherwise have done, especially if this materialises to a greater extent than it does for its competitors.

In the sections that follow, we will discuss different tools and approaches for Autonomous Productivity and Augmented Productivity.

Section 2: Autonomous Productivity

What’s Autonomous Productivity?

Autonomous Productivity is the amount of work that can be reliably carried out by agents or automations without human involvement.

Automation has a deep history with repeatable patterns. The introduction of machinery provided the first wave of automation of jobs, which was in turn followed by other phases like the industrial revolution and then, of course, software automation.

We are now entering a phase of AI automation. This is characterised by massive productivity gains for individuals, as they offload parts of their role entirely to AI. It is also characterised by massive extensions of capability: companies no longer have to trade off which resources they need, because they can just have an AI agent for each function.

Examples of Autonomous Productivity

Things companies can automate:

- Customer support resolution – AI agents answering tickets, troubleshooting issues, and escalating only edge cases.

- Lead qualification and outreach – automated prospect research, cold email generation, and follow-ups.

- Content production – blog drafts, SEO research, social posts, and newsletter generation.

- Data analysis and reporting – automated dashboards, anomaly detection, and weekly business reports.

- Software testing and QA – agents running tests, identifying regressions, and suggesting fixes.

- Internal documentation – generating and maintaining SOPs, onboarding materials, and knowledge bases.

- Meeting summaries and action tracking – capturing notes, assigning tasks, and following up automatically.

- Market research – scanning competitors, summarizing trends, and generating insights.

- Recruiting workflows – screening resumes, scheduling interviews, and initial candidate outreach.

- Financial operations – invoice processing, expense categorization, and basic financial reporting.

Examples of Greater Capability

Roles companies can hire that they couldn’t before:

- 24/7 Customer Experience Manager – an AI agent dedicated to maintaining quick support coverage globally.

- Market Intelligence Analyst – continuously monitoring competitors, pricing changes, and industry signals.

- Growth Experimentation Manager – running dozens of marketing and product experiments concurrently.

- Internal Knowledge Curator – maintaining living documentation and surfacing relevant knowledge to teams.

- Product Feedback Analyst – processing hundreds of customer comments, reviews, and tickets into insights.

- SEO Researcher – continually identifying new keyword opportunities and content gaps.

- Sales Development Representative (SDR) – performing personalized prospecting at massive scale.

- Operational Efficiency Auditor – monitoring workflows and recommending automation opportunities.

- Compliance Monitoring Officer – continuously scanning processes for regulatory or policy risks.

- Strategic Scenario Analyst – modeling business scenarios and producing decision support reports.

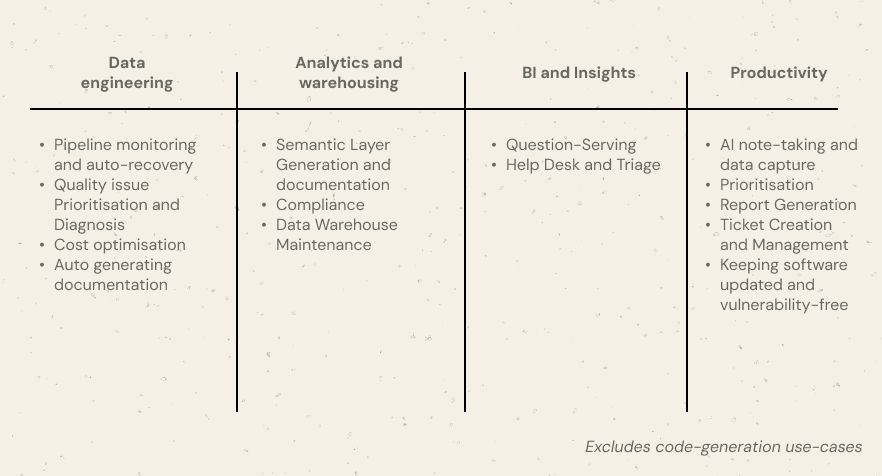

Autonomous Productivity for AI and Data Teams

We’ve spoken to hundreds of Data Teams and identified the top areas where people are looking at AI to enable automations. These areas are listed below, and we will follow up with actual survey data.

Data Engineering Use-cases

- Pipeline monitoring and auto-recovery – detecting failed jobs, retrying tasks, triggering fallbacks, and notifying only when escalation is required.

- Quality issue prioritisation and diagnosis – identifying the most pressing quality issues and prioritising these

- Cost optimisation – detecting inefficient jobs and automatically rescheduling or scaling resources. Companies like Alvin and Espresso AI have made huge strides in this space

- Auto-generating documentation – a real gripe for engineers is maintaining documentation. Generating architecture diagrams and self-updating documentation can be fully automated with AI
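The first item in the list above, pipeline monitoring and auto-recovery, follows a familiar pattern: retry, fall back, and only then notify a human. A minimal Python sketch of that pattern follows. It is not tied to any orchestrator; `run_with_recovery` and its parameters are hypothetical names chosen for illustration, and a real agent would add diagnosis between attempts.

```python
import time
from typing import Any, Callable, Optional


def run_with_recovery(
    task: Callable[[], Any],
    fallback: Optional[Callable[[], Any]] = None,
    max_retries: int = 3,
    backoff_seconds: float = 0.0,
    notify: Callable[[str], None] = print,
) -> Any:
    """Retry a failing task, try a fallback, and escalate only as a last resort."""
    last_error: Optional[Exception] = None

    # 1. Retry the task itself, waiting a little longer after each failure.
    for attempt in range(1, max_retries + 1):
        try:
            return task()
        except Exception as exc:
            last_error = exc
            time.sleep(backoff_seconds * attempt)

    # 2. Trigger the fallback (for example, serving yesterday's snapshot).
    if fallback is not None:
        try:
            return fallback()
        except Exception as exc:
            last_error = exc

    # 3. Nothing worked: this is the only point where a human is notified.
    notify(f"Escalation needed: {last_error!r}")
    return None
```

The design choice worth noting is that `notify` is called in exactly one place, so people are interrupted only when automated recovery has run out of options.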

Data Warehousing and Analytics Engineering use-cases

All of the Data Engineering use-cases, plus:

- Semantic layer generation and documentation – agents can generate entire semantic layers fairly easily while also keeping these in sync. When combined with other knowledge bases, the process can be fully automated. AI without context will, of course, generate bad semantic layers.

- PII and GDPR compliance – classical automation keeping warehouses in line with PII and GDPR compliance, e.g. customer deletion requests

- Data warehouse maintenance – AI agents that can archive data, delete redundant fields, and identify inconsistent definitions

Analytics and Insights use-cases

- Query serving and Text-to-SQL: assistants like Snowflake Cortex and Databricks Genie allow business users to easily self-serve requests instead of relying on a centralised data team (“Silo Trap”)

- Service Desk and Triage: where stakeholders have questions around processes, they may require more granular interaction with an AI agent that can serve requests that are not data-specific

General operational use-cases

- AI note-taking and data capture

- Prioritisation

- Report generation (non KPI-specific, such as an internal report or incident management report that has to be generated every [quarter])

- Ticket Creation and Management

- Keeping track of new versions / patches / vulnerabilities of dependent software packages

Summary

The vast majority of Autonomous Productivity avenues for AI and data teams centre around process. Typically, many processes involving data teams require human input and are, therefore, poor candidates for Autonomous Productivity.

However, this changes when processes change.

For example, consider a scenario where a single-person data team has accumulated a huge amount of tribal knowledge around data and architecture. Typically, that person would be a huge bottleneck for the business and for stakeholders looking to answer basic questions.

The process doesn’t need to be uniform for all types of query. A system of triage, where an AI agent is used to identify and answer basic questions but the single-person data team is called upon for the top 1% of queries, would represent a meaningful step in advancing Autonomous Productivity.
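The triage idea can be sketched as a simple routing rule. The Python below is an illustration only: `keyword_agent` is a toy stand-in for a real AI agent, the knowledge entries are made up, and using a confidence threshold to decide what counts as a hard query is an assumption rather than something the interviews prescribed.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# Hypothetical knowledge the agent has been given (illustrative content only).
KNOWLEDGE = {
    "revenue": "Revenue is defined in the finance mart, table fct_revenue.",
    "refresh": "Dashboards refresh daily at 06:00 UTC.",
}


@dataclass
class TriageResult:
    question: str
    handled_by: str            # "agent" or "human"
    answer: Optional[str]


def keyword_agent(question: str) -> Tuple[Optional[str], float]:
    """Toy agent: returns (answer, confidence) based on keyword matches."""
    for keyword, answer in KNOWLEDGE.items():
        if keyword in question.lower():
            return answer, 0.9
    return None, 0.1


def triage(question: str,
           agent: Callable[[str], Tuple[Optional[str], float]] = keyword_agent,
           confidence_threshold: float = 0.8) -> TriageResult:
    """Let the agent answer when it is confident; otherwise escalate to a person."""
    answer, confidence = agent(question)
    if answer is not None and confidence >= confidence_threshold:
        return TriageResult(question, "agent", answer)
    return TriageResult(question, "human", None)


print(triage("When do dashboards refresh?"))
print(triage("Why did churn spike in the Nordics last quarter?"))
```

In practice the threshold would be tuned so that only the small share of genuinely hard questions reaches the human.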

Similarly, when an incident arises, Data Teams often have to produce incident reports manually. This could become an automated workflow where something like an Orchestra Agent Pipeline is run with an incident or ticket ID, and the agent subsequently creates the incident report and stores it in

This report doesn’t include an analysis of the options for Autonomous Productivity outside of Data and AI Teams, because the list of things Chief Data and AI Officers could begin to automate is almost infinitely long.

It will be critical for CDAIOs to identify those areas of Autonomous Productivity in their business with the greatest uplift and the shortest implementation times.

Section 3: Augmented Productivity

What’s Augmented Productivity?

Augmented Productivity refers to work that AI can significantly speed up but cannot fully replace. These activities still require human judgment, creativity, or accountability, but AI can dramatically reduce the time required to finish them.

Rather than replacing roles entirely, AI acts as a force multiplier. Individuals can move faster, test more ideas, and operate at a level of output that previously required larger teams.

While Autonomous Productivity increases capability through automation, Augmented Productivity increases the effectiveness of human staff.

Examples include writing software with AI assistance, generating analysis faster, or drafting documents that humans refine and finalize.

Examples of Augmented Productivity

Government & Legal

- Document review in government bureaucracies – civil servants using AI to summarize long regulatory filings, draft legislation, and policy documents before making decisions.

- Legal research for lawyers – AI surfacing case law, summarizing precedents, and outlining arguments that attorneys refine.

- Contract review and drafting – AI flagging risks, inconsistencies, or missing clauses while lawyers approve final language.

- Public consultation analysis – AI clustering hundreds of citizen responses and summarizing key concerns for policy teams.

Marketing & SEO

- SEO managers scaling content production – AI generating keyword clusters, briefs, outlines, and draft articles while humans edit and publish.

- Competitor monitoring – AI continuously scanning competitor sites and surfacing changes in pricing, positioning, or content strategy.

- Ad campaign iteration – marketers generating dozens of ad variants, testing messaging, and refining strategy faster.

- Content repurposing – turning one piece of content into newsletters, social posts, and video scripts.

Product & Startup Teams

- Product managers writing specs faster – AI drafting product requirement documents and user stories from rough ideas.

- Customer feedback synthesis – summarizing hundreds of support tickets or reviews into product insights.

- Experiment ideation – generating growth experiments or product improvements based on user data and feedback.

- Investor communication preparation – drafting updates, board reports, and fundraising materials.

Sales & Business Development

- Sales outreach personalization – AI drafting tailored messages based on prospect research that sales reps review before sending.

- Account research – summarizing company news, org structures, and potential buying signals for sales teams.

- Proposal drafting – generating first drafts of RFP responses and client proposals.

- Deal preparation – summarizing previous conversations, stakeholder information, and contract details.

Operations & Internal Teams

- HR teams screening resumes faster – AI summarizing candidate profiles before human review.

- Meeting preparation – AI compiling context, previous decisions, and relevant documents before discussions.

- Internal knowledge search – employees asking AI questions on internal policies, docs, and systems.

- Report writing – AI drafting operational reports or summaries that managers finalize.

Creative & Media

- Video editing workflows – AI generating rough cuts, transcripts, and highlight segments that editors refine.

- Design ideation – generating visual concepts or layouts that designers evolve.

- Script writing assistance – drafting outlines or dialogue that writers edit.

These examples give Chief Data and AI Officers some ideas for thinking about how their role can impact the business in a positive way using AI. CDAIOs should ensure they don’t fall into the trap of thinking “just about data”: AI may benefit certain kinds of business, and AI implementation may not have anything to do with data at all.

Augmented Productivity for AI and Data Teams

Based on conversations with hundreds of data and software professionals, below is a list of things AI can augment but not fully automate. For the most part, these relate to code-generation use-cases.

- Software development – engineers using AI to draft functions, troubleshoot errors, and explore implementation approaches faster.

- Data analysis and exploration – analysts accelerating exploratory analysis, SQL writing, and dataset understanding with AI assistance.

- Technical documentation writing – generating drafts of architecture explanations, system documentation, and onboarding guides that engineers refine.

- Product development planning – AI helping structure feature proposals, product specs, and requirement documents.

- Research and strategy work – synthesizing industry information and generating first-pass strategic analysis.

- Documentation creation and editing – drafting blog posts, reports, or newsletters that humans refine for voice and accuracy.

- Code reviews and debugging support – AI identifying potential issues and suggesting fixes while humans make final decisions.

- Data modeling and architecture design – AI proposing schema ideas, transformations, or modeling approaches for human validation.

- Experiment design and evaluation – generating hypotheses, structuring tests, and assisting interpretation of results.

- Presentation and communication preparation – drafting slide outlines, executive summaries, and reports that humans refine.

Given the technical nature of the work for Data and AI Teams, incorporating AI and automation into processes would seem of fundamental importance in 2026.

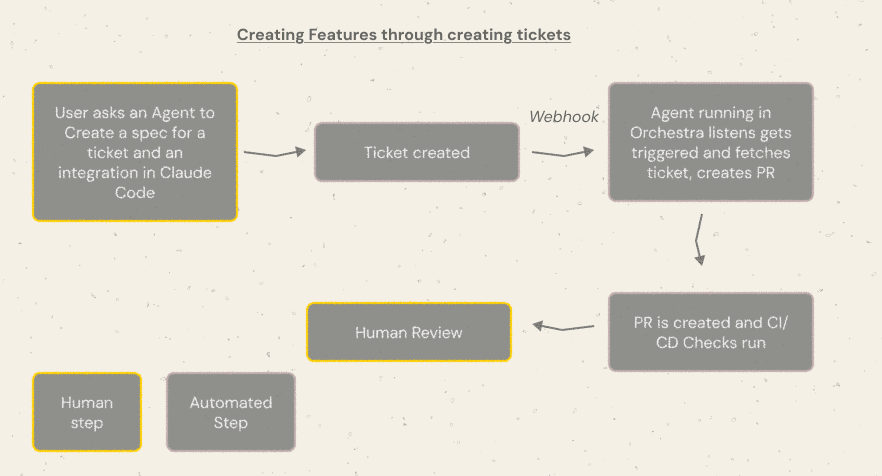

Digging Deeper: example code-generation workflow

This code-generation workflow outlines how a user can create a process whereby a Data Engineer simply asks a local agent to create a ticket. For example, the Data Engineer might say:

“Create a Ticket that includes a spec for the following request: “Create a data pipeline per my company’s standards that leverages dlt and Orchestra to load data from an api

and fetches the following objects . Make sure that pagination and incrementality are handled where possible. Make sure the entrypoint to the functions can take parameters such as the object name, the start date and end date for the data, and any other relevant filters””
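To make the request concrete, here is a sketch of the kind of parameterised entrypoint such a ticket describes. It is plain Python rather than actual dlt or Orchestra code: `fetch_page` is a hypothetical stand-in for an API client, the `updated_at` field and cursor-style pagination are assumptions, and a real pipeline would normally push the date filter to the API instead of filtering client-side.

```python
from datetime import date
from typing import Any, Callable, Dict, Iterator, List, Optional, Tuple

Record = Dict[str, Any]
# A page is (records, next_cursor); next_cursor is None on the last page.
Page = Tuple[List[Record], Optional[str]]
FetchPage = Callable[[str, Optional[str], Dict[str, Any]], Page]


def load_object(
    fetch_page: FetchPage,
    object_name: str,
    start_date: date,
    end_date: date,
    filters: Optional[Dict[str, Any]] = None,
) -> Iterator[Record]:
    """Yield records for one object, following pagination until exhausted.

    Incrementality is approximated by keeping only records whose
    'updated_at' (assumed ISO date string) falls inside the window.
    """
    cursor: Optional[str] = None
    while True:
        records, cursor = fetch_page(object_name, cursor, filters or {})
        for record in records:
            updated = date.fromisoformat(record["updated_at"])
            if start_date <= updated <= end_date:
                yield record
        if cursor is None:
            break
```

An agent asked to follow "company standards" would be expected to produce something with this shape: one entrypoint, explicit parameters, and no hidden state between runs.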

Following ticket creation, a webhook is fired to an agent playground such as Orchestra. The agent playground runs the agent, which creates a PR. The agent must first be calibrated and tested locally before it can go into production and be fully reliable. Once the PR is created, it triggers CI and CD checks. Ideally these also trigger agentic workflows, which can in turn auto-fix the PR. Finally, there is a human review step.

This means that Data and AI Teams’ focus shifts from

To

- Ability to teach AI to write code how you want it

- Ability to write good tickets

- Ability to review PRs quickly

An interesting observation from the community is that the domain you’re working in matters for AI and data. For example, in the React / front-end development area, there is a large amount of below-average code available on the internet. AI generally struggles to write good code in this domain.

The reality for data professionals may be similar. Many companies have their own way, rightly or wrongly, of coding data pipelines. Company-specific quirks should be avoided at all costs, as they present a significant barrier to automation and its benefits.

Consider a company that has decided to fork dbt, such as Monzo, the UK’s largest neobank. Monzo employs around 100 analytics engineers and has a relatively complex and niche dbt set-up. It would be much harder to teach AI to code “like a Monzo analyst” than to teach AI to write good, standard dbt-core code.

If processes are too niche to be automated, this presents a real problem for CDAIOs. Data leaders should quickly identify whether processes are too niche and entrenched to be automated. Like any automation, AI struggles when clear targets are not defined or processes don’t exist, since there are no “common paths” for it to follow. Incident resolution is a good example, where the “data person” typically solves issues through a multitude of channels (email, Slack, in-person etc.), in a multitude of ways.

Section 4: AI inputs

What are AI Inputs?

AI Inputs refer to the total cost required to produce output using AI systems.

Where productivity frameworks typically measure how much output is produced, AI Inputs focus on the resources required to generate that output.

In practice, AI Inputs are the combination of two main components:

- Human labor required to operate AI systems

- Compute costs required to run AI models

Together, these form the true marginal cost of AI-driven work.

Even when AI performs a task autonomously, there is always an input cost: prompting systems, monitoring outputs, validating results, and maintaining infrastructure.

AI Inputs therefore represent the total economic cost of getting AI to do useful work.

The Two Core Components of AI Inputs

Labor Inputs

Even highly autonomous systems require human involvement. This can include:

- Prompt engineering and workflow design

- Supervising outputs and validating results

- Integrating AI into existing systems

- Managing AI infrastructure and agents

- Maintaining datasets, APIs, and integrations

For many companies today, labor remains the largest AI input cost, particularly during early implementation. There is no more valuable commodity than time.

Token and Compute Inputs

AI systems also incur direct computational costs.

These include:

- Tokens consumed when generating text, code, or evaluation

- Compute used for inference and model execution

- Storage and infrastructure costs for AI pipelines

- API costs for external AI services

While token costs continue to fall rapidly, they still represent a real operational input to AI-driven workflows.

Implementation Costs

A third category of AI Inputs relates to the cost of implementing AI within an organization.

Unlike ongoing labor or token costs, these are typically upfront investments.

These can include:

- Building internal AI infrastructure

- Purchasing enterprise AI tools

- Integrating AI into internal systems

- Training employees to use AI effectively

- Designing new workflows around AI agents

For many organizations, these implementation costs represent the largest barrier to AI adoption, even when the long-term productivity gains are clear.
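Putting the three categories together, a rough annual AI Input figure could be computed as in the Python sketch below. Every number is a made-up placeholder, and spreading the upfront implementation cost evenly over a few years is an assumption of this sketch, not part of the framework.

```python
from dataclasses import dataclass


@dataclass
class AIInputs:
    labour_cost: float               # annual cost of people operating AI systems
    tokens_used: int                 # tokens consumed per year
    price_per_million_tokens: float  # blended price, illustrative only
    implementation_cost: float       # one-off upfront investment
    amortisation_years: int = 3      # assumed write-off period

    @property
    def token_cost(self) -> float:
        return self.tokens_used / 1_000_000 * self.price_per_million_tokens

    @property
    def annual_implementation_cost(self) -> float:
        return self.implementation_cost / self.amortisation_years

    @property
    def annual_total(self) -> float:
        return self.labour_cost + self.token_cost + self.annual_implementation_cost


inputs = AIInputs(
    labour_cost=200_000,
    tokens_used=2_000_000_000,
    price_per_million_tokens=10.0,
    implementation_cost=90_000,
)
print(inputs.token_cost, inputs.annual_implementation_cost, inputs.annual_total)
```

In this made-up case labour dominates the total, which matches the observation that labor is often the largest AI input early on.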

Examples of AI Inputs

These build on the examples in previous sections, drawing attention to the impact of AI on labour and the associated token costs.

Government & Legal

- Document review in government bureaucracies

Reviewing long regulatory filings used to require hours of civil servant time. AI can summarize hundreds of pages in seconds. Labour shifts from reading documents to reviewing summaries. Token costs increase with long documents and large consultation submissions.

- Legal research

Lawyers historically spent hours looking for relevant case law. AI can scan large legal databases quickly. Labour moves toward validating arguments and refining strategy. Token costs grow with the size of legal corpora and the complexity of research queries.

- Contract review

Entire contracts can be analyzed by AI to flag risks and inconsistencies. Labour drops from full manual review to targeted verification. Token consumption rises with large legal documents and repeated review iterations.

- Public consultation analysis

Governments processing hundreds of citizen responses previously required large teams of analysts. AI can cluster and summarize responses rapidly. Labour shifts toward interpreting results. Token costs scale directly with the number of responses.

Marketing & SEO

- SEO content production

Writing long-form content once required multiple writers. AI can generate outlines and drafts quickly. Labour shifts toward editing and quality control. Token usage increases with article length and the number of drafts generated.

- Competitor monitoring

Marketing teams previously spent hours reviewing competitor sites and industry news. AI can scan and summarize this continuously. Labour drops to reviewing alerts. Token costs grow with the frequency of monitoring and the number of sources analyzed.

- Ad campaign generation

Marketers can generate dozens of ad variations immediately. Labour shifts from writing to choosing and refining the best options. Token costs increase with the number of variations generated.

- Content repurposing

A single piece of content can be transformed into multiple formats. Labour moves from creation to review. Token consumption grows with the number of transformations requested.

Product & Startup Teams

- Product specification drafting

Writing detailed product specs once required long drafting cycles. AI can produce first drafts immediately. Labour shifts to refining requirements and validating edge cases. Token costs increase with the length and complexity of specifications.

- Customer feedback synthesis

Product teams previously read through hundreds of support tickets and reviews. AI can summarize and cluster this feedback quickly. Labour focuses on deciding what to build. Token usage grows with the size of the feedback dataset.

- Experiment ideation

Generating product experiments or growth ideas can now be accelerated with AI. Labour shifts to prioritization and execution. Token costs remain relatively low compared with other use cases.

- Investor communication preparation

AI can draft investor updates and board reports from internal data. Labour focuses on refining the narrative and ensuring accuracy. Token usage increases with the size of reports and the historical context provided.

Sales & Business Development

- Sales outreach personalization

Sales teams can generate personalized outreach messages at scale. Labour shifts from writing messages to reviewing them. Token costs increase with the number of prospects targeted.

- Account research

AI can summarize company news, hiring signals, and organizational structure. Labour drops from manual research to reviewing summaries. Token costs increase with the number of accounts monitored.

- Proposal drafting

RFP responses and proposals can be generated quickly. Labour shifts toward customization and relationship building. Token consumption grows with document length and the number of proposals generated.

- Deal preparation

AI can summarize past conversations and account history. Labour moves toward negotiation strategy. Token costs increase with long email threads and meeting transcripts.

Operations & Internal Teams

- Resume screening

HR teams can summarize candidate profiles immediately. Labour shifts toward evaluating shortlisted candidates. Token costs scale with hiring volume and resume length.

- Meeting preparation

AI can analyze previous meeting notes, documents, and emails. Labour shifts to decision-making. Token consumption increases with the amount of historical context provided.

- Internal knowledge search

Employees can query large internal documentation sets using AI assistants. Labour shifts from searching to applying answers. Token costs increase with the size of the knowledge base.

- Operational report drafting

Reports that once required hours of manual writing can be generated quickly. Labour moves toward validation and interpretation. Token usage grows with report length and the number of data sources included.

AI Inputs for Data Teams

The impact of AI on AI Inputs appears to vary significantly. Anecdotal evidence suggests that companies in “defensive” positions, aiming to minimise costs while keeping revenues steady, are looking to reduce headcount while keeping output fixed.

Growth-stage companies such as scale-ups appear to be doing the opposite: keeping inputs fixed while attempting to maximise output via Augmented Productivity gains. This typically includes some expenditure on token costs.

Token costs vary wildly. Some developers building applications, like Pete Steinberger, the creator of OpenClaw, have racked up a $50k Codex bill in 5 months. Individual coding subscriptions vary from $20 to $100 a month.

Forecasting token usage is difficult. Corporations should work out how much spend they can allocate to AI before embarking on the journey, and prioritise initiatives based on learnings from tests and implementations.
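One way to make this concrete is to forecast a range rather than a single number, and to fund initiatives only while the worst case still fits the agreed budget. The sketch below is illustrative only: the `Initiative` fields, the token counts per item, the price of $5 per million tokens, and the $100 monthly budget are all hypothetical placeholders, not figures from any vendor or from the interviews behind this article.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    """A candidate AI use case with a rough token-usage range (all values assumed)."""
    name: str
    items_per_month: int        # e.g. resumes screened or proposals drafted
    tokens_per_item_low: int    # optimistic tokens consumed per item
    tokens_per_item_high: int   # pessimistic tokens consumed per item


def monthly_cost_range(init: Initiative, price_per_million_tokens: float) -> tuple[float, float]:
    """Return (low, high) monthly token cost for one initiative."""
    low = init.items_per_month * init.tokens_per_item_low / 1_000_000 * price_per_million_tokens
    high = init.items_per_month * init.tokens_per_item_high / 1_000_000 * price_per_million_tokens
    return low, high


def fund_within_budget(initiatives: list[Initiative],
                       price_per_million_tokens: float,
                       monthly_budget: float) -> list[str]:
    """Walk the list in priority order and fund each initiative whose
    worst-case cost still fits in the remaining budget."""
    funded = []
    remaining = monthly_budget
    for init in initiatives:
        _, worst_case = monthly_cost_range(init, price_per_million_tokens)
        if worst_case <= remaining:
            funded.append(init.name)
            remaining -= worst_case
    return funded


# Hypothetical inputs, listed in priority order.
PRICE = 5.0  # assumed blended price in dollars per million tokens
resume_screening = Initiative("resume screening", 2000, 3000, 8000)
proposal_drafting = Initiative("proposal drafting", 100, 20_000, 60_000)

resume_range = monthly_cost_range(resume_screening, PRICE)
funded = fund_within_budget([resume_screening, proposal_drafting], PRICE, monthly_budget=100.0)
print(resume_range, funded)
```

With these placeholder numbers, resume screening fits the budget on its own while proposal drafting would push the worst case over it. Replacing the assumed ranges with figures measured in a small pilot is the kind of learning from tests that should drive the prioritisation.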

Implementation costs and opportunity costs are likely to be the most significant factors for data teams. While using tools like Codex and Claude Code to write code faster is comparatively quick and low-lift, changing process is a different matter.

Un-entrenching complex processes, documenting new ones, and disseminating this knowledge within an organisation can be extremely slow and time-consuming. Moreover, with the data needs of the business ever-growing, Data Teams in particular face high opportunity costs when reallocating resources to AI implementation.

Data Teams should find appropriate times to implement AI when opportunity costs are low, and/or stay close to business leaders to understand the opportunity costs of AI. If there are significant upsides available, Data Teams should ensure this is communicated clearly and effectively to those in charge of resource prioritisation.

Summary | Good AI needs good Process

In this piece I outlined a framework for Chief Data and AI Officers to evaluate AI initiatives and to form a holistic AI strategy.

The framework focusses on productivity gains of two kinds: Autonomous and Augmented. While Autonomous Productivity is theoretically boundless, Augmented Productivity relates to step-changes in productivity for members of the existing workforce.

We also identified some risks to AI implementation, particularly around implementation time, cost, and the opportunity cost of implementing AI. Beyond the scope of this analysis were considerations around security, governance, and failed implementations. For many enterprises, data or privacy breaches could be detrimental to the business, which in turn introduces additional barriers and timing considerations for implementing AI.

We also identified some upside cases, where there may be a “Good thing about Advantages”: a bonus for realising multiple gains in productivity (and their associated consequences) at once.

Critical to both Autonomous and Augmented Productivity use cases is process. While LLMs excel at understanding unstructured data and operating in a non-deterministic environment, productivity gains stand to be largest when processes are repeatable.

For all AI’s appeal, enterprises fundamentally want reliable, accurate, and trustworthy AI. Without clean definitions and well-defined processes, simply adding an AI layer is unlikely to yield useful results.

Most enterprises should find that there’s a significant Productivity Gap. Those that find that tribal knowledge, unstructured processes and human bottlenecks also exist are in a position to bargain with the C-Suite: structure in exchange for progress. Without structure, corporations won’t capitalise on AI, will miss the “AI Boat”, and will lose to competitors.

This should come as good news, not only for Chief Data and AI Officers, but for Data Practitioners generally. A lack of consistency, an over-reliance on specific people for tribal knowledge, and undocumented processes are fundamentally the source of many issues data professionals face every day, data quality being one of them.

Corporations that are unable to build their businesses on clearly defined processes won’t succeed in implementing AI effectively. The advantage goes to those that do implement repeatable, well-documented processes, so that AI and AI Agents can begin to perform this work.

A well-known phrase in data is: “Garbage in, garbage out.” For years, the challenge hasn’t been explaining this to data teams — it’s been getting the business to care. AI may finally change that.

As corporations rush to deploy AI across every function, a new reality is becoming clear: AI is only as good as the processes behind it. Messy systems, unclear ownership, and poor data quality don’t just produce bad dashboards anymore; they produce bad decisions at machine speed.

This is why 2026 may finally be the year the CDAIO truly comes into its own. Not as a technical leader, but as a business operator responsible for securing AI foundations.

For corporations to be truly AI-driven, it’s no longer just “poor data in, poor data out.” It’s poor process in, poor intelligence out. For the first time, the entire executive team has a reason to care.