Good morning, AI enthusiasts. A week ago, Spotify’s co-CEO claimed their best devs haven’t written a “single line of code” this year, echoing a wave of execs who describe AI coding agents as the future of software.

The shift is happening; there’s little doubt about that. But bringing AI into real engineering workflows is more nuanced than flipping a switch and going on autopilot.

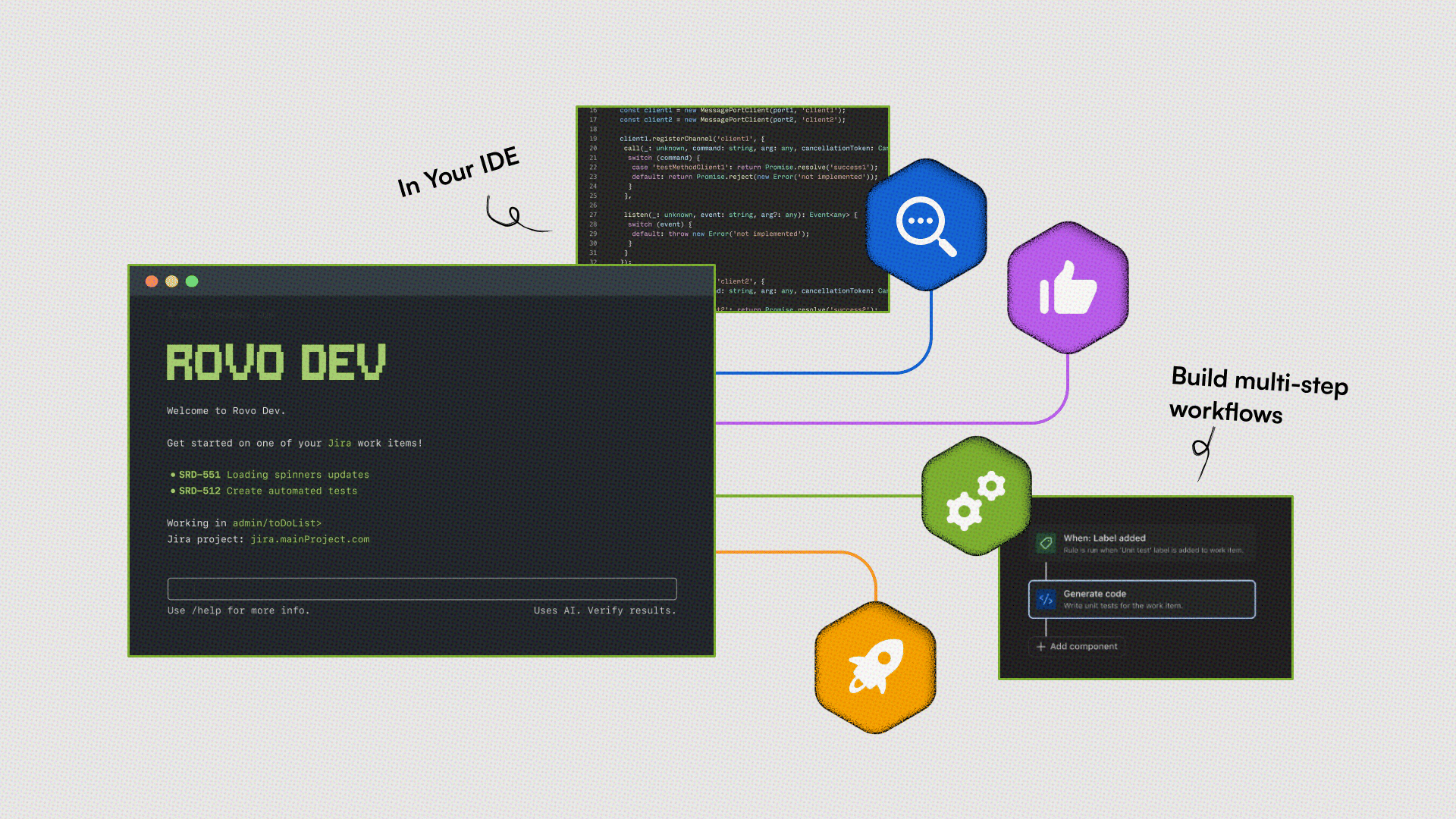

To better understand what’s changing (and what isn’t), we sat down with Rajeev Rajan, CTO of Atlassian, the company behind popular collaboration tools such as Jira, Confluence, and Loom. We got insights on their new software development agent Rovo Dev and discussed how human roles evolve in an AI-native world.

In today’s AI rundown:

- The non-negotiable cost of coding with AI

- When AI writes code, what will an engineer do?

- Building agentic AI for developer joy

- What happens when AI makes a mistake?

- Truth about the “death of SaaS” theory

- Quick hits with Rajeev

LATEST DEVELOPMENTS

WORKFLOW REDESIGN

The Rundown: As more enterprises adopt coding agents, Rajan says teams may have to revamp their workflows with both human ownership and safety systems, so that AI moves faster without sacrificing consistent, production-grade quality.

Cheung: AI is generating more code than ever, but research shows 45% of it still contains security flaws. How exactly should teams leverage AI coding agents without trading quality for speed?

Rajan: There’s an undeniable trend toward an AI-native software development lifecycle. But if you let quality slide, you’re just moving faster toward incidents and customer pain. It’s less about “AI vs. quality” and more about “how can we redesign the workflow on the assumption that AI is in the loop by default?”

In code review, we’re entrusting AI to catch bugs, enforce coding standards, and explain complex changes. For instance, Rovo Dev helped reduce PR cycle time by 45% and auto-resolved 51% of potential security vulnerabilities. The nature of review is changing here: instead of humans reading every line of a peer’s code, it’s about a human owner reviewing an agent’s work.

Rajan added: At deployment, if AI helps you generate and ship more code, your safety systems need to keep pace. Think: smaller batches, heavier CI, stronger observability, and fast rollbacks. You can’t operate in a black-box scenario.

Why it matters: Speed only becomes an advantage when the system behind it is airtight. The right approach with AI coding agents is to treat them as core enterprise infrastructure, which means designing workflows around them, building in safety from day one, and holding output to the same standards as human-written code.

ROLE SHIFT

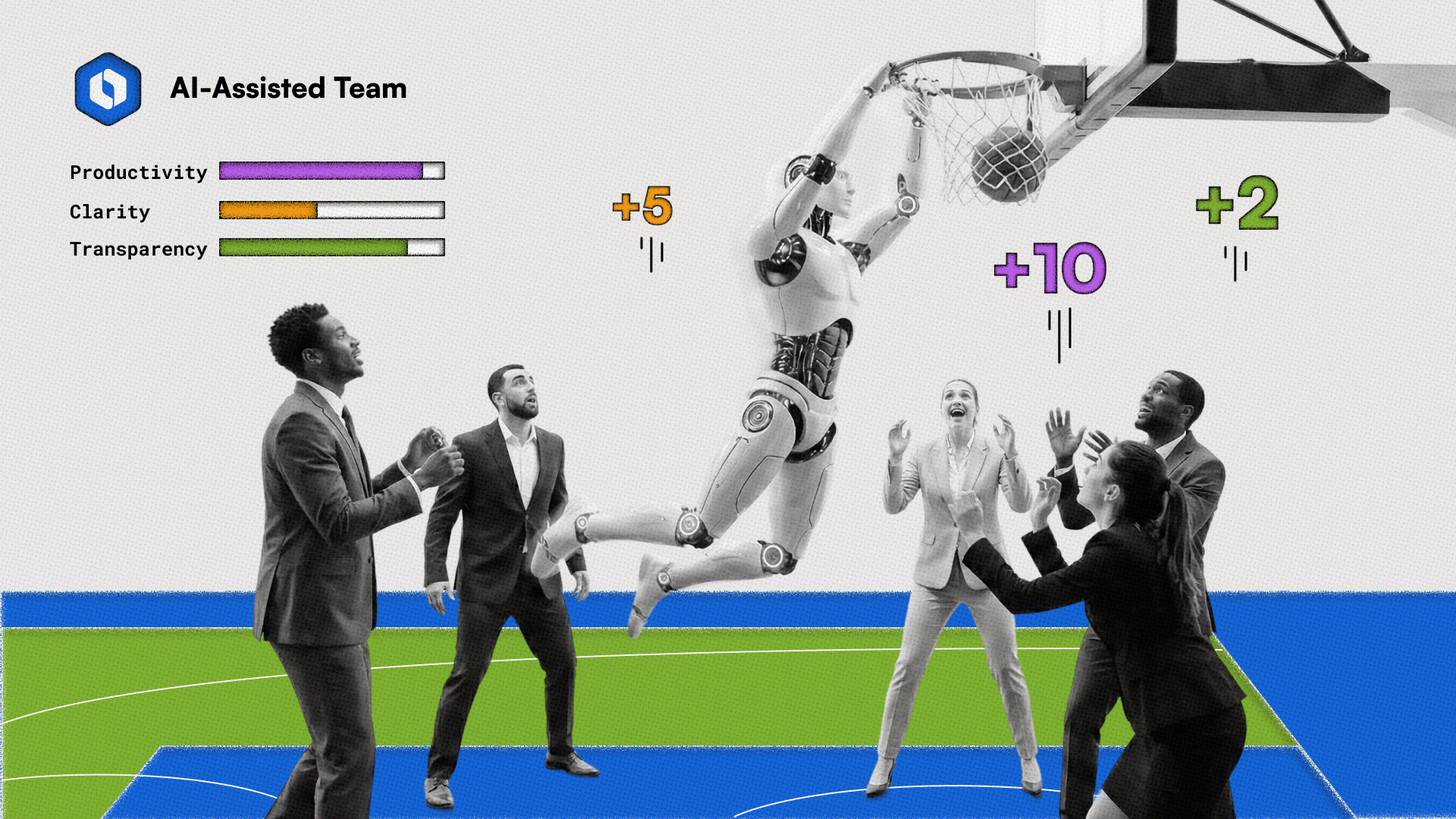

The Rundown: With writing code no longer the bottleneck, Rajan believes that the next big opportunity for engineers is moving into more strategic functions (from planning to execution) and designing better systems with AI in the loop.

Cheung: How much code will be AI-written by 2028, and what does the role of a software engineer look like with that change?

Rajan: By 2028, I wouldn’t be surprised if most new code in large companies is AI-generated. I say that as someone who fell in love with writing code early in my career and still remembers the joy of seeing something work for the first time.

We’re seeing a shift where every engineer is a tech lead, orchestrating systems and agents. Engineers now spend more time driving clarity and owning what happens “left of code” and “right of code”: from planning and design on one side to testing, rollout safety, and operations on the other.

Cheung: What about new grads entering the field? Does AI help them or hurt them?

Rajan: Focusing on the right fundamentals and adopting the AI-native way of working will give new grads a big advantage, potentially allowing them to leapfrog senior developers who haven’t adopted AI ways of working yet. Your edge will come from judgment: knowing when to trust the AI and when to challenge it.

Why it matters: As AI writes more code, the moat for engineers moves from typing to framing problems, designing systems, and maintaining oversight. Rajan’s leapfrog point is particularly interesting: the advantage may not go to the most senior person, but to whoever learns to orchestrate AI fastest.

DEVELOPER JOY

The Rundown: Atlassian kicked off its internal journey to improve “Developer Joy,” raising developer satisfaction scores from 49% to 83%. With teams moving faster and feeling more empowered to make changes, Rajan shared how this renewed sense of ownership led to direct product improvements with Rovo Dev.

Cheung: Why did you choose to focus on developer joy, and how did you actually measure it?

Rajan: When I joined Atlassian, we chose to frame developer productivity as ‘Developer Joy’. If developers are frustrated, blocked, or taken out of their flow, it doesn’t matter what productivity metric you pick; you’re not going to get great outcomes.

We track this with regular satisfaction surveys and hard metrics tied to pain points. Developer satisfaction has gone from 49% to 83%, and we see that show up in the work. For instance, by focusing on one of the biggest friction points, the Confluence backend team cut its full server build time by more than 60%. We see these kinds of investments as core to our ability to ship value to customers.

Cheung: When you tested early versions of Rovo Dev internally, what did engineers push back on?

Rajan: Early on, the feedback we got on Rovo Dev was that parts of the experience felt like ‘magic’ in the wrong way. It would do something useful, but you couldn’t see enough of the work it did to get there.

We actually scrapped and reworked an early ‘one click, do it all’ flow because our own teams wouldn’t touch it without more transparency and control. They wanted a way to understand each step the agent took, how their instructions led to different outcomes, and the ability to steer the agent. That pushed us hard toward agent sessions you can inspect and experiences that keep developers in the loop.

Why it matters: Rajan’s early Rovo Dev story highlights how critical internal feedback loops are when adopting agentic AI. The more teams listen to users (and iterate on what feels opaque, dangerous, or frustrating), the stronger and more trustworthy the system becomes. Iteration goes hand-in-hand with developer trust in an AI-native world.

ACCOUNTABILITY

The Rundown: Rajan says powerful AI agents should be deployed to production only when there’s clear human ownership and a way to track, monitor, and steer the system’s behavior, creating an accountability layer that moves as fast as the AI itself.

Cheung: As AI agents take on more autonomous work, how do you maintain accountability, and why can’t “the AI did it” ever be an excuse?

Rajan: When something goes wrong, ‘the AI did it’ can’t be the answer, because AI doesn’t own customer trust. We do.

As we bring autonomous AI into our workflows, we have to be explicit about accountability: every AI-assisted decision or action has to have a clear human owner. If we can’t understand or observe how an AI is behaving, it doesn’t belong in a critical path.

We put guardrails and observability around AI, we log and audit its actions, and we make sure that teams treat it like any other powerful tool: you understand failure modes, you monitor it, and you don’t ship without ownership. AI can help you move faster, but it doesn’t replace judgment and responsibility.

Why it matters: With something as powerful as agentic AI, accountability is non-negotiable. If you get it right, you unlock speed, trust, and durable customer confidence. But if you get it wrong, the consequences can be massive, because autonomous systems can amplify mistakes just as quickly as they amplify progress.

SAAS STORY

The Rundown: Despite the rise of AI coding agents, Rajan argues SaaS tools aren’t going anywhere. In fact, he believes these tools will get stronger, with AI working across projects and controls by tapping contextual insights.

Cheung: What’s your take on the whole “saaspocalypse” narrative that AI agents will kill SaaS altogether?

Rajan: The idea that one person on your team can vibe code an in-house solution over a weekend and replace a mature SaaS solution you’re paying for is, in my opinion, overrated.

When customers buy software-as-a-service, they are not just buying the code; they are buying workflows, shared context, security, compliance, and reliability. That’s where well-designed SaaS products still matter a lot. What AI actually does is make great SaaS even more valuable.

Rajan added: Your projects, docs, tickets, and conversations live in those systems, and AI can now move across them, automate the boring parts, and orchestrate agents around trusted workflows and controls. So I’m more excited about SaaS that becomes AI-native than about hot takes that SaaS is dead.

Why it matters: The debate is still on, but one thing’s clear: as AI agents grow more capable, the foundation of SaaS will become even more important. AI will evolve the platforms that already hold a company’s workflows and institutional knowledge, serving as trusted systems of record.

LIGHTNING ROUND

Rajan: A lot of AI products today are designed for single-player use. We see much greater potential in how AI helps entire teams work better together, allowing important context to flow across a team of humans and agents.

Rajan: I think AI will make engineering more human, not less. A lot of people worry we will lose the craft. I believe we will spend less energy on repetitive implementation and more time on strategic, creative work and collaboration.

Rajan: Start by fixing one concrete, high-friction problem that impacts your team. You’d be surprised by how quickly chipping away at issues like slow build times and noisy tooling can compound and have a greater impact.

Rajan: Microsoft taught me the value of deep technical rigor and building platforms that stand the test of time. Meta taught me how powerful it is when you pair strong engineering talent with a bias for fast iteration and learning. At Atlassian, I try to combine both: long-term architecture with a culture that ships, learns, and adapts quickly.

That’s it for today!

See you soon,