Note: Edited in July 2023 with up-to-date references and examples.

Introduction

In recent years, there has been an increasing interest in open-ended
language generation thanks to the rise of large transformer-based
language models trained on millions of webpages, including OpenAI’s ChatGPT
and Meta’s LLaMA.

The results on conditioned open-ended language generation are impressive: these models have been shown to
generalize to new tasks,
handle code,
or take non-text data as input.

Besides the improved transformer architecture and massive unsupervised
training data, better decoding methods have also played an important
role.

This blog post gives a brief overview of different decoding strategies
and, more importantly, shows how you can implement them with very little
effort using the popular transformers library!

All of the following functionalities can be used for auto-regressive
language generation (here is a refresher). In short, auto-regressive language generation is based
on the assumption that the probability distribution of a word sequence
can be decomposed into the product of conditional next word
distributions:

$$ P(w_{1:T} | W_0) = \prod_{t=1}^T P(w_t | w_{1:t-1}, W_0) \text{, with } w_{1:0} = \emptyset, $$

and $W_0$ being the initial context word sequence. The length $T$
of the word sequence is usually determined on-the-fly and corresponds
to the timestep $t=T$ at which the EOS token is generated from $P(w_t | w_{1:t-1}, W_0)$.
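To make the decomposition concrete, here is a minimal sketch that multiplies conditional next word probabilities along one path. The `TOY_MODEL` table and the helper name `sequence_prob` are assumptions made up for illustration; a real language model would compute these conditionals with a forward pass.

```python
# Hypothetical toy conditionals P(next word | words so far).
# Keys are the words seen so far (context plus generated words);
# the numbers are illustrative and do not come from a trained model.
TOY_MODEL = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "and": 0.05, "runs": 0.05},
    ("The", "car"): {"is": 0.5, "drives": 0.3, "turns": 0.2},
}


def sequence_prob(context, words, model=TOY_MODEL):
    """Multiply the conditional probability of each word given its prefix."""
    prob = 1.0
    for t in range(len(words)):
        prefix = tuple(context) + tuple(words[:t])
        prob *= model[prefix][words[t]]
    return prob


# P("dog", "has" | "The") = P("dog" | "The") * P("has" | "The", "dog")
print(f"{sequence_prob(['The'], ['dog', 'has']):.2f}")  # 0.4 * 0.9 -> 0.36
```

In practice, implementations usually sum log-probabilities instead of multiplying probabilities, which avoids numerical underflow on long sequences.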

We will give a tour of the currently most prominent decoding methods,
mainly Greedy search, Beam search, and Sampling.

Let’s quickly install transformers and load the model. We will use GPT2
in PyTorch for demonstration, but the API is 1-to-1 the same for
TensorFlow and JAX.

!pip install -q transformers

from transformers import AutoModelForCausalLM, AutoTokenizer

import torch

torch_device = "cuda" if torch.cuda.is_available() else "cpu"

tokenizer = AutoTokenizer.from_pretrained("gpt2")

model = AutoModelForCausalLM.from_pretrained("gpt2", pad_token_id=tokenizer.eos_token_id).to(torch_device)

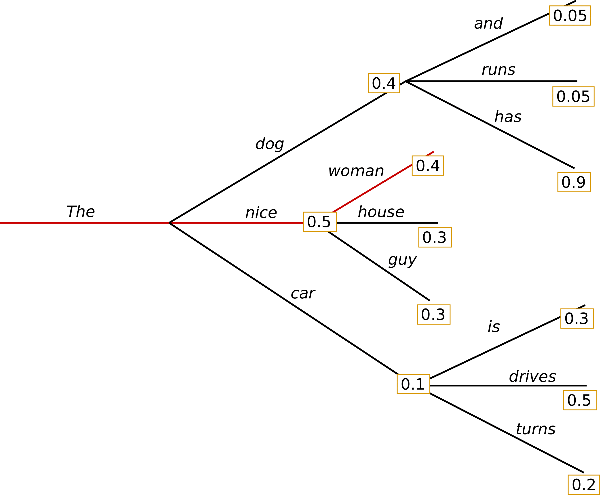

Greedy Search

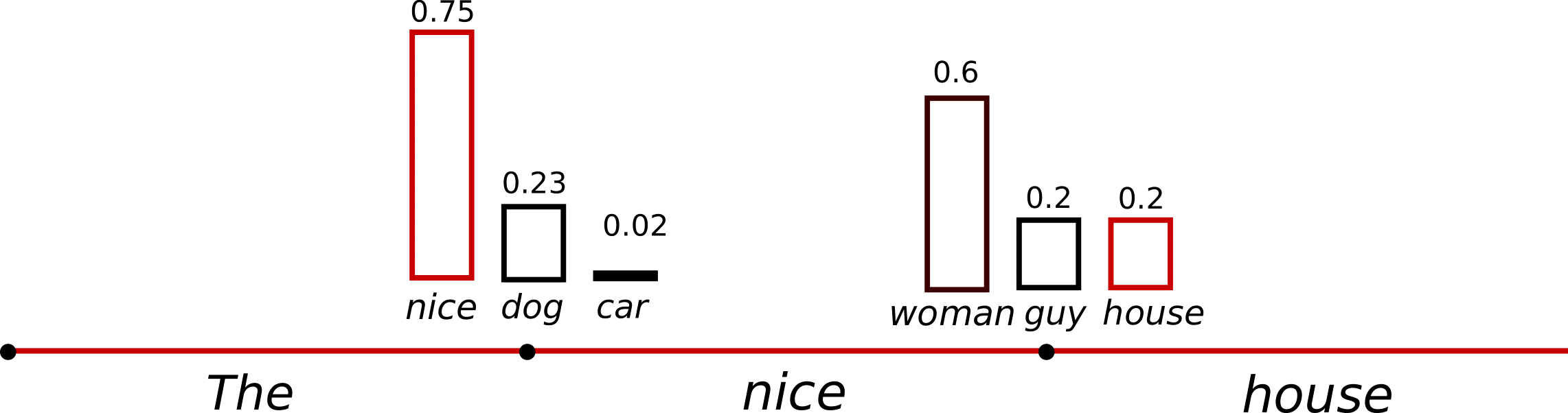

Greedy search is the simplest decoding method.
It selects the word with the highest probability as
its next word: $w_t = \operatorname{argmax}_{w} P(w | w_{1:t-1})$ at each timestep
$t$. The following sketch shows greedy search.

Starting from the word "The", the algorithm greedily chooses
the next word of highest probability, "nice", and so on, so
that the final generated word sequence is ("The", "nice", "woman"),
having an overall probability of $0.5 \times 0.4 = 0.2$.
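The procedure can be sketched in a few lines of plain Python. This is a toy illustration and not how transformers implements it: `TOY_MODEL` is a hypothetical lookup table of next word probabilities, and `greedy_search` is a helper name introduced here.

```python
# Hypothetical toy conditionals P(next word | words so far); values are illustrative.
TOY_MODEL = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "and": 0.05, "runs": 0.05},
    ("The", "car"): {"is": 0.5, "drives": 0.3, "turns": 0.2},
}


def greedy_search(context, steps, model=TOY_MODEL):
    """At every step, append the single most probable next word."""
    sequence = list(context)
    prob = 1.0
    for _ in range(steps):
        next_dist = model[tuple(sequence)]
        word = max(next_dist, key=next_dist.get)  # argmax over the vocabulary
        prob *= next_dist[word]
        sequence.append(word)
    return sequence, prob


sequence, prob = greedy_search(["The"], steps=2)
print(sequence, round(prob, 2))  # ['The', 'nice', 'woman'] 0.2
```

Note that the search never looks at what follows "dog", even though that branch contains a continuation with probability 0.9 in this toy table.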

In the following we will generate word sequences using GPT2 on the
context ("I", "enjoy", "walking", "with", "my", "cute", "dog"). Let’s
see how greedy search can be used in transformers:

model_inputs = tokenizer('I enjoy walking with my cute dog', return_tensors='pt').to(torch_device)

greedy_output = model.generate(**model_inputs, max_new_tokens=40)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(greedy_output[0], skip_special_tokens=True))

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog, but I'm unsure if I'll ever give you the chance to walk with my dog. I'm unsure if I'll ever give you the chance to walk with my dog.

I'm unsure

Alright! We have generated our first short text with GPT2 😊. The
generated words following the context are reasonable, but the model
quickly starts repeating itself! This is a very common problem in
language generation in general and seems to be even more so in greedy
and beam search; see Vijayakumar et
al., 2016 and Shao et
al., 2017.

The major drawback of greedy search though is that it misses high
probability words hidden behind a low probability word, as can be seen in
our sketch above:

The word "has"
with its high conditional probability of 0.9
is hidden behind the word "dog", which has only the
second-highest conditional probability, so that greedy search misses the
word sequence ("The", "dog", "has").

Thankfully, we have beam search to alleviate this problem!

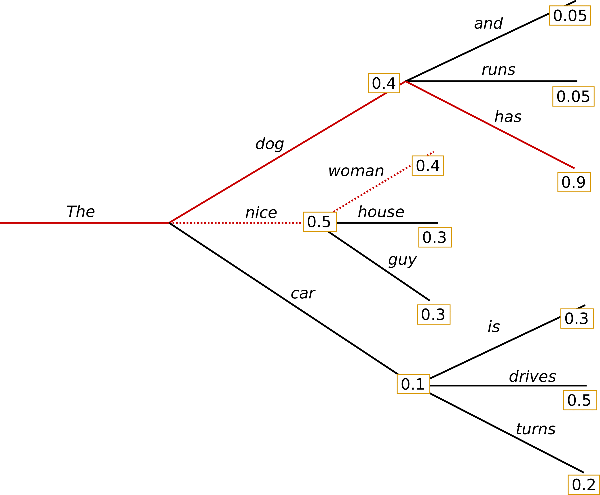

Beam search

Beam search reduces the risk of missing hidden high probability word
sequences by keeping the most likely num_beams hypotheses at each
time step and eventually choosing the hypothesis that has the overall
highest probability. Let’s illustrate with num_beams=2:

At time step 1, besides the most likely hypothesis ("The", "nice"),
beam search also keeps track of the second
most likely one, ("The", "dog").
At time step 2, beam search finds that the word sequence ("The", "dog", "has")
has, with 0.36,
a higher probability than ("The", "nice", "woman"),
which has 0.2. Great, it has found the most likely word sequence in
our toy example!

Beam search usually finds an output sequence with higher probability
than greedy search, but it is not guaranteed to find the most likely
output.
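A minimal sketch of the idea, again on a hypothetical toy table of next word probabilities (`TOY_MODEL` and `beam_search` are names introduced for this illustration only; the real implementation in transformers works on batched log-probabilities and handles EOS and length penalties):

```python
# Hypothetical toy conditionals P(next word | words so far); values are illustrative.
TOY_MODEL = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "and": 0.05, "runs": 0.05},
    ("The", "car"): {"is": 0.5, "drives": 0.3, "turns": 0.2},
}


def beam_search(context, steps, num_beams, model=TOY_MODEL):
    """Keep the num_beams most probable partial sequences at every step."""
    beams = [(1.0, list(context))]
    for _ in range(steps):
        candidates = []
        for prob, seq in beams:
            # Expand every kept hypothesis by every possible next word.
            for word, p in model[tuple(seq)].items():
                candidates.append((prob * p, seq + [word]))
        # Prune: keep only the num_beams highest scoring hypotheses.
        candidates.sort(key=lambda c: c[0], reverse=True)
        beams = candidates[:num_beams]
    return beams


for prob, seq in beam_search(["The"], steps=2, num_beams=2):
    print(seq, round(prob, 2))
# ['The', 'dog', 'has'] 0.36
# ['The', 'nice', 'woman'] 0.2
```

With num_beams=1 the same function reduces to greedy search, which is a handy sanity check.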

Let’s see how beam search can be used in transformers. We set
num_beams > 1 and early_stopping=True so that generation is finished
when all beam hypotheses have reached the EOS token.

beam_output = model.generate(

**model_inputs,

max_new_tokens=40,

num_beams=5,

early_stopping=True

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(beam_output[0], skip_special_tokens=True))

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog, but I'm unsure if I'll ever give you the chance to walk with him again.

I'm unsure if I'll ever give you the chance to walk with him again. I'm unsure

While the result is arguably more fluent, the output still includes
repetitions of the same word sequences.
One of the available remedies is to introduce n-gram (a.k.a. word sequences of
n words) penalties as introduced by Paulus et al.
(2017) and Klein et al.
(2017). The most common n-gram
penalty makes sure that no n-gram appears twice by manually setting
the probability of next words that could create an already seen n-gram
to 0.
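The bookkeeping behind such a penalty can be sketched as follows. This is an illustrative assumption of how it could be done on word lists, not the code used by transformers; `banned_next_tokens` and `apply_ngram_penalty` are hypothetical helper names.

```python
def banned_next_tokens(tokens, ngram_size):
    """Return every token that would complete an n-gram already present in tokens."""
    if ngram_size < 1 or len(tokens) < ngram_size:
        return set()
    # The last (n - 1) generated tokens form the start of the next n-gram.
    prefix = tuple(tokens[len(tokens) - (ngram_size - 1):])
    banned = set()
    for i in range(len(tokens) - ngram_size + 1):
        ngram = tuple(tokens[i:i + ngram_size])
        if ngram[:-1] == prefix:
            banned.add(ngram[-1])
    return banned


def apply_ngram_penalty(next_probs, tokens, ngram_size):
    """Set the probability of every banned next token to 0."""
    banned = banned_next_tokens(tokens, ngram_size)
    return {w: (0.0 if w in banned else p) for w, p in next_probs.items()}


history = ["New", "York", "is", "big", ".", "New"]
next_probs = {"York": 0.9, "Jersey": 0.1}  # made-up next word probabilities
print(apply_ngram_penalty(next_probs, history, ngram_size=2))
# {'York': 0.0, 'Jersey': 0.1}
```

The example also shows the downside: once ("New", "York") has appeared, a 2-gram penalty forbids producing it again.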

Let’s try it out by setting no_repeat_ngram_size=2 so that no 2-gram
appears twice:

beam_output = model.generate(

**model_inputs,

max_new_tokens=40,

num_beams=5,

no_repeat_ngram_size=2,

early_stopping=True

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(beam_output[0], skip_special_tokens=True))

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog, but I'm unsure if I'll ever give you the chance to walk with him again.

I have been interested by this for some time now, and I feel it is time for me to

Nice, that looks much better! We can see that the repetition does not
appear anymore. Nevertheless, n-gram penalties have to be used with
care. An article generated about the city New York should not use a
2-gram penalty; otherwise, the name of the city would only appear
once in the whole text!

Another important feature of beam search is that we can compare the
top beams after generation and choose the generated beam that fits our
purpose best.

In transformers, we simply set the parameter num_return_sequences to
the number of highest scoring beams that should be returned. Make sure
though that num_return_sequences <= num_beams!

beam_outputs = model.generate(

**model_inputs,

max_new_tokens=40,

num_beams=5,

no_repeat_ngram_size=2,

num_return_sequences=5,

early_stopping=True

)

print("Output:\n" + 100 * '-')

for i, beam_output in enumerate(beam_outputs):
    print("{}: {}".format(i, tokenizer.decode(beam_output, skip_special_tokens=True)))

Output:

----------------------------------------------------------------------------------------------------

0: I enjoy walking with my cute dog, but I'm unsure if I'll ever give you the chance to walk with him again.

I have been interested by this for some time now, and I feel it is time for me to

1: I enjoy walking with my cute dog, but I'm unsure if I'll ever give you the chance to walk together with her again.

I have been interested by this for some time now, and I feel it is time for me to

2: I enjoy walking with my cute dog, but I'm unsure if I'll ever give you the chance to walk with him again.

I have been interested by this for some time now, and I feel it's a superb idea to

3: I enjoy walking with my cute dog, but I'm unsure if I'll ever give you the chance to walk with him again.

I have been interested by this for some time now, and I feel it is time to take a

4: I enjoy walking with my cute dog, but I'm unsure if I'll ever give you the chance to walk with him again.

I have been interested by this for some time now, and I feel it's a superb idea.

As can be seen, the five beam hypotheses are only marginally different
from each other, which should not be too surprising when using only 5
beams.

In open-ended generation, a couple of reasons have been brought
forward why beam search might not be the best possible option:

-

Beam search can work thoroughly in tasks where the length of the

desired generation is kind of predictable as in machine

translation or summarization – see Murray et al.

(2018) and Yang et al.

(2018). But this is just not the case

for open-ended generation where the specified output length can vary

greatly, e.g. dialog and story generation. -

Now we have seen that beam search heavily suffers from repetitive

generation. This is very hard to regulate with n-gram– or

other penalties in story generation since finding a superb trade-off

between inhibiting repetition and repeating cycles of an identical

n-grams requires lots of finetuning. -

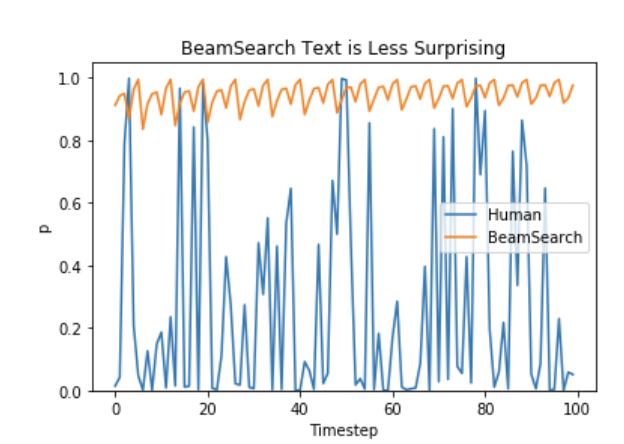

As argued in Ari Holtzman et al.

(2019), prime quality human

language doesn’t follow a distribution of high probability next

words. In other words, as humans, we wish generated text to surprise

us and never to be boring/predictable. The authors show this nicely by

plotting the probability, a model would give to human text vs. what

beam search does.

So let’s stop being boring and introduce some randomness 🤪.

Sampling

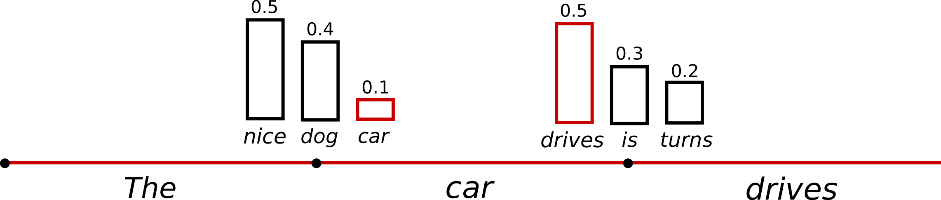

In its most basic form, sampling means randomly picking the next word $w_t$ according to its conditional probability distribution:

$$ w_t \sim P(w | w_{1:t-1}) $$

Taking the example from above, the following graphic visualizes language
generation when sampling.

It becomes obvious that language generation using sampling is not
deterministic anymore. The word "car" is sampled from the
conditioned probability distribution $P(w | \text{"The"})$, followed
by sampling "drives" from $P(w | \text{"The"}, \text{"car"})$.
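As a rough sketch of what "sampling from the conditional distribution" means, the snippet below draws each next word with the standard library's `random.choices`. The probability table `TOY_MODEL` and the helper `sample_sequence` are assumptions made up for illustration.

```python
import random

# Hypothetical toy conditionals P(next word | words so far); values are illustrative.
TOY_MODEL = {
    ("The",): {"nice": 0.5, "dog": 0.4, "car": 0.1},
    ("The", "nice"): {"woman": 0.4, "house": 0.3, "guy": 0.3},
    ("The", "dog"): {"has": 0.9, "and": 0.05, "runs": 0.05},
    ("The", "car"): {"is": 0.5, "drives": 0.3, "turns": 0.2},
}


def sample_sequence(context, steps, model, rng):
    """Draw each next word at random, weighted by its conditional probability."""
    sequence = list(context)
    for _ in range(steps):
        next_dist = model[tuple(sequence)]
        words = list(next_dist)
        weights = [next_dist[w] for w in words]
        sequence.append(rng.choices(words, weights=weights, k=1)[0])
    return sequence


rng = random.Random(42)  # fixed seed only to make the run repeatable
for _ in range(3):
    print(sample_sequence(["The"], 2, TOY_MODEL, rng))
```

Unlike greedy or beam search, repeated calls can return different sequences, and low probability words such as "car" are picked occasionally.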

In transformers, we set do_sample=True and deactivate Top-K
sampling (more on this later) via top_k=0. In the following, we will
fix the random seed for illustration purposes. Feel free to change the
set_seed argument to obtain different results, or to remove it for non-determinism.

from transformers import set_seed

set_seed(42)

sample_output = model.generate(

**model_inputs,

max_new_tokens=40,

do_sample=True,

top_k=0

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog for the remainder of the day, but this had me staying in an unusual room and never occurring nights out with friends (which can all the time be wondered for a mere minute or so at this point).

Interesting! The text seems alright, but when taking a closer look, it
is not very coherent and doesn’t sound like it was written by a
human. That is the big problem when sampling word sequences: the models
often generate incoherent gibberish, cf. Ari Holtzman et al.
(2019).

A trick is to make the distribution $P(w | w_{1:t-1})$ sharper
(increasing the likelihood of high probability words and decreasing the
likelihood of low probability words) by lowering the so-called
temperature of the
softmax.

An illustration of applying temperature to our example from above could
look as follows.

The conditional next word distribution of step $t=1$ becomes much
sharper, leaving almost no chance for the word "car" to be
selected.
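The effect of temperature is easy to check numerically. The sketch below divides the logits by the temperature before the softmax; the three logits are made-up values chosen so that temperature 1.0 yields the probabilities 0.5, 0.4 and 0.1, and `softmax_with_temperature` is a helper written for this example.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Softmax over logits / temperature; lower temperature sharpens the result."""
    scaled = [x / temperature for x in logits]
    max_scaled = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - max_scaled) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three candidate next words.
logits = [math.log(0.5), math.log(0.4), math.log(0.1)]

for temperature in (1.0, 0.6, 0.1):
    probs = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

As the temperature decreases, more and more of the probability mass moves to the most likely word, and the least likely word is almost never sampled.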

Let’s see how we can cool down the distribution in the library by
setting temperature=0.6:

set_seed(42)

sample_output = model.generate(

**model_inputs,

max_new_tokens=40,

do_sample=True,

top_k=0,

temperature=0.6,

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog, but I don't love to chew on it. I wish to eat it and never chew on it. I wish to give you the chance to walk with my dog."

So how did you select

OK. There are fewer weird n-grams and the output is a bit more coherent
now! While applying temperature can make a distribution less random, in
its limit, when setting temperature $\to 0$, temperature scaled
sampling becomes equal to greedy decoding and will suffer from the same
problems as before.

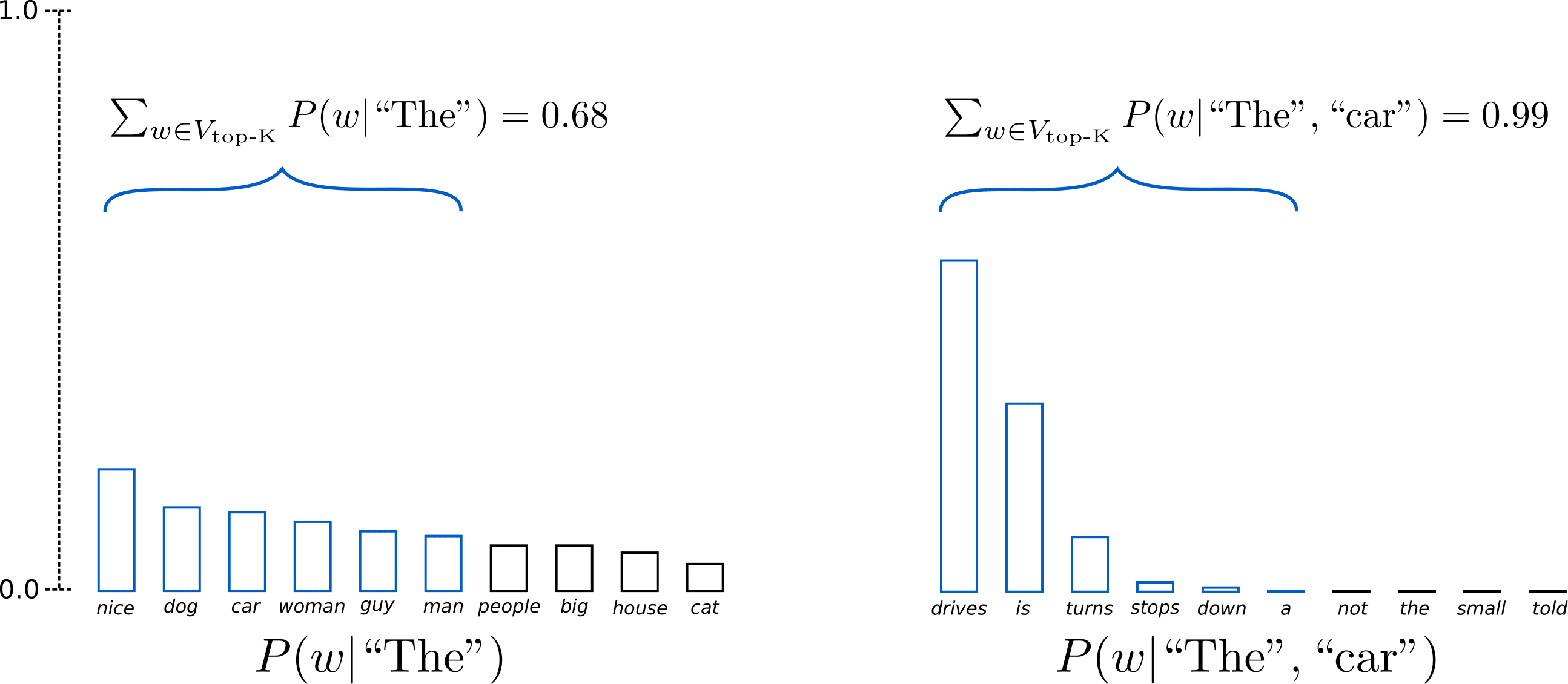

Top-K Sampling

Fan et al. (2018) introduced a
simple, but very powerful sampling scheme, called Top-K sampling.
In Top-K sampling, the K most likely next words are filtered and the
probability mass is redistributed among only those K next words. GPT2
adopted this sampling scheme, which was one of the reasons for its
success in story generation.

We extend the range of words used for both sampling steps in the example
above from 3 words to 10 words to better illustrate Top-K sampling.

Having set $K = 6$, in both sampling steps we limit our sampling pool
to 6 words. While the 6 most likely words, defined as
$V_{\text{top-K}}$, encompass only ca. two-thirds of the whole
probability mass in the first step, they include almost all of the
probability mass in the second step. Nevertheless, we see that it
successfully eliminates the rather weird candidates in the second sampling step.
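The filtering step itself is short. Below is a minimal sketch on a made-up 10-word distribution (the words and probabilities in `step_probs` are assumptions for illustration, and `top_k_filter` is a hypothetical helper, not the transformers implementation):

```python
def top_k_filter(next_probs, k):
    """Keep the k most likely words and renormalize their probabilities."""
    ranked = sorted(next_probs.items(), key=lambda item: item[1], reverse=True)
    top = ranked[:k]
    kept_mass = sum(p for _, p in top)
    return {word: p / kept_mass for word, p in top}


# Hypothetical, fairly flat next word distribution over 10 words.
step_probs = {
    "nice": 0.20, "dog": 0.15, "car": 0.12, "woman": 0.10, "guy": 0.09,
    "man": 0.08, "people": 0.075, "big": 0.07, "house": 0.06, "cat": 0.055,
}

filtered = top_k_filter(step_probs, k=6)
print(list(filtered))                   # the 6 words that stay in the pool
print(round(sum(filtered.values()), 6))  # renormalized mass sums to 1.0
```

Sampling then proceeds as before, but only over the words that survived the filter.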

Let’s see how Top-K can be used in the library by setting top_k=50:

set_seed(42)

sample_output = model.generate(

**model_inputs,

max_new_tokens=40,

do_sample=True,

top_k=50

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog for the remainder of the day, but this time it was hard for me to work out what to do with it. (One reason I asked this for just a few months back is that I had a

Not bad at all! The text is arguably the most human-sounding text so
far. One concern though with Top-K sampling is that it does not
dynamically adapt the number of words that are filtered from the next
word probability distribution $P(w | w_{1:t-1})$. This can be
problematic as some words might be sampled from a very sharp
distribution (distribution on the right in the graph above), whereas
others from a much flatter distribution (distribution on the left in
the graph above).

In step $t=1$, Top-K eliminates the possibility to sample
("people", "big", "house", "cat"), which seem like reasonable
candidates. On the other hand, in step $t=2$ the method includes the
arguably ill-fitted words ("down", "a") in the sample pool of
words. Thus, limiting the sample pool to a fixed size K could endanger
the model to produce gibberish for sharp distributions and limit the
model’s creativity for flat distributions. This intuition led Ari
Holtzman et al. (2019) to create
Top-p or nucleus sampling.

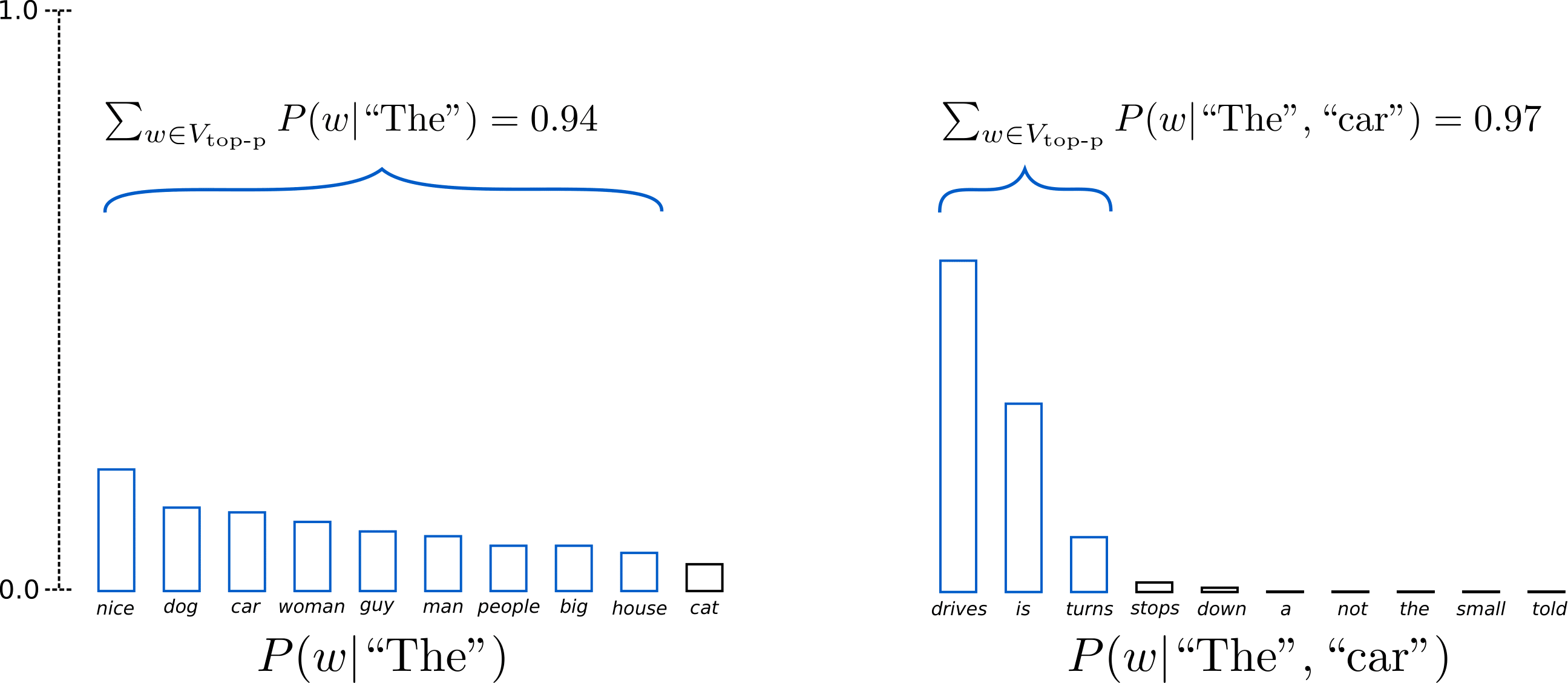

Top-p (nucleus) sampling

Instead of sampling only from the most likely K words, Top-p
sampling chooses from the smallest possible set of words whose
cumulative probability exceeds the probability p. The probability mass
is then redistributed among this set of words. This way, the size of the
set of words (a.k.a. the number of words in the set) can dynamically
increase and decrease according to the next word’s probability
distribution. Ok, that was very wordy, let’s visualize.

Having set $p = 0.92$, Top-p sampling picks the minimum number of
words to exceed together $p = 92\%$ of the probability mass, defined as
$V_{\text{top-p}}$. In the first example, this included the 9 most
likely words, whereas it only has to pick the top 3 words in the second
example to exceed 92%. Quite simple actually! It can be seen that it
keeps a wide range of words where the next word is arguably less
predictable, e.g. $P(w | \text{"The"})$, and only a few words when
the next word seems more predictable, e.g.
$P(w | \text{"The"}, \text{"car"})$.
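To see how the pool size adapts, here is a small sketch of nucleus filtering on two made-up distributions, one flat and one sharp. The words and probabilities are assumptions for illustration, and `top_p_filter` is a hypothetical helper rather than the library's implementation.

```python
def top_p_filter(next_probs, p):
    """Keep the smallest set of most likely words whose cumulative mass reaches p."""
    ranked = sorted(next_probs.items(), key=lambda item: item[1], reverse=True)
    nucleus, cumulative = [], 0.0
    for word, prob in ranked:
        nucleus.append((word, prob))
        cumulative += prob
        if cumulative >= p:
            break
    # Redistribute the probability mass among the kept words.
    return {word: prob / cumulative for word, prob in nucleus}


# Hypothetical flat distribution (next word is hard to predict).
flat_probs = {
    "nice": 0.20, "dog": 0.15, "car": 0.12, "woman": 0.10, "guy": 0.09,
    "man": 0.08, "people": 0.075, "big": 0.07, "house": 0.06, "cat": 0.055,
}
# Hypothetical sharp distribution (next word is easy to predict).
sharp_probs = {
    "drives": 0.55, "is": 0.25, "turns": 0.14,
    "stops": 0.03, "down": 0.02, "a": 0.01,
}

print(len(top_p_filter(flat_probs, p=0.92)))   # 9 words are needed
print(len(top_p_filter(sharp_probs, p=0.92)))  # 3 words are enough
```

The same threshold keeps a large pool for the flat distribution and a small one for the sharp distribution, which a fixed K cannot do.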

Alright, time to check it out in transformers! We activate Top-p
sampling by setting 0 < top_p < 1:

set_seed(42)

sample_output = model.generate(

**model_inputs,

max_new_tokens=40,

do_sample=True,

top_p=0.92,

top_k=0

)

print("Output:\n" + 100 * '-')

print(tokenizer.decode(sample_output[0], skip_special_tokens=True))

Output:

----------------------------------------------------------------------------------------------------

I enjoy walking with my cute dog for the remainder of the day, but this had me staying in an unusual room and never occurring nights out with friends (which can all the time be my craving for such a spacious screen on my desk

Great, that sounds like it could have been written by a human. Well,
maybe not quite yet.

While in theory Top-p seems more elegant than Top-K, both methods
work well in practice. Top-p can also be used in combination with
Top-K, which can avoid very low ranked words while allowing for some
dynamic selection.

Finally, to get multiple independently sampled outputs, we can again
set the parameter num_return_sequences > 1:

set_seed(42)

sample_outputs = model.generate(

**model_inputs,

max_new_tokens=40,

do_sample=True,

top_k=50,

top_p=0.95,

num_return_sequences=3,

)

print("Output:\n" + 100 * '-')

for i, sample_output in enumerate(sample_outputs):
    print("{}: {}".format(i, tokenizer.decode(sample_output, skip_special_tokens=True)))

Output:

----------------------------------------------------------------------------------------------------

0: I enjoy walking with my cute dog for the remainder of the day, but this time it was hard for me to work out what to do with it. After I finally checked out this for just a few moments, I immediately thought, "

1: I enjoy walking with my cute dog. The one time I felt like walking was after I was working, so it was awesome for me. I didn't need to walk for days. I'm really curious how she will walk with me

2: I enjoy walking with my cute dog (Chama-I-I-I-I-I), and I actually enjoy running. I play in slightly game I play with my brother through which I take pictures of our homes.

Cool, now you should have all the tools to let your model write your
stories with transformers!

Conclusion

As ad-hoc decoding methods, top-p and top-K sampling seem to
produce more fluent text than traditional greedy and beam search
on open-ended language generation. There is
evidence that the apparent flaws of greedy and beam search
(mainly generating repetitive word sequences) are caused by the model
(especially the way the model is trained), rather than the decoding
method, cf. Welleck et al.
(2019). Also, as demonstrated in
Welleck et al. (2020), it looks as if
top-K and top-p sampling also suffer from generating repetitive word
sequences.

In Welleck et al. (2019), the
authors show that, according to human evaluations, beam search can
generate more fluent text than Top-p sampling when adapting the
model’s training objective.

Open-ended language generation is a rapidly evolving field of research
and, as is often the case, there is no one-size-fits-all method here,
so one has to see what works best in one’s specific use case.

Fortunately, you can try out all the different decoding methods in
transformers 🤗. You can find an overview of the available methods
here.

Thanks to everybody who has contributed to the blog post: Alexander Rush, Julien Chaumand, Thomas Wolf, Victor Sanh, Sam Shleifer, Clément Delangue, Yacine Jernite, Oliver Åstrand and John de Wasseige.

Appendix

generate has evolved into a highly composable method, with flags to manipulate the resulting text in many
directions that were not covered in this blog post. Here are a few helpful pages to guide you:

If you find that navigating our docs is challenging and you can’t easily find what you are looking for, drop us a message in this GitHub issue. Your feedback is critical to set our future direction! 🤗