Over the past few months, we made several improvements to our transformers and tokenizers libraries, with the goal of making it easier than ever to train a new language model from scratch.

In this post we’ll demo how to train a “small” model (84 M parameters = 6 layers, 768 hidden size, 12 attention heads), which has the same number of layers & heads as DistilBERT, on Esperanto. We’ll then fine-tune the model on a downstream task of part-of-speech tagging.

Esperanto is a constructed language with a goal of being easy to learn. We pick it for this demo for several reasons:

- it’s a relatively low-resource language (even though it’s spoken by ~2 million people) so this demo is less boring than training one more English model 😁

- its grammar is highly regular (e.g. all common nouns end in -o, all adjectives in -a) so we should get interesting linguistic results even on a small dataset.

- finally, the overarching goal at the foundation of the language is to bring people closer (fostering world peace and international understanding), which one could argue is aligned with the goal of the NLP community 💚

N.B. You won’t need to understand Esperanto to understand this post, but if you do want to learn it, Duolingo has a nice course with 280k active learners.

Our model is going to be called… wait for it… EsperBERTo 😂

1. Find a dataset

First, let us find a corpus of text in Esperanto. Here we’ll use the Esperanto portion of the OSCAR corpus from INRIA.

OSCAR is a big multilingual corpus obtained by language classification and filtering of Common Crawl dumps of the Web.

The Esperanto portion of the dataset is only 299M, so we’ll concatenate it with the Esperanto sub-corpus of the Leipzig Corpora Collection, which is comprised of text from diverse sources like news, literature, and Wikipedia.

The final training corpus has a size of 3 GB, which is still small: for your model, you will get better results the more data you can get to pretrain on.

2. Train a tokenizer

We choose to train a byte-level Byte-pair encoding tokenizer (the same as GPT-2), with the same special tokens as RoBERTa. Let’s arbitrarily pick its size to be 52,000.

We recommend training a byte-level BPE (rather than, let’s say, a WordPiece tokenizer like BERT) because it will start building its vocabulary from an alphabet of single bytes, so all words will be decomposable into tokens (no more `<unk>` tokens!).

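    from pathlib import Path

    from tokenizers import ByteLevelBPETokenizer

    # Collect every text file of the training corpus
    paths = [str(x) for x in Path("./eo_data/").glob("**/*.txt")]

    # Initialize an empty byte-level BPE tokenizer
    tokenizer = ByteLevelBPETokenizer()

    # Train it, reserving the RoBERTa special tokens
    tokenizer.train(files=paths, vocab_size=52_000, min_frequency=2, special_tokens=[
        "<s>",
        "<pad>",
        "</s>",
        "<unk>",
        "<mask>",
    ])

    # Save the vocabulary and merges files to disk
    tokenizer.save_model(".", "esperberto")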

And here’s a slightly accelerated capture of the output:

On our dataset, training took about 5 minutes.

🔥🔥 Wow, that was fast! ⚡️🔥

We now have both a vocab.json, which is a list of the most frequent tokens ranked by frequency, and a merges.txt list of merges.

    {
        "<s>": 0,
        "<pad>": 1,
        "</s>": 2,
        "<unk>": 3,
        "<mask>": 4,
        "!": 5,
        "\"": 6,
        "#": 7,
        "$": 8,
        "%": 9,
        "&": 10,
        "'": 11,
        "(": 12,
        ")": 13,
        # ...
    }

    # merges.txt
    l a
    Ġ k
    o n
    Ġ la
    t a
    Ġ e
    Ġ d
    Ġ p
    # ...
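To make the merges.txt excerpt a bit more concrete, here is a toy sketch of how ranked merges could be applied to a single word. The `apply_merges` helper is hypothetical and far simpler than what the tokenizers library does internally; it only reuses the first few merges listed above. The Ġ symbol is how byte-level BPE represents a leading space.

```python
def apply_merges(word, merges):
    """Greedily apply ranked BPE merges to one word (toy illustration)."""
    # Earlier merges in the list have a lower rank and are applied first.
    ranks = {pair: rank for rank, pair in enumerate(merges)}
    symbols = list(word)
    while len(symbols) > 1:
        pairs = [(symbols[i], symbols[i + 1]) for i in range(len(symbols) - 1)]
        best = min(pairs, key=lambda pair: ranks.get(pair, float("inf")))
        if best not in ranks:
            break  # no known merge applies any more
        merged, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                merged.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                merged.append(symbols[i])
                i += 1
        symbols = merged
    return symbols


# First merges from the excerpt above, in file order.
merges = [("l", "a"), ("Ġ", "k"), ("o", "n"), ("Ġ", "la"), ("t", "a")]

print(apply_merges("Ġla", merges))   # ['Ġla']
print(apply_merges("Ġkon", merges))  # ['Ġk', 'on']
```

A frequent word such as " la" ends up as a single token, while a rarer string is left as a handful of smaller pieces.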

What’s great is that our tokenizer is optimized for Esperanto. Compared to a generic tokenizer trained for English, more native words are represented by a single, unsplit token. Diacritics, i.e. accented characters used in Esperanto (ĉ, ĝ, ĥ, ĵ, ŝ, and ŭ), are encoded natively. We also represent sequences in a more efficient manner. On this corpus, the average length of encoded sequences is ~30% smaller than when using the pretrained GPT-2 tokenizer.
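If you want to check a figure like this on your own corpus, one rough way is to average the number of tokens per line for each tokenizer and compare. The sketch below is an illustration only: `average_length` and `relative_reduction` are hypothetical helpers, and the two toy encoders merely stand in for real tokenizers (with the tokenizers library you could pass something like `lambda s: tokenizer.encode(s).ids`).

```python
def average_length(encode, lines):
    """Mean number of tokens produced by `encode` over a list of lines."""
    lengths = [len(encode(line)) for line in lines]
    return sum(lengths) / len(lengths)


def relative_reduction(baseline, candidate):
    """Fraction by which `candidate` is shorter than `baseline`."""
    return 1.0 - candidate / baseline


# Toy stand-ins: one token per UTF-8 byte vs. one token per word.
def byte_tokens(text):
    return list(text.encode("utf-8"))


def word_tokens(text):
    return text.split()


lines = ["Mi estas Julien.", "Ĉu vi parolas Esperanton?"]
reduction = relative_reduction(
    average_length(byte_tokens, lines),
    average_length(word_tokens, lines),
)
print(f"Sequences are {reduction:.0%} shorter with the second encoder")
```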

Here’s how you can use it in tokenizers, including handling the RoBERTa special tokens. Of course, you’ll also be able to use it directly from transformers.

    from tokenizers.implementations import ByteLevelBPETokenizer
    from tokenizers.processors import BertProcessing

    # Load the tokenizer trained in the previous step
    tokenizer = ByteLevelBPETokenizer(
        "./models/EsperBERTo-small/vocab.json",
        "./models/EsperBERTo-small/merges.txt",
    )

    # Wrap every sequence as <s> ... </s>, RoBERTa-style
    tokenizer._tokenizer.post_processor = BertProcessing(
        ("</s>", tokenizer.token_to_id("</s>")),
        ("<s>", tokenizer.token_to_id("<s>")),
    )
    tokenizer.enable_truncation(max_length=512)

    print(
        tokenizer.encode("Mi estas Julien.")
    )

3. Train a language model from scratch

Update: The associated Colab notebook uses our new Trainer directly, instead of through a script. Feel free to pick the approach you like best.

We will now train our language model using the run_language_modeling.py script from transformers (newly renamed from run_lm_finetuning.py as it now supports training from scratch more seamlessly). Just remember to leave --model_name_or_path set to None to train from scratch vs. from an existing model or checkpoint.

We’ll train a RoBERTa-like model, which is BERT-like with a couple of changes (check the documentation for more details).
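As a sanity check on the 84 M figure quoted in the introduction, here is a back-of-the-envelope parameter count. It is a sketch under assumptions that this post does not state: a feed-forward size of 3072 (4x the hidden size), 514 position embeddings, a single token type, and an output layer that shares its weights with the input embeddings. The function name `roberta_param_count` is ours.

```python
def roberta_param_count(
    vocab_size=52_000,    # tokenizer size chosen earlier in the post
    hidden=768,
    layers=6,
    intermediate=3072,    # assumption: 4 x hidden
    max_positions=514,    # assumption
    type_vocab=1,         # assumption
):
    """Rough parameter count for a RoBERTa-like masked language model."""
    layer_norm = 2 * hidden  # one weight and one bias vector
    embeddings = (vocab_size + max_positions + type_vocab) * hidden + layer_norm
    # Query, key, value and output projections, each with a bias.
    attention = 4 * (hidden * hidden + hidden) + layer_norm
    feed_forward = (
        (hidden * intermediate + intermediate)
        + (intermediate * hidden + hidden)
        + layer_norm
    )
    # LM head: dense layer, layer norm, and an output bias (weights are tied).
    lm_head = (hidden * hidden + hidden) + layer_norm + vocab_size
    return embeddings + layers * (attention + feed_forward) + lm_head


print(f"{roberta_param_count():,}")  # 83,504,416 under these assumptions
```

That lands close to the quoted 84 M; the exact number depends on implementation details. Note that the number of attention heads does not appear in the formula, since the heads simply split the same 768-wide projections. Almost half of the parameters sit in the token embeddings, which is what a 52,000-entry vocabulary costs on a small model.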

As the model is BERT-like, we’ll train it on a task of masked language modeling, i.e. predicting how to fill arbitrary tokens that we randomly mask in the dataset. This is taken care of by the example script.
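For intuition, here is a minimal sketch of the random masking step in plain Python. It assumes the recipe from the BERT paper (select 15% of tokens; replace 80% of those with the mask token, 10% with a random token, and leave 10% unchanged) and uses -100 as the label for positions that should not contribute to the loss, which is the default `ignore_index` of PyTorch's cross-entropy loss. `mask_tokens` is a hypothetical helper, not the code from the example script, and we assume ids 0 to 4 are the special tokens, with `<mask>` at id 4.

```python
import random


def mask_tokens(token_ids, mask_id, vocab_size, special_ids,
                mlm_probability=0.15, rng=None):
    """Return (inputs, labels) for masked language modeling on one sequence."""
    rng = rng or random.Random()
    inputs = list(token_ids)
    labels = [-100] * len(inputs)  # -100 = position ignored by the loss
    for i, token in enumerate(token_ids):
        # Never mask special tokens; skip most ordinary tokens too.
        if token in special_ids or rng.random() >= mlm_probability:
            continue
        labels[i] = token  # the model must recover the original token here
        roll = rng.random()
        if roll < 0.8:
            inputs[i] = mask_id                     # 80%: replace with <mask>
        elif roll < 0.9:
            inputs[i] = rng.randrange(vocab_size)   # 10%: random token
        # remaining 10%: keep the original token in place
    return inputs, labels


# Assumed ids: <s>=0, <pad>=1, </s>=2, <unk>=3, <mask>=4
special_ids = {0, 1, 2, 3, 4}
inputs, labels = mask_tokens([0, 300, 421, 1093, 18, 2], mask_id=4,
                             vocab_size=52_000, special_ids=special_ids,
                             rng=random.Random(42))
print(inputs)
print(labels)
```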

We just need to do two things:

- implement a simple subclass of Dataset that loads data from our text files
  - Depending on your use case, you might not even need to write your own subclass of Dataset, if one of the provided examples (TextDataset and LineByLineTextDataset) works, but there are a number of custom tweaks that you might want to add based on what your corpus looks like.
- Choose and experiment with different sets of hyperparameters.

Here’s a simple version of our EsperantoDataset.

    from pathlib import Path

    import torch
    from tokenizers.implementations import ByteLevelBPETokenizer
    from tokenizers.processors import BertProcessing
    from torch.utils.data import Dataset


    class EsperantoDataset(Dataset):
        def __init__(self, evaluate: bool = False):
            tokenizer = ByteLevelBPETokenizer(
                "./models/EsperBERTo-small/vocab.json",
                "./models/EsperBERTo-small/merges.txt",
            )
            # Wrap every sequence as <s> ... </s>, RoBERTa-style
            tokenizer._tokenizer.post_processor = BertProcessing(
                ("</s>", tokenizer.token_to_id("</s>")),
                ("<s>", tokenizer.token_to_id("<s>")),
            )
            tokenizer.enable_truncation(max_length=512)

            self.examples = []

            # Pick the eval or train split based on the file suffix
            src_files = Path("./data/").glob("*-eval.txt") if evaluate else Path("./data/").glob("*-train.txt")
            for src_file in src_files:
                print("🔥", src_file)
                lines = src_file.read_text(encoding="utf-8").splitlines()
                self.examples += [x.ids for x in tokenizer.encode_batch(lines)]

        def __len__(self):
            return len(self.examples)

        def __getitem__(self, i):
            # Return the token ids of one example as a tensor
            return torch.tensor(self.examples[i])

If your dataset is very large, you can opt to load and tokenize examples on the fly, rather than as a preprocessing step.
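One possible way to do that, sketched below, is to record the byte offset of every non-empty line once and only read and tokenize a line when it is requested. This is an illustration, not what the example script does: `LazyLineDataset` is a hypothetical class written without any dependency so it runs anywhere, and `toy_encode` stands in for a real tokenizer (for instance `lambda text: tokenizer.encode(text).ids`). In practice you would likely subclass `torch.utils.data.Dataset` and return a tensor.

```python
import tempfile
from pathlib import Path


class LazyLineDataset:
    """Tokenizes one line per example, on demand, instead of up front."""

    def __init__(self, path, encode, max_length=512):
        self.path = Path(path)
        self.encode = encode
        self.max_length = max_length
        self.offsets = []  # byte offset of each non-empty line
        with self.path.open("rb") as f:
            while True:
                position = f.tell()
                line = f.readline()
                if not line:
                    break
                if line.strip():
                    self.offsets.append(position)

    def __len__(self):
        return len(self.offsets)

    def __getitem__(self, i):
        # Jump straight to the requested line and tokenize only that line.
        with self.path.open("rb") as f:
            f.seek(self.offsets[i])
            line = f.readline().decode("utf-8").rstrip("\r\n")
        return self.encode(line)[: self.max_length]


def toy_encode(text):
    # Stand-in for a real tokenizer: one "token" per UTF-8 byte.
    return list(text.encode("utf-8"))


with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "sample-train.txt"
    sample.write_text("Mi estas Julien.\n\nLa suno brilas.\n", encoding="utf-8")
    dataset = LazyLineDataset(sample, toy_encode)
    n_examples = len(dataset)
    first_example = dataset[0]

print(n_examples, len(first_example))
```

Only the list of offsets is kept in memory, at the cost of tokenizing each line again on every epoch.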

Here is one specific set of hyper-parameters and arguments we pass to the script:

    --output_dir ./models/EsperBERTo-small-v1
    --model_type roberta
    --mlm
    --config_name ./models/EsperBERTo-small
    --tokenizer_name ./models/EsperBERTo-small
    --do_train
    --do_eval
    --learning_rate 1e-4
    --num_train_epochs 5
    --save_total_limit 2
    --save_steps 2000
    --per_gpu_train_batch_size 16
    --evaluate_during_training
    --seed 42

As usual, pick the largest batch size you can fit on your GPU(s).

🔥🔥🔥 Let’s start training!! 🔥🔥🔥

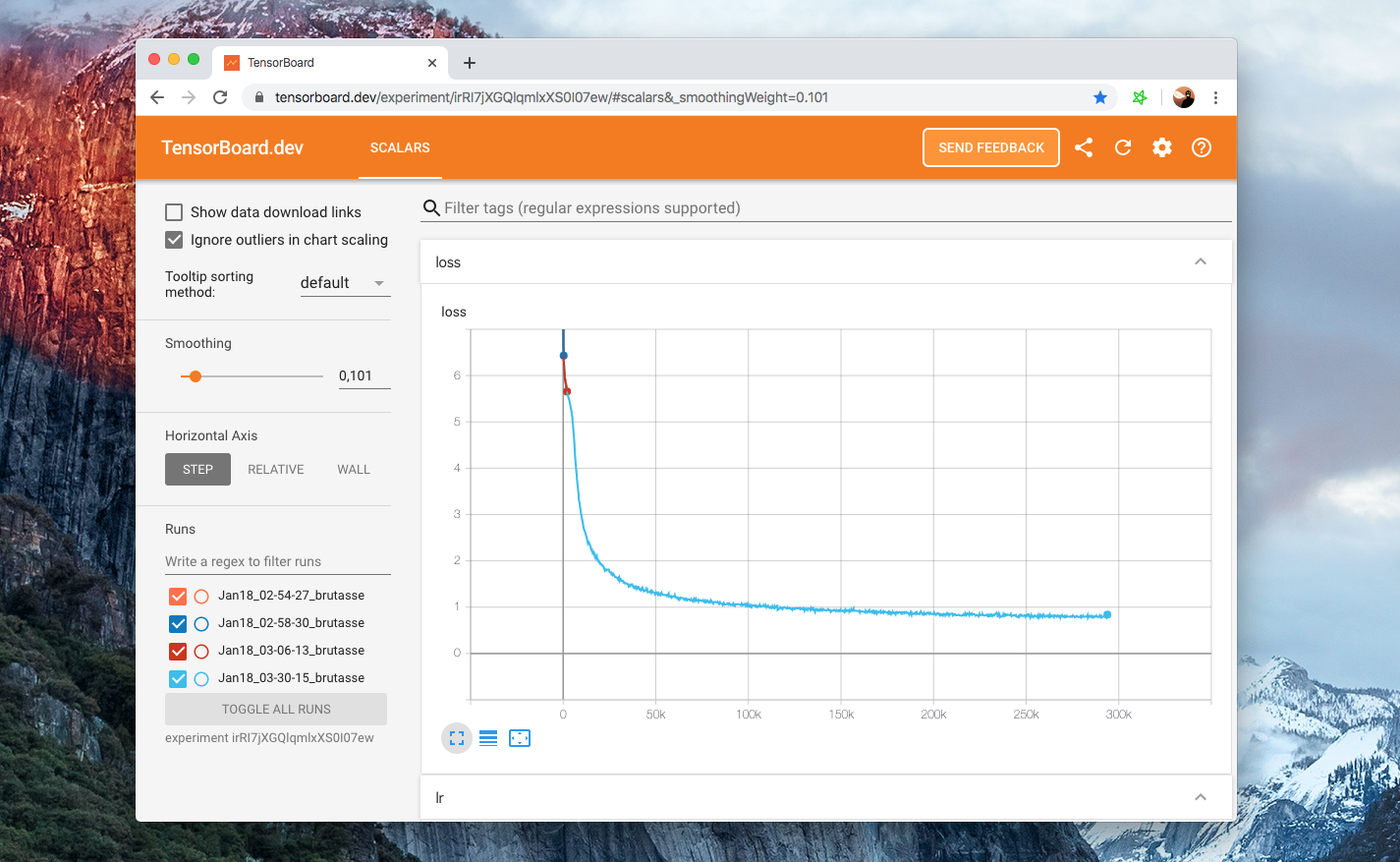

Here you can check our Tensorboard for one particular set of hyper-parameters:

Our example scripts log into the Tensorboard format by default, under runs/. Then to view your board just run tensorboard dev upload --logdir runs. This will set up tensorboard.dev, a Google-managed hosted version that lets you share your ML experiment with anyone.

4. Check that the LM actually trained

Aside from looking at the training and eval losses going down, the easiest way to check whether our language model is learning anything interesting is via the FillMaskPipeline.

Pipelines are simple wrappers around tokenizers and models, and the ‘fill-mask’ one will let you input a sequence containing a masked token (here, `<mask>`) and return a list of the most probable filled sequences, with their probabilities.

    from transformers import pipeline

    fill_mask = pipeline(
        "fill-mask",
        model="./models/EsperBERTo-small",
        tokenizer="./models/EsperBERTo-small"
    )

    result = fill_mask("La suno <mask>.")

Ok, simple syntax/grammar works. Let’s try a slightly more interesting prompt:

    fill_mask("Jen la komenco de bela <mask>.")

“Jen la komenco de bela tago”, indeed!

With more complex prompts, you can probe whether your language model captured more semantic knowledge or even some sort of (statistical) common sense reasoning.

5. Fine-tune your LM on a downstream task

We now can fine-tune our new Esperanto language model on a downstream task of Part-of-speech tagging.

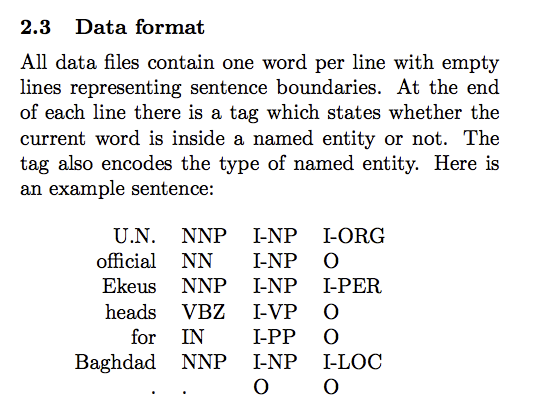

As mentioned before, Esperanto is a highly regular language where word endings typically condition the grammatical part of speech. Using a dataset of annotated Esperanto POS tags formatted in the CoNLL-2003 format (see example below), we can use the run_ner.py script from transformers.

POS tagging is a token classification task just like NER, so we can use the exact same script.
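To give an idea of what such a file looks like, the sketch below parses a tiny sample in this layout: one token and its tag per line, separated by whitespace, with a blank line between sentences. The sample sentences and the tag names are made up for illustration, and `read_conll` is a hypothetical helper rather than the loader used by run_ner.py.

```python
def read_conll(text):
    """Parse CoNLL-style text into a list of (tokens, tags) sentences."""
    sentences, tokens, tags = [], [], []
    for line in text.splitlines():
        line = line.strip()
        # A blank line (or a document marker) closes the current sentence.
        if not line or line.startswith("-DOCSTART-"):
            if tokens:
                sentences.append((tokens, tags))
                tokens, tags = [], []
            continue
        parts = line.split()
        tokens.append(parts[0])   # first column: the token
        tags.append(parts[-1])    # last column: its label
    if tokens:
        sentences.append((tokens, tags))
    return sentences


# Illustrative sample only; the tag set here is an assumption.
sample = """Mi PRON
estas VERB
viro NOUN
. PUNCT

La DET
suno NOUN
brilas VERB
. PUNCT
"""

for tokens, tags in read_conll(sample):
    print(list(zip(tokens, tags)))
```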

Again, here’s the hosted Tensorboard for this fine-tuning. We train for 3 epochs using a batch size of 64 per GPU.

Training and eval losses converge to small residual values as the task is rather easy (the language is regular), but it’s still fun to be able to train it end-to-end 😃.

This time, let’s use a TokenClassificationPipeline:

    from transformers import TokenClassificationPipeline, pipeline

    MODEL_PATH = "./models/EsperBERTo-small-pos/"

    nlp = pipeline(
        "ner",
        model=MODEL_PATH,
        tokenizer=MODEL_PATH,
    )

    nlp("Mi estas viro kej estas tago varma.")

Looks like it worked! 🔥

For a more challenging dataset for NER, @stefan-it recommended that we could train on the silver standard dataset from WikiANN

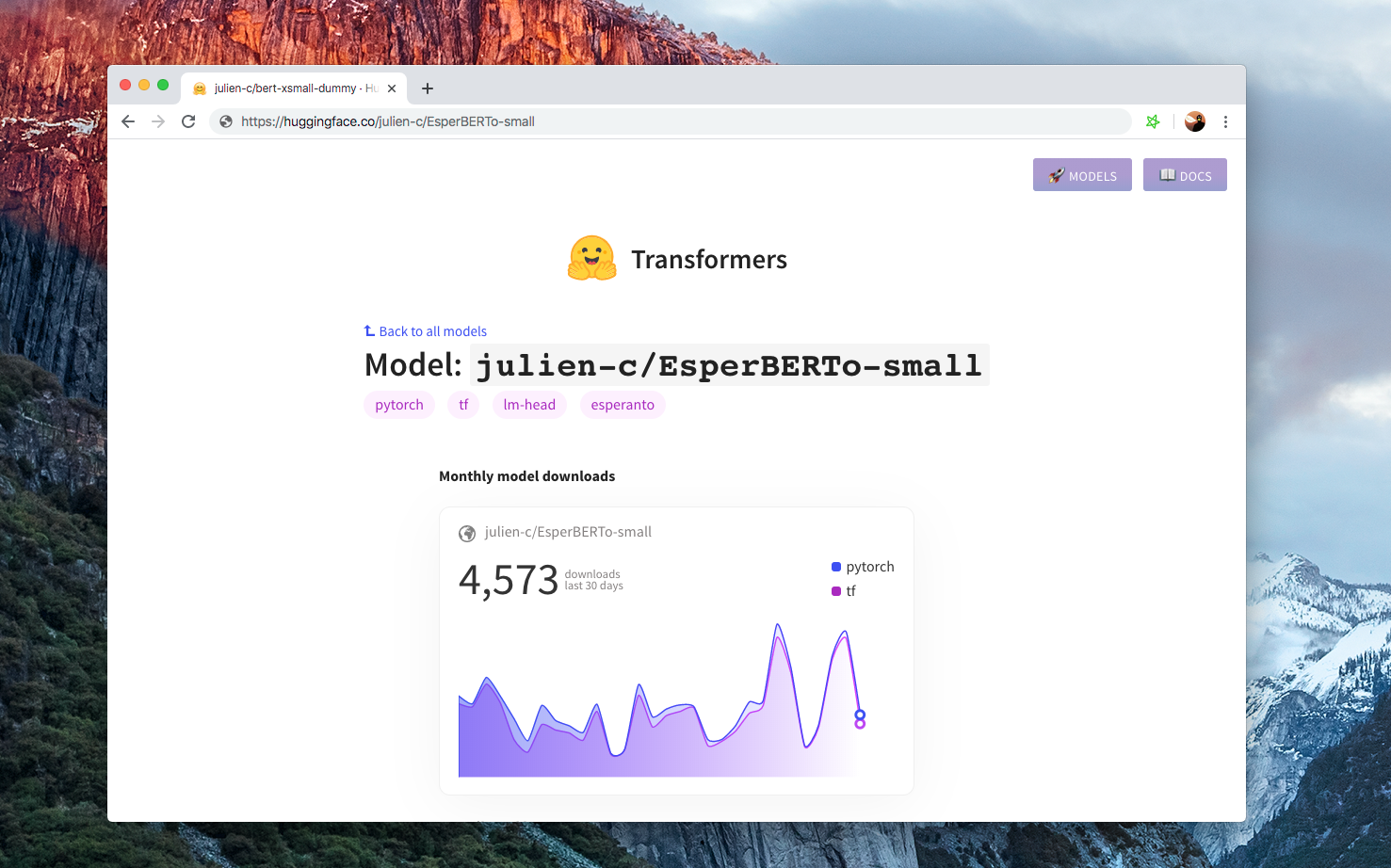

6. Share your model 🎉

Finally, when you have a nice model, please think about sharing it with the community:

- upload your model using the CLI: transformers-cli upload
- write a README.md model card and add it to the repository under model_cards/. Your model card should ideally include:
  - a model description,
  - training params (dataset, preprocessing, hyperparameters),
  - evaluation results,
  - intended uses & limitations
  - whatever else is useful! 🤓

TADA!

➡️ Your model has a page on https://huggingface.co/models and everyone can load it using AutoModel.from_pretrained("username/model_name").

If you want to take a look at models in different languages, check https://huggingface.co/models