A guest blog post by Stas Bekman

This article is an attempt to document how the fairseq wmt19 translation system was ported to transformers.

I was looking for an interesting project to work on, and Sam Shleifer suggested I work on porting a high-quality translator.

I read the short paper Facebook FAIR’s WMT19 News Translation Task Submission, which describes the original system, and decided to give it a try.

Initially, I had no idea how to approach this complex project, and Sam helped me break it down into smaller tasks, which was a great help.

I chose to work with the pre-trained en-ru/ru-en models during porting, as I speak both languages. It would have been much more difficult to work with the de-en/en-de pairs, as I don’t speak German, and being able to evaluate the translation quality by just reading and making sense of the outputs at the advanced stages of the porting process saved me a lot of time.

Also, as I did the initial porting with the en-ru/ru-en models, I was totally unaware that the de-en/en-de models used a merged vocabulary, whereas the former used 2 separate vocabularies of different sizes. So once I had done the more complicated work of supporting 2 separate vocabularies, it was trivial to get the merged vocabulary to work.

Let’s cheat

The first step was to cheat, of course. Why make a big effort when one can make a little one? So I wrote a short notebook that, in a few lines of code, provided a proxy to fairseq and emulated the transformers API.

If nothing else but basic translation was required, this would have been enough. But, of course, we wanted to have the full porting, so after having this small victory, I moved on to much harder things.

Preparations

For the sake of this article let’s assume that we work under ~/porting, and therefore let’s create this directory:

mkdir ~/porting

cd ~/porting

We need to install a few things for this work:

# install fairseq

git clone https://github.com/pytorch/fairseq

cd fairseq

pip install -e .

# install mosesdecoder under fairseq

git clone https://github.com/moses-smt/mosesdecoder

# install fastBPE under fairseq

git clone git@github.com:glample/fastBPE.git

cd fastBPE; g++ -std=c++11 -pthread -O3 fastBPE/main.cc -IfastBPE -o fast; cd -

cd ..

# install transformers

git clone https://github.com/huggingface/transformers/

cd transformers

pip install -e .[dev]

Files

As a quick overview, the following files needed to be created and written:

There are other files that needed to be modified as well; we will talk about those towards the end.

Conversion

An important part of the porting process is to create a script that will take all the available source data provided by the original developer of the model, which includes a checkpoint with pre-trained weights, model and training configuration, dictionaries and tokenizer support files, and convert them into a new set of model files supported by transformers. You will find the final conversion script here: src/transformers/convert_fsmt_original_pytorch_checkpoint_to_pytorch.py

I started this process by copying one of the existing conversion scripts, src/transformers/convert_bart_original_pytorch_checkpoint_to_pytorch.py, gutted most of it out and then gradually added parts to it as I was progressing in the porting process.

During the development I was testing all my code against a local copy of the converted model files, and only at the very end, when everything was ready, I uploaded the files to 🤗 s3 and then continued testing against the online version.

fairseq model and its support files

Let’s first look at what data we get with the fairseq pre-trained model.

We are going to use the convenient torch.hub API, which makes it very easy to deploy models submitted to that hub:

import torch

torch.hub.load('pytorch/fairseq', 'transformer.wmt19.en-ru', checkpoint_file="model4.pt",

tokenizer="moses", bpe="fastbpe")

This code downloads the pre-trained model and its support files. I found this information on the page corresponding to fairseq on the pytorch hub.

To see what’s inside the downloaded files, we first have to hunt down the right folder under ~/.cache.

ls -1 ~/.cache/torch/hub/pytorch_fairseq/

shows:

15bca559d0277eb5c17149cc7e808459c6e307e5dfbb296d0cf1cfe89bb665d7.ded47c1b3054e7b2d78c0b86297f36a170b7d2e7980d8c29003634eb58d973d9

15bca559d0277eb5c17149cc7e808459c6e307e5dfbb296d0cf1cfe89bb665d7.ded47c1b3054e7b2d78c0b86297f36a170b7d2e7980d8c29003634eb58d973d9.json

You may have more than one entry there if you have been using the hub for other models.

Let’s make a symlink so that we can easily refer to that obscure cache folder name down the road:

ln -s /code/data/cache/torch/hub/pytorch_fairseq/15bca559d0277eb5c17149cc7e808459c6e307e5dfbb296d0cf1cfe89bb665d7.ded47c1b3054e7b2d78c0b86297f36a170b7d2e7980d8c29003634eb58d973d9 \
~/porting/pytorch_fairseq_model

Note: the path could be different when you try it yourself, since the hash value of the model could change. You will find the right one in ~/.cache/torch/hub/pytorch_fairseq/

If we look inside that folder:

ls -l ~/porting/pytorch_fairseq_model/

total 13646584

-rw-rw-r-- 1 stas stas 532048 Sep 8 21:29 bpecodes

-rw-rw-r-- 1 stas stas 351706 Sep 8 21:29 dict.en.txt

-rw-rw-r-- 1 stas stas 515506 Sep 8 21:29 dict.ru.txt

-rw-rw-r-- 1 stas stas 3493170533 Sep 8 21:28 model1.pt

-rw-rw-r-- 1 stas stas 3493170532 Sep 8 21:28 model2.pt

-rw-rw-r-- 1 stas stas 3493170374 Sep 8 21:28 model3.pt

-rw-rw-r-- 1 stas stas 3493170386 Sep 8 21:29 model4.pt

we have:

- model*.pt – 4 checkpoints (pytorch state_dict with all the pre-trained weights, and various other things)

- dict.*.txt – source and target dictionaries

- bpecodes – special map file used by the tokenizer

We are going to investigate each of these files in the following sections.

How translation systems work

Here is a very brief introduction to how computers translate text nowadays.

Computers cannot read text; they can only handle numbers. So when working with text we have to map one or more letters into numbers, and hand those to a computer program. When the program completes, it too returns numbers, which we need to convert back into text.

Let’s start with two sentences in Russian and English and assign a unique number to each word:

я люблю следовательно я существую

10 11 12 10 13

I love therefore I am

20 21 22 20 23

The numbers starting with 10 map Russian words to unique numbers. The numbers starting with 20 do the same for English words. If you don’t speak Russian, you can still see that the word я (means ‘I’) repeats twice in the sentence and it gets the same number 10 associated with it. The same goes for I (20), which also repeats twice.

A translation system works in the following stages:

1. [я люблю следовательно я существую] # tokenize sentence into words

2. [10 11 12 10 13] # look up words in the input dictionary and convert to ids

3. [black box] # machine learning system magic

4. [20 21 22 20 23] # look up numbers in the output dictionary and convert to text

5. [I love therefore I am] # detokenize the tokens back into a sentence

If we merge the first two and the last two steps we get 3 stages:

- Encode input: break input text into tokens, create a dictionary (vocab) of these tokens and remap each token into a unique id in that dictionary.

- Generate translation: take input numbers, run them through a pre-trained machine learning model which predicts the best translation, and return output numbers.

- Decode output: take output numbers, look them up in the target language dictionary, convert them back to text, and finally merge the converted tokens into the translated sentence.

The second stage may return one or several possible translations. In the case of the latter, the caller can then choose the most suitable outcome. In this article I will refer to the beam search algorithm, which is one of the ways multiple possible results are searched for. And the size of the beam refers to how many results are returned.

If only one result is requested, the model will choose the one with the highest probability. If multiple results are requested, it will return those results sorted by their probabilities.

Note that this same idea applies to the vast majority of NLP tasks, and not only translation.
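To make the three stages concrete, here is a minimal toy sketch in Python. It reuses the made-up ids from the example above; the FAKE_MODEL lookup table is purely a hypothetical stand-in for the trained model and is not how a real translation model works.

```python
# Toy vocabularies taken from the example above (the ids are illustrative only).
src_vocab = {"я": 10, "люблю": 11, "следовательно": 12, "существую": 13}
tgt_vocab = {"I": 20, "love": 21, "therefore": 22, "am": 23}
tgt_ids_to_tokens = {i: t for t, i in tgt_vocab.items()}

# Hypothetical stand-in for the trained model: a fixed id-to-id lookup.
FAKE_MODEL = {10: 20, 11: 21, 12: 22, 13: 23}


def encode(text):
    """Stage 1: split into tokens and look each one up in the source vocab."""
    return [src_vocab[token] for token in text.split()]


def generate(input_ids):
    """Stage 2: the 'black box'; here just a lookup table, not a real model."""
    return [FAKE_MODEL[i] for i in input_ids]


def decode(output_ids):
    """Stage 3: map ids back to tokens and join them into a sentence."""
    return " ".join(tgt_ids_to_tokens[i] for i in output_ids)


input_ids = encode("я люблю следовательно я существую")
output_ids = generate(input_ids)
print(input_ids)           # [10, 11, 12, 10, 13]
print(output_ids)          # [20, 21, 22, 20, 23]
print(decode(output_ids))  # I love therefore I am
```

In the real system each of these three functions is far more involved, but the data flow (text to ids, ids to ids, ids to text) is the same.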

Tokenization

Early systems tokenized sentences into words and punctuation marks. But since many languages have hundreds of thousands of words, it is very taxing to work with huge vocabularies, as it dramatically increases the compute resource requirements and the length of time to complete the task.

As of 2020 there are quite a few different tokenizing methods, but most of the recent ones are based on sub-word tokenization – that is, instead of breaking input text down into words, these modern tokenizers break the input text down into word segments and letters, using some kind of training to obtain the most optimal tokenization.

Let’s see how this approach helps to reduce memory and computation requirements. If we have an input vocabulary of 6 common words: go, going, speak, speaking, sleep, sleeping – with word-level tokenization we end up with 6 tokens. However, if we break these down into: go, go-ing, speak, speak-ing, etc., then we have only 4 tokens in our vocabulary: go, speak, sleep, ing. This simple change made a 33% improvement! Except, the sub-word tokenizers don’t use grammar rules, but they are trained on massive text inputs to find such splits. In this example I used a simple grammar rule as it’s easy to understand.

Another important advantage of this approach shows when dealing with input text words that aren’t in our vocabulary. For example, let’s say our system encounters the word grokking (*), which can’t be found in its vocabulary. If we split it into ‘grokk’-‘ing’, then the machine learning model might not know what to do with the first part of the word, but it gets a useful insight that ‘ing’ indicates a continuous tense, so it’ll be able to produce a better translation. In such a situation the tokenizer will split the unknown segments into segments it knows, in the worst case reducing them to individual letters.

- footnote: to grok was coined in 1961 by Robert A. Heinlein in “Stranger in a Strange Land”: to understand (something) intuitively or by empathy.

There are many other nuances to why the modern tokenization approach is much superior to simple word tokenization, which won’t be covered in the scope of this article. Most of these systems are very complex in how they do the tokenization, as compared to the simple example of splitting ing endings that was just demonstrated, but the principle is similar.
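The 6-to-4 vocabulary reduction and the fallback to single letters can be sketched in a few lines of Python. The split_ing and segment helpers are hypothetical names invented for this illustration; a real sub-word tokenizer learns its splits from data instead of using a hard-coded rule or a greedy longest-match scan.

```python
words = ["go", "going", "speak", "speaking", "sleep", "sleeping"]


def split_ing(word):
    """Toy grammar rule: peel off a trailing 'ing' (real tokenizers learn splits)."""
    if word.endswith("ing") and len(word) > 3:
        return [word[:-3], "ing"]
    return [word]


word_vocab = set(words)
subword_vocab = {piece for w in words for piece in split_ing(w)}
print(len(word_vocab), sorted(subword_vocab))
# 6 ['go', 'ing', 'sleep', 'speak']


def segment(word, vocab):
    """Greedy longest-match split; unknown stretches fall back to single letters."""
    pieces, i = [], 0
    while i < len(word):
        for j in range(len(word), i, -1):
            # take the longest known piece, or a single letter as a last resort
            if word[i:j] in vocab or j == i + 1:
                pieces.append(word[i:j])
                i = j
                break
    return pieces


print(segment("grokking", subword_vocab))
# ['g', 'r', 'o', 'k', 'k', 'ing']
```

With this tiny vocabulary the unknown stem is reduced all the way to letters, while the known ing ending survives as one token.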

Tokenizer porting

The first step was to port the encoder part of the tokenizer, where text is converted to ids. The decoder part won’t be needed until the very end.

fairseq’s tokenizer workings

Let’s understand how fairseq’s tokenizer works.

fairseq (*) uses the Byte Pair Encoding (BPE) algorithm for tokenization.

Let’s see what BPE does:

import torch

sentence = "Machine Learning is great"

checkpoint_file="model4.pt"

model = torch.hub.load('pytorch/fairseq', 'transformer.wmt19.en-ru', checkpoint_file=checkpoint_file, tokenizer="moses", bpe="fastbpe")

# encode step-by-step

tokens = model.tokenize(sentence)

print("tokenize ", tokens)

bpe = model.apply_bpe(tokens)

print("apply_bpe: ", bpe)

bin = model.binarize(bpe)

print("binarize: ", len(bin), bin)

# compare to model.encode - should give us the same output

expected = model.encode(sentence)

print("encode: ", len(expected), expected)

gives us:

('tokenize ', 'Machine Learning is great')

('apply_bpe: ', 'Mach@@ ine Lear@@ ning is great')

('binarize: ', 7, tensor([10217, 1419, 3, 2515, 21, 1054, 2]))

('encode: ', 7, tensor([10217, 1419, 3, 2515, 21, 1054, 2]))

You can see that model.encode does tokenize+apply_bpe+binarize – as we get the same output.

The steps were:

- tokenize: normally it’d escape apostrophes and do other pre-processing; in this example it just returned the input sentence without any changes

- apply_bpe: BPE splits the input into words and sub-words according to its bpecodes file supplied by the tokenizer – we get 6 BPE chunks

- binarize: this simply remaps the BPE chunks from the previous step into their corresponding ids in the vocabulary (which is also downloaded with the model)

You can refer to this notebook to see more details.

This is a good time to look inside the bpecodes file. Here is the top of the file:

$ head -15 ~/porting/pytorch_fairseq_model/bpecodes

e n</w> 1423551864

e r 1300703664

e r</w> 1142368899

i n 1130674201

c h 933581741

a n 845658658

t h 811639783

e n 780050874

u n 661783167

s t 592856434

e i 579569900

a r 494774817

a l 444331573

o r 439176406

th e</w> 432025210

[...]

The top entries of this file include very frequent short 1-letter sequences. As we will see in a moment, the bottom includes the most common multi-letter sub-words and even full long words.

A special token </w> indicates the end of the word. So in several lines quoted above we find:

e n</w> 1423551864

e r</w> 1142368899

th e</w> 432025210

If the second column doesn’t include </w>, it means that this segment is found in the middle of the word and not at the end of it.

The last column declares the number of times this BPE code has been encountered while being trained. The bpecodes file is sorted by this column – so the most common BPE codes are on top.

By looking at the counts we now know that when this tokenizer was trained it encountered 1,423,551,864 words ending in en, 1,142,368,899 words ending in er and 432,025,210 words ending in the. For the latter it most likely means the actual word the, but it would also include words like lathe, loathe, tithe, etc.

These huge numbers also indicate to us that this tokenizer was trained on an enormous amount of text!

If we look at the bottom of the same file:

$ tail -10 ~/porting/pytorch_fairseq_model/bpecodes

4 x 109019

F ische 109018

sal aries 109012

e kt 108978

ver gewal 108978

Sten cils 108977

Freiwilli ge 108969

doub les 108965

po ckets 108953

Gö tz 108943

we see complex combinations of sub-words which are still pretty frequent, e.g. sal aries occurring 109,012 times! So it got its own dedicated entry in the bpecodes map file.

How does apply_bpe do its work? By looking up the various combinations of letters in the bpecodes map file, and when it finds the longest fitting entry, it uses that.

Going back to our example, we saw that it split Machine into: Mach@@ + ine – let’s check:

$ grep -i ^mach ~/porting/pytorch_fairseq_model/bpecodes

mach ine 463985

Mach t 376252

Mach ines 374223

mach ines 214050

Mach th 119438

You can see that it has mach ine. We don’t see Mach ine in there – so it must be handling lower-cased lookups when the normal case is not matching.

Now let’s check: Lear@@ + ning

$ grep -i ^lear ~/porting/pytorch_fairseq_model/bpecodes

lear n 675290

lear ned 505087

lear ning 417623

We find lear ning is there (again the case is not the same).

Thinking more about it, the case probably doesn’t matter for tokenization, as long as there is a unique entry for Mach/Lear and mach/lear in the dictionary, where it’s very critical to have each case covered.

Hopefully, you can now see how this works.
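For readers who prefer code, here is a minimal sketch of how a ranked merge table can be applied to a single word. It follows the approach common to pure-Python BPE implementations (repeatedly apply the highest-priority merge that is present), which is an assumption about the mechanics rather than a description of fastBPE’s internals. The TOY_MERGES list is made up for the illustration and is not taken from the real bpecodes file, and </w> is used here as the end-of-word marker.

```python
# Made-up merge table, highest priority first (NOT from the real bpecodes file).
TOY_MERGES = [
    ("l", "e"), ("le", "a"), ("lea", "r"),
    ("n", "g</w>"), ("i", "ng</w>"), ("n", "ing</w>"),
]
RANKS = {pair: rank for rank, pair in enumerate(TOY_MERGES)}


def apply_bpe(word, ranks):
    """Split a non-empty word into sub-words by applying merges in rank order."""
    # start from single letters, tagging the last one as the end of the word
    symbols = list(word[:-1]) + [word[-1] + "</w>"]
    while len(symbols) > 1:
        pairs = set(zip(symbols, symbols[1:]))
        best = min(pairs, key=lambda p: ranks.get(p, float("inf")))
        if best not in ranks:
            break  # no known merge applies any more
        merged, i = [], 0
        while i < len(symbols):
            if i < len(symbols) - 1 and (symbols[i], symbols[i + 1]) == best:
                merged.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                merged.append(symbols[i])
                i += 1
        symbols = merged
    return symbols


print(apply_bpe("learning", RANKS))  # ['lear', 'ning</w>']
```

Since no entry merges lear with ning</w> in this toy table, the word stays split in two pieces, which mirrors the Lear@@ + ning split seen earlier.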

One confusing thing is that, if you remember, the apply_bpe output was:

('apply_bpe: ', 6, ['Mach@@', 'ine', 'Lear@@', 'ning', 'is', 'great'])

Instead of marking endings of the words with </w>, it leaves those as is, but, instead, marks words that were not the endings with @@. This is probably so because the fastBPE implementation is used by fairseq and that’s how it does things. I had to change this to fit the transformers implementation, which doesn’t use fastBPE.

One last thing to check is the remapping of the BPE codes to vocabulary ids. To repeat, we had:

('apply_bpe: ', 'Mach@@ ine Lear@@ ning is great')

('binarize: ', 7, tensor([10217, 1419, 3, 2515, 21, 1054, 2]))

2 – the last token id is an eos (end of stream) token. It’s used to indicate to the model the end of input.

And then Mach@@ gets remapped to 10217, and ine to 1419.

Let’s check that the dictionary file is in agreement:

$ grep ^Mach@@ ~/porting/pytorch_fairseq_model/dict.en.txt

Mach@@ 6410

$ grep "^ine " ~/porting/pytorch_fairseq_model/dict.en.txt

ine 88376

Wait a second – those aren’t the ids that we got after binarize, which should be 10217 and 1419 correspondingly.

It took some investigating to find out that the vocab file ids aren’t the ids used by the model and that internally it remaps them to new ids once the vocab file is loaded. Luckily, I didn’t need to figure out how exactly it was done. Instead, I just used fairseq.data.dictionary.Dictionary.load to load the dictionary (*), which performed all the re-mappings, – and I then saved the final dictionary. I found out about that Dictionary class by stepping through the fairseq code with a debugger.

- footnote: the more I work on porting models and datasets, the more I realize that putting the original code to work for me, rather than trying to replicate it, is a huge time saver, and most importantly that code has already been tested – it’s too easy to miss something and down the road discover big problems! After all, at the end, none of this conversion code will matter, since only the data it generated will be used by transformers and its end users.
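For the curious, here is a minimal sketch of one way such a remapping could work. It rests on assumptions that are not confirmed by the text above: that four special symbols are reserved first (in the order <s>, <pad>, </s>, <unk>), that every following id is simply the entry’s position in the file, and that the second column of the file is a frequency count rather than an id. The load_ids helper and the toy file contents are hypothetical; the real logic lives inside fairseq’s Dictionary class.

```python
# Assumed reserved symbols and their order (an assumption, see the note above).
SPECIALS = ["<s>", "<pad>", "</s>", "<unk>"]


def load_ids(lines):
    """Assign ids by position: specials first, then file entries in order."""
    indices = {token: i for i, token in enumerate(SPECIALS)}
    for line in lines:
        token, _count = line.rsplit(" ", 1)  # second column is ignored here
        indices.setdefault(token, len(indices))
    return indices


# Made-up file contents; only the "token number" line layout matches the real file.
toy_dict_file = ["the 1000000", "Mach@@ 6410", "ine 88376"]
ids = load_ids(toy_dict_file)
print(ids["</s>"], ids["Mach@@"], ids["ine"])  # 2 5 6
```

Under these assumptions the number stored next to a token never shows up as its id, which would explain the mismatch seen with grep.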

Here is the relevant part of the conversion script:

import json
import os
import re

from fairseq.data.dictionary import Dictionary

def rewrite_dict_keys(d):
    # (1) remove word breaking symbol
    # (2) add word ending symbol where the word is not broken up,
    # e.g.: d = {'le@@': 5, 'tt@@': 6, 'er': 7} => {'le': 5, 'tt': 6, 'er</w>': 7}
    d2 = dict((re.sub(r"@@$", "", k), v) if k.endswith("@@") else (re.sub(r"$", "</w>", k), v) for k, v in d.items())
    keep_keys = "<s> <pad> </s> <unk>".split()
    # restore the special tokens
    for k in keep_keys:
        del d2[f"{k}</w>"]
        d2[k] = d[k]  # restore
    return d2

src_dict_file = os.path.join(fsmt_folder_path, f"dict.{src_lang}.txt")
src_dict = Dictionary.load(src_dict_file)
src_vocab = rewrite_dict_keys(src_dict.indices)
src_vocab_size = len(src_vocab)
src_vocab_file = os.path.join(pytorch_dump_folder_path, "vocab-src.json")
print(f"Generating {src_vocab_file}")
with open(src_vocab_file, "w", encoding="utf-8") as f:
    f.write(json.dumps(src_vocab, ensure_ascii=False, indent=json_indent))

# we did the same for the target dict - omitted quoting it here
# and we also had to save `bpecodes`, it's called `merges.txt` in the transformers land

After running the conversion script, let’s check the converted dictionary:

$ grep '"Mach"' /code/huggingface/transformers-fair-wmt/data/wmt19-en-ru/vocab-src.json

"Mach": 10217,

$ grep '"ine</w>":' /code/huggingface/transformers-fair-wmt/data/wmt19-en-ru/vocab-src.json

"ine</w>": 1419,

We have the correct ids in the transformers version of the vocab file.

As you can see, I also had to re-write the vocabularies to match the transformers BPE implementation. We have to change:

['Mach@@', 'ine', 'Lear@@', 'ning', 'is', 'great']

to:

['Mach', 'ine</w>', 'Lear', 'ning</w>', 'is</w>', 'great</w>']

Instead of marking chunks that are segments of a word, with the exception of the last segment, we mark segments or words that are the final segment. One can easily go from one style of encoding to another and back.
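Here is a small sketch of that round trip between the two marking styles. The function names are invented for this illustration, and </w> stands for the end-of-word marker; special tokens are ignored for simplicity.

```python
def continuation_to_end_marker(tokens):
    """'@@' on non-final pieces  ->  '</w>' on final pieces."""
    return [t[:-2] if t.endswith("@@") else t + "</w>" for t in tokens]


def end_marker_to_continuation(tokens):
    """'</w>' on final pieces  ->  '@@' on non-final pieces."""
    return [t[:-4] if t.endswith("</w>") else t + "@@" for t in tokens]


fairseq_style = ["Mach@@", "ine", "Lear@@", "ning", "is", "great"]
converted = continuation_to_end_marker(fairseq_style)
print(converted)
# ['Mach', 'ine</w>', 'Lear', 'ning</w>', 'is</w>', 'great</w>']
print(end_marker_to_continuation(converted) == fairseq_style)  # True
```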

This successfully completed the porting of the first part of the model files. You can see the final version of the code here.

If you’re curious to look deeper, there are more tinkering bits in this notebook.

Porting tokenizer’s encoder to transformers

transformers cannot rely on fastBPE since the latter requires a C-compiler, but luckily someone already implemented a python version of the same in tokenization_xlm.py.

So I just copied it to src/transformers/tokenization_fsmt.py and renamed the class names:

cp tokenization_xlm.py tokenization_fsmt.py

perl -pi -e 's|XLM|FSMT|ig; s|xlm|fsmt|g;' tokenization_fsmt.py

and with very few changes I had a working encoder part of the tokenizer. There was a lot of code that didn’t apply to the languages I needed to support, so I removed that code.

Since I needed 2 different vocabularies, instead of one, here in the tokenizer and everywhere else I had to change the code to support both. So for example I had to override the super-class’ methods:

def get_vocab(self) -> Dict[str, int]:
    return self.get_src_vocab()

@property
def vocab_size(self) -> int:
    return self.src_vocab_size

Since fairseq didn’t use bos (beginning of stream) tokens, I also had to change the code to not include those (*):

- return bos + token_ids_0 + sep

- return bos + token_ids_0 + sep + token_ids_1 + sep

+ return token_ids_0 + sep

+ return token_ids_0 + sep + token_ids_1 + sep

- footnote: this is the output of diff(1), which shows the difference between two chunks of code – lines starting with - show what was removed, and with + what was added.
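As a standalone illustration of the changed lines, here is a minimal sketch. The add_separators function is a hypothetical name for this example; it assumes the separator is the eos id 2 that closes the binarize output shown earlier.

```python
EOS_ID = 2  # assumed separator id: the id that closes the binarize output above


def add_separators(token_ids_0, token_ids_1=None, sep_id=EOS_ID):
    """Append the separator after each sequence, with no bos id in front."""
    sep = [sep_id]
    if token_ids_1 is None:
        return token_ids_0 + sep
    return token_ids_0 + sep + token_ids_1 + sep


print(add_separators([10217, 1419, 3, 2515, 21, 1054]))
# [10217, 1419, 3, 2515, 21, 1054, 2]
```

The result matches the 7 ids that fairseq produced for the example sentence.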

fairseq was also escaping characters and performing an aggressive dash splitting, so I also had to change:

- [...].tokenize(text, return_str=False, escape=False)

+ [...].tokenize(text, return_str=False, escape=True, aggressive_dash_splits=True)

If you’re following along, and would like to see all the changes I did to the original tokenization_xlm.py, you can do:

cp tokenization_xlm.py tokenization_orig.py

perl -pi -e 's|XLM|FSMT|g; s|xlm|fsmt|g;' tokenization_orig.py

diff -u tokenization_orig.py tokenization_fsmt.py | less

Just make sure you’re checking out the repository around the time fsmt was released, since the 2 files could have diverged since then.

The final stage was to run through a bunch of inputs and to ensure that the ported tokenizer produced the same ids as the original. You can see this is done in this notebook, which I was running repeatedly while trying to figure out how to make the outputs match.

This is how most of the porting process went: I’d take a small feature, run it the fairseq-way, get the outputs, do the same with the transformers code, try to make the outputs match – fiddle with the code until it did, then try a different kind of input to make sure it produced the same outputs, and so on, until all inputs produced outputs that matched.
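This compare-and-fix loop can be captured in a tiny helper. The sketch below is generic and hypothetical: first_mismatch is a name invented here, and the two encode functions are deliberately simple stand-ins; in the real workflow they would wrap the fairseq and the transformers tokenizers.

```python
def first_mismatch(reference_fn, ported_fn, inputs):
    """Return (input, expected, actual) for the first differing output, or None."""
    for text in inputs:
        expected, actual = reference_fn(text), ported_fn(text)
        if expected != actual:
            return text, expected, actual
    return None


# Hypothetical stand-ins for the original and the ported encoder.
def reference_encode(text):
    return [len(word) for word in text.split()]


def ported_encode(text):
    return [len(word) for word in text.split(" ")]  # subtle bug: keeps empty words


inputs = ["Machine Learning is great", "two  spaces"]
print(first_mismatch(reference_encode, ported_encode, inputs))
# ('two  spaces', [3, 6], [3, 0, 6])
```

Reporting only the first divergence keeps the feedback loop short: fix it, re-run, and move on to the next one.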

Porting the core translation functionality

Having had a relatively quick success with porting the tokenizer (obviously, thanks to most of the code being there already), the next stage was much more complex. This is the generate() function, which takes input ids, runs them through the model and returns output ids.

I had to break it down into multiple sub-tasks. I had to

- port the model weights.

- make generate() work for a single beam (i.e. return just one result).

- and then multiple beams (i.e. return multiple results).

I first researched which of the existing architectures were the closest to my needs. It was BART that fit the closest, so I went ahead and did:

cp modeling_bart.py modeling_fsmt.py

perl -pi -e 's|Bart|FSMT|ig; s|bart|fsmt|g;' modeling_fsmt.py

This was my starting point that I needed to tweak to work with the model weights provided by fairseq.

Porting weights and configuration

The first thing I did was to look at what was inside the publicly shared checkpoint. This notebook shows what I did there.

I discovered that there were 4 checkpoints in there. I had no idea what to do about it, so I started with the simpler job of using just the first checkpoint. Later I discovered that fairseq used all 4 checkpoints in an ensemble to get the best predictions, and that transformers currently doesn’t support that feature. When the porting was completed and I was able to measure the performance scores, I found that the model4.pt checkpoint provided the best score. But during the porting, performance didn’t matter much. Since I was using only one checkpoint, it was crucial that when I was comparing outputs, I had fairseq also use just one and the same checkpoint.

To accomplish that I used a slightly different fairseq API:

import fairseq

from fairseq import hub_utils

#checkpoint_file="model1.pt:model2.pt:model3.pt:model4.pt"

checkpoint_file="model1.pt"

model_name_or_path="transformer.wmt19.ru-en"

data_name_or_path="."

cls = fairseq.model_parallel.models.transformer.ModelParallelTransformerModel

models = cls.hub_models()

kwargs = {'bpe': 'fastbpe', 'tokenizer': 'moses'}

ru2en = hub_utils.from_pretrained(
    model_name_or_path,
    checkpoint_file,
    data_name_or_path,
    archive_map=models,
    **kwargs
)

First I looked at the model:

print(ru2en["models"][0])

TransformerModel(
  (encoder): TransformerEncoder(
    (dropout_module): FairseqDropout()
    (embed_tokens): Embedding(31232, 1024, padding_idx=1)
    (embed_positions): SinusoidalPositionalEmbedding()
    (layers): ModuleList(
      (0): TransformerEncoderLayer(
        (self_attn): MultiheadAttention(
          (dropout_module): FairseqDropout()
          (k_proj): Linear(in_features=1024, out_features=1024, bias=True)
          (v_proj): Linear(in_features=1024, out_features=1024, bias=True)
          (q_proj): Linear(in_features=1024, out_features=1024, bias=True)
          (out_proj): Linear(in_features=1024, out_features=1024, bias=True)
        )
        [...]
# the full output is in the notebook

which looked very similar to BART’s architecture, with some slight differences in a few layers – some were added, others removed. So this was great news, as I didn’t have to re-invent the wheel, but only to tweak a well-working design.

Note that in the code sample above I’m not using torch.load() to load state_dict. This is what I initially did, and the result was most puzzling – I was missing the self_attn.(k|q|v)_proj weights and instead had a single self_attn.in_proj. When I tried loading the model using the fairseq API, it fixed things up – apparently that model was old and was using an old architecture that had one set of weights for k/q/v, whereas the newer architecture has them separate. When fairseq loads this old model, it rewrites the weights to match the modern architecture.
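To illustrate what such a rewrite could look like, here is a minimal sketch in plain Python, with nested lists standing in for tensors. The key names (in_proj_weight, q_proj.weight and so on) and the q, k, v ordering of the three blocks are assumptions made for the illustration, and both helper functions are hypothetical; the actual upgrade logic lives inside fairseq.

```python
def split_in_proj(rows):
    """Split a fused projection matrix (a list of rows) into q, k, v thirds."""
    if len(rows) % 3 != 0:
        raise ValueError("expected the number of rows to be a multiple of 3")
    dim = len(rows) // 3
    return rows[:dim], rows[dim:2 * dim], rows[2 * dim:]


def upgrade_attention_weights(state_dict, prefix="self_attn."):
    """Return a copy where the fused entry is replaced by separate q/k/v entries."""
    upgraded = dict(state_dict)
    fused = upgraded.pop(prefix + "in_proj_weight", None)  # assumed key name
    if fused is not None:
        q, k, v = split_in_proj(fused)  # assumed q, k, v block order
        upgraded[prefix + "q_proj.weight"] = q
        upgraded[prefix + "k_proj.weight"] = k
        upgraded[prefix + "v_proj.weight"] = v
    return upgraded


# Toy 6x2 "matrix" standing in for a (3*dim, dim) tensor with dim=2.
old = {"self_attn.in_proj_weight": [[1, 1], [2, 2], [3, 3], [4, 4], [5, 5], [6, 6]]}
new = upgrade_attention_weights(old)
print(sorted(new))
# ['self_attn.k_proj.weight', 'self_attn.q_proj.weight', 'self_attn.v_proj.weight']
print(new["self_attn.k_proj.weight"])  # [[3, 3], [4, 4]]
```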

I also used this notebook to compare the state_dicts visually. In that notebook you will also see that fairseq fetches 2.2GB worth of data in last_optimizer_state, which we can safely ignore, and have a 3 times leaner final model size.

In the conversion script I also had to remove some state_dict keys that I wasn’t going to use, e.g. model.encoder.version, model.model and a few others.
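Dropping unwanted entries is straightforward. The sketch below is a hypothetical helper, not the code from the conversion script: it lists only the two keys named above (the real list is longer) and uses placeholder strings instead of tensors.

```python
from collections import OrderedDict

# Only the keys named in the text; the real script removes a few more.
IGNORE_KEYS = ["model.encoder.version", "model.model"]


def clean_state_dict(state_dict, ignore_keys=IGNORE_KEYS):
    """Return a copy of state_dict without the keys we do not need."""
    cleaned = OrderedDict(state_dict)
    for key in ignore_keys:
        cleaned.pop(key, None)  # silently skip keys that are not present
    return cleaned


toy_state_dict = OrderedDict(
    [
        ("model.encoder.version", "placeholder"),
        ("model.encoder.embed_tokens.weight", "placeholder"),
        ("model.model", "placeholder"),
    ]
)
print(list(clean_state_dict(toy_state_dict)))
# ['model.encoder.embed_tokens.weight']
```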

Next we look at the configuration args:

args = dict(vars(ru2en["args"]))

pprint(args)

'activation_dropout': 0.0,

'activation_fn': 'relu',

'adam_betas': '(0.9, 0.98)',

'adam_eps': 1e-08,

'adaptive_input': False,

'adaptive_softmax_cutoff': None,

'adaptive_softmax_dropout': 0,

'arch': 'transformer_wmt_en_de_big',

'attention_dropout': 0.1,

'bpe': 'fastbpe',

[... full output is in the notebook ...]

OK, we will copy those to configure the model. I had to rename some of the argument names, wherever transformers used different names for the corresponding configuration setting. So the re-mapping of configuration looks as follows:

model_conf = {
    "architectures": ["FSMTForConditionalGeneration"],
    "model_type": "fsmt",
    "activation_dropout": args["activation_dropout"],
    "activation_function": "relu",
    "attention_dropout": args["attention_dropout"],
    "d_model": args["decoder_embed_dim"],
    "dropout": args["dropout"],
    "init_std": 0.02,
    "max_position_embeddings": args["max_source_positions"],
    "num_hidden_layers": args["encoder_layers"],
    "src_vocab_size": src_vocab_size,
    "tgt_vocab_size": tgt_vocab_size,
    "langs": [src_lang, tgt_lang],
    [...]
    "bos_token_id": 0,
    "pad_token_id": 1,
    "eos_token_id": 2,
    "is_encoder_decoder": True,
    "scale_embedding": not args["no_scale_embedding"],
    "tie_word_embeddings": args["share_all_embeddings"],
}

All that remains is to save the configuration into config.json and create a new state_dict dump into pytorch.dump:

print(f"Generating {fsmt_tokenizer_config_file}")
with open(fsmt_tokenizer_config_file, "w", encoding="utf-8") as f:
    f.write(json.dumps(tokenizer_conf, ensure_ascii=False, indent=json_indent))

[...]

print(f"Generating {pytorch_weights_dump_path}")
torch.save(model_state_dict, pytorch_weights_dump_path)

We have the configuration and the model’s state_dict ported – yay!

You will find the final conversion code here.

Porting the architecture code

Now that we have the model weights and the model configuration ported, we just need to adjust the code copied from modeling_bart.py to match fairseq’s functionality.

The first step was to take a sentence, encode it and then feed it to the generate function – for fairseq and for transformers.

After a few very failing attempts to get somewhere (*) – I quickly realized that with the current level of complexity, using print as a debugging method would get me nowhere, and neither would the basic pdb debugger. In order to be efficient and to be able to watch multiple variables and have watches that are code-evaluations, I needed a serious visual debugger. I spent a day trying all kinds of python debuggers, and only when I tried pycharm did I realize that it was the tool that I needed. It was my first time using pycharm, but I quickly figured out how to use it, as it was quite intuitive.

- footnote: the model was generating ‘nononono’ in Russian – that was fair and hilarious!

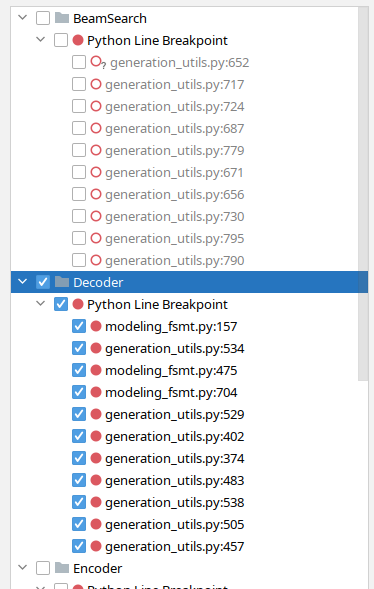

Over time I found a great feature in pycharm that allowed me to group breakpoints by functionality, so that I could turn whole groups on and off depending on what I was debugging. For example, here I have beam-search related break-points off and decoder ones on:

Now that I have used this debugger to port FSMT, I know that it would have taken me many times longer to use pdb to do the same – I may have even given up.

I started with 2 scripts:

(without the decode part first)

running both side by side, stepping through with the debugger on each side and comparing values of relevant variables – until I found the first divergence. I then studied the code, made adjustments inside modeling_fsmt.py, restarted the debugger, quickly jumped to the point of divergence and re-checked the outputs. This cycle was repeated multiple times until the outputs matched.

The first thing I had to change was to remove a few layers that weren’t used by fairseq and then add some new layers it was using instead. The rest was primarily figuring out when to switch to src_vocab_size and when to tgt_vocab_size – since in the core modules it’s just vocab_size, which didn’t account for a possible model that has 2 dictionaries. Finally, I discovered that a few hyperparameter configurations weren’t the same, and so those were changed too.

I first did this process for the simpler no-beam search, and once the outputs were 100% matching I repeated it with the more complicated beam search. Here, for example, I discovered that fairseq was using the equivalent of early_stopping=True, whereas transformers has it as False by default. When early stopping is enabled, the search stops looking for new candidates as soon as there are as many candidates as the beam size, whereas when it’s disabled, the algorithm stops searching only when it can’t find higher probability candidates than what it already has. The fairseq paper mentions that a huge beam size of 50 was used, which compensates for using early stopping.
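To give a feel for beam search with early stopping, here is a toy sketch. It is not the fairseq or transformers implementation: the NEXT_TOKEN_PROBS table is a hypothetical “model” whose predictions depend only on the last token, and the stopping rule is simply “quit once num_beams hypotheses have finished”.

```python
import math

EOS = "</s>"

# Hypothetical toy "model": next-token probabilities depend only on the last token.
NEXT_TOKEN_PROBS = {
    "<s>": {"I": 0.6, "we": 0.4},
    "I": {"love": 0.7, "am": 0.3},
    "we": {"love": 0.6, "am": 0.4},
    "love": {EOS: 1.0},
    "am": {EOS: 1.0},
}


def beam_search(num_beams, max_steps=10):
    """Return up to num_beams finished (log_prob, tokens) pairs, best first."""
    beams = [(0.0, ["<s>"])]
    finished = []
    for _ in range(max_steps):
        # expand every live hypothesis by every possible next token
        candidates = [
            (score + math.log(prob), tokens + [token])
            for score, tokens in beams
            for token, prob in NEXT_TOKEN_PROBS[tokens[-1]].items()
        ]
        candidates.sort(key=lambda c: c[0], reverse=True)
        beams = []
        for candidate in candidates[:num_beams]:  # keep only the best num_beams
            (finished if candidate[1][-1] == EOS else beams).append(candidate)
        # early stopping: quit once we hold num_beams finished hypotheses
        if len(finished) >= num_beams or not beams:
            break
    finished.sort(key=lambda c: c[0], reverse=True)
    return finished[:num_beams]


for log_prob, tokens in beam_search(num_beams=2):
    print(round(math.exp(log_prob), 2), " ".join(tokens[1:-1]))
# 0.42 I love
# 0.24 we love
```

With num_beams=1 the same function degenerates into greedy search and returns only the single most likely sequence.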

Tokenizer decoder porting

Once I had the ported generate function produce pretty similar results to fairseq’s generate, I next needed to complete the last stage of decoding the outputs into human readable text. This allowed me to use my eyes for a quick comparison and to judge the quality of translation, something I couldn’t do with output ids.

Similar to the encoding process, this one was done in reverse.

The steps were:

- convert output ids into text strings

- remove BPE encodings

- detokenize – handle escaped characters, etc.

After doing some more debugging here, I had to change the way BPE was handled from the original approach in tokenization_xlm.py and also run the outputs through the moses detokenizer.

def convert_tokens_to_string(self, tokens):

""" Converts a sequence of tokens (string) in a single string. """

- out_string = "".join(tokens).replace("</w>", " ").strip()

- return out_string

+ # remove BPE

+ tokens = [t.replace(" ", "").replace("</w>", " ") for t in tokens]

+ tokens = "".join(tokens).split()

+ # detokenize

+ text = self.moses_detokenize(tokens, self.tgt_lang)

+ return text

And all was good.
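The BPE-removal step can be tried on its own. This is a minimal sketch that assumes the end-of-word marker is `</w>`, as in XLM-style BPE; the function name `remove_bpe` is made up for this example, and the moses detokenization step is left out since it needs an external library.

```python
def remove_bpe(tokens):
    """Merge BPE pieces back into whole words."""
    # Drop stray spaces inside pieces, then turn each end-of-word marker into a space.
    pieces = [t.replace(" ", "").replace("</w>", " ") for t in tokens]
    # Glue the pieces together and split on the spaces that marked word ends.
    return "".join(pieces).split()


print(remove_bpe(["Mach", "ine</w>", "learn", "ing</w>", "is</w>", "fun</w>"]))
# ['Machine', 'learning', 'is', 'fun']
```

The resulting list of words is what then gets passed to the detokenizer, which takes care of punctuation spacing and escaped characters.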

Uploading models to s3

Once the conversion script did a complete job of porting all the required files to transformers, I uploaded the models to my 🤗 s3 account:

cd data

transformers-cli upload -y wmt19-ru-en

transformers-cli upload -y wmt19-en-ru

transformers-cli upload -y wmt19-de-en

transformers-cli upload -y wmt19-en-de

cd -

While testing I was using my 🤗 s3 account, and once my PR with the complete changes was ready to be merged I asked in the PR to move the models to the facebook organization account, since these models belong there.

Several times I needed to update just the config files, and I didn’t want to re-upload the large models, so I wrote this little script that produces the right upload commands, which otherwise were too long to type and as a result were error-prone:

perl -le 'for $f (@ARGV) { print qq[transformers-cli upload -y $_/$f --filename $_/$f]
for map { "wmt19-$_" } ("en-ru", "ru-en", "de-en", "en-de")}' \
vocab-src.json vocab-tgt.json tokenizer_config.json config.json

# add/remove files as needed

So if, for instance, I only needed to update all of the config.json files, the script above gave me a convenient copy-n-paste:

transformers-cli upload -y wmt19-en-ru/config.json --filename wmt19-en-ru/config.json

transformers-cli upload -y wmt19-ru-en/config.json --filename wmt19-ru-en/config.json

transformers-cli upload -y wmt19-de-en/config.json --filename wmt19-de-en/config.json

transformers-cli upload -y wmt19-en-de/config.json --filename wmt19-en-de/config.json
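For readers who don’t use perl, the same command generator can be sketched in Python. This is only an equivalent illustration of the one-liner above; the function name `upload_commands` is made up for this example.

```python
def upload_commands(files, pairs=("en-ru", "ru-en", "de-en", "en-de")):
    """Build one upload command per (file, model) combination."""
    commands = []
    for name in files:
        for pair in pairs:
            path = f"wmt19-{pair}/{name}"
            commands.append(f"transformers-cli upload -y {path} --filename {path}")
    return commands


# Print the commands for updating only the config.json files.
for cmd in upload_commands(["config.json"]):
    print(cmd)
```

Passing more file names, e.g. `["vocab-src.json", "config.json"]`, produces the commands for each file in turn.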

Once the upload was completed, these models could be accessed as (*):

tokenizer = FSMTTokenizer.from_pretrained("stas/wmt19-en-ru")

Before I made this upload I had to use the local path to the folder with the model files, e.g.:

tokenizer = FSMTTokenizer.from_pretrained("/code/huggingface/transformers-fair-wmt/data/wmt19-en-ru")

Important: If you update the model files and re-upload them, you must be aware that due to CDN caching the uploaded model may be unavailable for up to 24 hours after the upload, i.e. the old cached model will be delivered. So the only way to start using the new model sooner is by either:

- downloading it to a local path and using that path as an argument that gets passed to from_pretrained().

- or using from_pretrained(..., use_cdn=False) everywhere for the next 24h. It’s not enough to do it once.

AutoConfig, AutoTokenizer, etc.

Another change I needed to make was to plug the newly ported model into the automated model transformers system. This is used mainly on the models website to load the model configuration, tokenizer and the main class without providing any specific class names. For example, in the case of FSMT one can do:

from transformers import AutoTokenizer, AutoModelWithLMHead

mname = "facebook/wmt19-en-ru"

tokenizer = AutoTokenizer.from_pretrained(mname)

model = AutoModelWithLMHead.from_pretrained(mname)

There are 3 *auto* files that have mappings to enable that:

-rw-rw-r-- 1 stas stas 16K Sep 23 13:53 src/transformers/configuration_auto.py

-rw-rw-r-- 1 stas stas 65K Sep 23 13:53 src/transformers/modeling_auto.py

-rw-rw-r-- 1 stas stas 13K Sep 23 13:53 src/transformers/tokenization_auto.py
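To give a rough idea of what such a mapping does, here is a toy sketch. It is not the code from those files: all the `Toy*` classes, `TOY_MODEL_MAPPING` and `toy_auto_model` are made up for this illustration, which only shows the general idea of choosing a model class based on the type of the configuration object.

```python
from collections import OrderedDict


class ToyBartConfig:
    model_type = "bart"


class ToyFSMTConfig:
    model_type = "fsmt"


class ToyBartModel:
    def __init__(self, config):
        self.config = config


class ToyFSMTModel:
    def __init__(self, config):
        self.config = config


# Mapping from a config class to the model class that should be built from it.
TOY_MODEL_MAPPING = OrderedDict(
    [
        (ToyBartConfig, ToyBartModel),
        (ToyFSMTConfig, ToyFSMTModel),  # the kind of entry a newly ported model adds
    ]
)


def toy_auto_model(config):
    """Pick the model class by looking up the type of the given config."""
    for config_class, model_class in TOY_MODEL_MAPPING.items():
        if isinstance(config, config_class):
            return model_class(config)
    raise ValueError(f"Unrecognized configuration class {type(config).__name__}")


model = toy_auto_model(ToyFSMTConfig())
print(type(model).__name__)  # ToyFSMTModel
```

Plugging in a new model then amounts to adding one entry per mapping, which is why grepping for an existing model’s class names is a quick way to find all the places to edit.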

Then there are the pipelines, which completely hide all the NLP complexities from the end user and provide a very simple API to just pick a model and use it for a task at hand. For example, here is how one could perform a summarization task using pipeline:

from transformers import pipeline

summarizer = pipeline("summarization", model="t5-base", tokenizer="t5-base")

summary = summarizer("Some long document here", min_length=5, max_length=20)

print(summary)

The translation pipelines are a work in progress as of this writing. Watch this document for updates on when translation will be supported (currently only a few specific models/languages are supported).

Finally, there is src/transformers/__init__.py to edit so that one could do:

from transformers import FSMTTokenizer, FSMTForConditionalGeneration

instead of:

from transformers.tokenization_fsmt import FSMTTokenizer

from transformers.modeling_fsmt import FSMTForConditionalGeneration

but either way works.

To find all the places I needed to plug FSMT in, I mimicked BartConfig, BartForConditionalGeneration and BartTokenizer. I just grepped which files had them and inserted corresponding entries for FSMTConfig, FSMTForConditionalGeneration and FSMTTokenizer.

$ egrep -l "(BartConfig|BartForConditionalGeneration|BartTokenizer)" src/transformers/*.py \
| egrep -v "(marian|bart|pegasus|rag|fsmt)"

src/transformers/configuration_auto.py

src/transformers/generation_utils.py

src/transformers/__init__.py

src/transformers/modeling_auto.py

src/transformers/pipelines.py

src/transformers/tokenization_auto.py

In the grep search I excluded the files that also include those classes.

Manual testing

Until now I was primarily using my own scripts to do the testing.

Once I had the translator working, I converted the reversed ru-en model and then wrote two paraphrase scripts:

which took a sentence in the source language, translated it to another language and then translated the result of that back to the original language. This process usually results in a paraphrased outcome, due to differences in how different languages express similar things.
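The round-trip idea can be sketched as follows. This is not the actual paraphrase script: `paraphrase` and the two toy translators are made up for this example. In real use `forward` and `backward` would be functions that wrap the tokenizer and the generate call of the en-ru and ru-en models.

```python
def paraphrase(sentence, forward, backward):
    """Translate to the other language and back again."""
    return backward(forward(sentence))


# Toy stand-ins for real translators, just to make the sketch runnable.
toy_en_ru = {"hello": "привет"}.get
toy_ru_en = {"привет": "hi"}.get

print(paraphrase("hello", toy_en_ru, toy_ru_en))  # hi
```

Because the two directions rarely map words one-to-one, the output tends to be a rewording of the input rather than an exact copy.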

With the help of these scripts I found some more problems with the detokenizer, stepped through with the debugger and made the fsmt script produce the same results as the fairseq version.

At this stage no-beam search was producing mostly similar results, but there was still some divergence in the beam search. In order to identify the special cases, I wrote a fsmt-port-validate.py script that used sacrebleu test data as inputs, ran that data through both the fairseq and the transformers translation and reported only mismatches. It quickly identified a few remaining problems, and by observing the patterns I was able to fix those issues as well.
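The core of such a validation can be sketched like this. It is a simplified illustration rather than the actual fsmt-port-validate.py: `find_mismatches` and the toy translators are made up for this example, and the two callables stand in for the fairseq and the transformers translation functions.

```python
def find_mismatches(sentences, translate_a, translate_b):
    """Return (input, output_a, output_b) for every input where the outputs differ."""
    mismatches = []
    for sentence in sentences:
        out_a, out_b = translate_a(sentence), translate_b(sentence)
        if out_a != out_b:
            mismatches.append((sentence, out_a, out_b))
    return mismatches


def toy_reference(text):
    return text.upper()


def toy_port(text):
    # Deliberately differs on inputs that contain a digit.
    return text if any(ch.isdigit() for ch in text) else text.upper()


for source, expected, got in find_mismatches(["abc", "a1", "xyz"], toy_reference, toy_port):
    print(f"input={source!r} reference={expected!r} port={got!r}")
```

Reporting only the mismatches keeps the output short, so patterns in the failing inputs are easy to spot.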

Porting other models

I next proceeded to port the en-de and de-en models.

I was surprised to find that these weren’t built in the same way. Each of these had a merged dictionary, so for a moment I felt frustration, since I thought I’d now have to make another huge change to support that. But I didn’t need to make any changes, as the merged dictionary fit in as is. I just used 2 identical dictionaries: one as a source and a copy of it as a target.

I wrote one other script to check all ported models’ basic functionality: fsmt-test-all.py.

Test Coverage

This next step was very important: I needed to prepare extensive testing for the ported model.

In the transformers test suite most tests that deal with large models are marked as @slow and those don’t get to run normally on CI (Continuous Integration), as they are, well, slow. So I also needed to create a tiny model that has the same structure as a normal pre-trained model, but is very small and can have random weights. This tiny model can then be used to test the ported functionality. It just can’t be used for quality testing, since it has just a few weights and thus can’t really be trained to do anything practical. fsmt-make-tiny-model.py creates such a tiny model. The generated model with all of its dictionary and config files was just 3MB in size. I uploaded it to s3 using transformers-cli upload and now I was able to use it in the test suite.

Just like with the code, I started by copying tests/test_modeling_bart.py and converting it to use FSMT, and then tweaking it to work with the new model.

I then converted a few of the scripts I had used for manual testing into unit tests, which was easy.

transformers has a huge set of common tests that each model runs through. I had to do some more tweaks to make these tests work for FSMT (primarily to adjust for the 2 dictionary setup), and I had to override a few tests that weren’t possible to run due to the uniqueness of this model, in order to skip them. You can see the results here.

I added one more test that performs a light-weight BLEU evaluation: I used just 8 text inputs for each of the 4 models and measured BLEU scores on those. Here is the test and the script that generated data.

SinusoidalPositionalEmbedding

fairseq used a slightly different implementation of SinusoidalPositionalEmbedding than the one used by transformers. Initially I copied the fairseq implementation. But when trying to get the test suite to work I couldn’t get the torchscript tests to pass. SinusoidalPositionalEmbedding was written so that it won’t be part of state_dict and won’t get saved with the model weights, since all the weights generated by this class are deterministic and are not trained. fairseq used a trick to make this work transparently by not making its weights a parameter or a buffer, and then switching the weights to the correct device during forward. torchscript wasn’t taking this well, as it wanted all the weights to be on the correct device before the first forward call.

I had to rewrite the implementation to convert it to a normal nn.Embedding subclass and then add functionality to not save these weights during save_pretrained() and for from_pretrained() to not complain if it can’t find those weights during the state_dict loading.
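Since these weights are a pure function of the position and the embedding size, nothing is lost by leaving them out of the saved checkpoint. The sketch below illustrates this with plain Python. `sinusoidal_table` follows one common sin/cos formulation and is not necessarily identical to either library’s implementation, `drop_keys` is a hypothetical helper that shows the idea of filtering such weights out of a state dict before saving, and the key names are just examples.

```python
import math


def sinusoidal_table(num_positions, dim):
    """Build a deterministic table of sinusoidal position embeddings."""
    half = dim // 2
    # Geometric progression of frequencies from 1 down to 1/10000.
    freqs = [math.exp(-math.log(10000.0) * i / max(half - 1, 1)) for i in range(half)]
    table = []
    for pos in range(num_positions):
        row = [math.sin(pos * f) for f in freqs] + [math.cos(pos * f) for f in freqs]
        if dim % 2 == 1:
            row.append(0.0)  # pad odd dimensions with a zero column
        table.append(row)
    return table


def drop_keys(state_dict, substrings):
    """Return a copy of state_dict without keys containing any of the substrings."""
    return {k: v for k, v in state_dict.items() if not any(s in k for s in substrings)}


state = {
    "embed_tokens.weight": [[0.1, 0.2]],
    "embed_positions.weight": sinusoidal_table(4, 4),
}
to_save = drop_keys(state, ["embed_positions"])
print(sorted(to_save))  # only the trained weights remain

# Rebuilding the table later gives exactly the same values.
assert sinusoidal_table(4, 4) == state["embed_positions.weight"]
```

The loading side then only has to tolerate the missing key and regenerate the table, instead of treating the absence as an error.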

Evaluation

I knew that the ported model was doing quite well based on my manual testing with a large body of text, but I didn’t know how well the ported model performed compared to the original. So it was time to evaluate.

For the task of translation the BLEU score is used as an evaluation metric. transformers has a script run_eval.py to perform the evaluation.

Here is an evaluation for the ru-en pair

export PAIR=ru-en

export MODEL=facebook/wmt19-$PAIR

export DATA_DIR=data/$PAIR

export SAVE_DIR=data/$PAIR

export BS=64

export NUM_BEAMS=5

export LENGTH_PENALTY=1.1

mkdir -p $DATA_DIR

sacrebleu -t wmt19 -l $PAIR --echo src > $DATA_DIR/val.source

sacrebleu -t wmt19 -l $PAIR --echo ref > $DATA_DIR/val.target

PYTHONPATH="src:examples/seq2seq" python examples/seq2seq/run_eval.py $MODEL \
$DATA_DIR/val.source $SAVE_DIR/test_translations.txt --reference_path $DATA_DIR/val.target \
--score_path $SAVE_DIR/test_bleu.json --bs $BS --task translation --num_beams $NUM_BEAMS \
--length_penalty $LENGTH_PENALTY --info $MODEL --dump-args

which took a few minutes to run and returned:

{'bleu': 39.0498, 'n_obs': 2000, 'runtime': 184, 'seconds_per_sample': 0.092,

'num_beams': 5, 'length_penalty': 1.1, 'info': 'ru-en'}

You can see that the BLEU score was 39.0498 and that it was evaluated using 2000 test inputs, provided by sacrebleu using the wmt19 dataset.

Remember, I couldn’t use the model ensemble, so I next needed to find the best performing checkpoint. For that purpose I wrote a script fsmt-bleu-eval-each-chkpt.py which converted each checkpoint, ran the eval script and reported the best one. As a result I knew that model4.pt was delivering the best performance, out of the 4 available checkpoints.
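The selection logic itself is simple and can be sketched as below. This is not the actual fsmt-bleu-eval-each-chkpt.py: `best_checkpoint` is a made-up name, and `evaluate` stands in for the expensive part (converting a checkpoint and running the eval script on it). The checkpoint names and scores in the usage lines are placeholders, not real measurements.

```python
def best_checkpoint(checkpoints, evaluate):
    """Score every checkpoint with evaluate() and return (best_name, all_scores)."""
    scores = {name: evaluate(name) for name in checkpoints}
    best = max(scores, key=scores.get)
    return best, scores


# Placeholder scorer, only here to make the sketch runnable.
placeholder_scores = {"a.pt": 1.0, "b.pt": 2.0, "c.pt": 1.5}
best, scores = best_checkpoint(placeholder_scores, placeholder_scores.get)
print(best)  # b.pt
```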

I wasn’t getting the same BLEU scores as the ones reported in the original paper, so I next needed to make sure that we were comparing the same data using the same tools. Through asking on the fairseq issue I was given the code that was used by fairseq developers to get their BLEU scores; you will find it here. But, alas, their method was using a re-ranking approach which wasn’t disclosed. Moreover, they evaled on outputs before detokenization and not the real output, which apparently scores better. Bottom line: we weren’t scoring in the same way (*).

Currently, this ported model is slightly behind the original on the BLEU scores, because the model ensemble is not used, but it’s impossible to tell the exact difference until the same measuring method is used.

Porting new models

After uploading the 4 fairseq models here it was then suggested to port 3 wmt16 and 2 wmt19 AllenAI models (Jungo Kasai, et al). The porting was a breeze, as I only had to figure out how to put all the source files together, since they were spread out through several unrelated archives. Once this was done the conversion worked without a hitch.

The only issue I discovered after porting is that I was getting a lower BLEU score than the original. Jungo Kasai, the creator of these models, was very helpful in suggesting that a custom hyper-parameter length_penalty=0.6 was used, and once I plugged that in I was getting much better results.

This discovery led me to write a new script: run_eval_search.py, which can be used to search various hyper-parameters that would lead to the best BLEU scores. Here is an example of its usage:

# search space

export PAIR=ru-en

export DATA_DIR=data/$PAIR

export SAVE_DIR=data/$PAIR

export BS=32

mkdir -p $DATA_DIR

sacrebleu -t wmt19 -l $PAIR --echo src > $DATA_DIR/val.source

sacrebleu -t wmt19 -l $PAIR --echo ref > $DATA_DIR/val.target

PYTHONPATH="src:examples/seq2seq" python examples/seq2seq/run_eval_search.py stas/wmt19-$PAIR \
$DATA_DIR/val.source $SAVE_DIR/test_translations.txt --reference_path $DATA_DIR/val.target \
--score_path $SAVE_DIR/test_bleu.json --bs $BS --task translation \
--search="num_beams=5:8:11:15 length_penalty=0.6:0.7:0.8:0.9:1.0:1.1 early_stopping=true:false"

Here it searches through all the possible combinations of num_beams, length_penalty and early_stopping.

Once finished executing it reports:

bleu | num_beams | length_penalty | early_stopping

----- | --------- | -------------- | --------------

39.20 | 15 | 1.1 | 0

39.13 | 11 | 1.1 | 0

39.05 | 5 | 1.1 | 0

39.05 | 8 | 1.1 | 0

39.03 | 15 | 1.0 | 0

39.00 | 11 | 1.0 | 0

38.93 | 8 | 1.0 | 0

38.92 | 15 | 1.1 | 1

[...]

You can see that in the case of transformers early_stopping=False performs better (fairseq uses the early_stopping=True equivalent).
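To show how a search specification like the one above expands into individual runs, here is a small sketch. It is not the implementation of run_eval_search.py: `parse_search` and `combinations` are names made up for this example, and the values are kept as strings for simplicity.

```python
import itertools


def parse_search(search):
    """Parse 'name=v1:v2 other=v3:v4' into a dict of name -> list of value strings."""
    grid = {}
    for entry in search.split():
        name, values = entry.split("=", 1)
        grid[name] = values.split(":")
    return grid


def combinations(grid):
    """Yield one dict per combination of the values in the grid."""
    names = list(grid)
    for values in itertools.product(*(grid[n] for n in names)):
        yield dict(zip(names, values))


search = "num_beams=5:8:11:15 length_penalty=0.6:0.7:0.8:0.9:1.0:1.1 early_stopping=true:false"
combos = list(combinations(parse_search(search)))
print(len(combos))  # 4 * 6 * 2 = 48
print(combos[0])
```

Each combination corresponds to one full evaluation run, so the search space above means 48 runs over the test set.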

So for the 5 new models I used this script to find the best default parameters and I used those when converting the models. Users can still override these parameters when invoking generate(), but why not provide the best defaults.

You can find the 5 ported AllenAI models here.

More scripts

As each ported group of models has its own nuances, I made dedicated scripts for each one of them, so that it will be easy to re-build things in the future or to create new scripts to convert new models. You can find all the conversion, evaluation, and other scripts here.

Model cards

Another important thing is that it’s not enough to port a model and make it available to others. One needs to provide information on how to use it, nuances about hyper-parameters, sources of datasets, evaluation metrics, etc. This is all done by creating a model card, which is just a README.md file that starts with some metadata that is used by the models website, followed by all the useful information that can be shared.

For example, let’s take the facebook/wmt19-en-ru model card. Here is its top:

---

language:

- en

- ru

thumbnail:

tags:

- translation

- wmt19

- facebook

license: apache-2.0

datasets:

- wmt19

metrics:

- bleu

---

# FSMT

## Model description

This is a ported version of

[...]

As you can see we define the languages, tags, license, datasets, and metrics. There is a full guide for writing these at Model sharing and uploading. The rest is the markdown document describing the model and its nuances.
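The two-part layout of a model card is easy to take apart programmatically. The sketch below only illustrates the structure and is not how the models website processes the file; `split_front_matter` is a name made up for this example, and the sample card is a shortened version of the one shown above.

```python
def split_front_matter(text):
    """Split a model card into its metadata block and its markdown body."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return "", text  # no metadata block at the top
    for i in range(1, len(lines)):
        if lines[i].strip() == "---":
            return "\n".join(lines[1:i]), "\n".join(lines[i + 1:])
    return "", text  # opening marker without a closing one


card = "---\nlanguage:\n- en\n- ru\nlicense: apache-2.0\n---\n# FSMT\n\n## Model description\n"
metadata, body = split_front_matter(card)
print(metadata)
print(body)
```

Everything between the two `---` lines is the metadata, and everything after the second one is the free-form markdown description.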

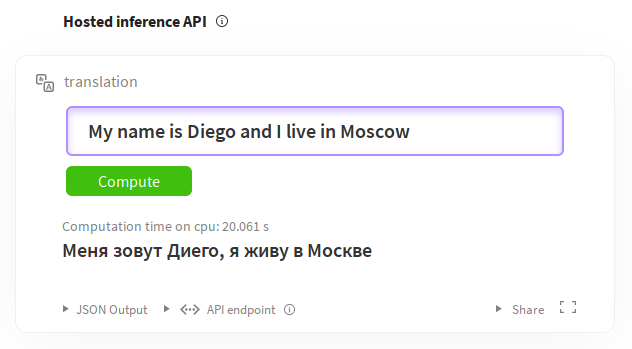

You can also try out the models directly from the model pages thanks to the Inference widgets. For example, for English-to-Russian translation: https://huggingface.co/facebook/wmt19-en-ru?text=My+name+is+Diego+and+I+live+in+Moscow.

Documentation

Finally, the documentation needed to be added.

Luckily, most of the documentation is autogenerated from the docstrings in the module files.

As before, I copied docs/source/model_doc/bart.rst and adapted it to FSMT. When it was ready I linked to it by adding an fsmt entry inside docs/source/index.rst

I used:

make docs

to test that the newly added document was building correctly. The file I needed to check after running that target was docs/_build/html/model_doc/fsmt.html. I just loaded it in my browser and verified that it rendered correctly.

Here is the final source document docs/source/model_doc/fsmt.rst and its rendered version.

It’s PR time

Once I felt my work was quite complete, I was ready to submit my PR.

Since this work involved many git commits, I wanted to make a clean PR, so I used the following technique to squash all the commits into one in a new branch. This kept all the initial commits in place if I wanted to access any of them later.

The branch I was developing on was called fair-wmt, and the new branch that I was going to submit the PR from I named fair-wmt-clean, so here is what I did:

git checkout master

git checkout -b fair-wmt-clean

git merge --squash fair-wmt

git commit -m "Ready for PR"

git push origin fair-wmt-clean

Then I went to github and submitted this PR based on the fair-wmt-clean branch.

It took two weeks of several cycles of feedback, followed by modifications, and more such cycles. Eventually it was all satisfactory and the PR got merged.

While this process was going on, I was finding issues here and there, adding new tests, improving the documentation, etc., so it was time well spent.

I subsequently filed a few more PRs with changes after I improved and reworked a few features, adding various build scripts, model cards, etc.

Since the models I ported belonged to the facebook and allenai organizations, I had to ask Sam to move those model files from my account on s3 to the corresponding organizations.

Closing thoughts

- While I couldn’t port the model ensemble as transformers doesn’t support it, on the plus side the download size of the final facebook/wmt19-* models is 1.1GB and not 13GB as in the original. For some reason the original includes the optimizer state saved in the model, so it adds almost 9GB (4×2.2GB) of dead weight for those who just want to download the model to use it as is to translate text.

- While the job of porting looked very challenging at the beginning, as I didn’t know the internals of either transformers or fairseq, looking back it wasn’t that difficult after all. This was primarily due to having most of the components already available to me in the various parts of transformers. I just needed to find the parts that I needed, mostly borrowing heavily from other models, and then tweak them to do what I needed. This was true for both the code and the tests. Let’s rephrase that: porting was difficult, but it’d have been much more difficult if I had to write it all from scratch. And finding the right parts wasn’t easy.

Appreciations

- Having Sam Shleifer mentor me through this process was of extreme help to me, both thanks to his technical support and, just as importantly, for inspiring and encouraging me when I was getting stuck.

- The PR merging process took a good couple of weeks before it was accepted. During this stage, besides Sam, Lysandre Debut and Sylvain Gugger contributed a lot through their insights and suggestions, which I integrated into the codebase.

- I’m grateful to everybody who has contributed to the transformers codebase, which paved the way for my work.

Notes

Autoprint all in Jupyter Notebook

My jupyter notebook is configured to automatically print all expressions, so I don’t have to explicitly print() them. The default behavior is to print only the last expression of each cell. So if you read the outputs in my notebooks they may not be the same as if you were to run them yourself, unless you have the same setup.

You can enable the print-all feature in your jupyter notebook setup by adding the following to ~/.ipython/profile_default/ipython_config.py (create it if you don’t have one):

c = get_config()

# Run all nodes interactively

c.InteractiveShell.ast_node_interactivity = "all"

# restore to the original behavior

# c.InteractiveShell.ast_node_interactivity = "last_expr"

and restarting your jupyter notebook server.

Links to the github versions of files

In order to ensure that all links work if you read this article much later after it has been written, the links were made to a specific SHA version of the code and not necessarily the latest version. This is so that if files were renamed or removed you will still find the code this article is referring to. If you want to ensure you’re looking at the latest version of the code, replace the hash code in the links with master. For example, a link:

https://github.com/huggingface/transformers/blob/129fdae04033fe4adfe013b734deaec6ec34ae2e/src/transformers/modeling_fsmt.py

becomes:

https://github.com/huggingface/transformers/blob/master/src/transformers/modeling_fsmt.py
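If there are many links to convert, the replacement can be automated. This is a small sketch that assumes the links have the `/blob/<40-character SHA>/` form shown above; `pin_to_branch` is a name made up for this example.

```python
import re

# A 40-character hexadecimal commit SHA that follows /blob/ in a GitHub file link.
SHA_IN_BLOB_URL = re.compile(r"(/blob/)[0-9a-f]{40}(/)")


def pin_to_branch(url, branch="master"):
    """Replace the commit SHA in a GitHub blob URL with a branch name."""
    return SHA_IN_BLOB_URL.sub(lambda m: m.group(1) + branch + m.group(2), url)


url = (
    "https://github.com/huggingface/transformers/blob/"
    "129fdae04033fe4adfe013b734deaec6ec34ae2e/src/transformers/modeling_fsmt.py"
)
print(pin_to_branch(url))
```

Links that don’t contain a full SHA are returned unchanged.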

Thanks for reading!