spaCy is a popular library for advanced Natural Language Processing used widely across industry. spaCy makes it easy to use and train pipelines for tasks like named entity recognition, text classification, part-of-speech tagging and more, and lets you build powerful applications to process and analyze large volumes of text.

Hugging Face makes it very easy to share your spaCy pipelines with the community! With a single command, you can upload any pipeline package, with a pretty model card and all required metadata auto-generated for you. The inference API currently supports NER out-of-the-box, and you can try out your pipeline interactively in your browser. You will also get a live URL for your package that you can pip install from anywhere, for a smooth path from prototype all the way to production!

Finding models

Over 60 canonical models can be found in the spaCy org. These models are from the latest 3.1 release, so you can try the most recently released models right away! On top of this, you can find all spaCy models from the community here: https://huggingface.co/models?filter=spacy.

Widgets

This integration includes support for NER widgets, so all models with a NER component will have this out of the box! Support for text classification and POS is coming soon.

Using existing models

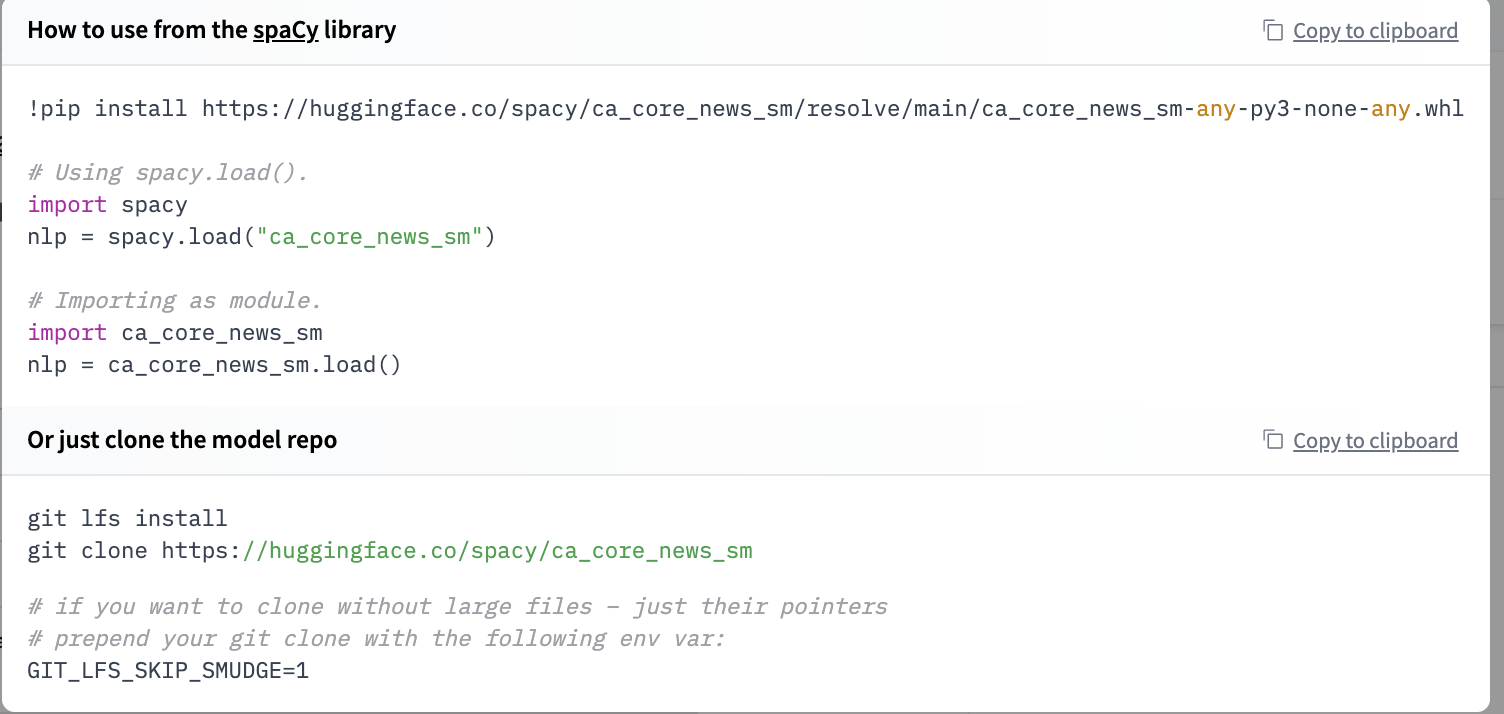

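All models from the Hub can be directly installed using pip install. Once installed, a model can be loaded either with spacy.load() or by importing the package as a module.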

pip install https://huggingface.co/spacy/en_core_web_sm/resolve/main/en_core_web_sm-any-py3-none-any.whl

import spacy

nlp = spacy.load("en_core_web_sm")

import en_core_web_sm

nlp = en_core_web_sm.load()

When you open a repository, you can click Use in spaCy and you will be given a working snippet that you can use to install and load the model!
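If you want to script the installation, the install URL can be assembled from the model name. The following is a minimal sketch, not an official helper: `hub_wheel_url` and `pip_install_args` are hypothetical names, and the wheel naming pattern and the `main` revision are assumptions inferred from the single `en_core_web_sm` example, so community repositories may differ.

```python
import sys
from typing import List


def hub_wheel_url(model_name: str, org: str = "spacy", revision: str = "main") -> str:
    """Build a pip-installable wheel URL for a spaCy pipeline on the Hub.

    Assumption: the repo stores a wheel named "<model>-any-py3-none-any.whl",
    as in the en_core_web_sm example. Other repos may use a different name.
    """
    wheel = f"{model_name}-any-py3-none-any.whl"
    return f"https://huggingface.co/{org}/{model_name}/resolve/{revision}/{wheel}"


def pip_install_args(model_name: str, org: str = "spacy") -> List[str]:
    """Return the argv for installing the model with the current interpreter's pip."""
    return [sys.executable, "-m", "pip", "install", hub_wheel_url(model_name, org)]


if __name__ == "__main__":
    # Print the command instead of running it; pass the list to
    # subprocess.run(..., check=True) to actually install.
    print(" ".join(pip_install_args("en_core_web_sm")))
```

Building the argument list separately from running it keeps the sketch free of side effects, so you can inspect the URL before anything is installed.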

You can even make HTTP requests to call the models from the Inference API, which is useful in production settings. Here is an example of a simple request:

curl -X POST --data '{"inputs": "Hello, that is Omar"}' https://api-inference.huggingface.co/models/spacy/en_core_web_sm

>>> [{"entity_group":"PERSON","word":"Omar","start":15,"end":19,"score":1.0}]

And for larger-scale use cases, you can click “Deploy > Accelerated Inference” and see how to do this with Python.
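As a rough illustration, the curl request above could be written in Python with only the standard library. This is a sketch under assumptions, not the snippet the Hub generates: `build_request`, `query` and `entity_spans` are hypothetical helper names, the JSON content type is added for clarity, and the optional bearer token assumes your account requires authenticated calls. The last lines run offline against the sample response shown earlier.

```python
import json
import urllib.request

# Endpoint taken from the curl example in the text.
API_URL = "https://api-inference.huggingface.co/models/spacy/en_core_web_sm"


def build_request(text, api_url=API_URL, token=None):
    """Build a POST request carrying {"inputs": text}; nothing is sent here."""
    headers = {"Content-Type": "application/json"}
    if token:  # assumption: a Hub access token, sent as a bearer token
        headers["Authorization"] = f"Bearer {token}"
    body = json.dumps({"inputs": text}).encode("utf-8")
    return urllib.request.Request(api_url, data=body, headers=headers, method="POST")


def query(text, token=None):
    """Send the request and decode the JSON response (needs network access)."""
    with urllib.request.urlopen(build_request(text, token=token)) as resp:
        return json.loads(resp.read().decode("utf-8"))


def entity_spans(text, entities):
    """Pair each entity label with the text covered by its start/end offsets."""
    return [(e["entity_group"], text[e["start"]:e["end"]]) for e in entities]


# Offline check using the sample response from the text.
text = "Hello, that is Omar"
sample = json.loads(
    '[{"entity_group":"PERSON","word":"Omar","start":15,"end":19,"score":1.0}]'
)
print(entity_spans(text, sample))  # [('PERSON', 'Omar')]
```

The `start` and `end` values are character offsets into the input string, so slicing the original text recovers the same surface form that the API reports in `word`.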

Sharing your models

But probably the coolest feature is that you can now very easily share your models with the spacy-huggingface-hub library, which extends the spaCy CLI with a new command, huggingface-hub push.

huggingface-cli login

python -m spacy package ./en_ner_fashion ./output --build wheel

cd ./output/en_ner_fashion-0.0.0/dist

python -m spacy huggingface-hub push en_ner_fashion-0.0.0-py3-none-any.whl
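If you publish models regularly, the package and push steps above can be generated from a script. The sketch below only builds the commands and does not run them. `wheel_path` and `publish_commands` are hypothetical helpers, and the `<name>-<version>/dist/` layout is an assumption inferred from the `cd` step above, so check it against your own output directory. You still need to run `huggingface-cli login` once beforehand.

```python
import sys
from pathlib import PurePosixPath


def wheel_path(output_dir, name, version):
    """Guess where `spacy package --build wheel` leaves the wheel.

    Assumption: <output_dir>/<name>-<version>/dist/<name>-<version>-py3-none-any.whl,
    matching the en_ner_fashion-0.0.0 example in the text.
    """
    pkg = f"{name}-{version}"
    return PurePosixPath(output_dir) / pkg / "dist" / f"{pkg}-py3-none-any.whl"


def publish_commands(pipeline_dir, output_dir, name, version):
    """Return the two argv lists: package the pipeline, then push the wheel."""
    wheel = str(wheel_path(output_dir, name, version))
    return [
        [sys.executable, "-m", "spacy", "package", pipeline_dir, output_dir,
         "--build", "wheel"],
        [sys.executable, "-m", "spacy", "huggingface-hub", "push", wheel],
    ]


if __name__ == "__main__":
    for cmd in publish_commands("./en_ner_fashion", "./output", "en_ner_fashion", "0.0.0"):
        # Replace print with subprocess.run(cmd, check=True) to execute.
        print(" ".join(cmd))
```

Passing the full wheel path to the push command avoids the `cd` step, which makes the sequence easier to run from a CI job.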

In just a minute, you can get your packaged model on the Hub, try it out directly in the browser, and share it with the rest of the community. All the required metadata will be uploaded for you and you even get a cool model card.

Try it out and share your models with the community!

Would you like to integrate your library with the Hub?

This integration is possible thanks to the huggingface_hub library, which has all our widgets and the API for all our supported libraries. If you would like to integrate your library with the Hub, we have a guide for you!