It’s really easy to showcase a Machine Learning project thanks to Gradio.

In this blog post, we’ll walk you through:

- the recent Gradio integration that helps you demo models from the Hub seamlessly, with a few lines of code, by leveraging the Inference API.

- how to use Hugging Face Spaces to host demos of your own models.

Hugging Face Hub Integration in Gradio

You can demo your models from the Hub easily. You only need to define an Interface that includes:

- The repository ID of the model you want to run inference with

- A description and title

- Example inputs to guide your audience

After defining your Interface, just call .launch() and your demo will start running. You can do this in Colab, but if you want to share it with the community, a great option is to use Spaces!

Spaces are a simple, free way to host your ML demo apps in Python. To get started, create a repository at https://huggingface.co/new-space and select Gradio as the SDK. Once done, create a file called app.py, copy the code below, and your app will be up and running in a few seconds!

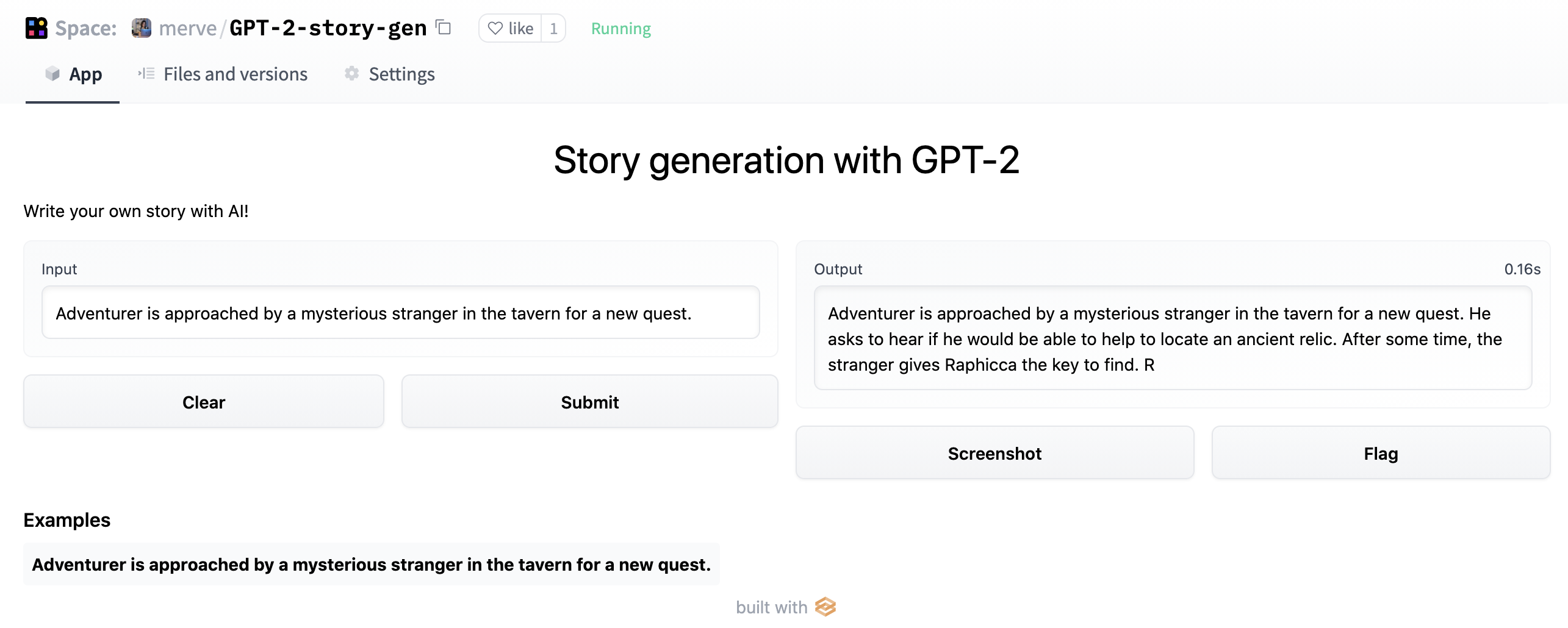

    import gradio as gr

    description = "Story generation with GPT-2"
    title = "Generate your own story"
    examples = [["Adventurer is approached by a mysterious stranger in the tavern for a new quest."]]

    # Load the model from the Hub; predictions are served by the Inference API.
    interface = gr.Interface.load(
        "huggingface/pranavpsv/gpt2-genre-story-generator",
        title=title,
        description=description,
        examples=examples,
    )

    interface.launch()

You can play with the Story Generation model here

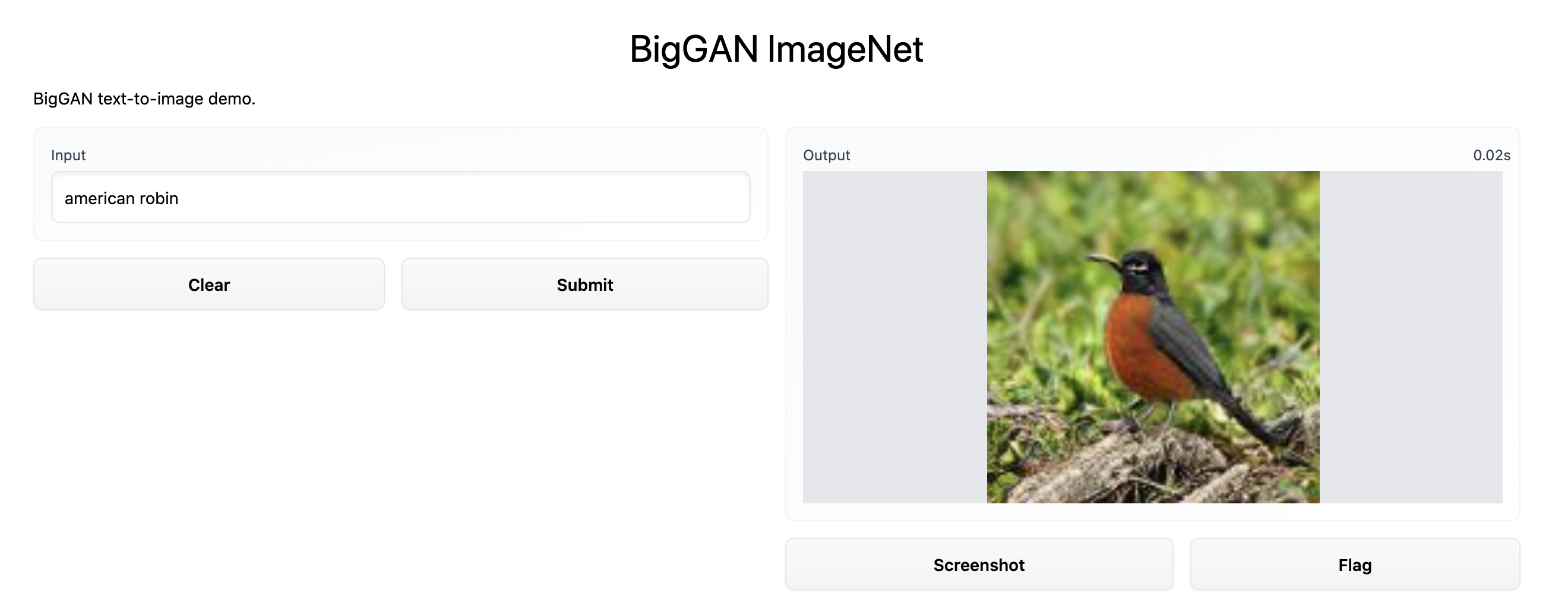

Under the hood, Gradio calls the Inference API, which supports Transformers as well as other popular ML frameworks such as spaCy, SpeechBrain and Asteroid. This integration supports different types of models, including image-to-text, speech-to-text, text-to-speech and more. You can check out this example BigGAN ImageNet text-to-image model here. The implementation is below.

    import gradio as gr

    description = "BigGAN text-to-image demo."
    title = "BigGAN ImageNet"

    # The prompt is an ImageNet class name, as in the example below.
    interface = gr.Interface.load(
        "huggingface/osanseviero/BigGAN-deep-128",
        description=description,
        title=title,
        examples=[["american robin"]],
    )

    interface.launch()
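
To make the "under the hood" part a little more concrete, here is a rough sketch of the kind of HTTP request a call to the Inference API involves. It is not Gradio's actual implementation: the base URL, the `{"inputs": ...}` payload shape, and the helper name `build_inference_request` are assumptions used for illustration, and the request is only built, never sent.

```python
import json
import urllib.request

# Assumption: base URL of the hosted Inference API for Hub models.
API_BASE = "https://api-inference.huggingface.co/models"


def build_inference_request(repo_id, inputs, token=None):
    """Build (but do not send) a POST request for a model hosted on the Hub.

    Hypothetical helper for illustration; `token` is an optional access token.
    """
    headers = {"Content-Type": "application/json"}
    if token:
        headers["Authorization"] = f"Bearer {token}"
    body = json.dumps({"inputs": inputs}).encode("utf-8")
    return urllib.request.Request(
        f"{API_BASE}/{repo_id}", data=body, headers=headers, method="POST"
    )


# The repository ID is the part after the "huggingface/" prefix used above.
request = build_inference_request(
    "pranavpsv/gpt2-genre-story-generator",
    "Adventurer is approached by a mysterious stranger in the tavern for a new quest.",
)
```

Sending the request (for example with `urllib.request.urlopen(request)`) would need network access and possibly an access token, which is exactly the plumbing the Gradio integration handles for you.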

Serving Custom Model Checkpoints with Gradio in Hugging Face Spaces

You can serve your models in Spaces even if the Inference API does not support your model. Just wrap your model inference in a Gradio Interface and put it in Spaces.
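
As a minimal sketch of what "wrapping your model inference" can look like, the example below puts a plain Python function behind a Gradio Interface. The `predict` function is a hypothetical stand-in (it just reverses the word order) for loading and running your own checkpoint, and `build_demo` is an illustrative helper name, not part of Gradio.

```python
def predict(text):
    """Hypothetical stand-in for real model inference.

    Replace the body with a call to your own model checkpoint.
    """
    words = text.split()
    return " ".join(reversed(words))


def build_demo():
    """Wrap predict() in a Gradio Interface with a text input and text output."""
    # Imported lazily so predict() can be used and tested without Gradio installed.
    import gradio as gr

    return gr.Interface(
        fn=predict,
        inputs="text",
        outputs="text",
        title="Custom model demo",
        description="A toy function standing in for a custom checkpoint.",
    )
```

In a Space, app.py would end with `build_demo().launch()`. Any dependencies your model needs would also have to be available in the Space.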

Mix and Match Models!

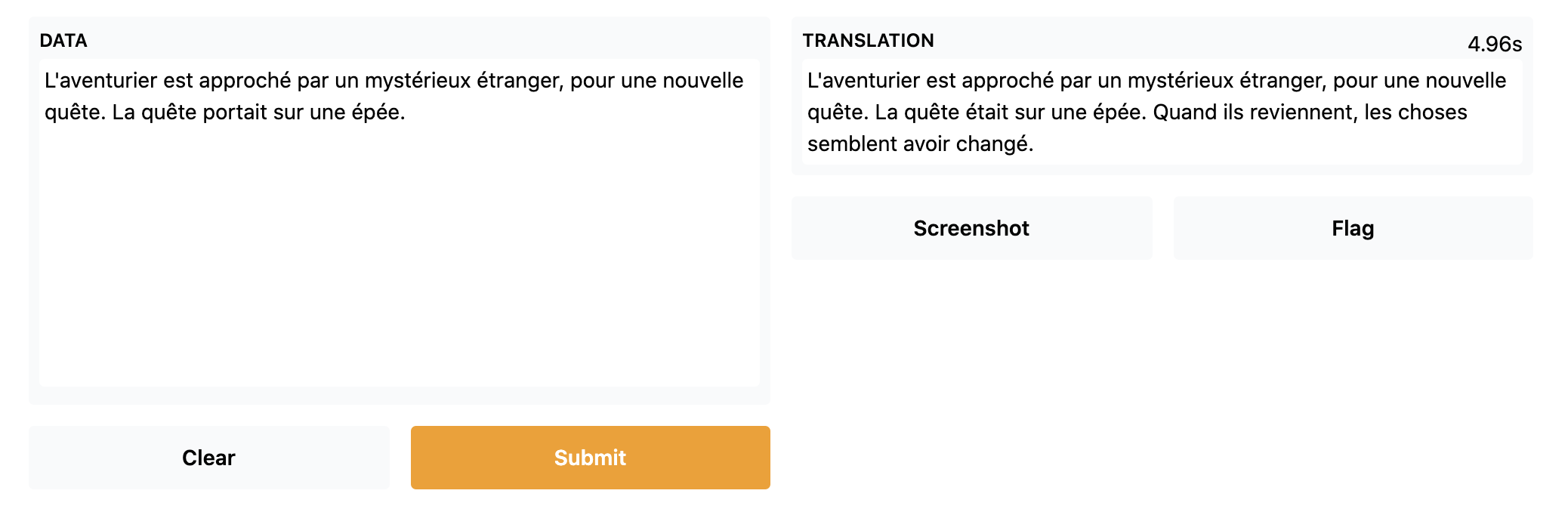

Using Gradio Series, you can mix and match different models! Here, we put a French-to-English translation model in front of the story generator and an English-to-French translation model after it, which gives us a French story generator.

    import gradio as gr
    from gradio.mix import Series

    description = "Generate your own D&D story!"
    title = "French Story Generator using Opus MT and GPT-2"

    # French -> English, story generation in English, then English -> French.
    translator_fr = gr.Interface.load("huggingface/Helsinki-NLP/opus-mt-fr-en")
    story_gen = gr.Interface.load("huggingface/pranavpsv/gpt2-genre-story-generator")
    translator_en = gr.Interface.load("huggingface/Helsinki-NLP/opus-mt-en-fr")

    examples = [["L'aventurier est approché par un mystérieux étranger, pour une nouvelle quête."]]

    Series(
        translator_fr,
        story_gen,
        translator_en,
        description=description,
        title=title,
        examples=examples,
        inputs=gr.inputs.Textbox(lines=10),
    ).launch()

You can check out the French Story Generator here
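
Conceptually, chaining models in series amounts to function composition: each step's output becomes the next step's input. The sketch below illustrates that idea in plain Python. It is not how Gradio implements Series, and the three `fake_*` functions are hypothetical stand-ins for the hosted models.

```python
from functools import reduce


def series(*steps):
    """Return a function that feeds each step's output into the next step."""
    def pipeline(value):
        return reduce(lambda acc, step: step(acc), steps, value)
    return pipeline


# Hypothetical stand-ins for the translation and generation models.
def fake_fr_to_en(text):
    return f"en({text})"


def fake_story_gen(text):
    return f"story({text})"


def fake_en_to_fr(text):
    return f"fr({text})"


french_story = series(fake_fr_to_en, fake_story_gen, fake_en_to_fr)
result = french_story("Il était une fois")
print(result)  # fr(story(en(Il était une fois)))
```

The order of the arguments matters: the first step receives the user's input and the last step produces what the user sees.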

Uploading your Models to the Spaces

You can serve your demos on Hugging Face thanks to Spaces! To do this, simply create a new Space, and then drag and drop your demos or use Git.

Easily build your first demo with Spaces here!