Speaking at the 2021 AI Hardware Summit, Hugging Face announced the launch of their new Hardware Partner Program, including device-optimized models and software integrations. Here, Graphcore, creators of the Intelligence Processing Unit (IPU) and a founding member of the program, explain how their partnership with Hugging Face will allow developers to easily accelerate their use of state-of-the-art Transformer models.

Graphcore and Hugging Face are two companies with a common goal: to make it easier for innovators to harness the power of machine intelligence.

Hugging Face’s Hardware Partner Program will allow developers using Graphcore systems to deploy state-of-the-art Transformer models, optimised for our Intelligence Processing Unit (IPU), at production scale, with minimum coding complexity.

What’s an Intelligence Processing Unit?

IPUs are the processors that power Graphcore’s IPU-POD datacenter compute systems. This new type of processor is designed to support the very specific computational requirements of AI and machine learning. Characteristics such as fine-grained parallelism, low precision arithmetic, and the ability to handle sparsity have been built into our silicon.

Instead of adopting a SIMD/SIMT architecture like GPUs, Graphcore’s IPU uses a massively parallel, MIMD architecture, with ultra-high bandwidth memory placed adjacent to the processor cores, right on the silicon die.

This design delivers high performance and new levels of efficiency, whether running today’s most popular models, such as BERT and EfficientNet, or exploring next-generation AI applications.
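To make the SIMD versus MIMD distinction concrete, here is a purely conceptual toy in plain Python. It is not IPU or Poplar code, and the helper names `simd_step` and `mimd_step` are invented for this sketch. Under those assumptions, it shows one instruction applied to every data lane, compared with independent workers that each run their own program on their own data.

```python
from concurrent.futures import ThreadPoolExecutor


def simd_step(op, lanes):
    """SIMD-style: a single operation is applied to every data lane."""
    return [op(x) for x in lanes]


def mimd_step(programs, lanes):
    """MIMD-style: each worker runs its own program on its own data.

    Threads stand in for independent processor cores here; this is only
    an analogy and says nothing about real hardware behaviour.
    """
    with ThreadPoolExecutor(max_workers=len(lanes)) as pool:
        futures = [pool.submit(prog, x) for prog, x in zip(programs, lanes)]
        return [f.result() for f in futures]


data = [1, 2, 3, 4]

# Same instruction (double) on all four lanes.
simd_out = simd_step(lambda x: x * 2, data)

# A different instruction stream per lane.
mimd_out = mimd_step(
    [lambda x: x * 2, lambda x: x + 10, lambda x: x ** 2, lambda x: -x],
    data,
)

print(simd_out)
print(mimd_out)
```

In the SIMD case every lane must perform the same work, while in the MIMD case each lane is free to branch and compute independently, which is the property the text attributes to the IPU's design.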

Software plays a vital role in unlocking the IPU’s capabilities. Our Poplar SDK has been co-designed with the processor since Graphcore’s inception. Today it fully integrates with standard machine learning frameworks, including PyTorch and TensorFlow, as well as orchestration and deployment tools such as Docker and Kubernetes.

Making Poplar compatible with these widely used, third-party systems allows developers to easily port their models from other compute platforms and start taking advantage of the IPU’s advanced AI capabilities.

Optimising Transformers for Production

Transformers have completely transformed (pun intended) the field of AI. Models such as BERT are widely used by Graphcore customers in a huge array of applications, across NLP and beyond. These multi-talented models can perform feature extraction, text generation, sentiment analysis, translation and many more functions.

Already, Hugging Face plays host to hundreds of Transformers, from the French-language CamemBERT to ViT, which applies lessons learned in NLP to computer vision. The Transformers library is downloaded an average of 2 million times every month and demand is growing.

With a user base of more than 50,000 developers, Hugging Face has seen the fastest ever adoption of an open-source project.

Now, with its Hardware Partner Program, Hugging Face is connecting the ultimate Transformer toolset with today’s most advanced AI hardware.

Using Optimum, a new open-source library and toolkit, developers will be able to access hardware-optimized models certified by Hugging Face.

These are being developed in a collaboration between Graphcore and Hugging Face, with the first IPU-optimized models appearing on Optimum later this year. Ultimately, these will cover a wide range of applications, from vision and speech to translation and text generation.

Hugging Face CEO Clément Delangue said: “Developers all want access to the latest and greatest hardware, like the Graphcore IPU, but there’s always that question of whether they’ll have to learn new code or processes. With Optimum and the Hugging Face Hardware Program, that’s just not an issue. It’s essentially plug-and-play”.

SOTA Models meet SOTA Hardware

Prior to the announcement of the Hugging Face Partnership, we had demonstrated the power of the IPU to accelerate state-of-the-art Transformer models with a special Graphcore-optimised implementation of Hugging Face BERT using PyTorch.

Full details of this example can be found in the Graphcore blog BERT-Large training on the IPU explained.

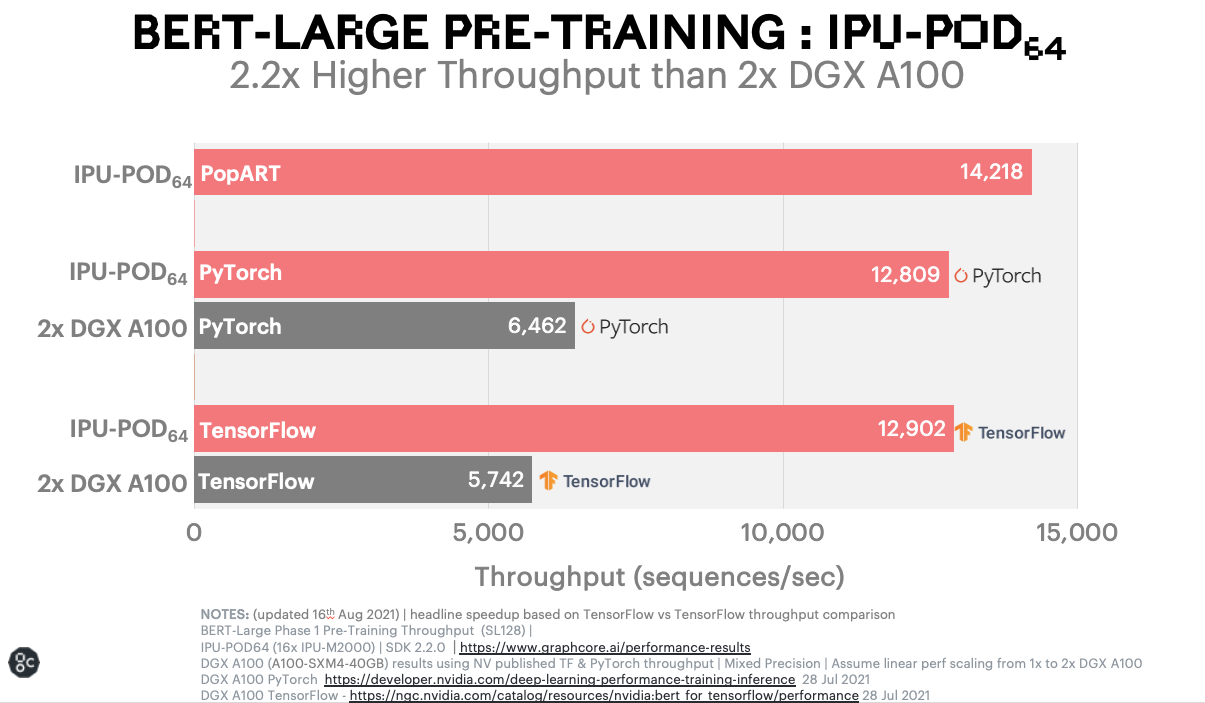

The dramatic benchmark results for BERT running on a Graphcore system, compared with a comparable GPU-based system, are surely a tantalising prospect for anyone currently running the popular NLP model on something other than the IPU.

This type of acceleration can be game changing for machine learning researchers and engineers, winning them back valuable hours of training time and allowing them many more iterations when developing new models.

Now Graphcore users will be able to unlock such performance benefits through the Hugging Face platform, with its elegant simplicity and superlative range of models.

Together, Hugging Face and Graphcore are helping even more people to access the power of Transformers and accelerate the AI revolution.

Visit the Hugging Face Hardware Partner portal to learn more about Graphcore IPU systems and how to gain access.