This guide applies only to Sentence Transformers versions before v3.0. Read Training and Finetuning Embedding Models with Sentence Transformers v3 for an updated guide.

Try this tutorial with the Notebook Companion:

Training or fine-tuning a Sentence Transformers model depends heavily on the available data and the target task. The key is twofold:

- Understand how to input data into the model and prepare your dataset accordingly.

- Know the different loss functions and how they relate to the dataset.

In this tutorial, you will:

- Understand how Sentence Transformers models work by creating one from “scratch” or fine-tuning one from the Hugging Face Hub.

- Learn the different formats your dataset could have.

- Review the different loss functions you can choose based on your dataset format.

- Train or fine-tune your model.

- Share your model to the Hugging Face Hub.

- Learn when Sentence Transformers models may not be the best choice.

How Sentence Transformers models work

In a Sentence Transformer model, you map a variable-length text (or image pixels) to a fixed-size embedding representing that input’s meaning. To get started with embeddings, check out our previous tutorial. This post focuses on text.

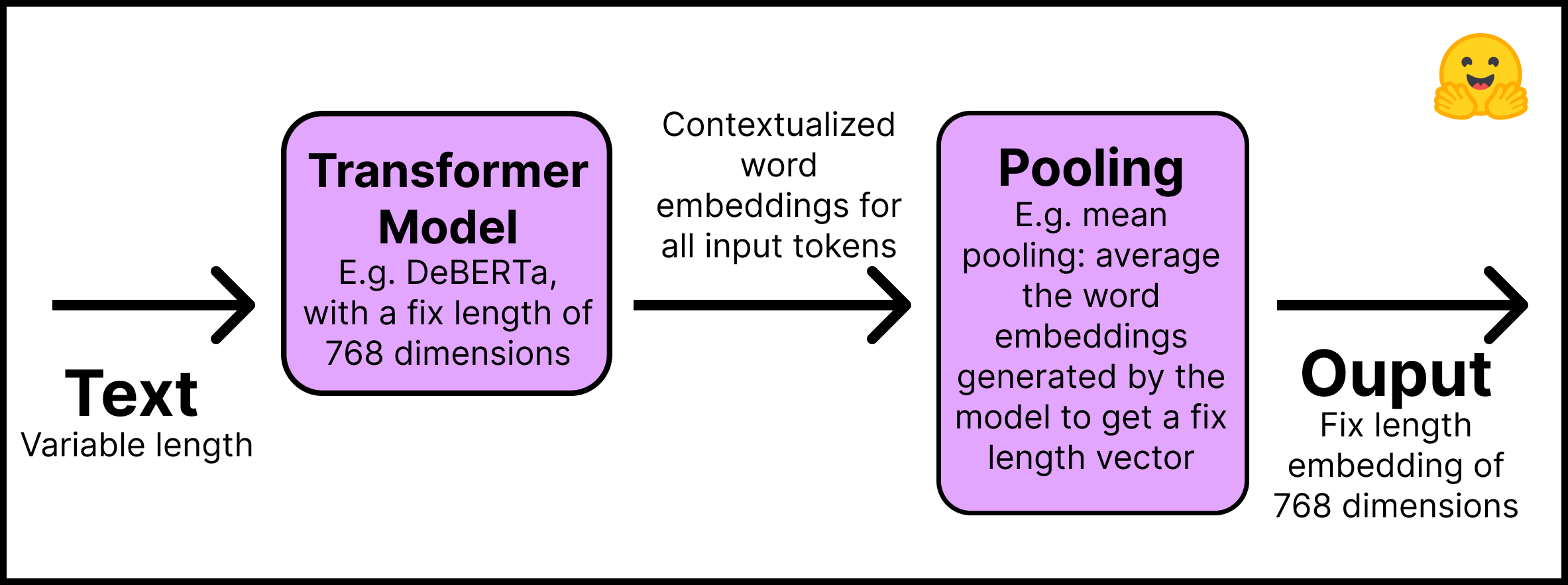

This is how Sentence Transformers models work:

- Layer 1 – The input text is passed through a pre-trained Transformer model that can be obtained directly from the Hugging Face Hub. This tutorial will use the “distilroberta-base” model. The Transformer outputs are contextualized word embeddings for all input tokens; imagine an embedding for each token of the text.

- Layer 2 – The embeddings go through a pooling layer to get a single fixed-length embedding for the whole text. For example, mean pooling averages the embeddings generated by the model.

This figure summarizes the method:

Remember to install the Sentence Transformers library with pip install -U sentence-transformers. In code, this two-step process is simple:

from sentence_transformers import SentenceTransformer, models

word_embedding_model = models.Transformer('distilroberta-base')

pooling_model = models.Pooling(word_embedding_model.get_word_embedding_dimension())

model = SentenceTransformer(modules=[word_embedding_model, pooling_model])

From the code above, you can see that Sentence Transformers models are made up of modules, that is, a list of layers that are executed consecutively. The input text enters the first module, and the final output comes from the last component. As simple as it looks, the above model is a typical architecture for Sentence Transformers models. If necessary, additional layers can be added, for example, dense, bag of words, and convolutional.
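To make the pooling step more concrete, here is a minimal pure-Python sketch of mean pooling. It is not the library's implementation: the `mean_pool` helper, the attention mask used to skip padding tokens, and the toy vectors are assumptions made for illustration.

```python
from typing import List


def mean_pool(token_embeddings: List[List[float]], attention_mask: List[int]) -> List[float]:
    """Average the token embeddings whose mask entry is 1 (real tokens, not padding)."""
    dim = len(token_embeddings[0])
    n_real = sum(attention_mask)
    if n_real == 0:
        raise ValueError("attention_mask selects no tokens")
    pooled = [0.0] * dim
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            for j, value in enumerate(vector):
                pooled[j] += value
    return [total / n_real for total in pooled]


# Toy input: three token embeddings of dimension 2; the last one is padding.
tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]
sentence_embedding = mean_pool(tokens, mask)
print(sentence_embedding)  # [2.0, 3.0]
```

Whatever the number of tokens, the output always has the same dimension as a single token embedding, which is what makes the sentence embedding fixed-size.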

Why not use a Transformer model, like BERT or RoBERTa, out of the box to create embeddings for entire sentences and texts? There are at least two reasons.

- Pre-trained Transformers require heavy computation to perform semantic search tasks. For example, finding the most similar pair in a collection of 10,000 sentences requires about 50 million inference computations (~65 hours) with BERT. In contrast, a BERT Sentence Transformers model reduces the time to about 5 seconds.

- Once trained, Transformers create poor sentence representations out of the box. A BERT model with its token embeddings averaged to create a sentence embedding performs worse than the GloVe embeddings developed in 2014.
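The figure of about 50 million in the first reason can be checked with a little arithmetic. The sketch below assumes that plain BERT has to process every unordered pair of sentences together, while a sentence embedding model encodes each sentence once and then compares the resulting vectors.

```python
import math

n_sentences = 10_000

# Scoring every unordered pair jointly: n * (n - 1) / 2 model passes.
n_pairs = math.comb(n_sentences, 2)
print(n_pairs)  # 49995000, i.e. about 50 million

# Encoding each sentence once: n model passes, followed by cheap vector comparisons.
n_encodings = n_sentences
print(n_pairs // n_encodings)  # 4999
```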

In this section we are creating a Sentence Transformers model from scratch. If you want to fine-tune an existing Sentence Transformers model, you can skip the steps above and import it from the Hugging Face Hub. You can find most of the Sentence Transformers models in the “Sentence Similarity” task. Here we load the “sentence-transformers/all-MiniLM-L6-v2” model:

from sentence_transformers import SentenceTransformer

model_id = "sentence-transformers/all-MiniLM-L6-v2"

model = SentenceTransformer(model_id)

Now for the most critical part: the dataset format.

How to prepare your dataset for training a Sentence Transformers model

To train a Sentence Transformers model, you need to inform it somehow that two sentences have a certain degree of similarity. Therefore, each example in the data requires a label or structure that allows the model to understand whether two sentences are similar or different.

Unfortunately, there is no single way to prepare your data to train a Sentence Transformers model. It largely depends on your goals and the structure of your data. If you don’t have an explicit label, which is the most likely scenario, you can derive it from the design of the documents where you obtained the sentences. For example, two sentences in the same report should be more comparable than two sentences in different reports. Neighboring sentences are likely to be more comparable than non-neighboring sentences.
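As a rough sketch of deriving structure from documents, the snippet below pairs each sentence with the next sentence in the same report. The `neighboring_pairs` helper and the two toy reports are made up for illustration; real data would need sentence splitting and filtering first.

```python
from typing import Dict, List, Tuple


def neighboring_pairs(reports: Dict[str, List[str]]) -> List[Tuple[str, str]]:
    """Pair each sentence with the sentence that follows it in the same report."""
    pairs = []
    for sentences in reports.values():
        for first, second in zip(sentences, sentences[1:]):
            pairs.append((first, second))
    return pairs


# Toy reports (invented); sentences from different reports are never paired.
reports = {
    "report_a": [
        "Revenue grew this quarter.",
        "Growth came mostly from Europe.",
        "Costs stayed flat.",
    ],
    "report_b": [
        "The patch fixes a memory leak.",
        "It also updates the docs.",
    ],
}
pairs = neighboring_pairs(reports)
print(len(pairs))  # 3
```

Pairs built this way carry no explicit label; they are simply treated as positive (similar) pairs.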

Furthermore, the structure of your data will influence which loss function you can use. This will be discussed in the next section.

Remember that the Notebook Companion for this post has all the code already implemented.

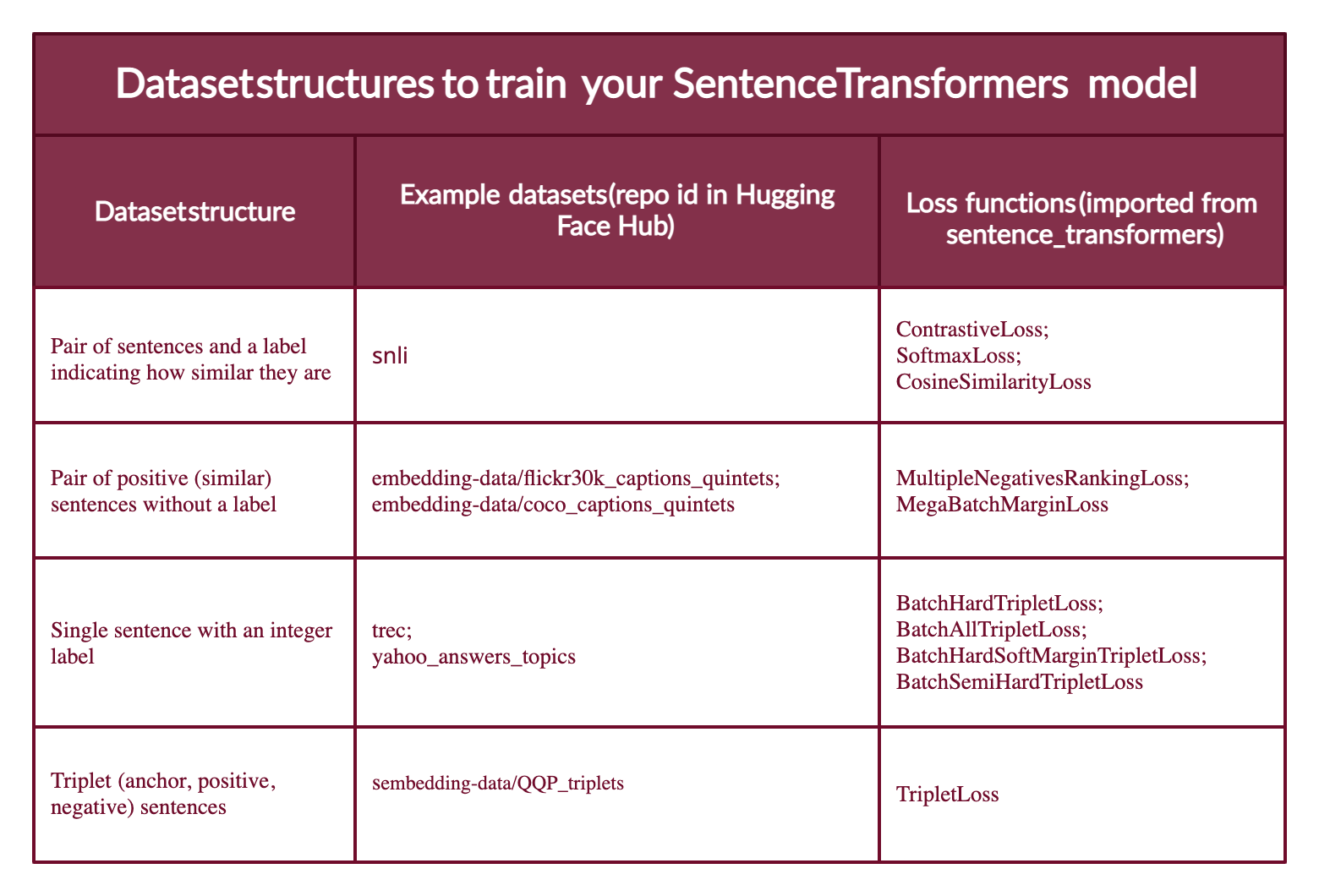

Most dataset configurations will take one of four forms (below you will see examples of each case):

- Case 1: The example is a pair of sentences and a label indicating how similar they are. The label can be either an integer or a float. This case applies to datasets originally prepared for Natural Language Inference (NLI), since they contain pairs of sentences with a label indicating whether they infer each other or not.

- Case 2: The example is a pair of positive (similar) sentences without a label. For example, pairs of paraphrases, pairs of full texts and their summaries, pairs of duplicate questions, pairs of (query, response), or pairs of (source_language, target_language). Natural Language Inference datasets can also be formatted this way by pairing entailing sentences. Having your data in this format can be great since you can use the MultipleNegativesRankingLoss, one of the most used loss functions for Sentence Transformers models.

- Case 3: The example is a sentence with an integer label. This data format is easily converted by loss functions into three sentences (triplets) where the first is an “anchor”, the second a “positive” of the same class as the anchor, and the third a “negative” of a different class. Each sentence has an integer label indicating the class to which it belongs.

- Case 4: The example is a triplet (anchor, positive, negative) without classes or labels for the sentences.
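The four cases can be pictured as plain Python structures. The sketch below is only an illustration: the `texts` and `label` keys mirror the idea of a list of sentences plus an optional label, the sentences are invented, and `dataset_case` is a hypothetical helper, not part of any library.

```python
def dataset_case(example: dict) -> int:
    """Return which of the four cases an example matches."""
    n_texts = len(example["texts"])
    has_label = "label" in example
    if n_texts == 2 and has_label:
        return 1  # pair of sentences with a similarity label
    if n_texts == 2:
        return 2  # positive pair without a label
    if n_texts == 1 and has_label:
        return 3  # single sentence with an integer class label
    if n_texts == 3 and not has_label:
        return 4  # (anchor, positive, negative) triplet without labels
    raise ValueError("example does not match any of the four cases")


# Invented toy examples, one per case.
case_1 = {"texts": ["A man is eating.", "A person eats food."], "label": 0.9}
case_2 = {"texts": ["How do I reset my password?", "Steps to reset a forgotten password"]}
case_3 = {"texts": ["What is the capital of France?"], "label": 3}
case_4 = {"texts": ["Is coffee healthy?", "Is drinking coffee good for you?", "How is tea made?"]}

print([dataset_case(e) for e in (case_1, case_2, case_3, case_4)])  # [1, 2, 3, 4]
```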

For example, in this tutorial you will train a Sentence Transformer using a dataset in the fourth case. You will then fine-tune it using the second case dataset configuration (please refer to the Notebook Companion for this blog).

Note that Sentence Transformers models can be trained with human labeling (cases 1 and 3) or with labels automatically deduced from text formatting (mainly case 2; although case 4 does not require labels, it is more difficult to find data in a triplet unless you process it as the MegaBatchMarginLoss function does).

There are datasets on the Hugging Face Hub for each of the above cases. Additionally, the datasets in the Hub have a Dataset Preview functionality that allows you to view the structure of datasets before downloading them. Here are sample datasets for each of these cases:

- Case 1: The same setup as for Natural Language Inference can be used if you have (or fabricate) a label indicating the degree of similarity between two sentences; for example {0,1,2} where 0 is contradiction and 2 is entailment. Review the structure of the SNLI dataset.

- Case 2: The Sentence Compression dataset has examples made up of positive pairs. If your dataset has more than two positive sentences per example, for example quintets as in the COCO Captions or the Flickr30k Captions datasets, you can format the examples so as to have different combinations of positive pairs.

- Case 3: The TREC dataset has integer labels indicating the class of each sentence. Each example in the Yahoo Answers Topics dataset contains three sentences and a label indicating its topic; thus, each example can be divided into three.

- Case 4: The Quora Triplets dataset has triplets (anchor, positive, negative) without labels.
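For the quintets mentioned in Case 2, one way to obtain positive pairs is to take every combination of two captions from the same example. This is a minimal sketch with invented captions; how the notebook or the datasets themselves handle this is not shown here.

```python
from itertools import combinations

# Five invented captions that describe the same image.
captions = [
    "A dog runs on the beach.",
    "A dog is running along the shore.",
    "A brown dog sprints across the sand.",
    "A pet plays near the ocean.",
    "A dog runs beside the waves.",
]

# Every unordered pair of captions becomes one positive pair.
positive_pairs = list(combinations(captions, 2))
print(len(positive_pairs))  # 10
```

Each quintet therefore yields ten positive pairs, so a dataset of quintets grows tenfold when reformatted this way.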

The next step is converting the dataset into a format the Sentence Transformers model can understand. The model cannot accept raw lists of strings. Each example must be converted to a sentence_transformers.InputExample class and then to a torch.utils.data.DataLoader class to batch and shuffle the examples.

Install Hugging Face Datasets with pip install datasets. Then import a dataset with the load_dataset function:

from datasets import load_dataset

dataset_id = "embedding-data/QQP_triplets"

dataset = load_dataset(dataset_id)

This guide uses an unlabeled triplets dataset, the fourth case above.

With the datasets library you can explore the dataset:

print(f"- The {dataset_id} dataset has {dataset['train'].num_rows} examples.")

print(f"- Each example is a {type(dataset['train'][0])} with a {type(dataset['train'][0]['set'])} as value.")

print(f"- Examples look like this: {dataset['train'][0]}")

Output:

- The embedding-data/QQP_triplets dataset has 101762 examples.

- Each example is a <class 'dict'> with a <class 'dict'> as value.

- Examples look like this: {'set': {'query': 'Why in India do we not have one on one political debate as in USA?', 'pos': ["Why can't we have a public debate between politicians in India like the one in US?"], 'neg': ['Can people on Quora stop India Pakistan debate? We are sick and tired seeing this everyday in bulk?'...]

You can see that query (the anchor) has a single sentence, pos (positive) is a list of sentences (the one we print has only one sentence), and neg (negative) has a list of multiple sentences.

Convert the examples into InputExample objects. For simplicity, (1) only one of the positives and one of the negatives in the embedding-data/QQP_triplets dataset will be used. (2) We will only use half of the available examples. You can obtain much better results by increasing the number of examples.

from sentence_transformers import InputExample

train_examples = []

train_data = dataset['train']['set']

n_examples = dataset['train'].num_rows // 2

for i in range(n_examples):

    example = train_data[i]

    train_examples.append(InputExample(texts=[example['query'], example['pos'][0], example['neg'][0]]))

Convert the training examples to a DataLoader.

from torch.utils.data import DataLoader

train_dataloader = DataLoader(train_examples, shuffle=True, batch_size=16)

The next step is to choose a suitable loss function that can be used with the data format.

Loss functions for training a Sentence Transformers model

Remember the four different formats your data could be in? Each will have a different loss function associated with it.

Case 1: Pair of sentences and a label indicating how similar they are. The loss function optimizes such that (1) the sentences with the closest labels are near in the vector space, and (2) the sentences with the farthest labels are as far as possible. The loss function depends on the format of the label. If it is an integer, use ContrastiveLoss or SoftmaxLoss; if it is a float, you can use CosineSimilarityLoss.
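To illustrate the float-label variant of Case 1, here is a minimal sketch of the idea behind a cosine similarity loss: the mean squared error between the cosine similarity of each pair and its label. It is a pure-Python approximation written for this post, not the library's code, and the two-dimensional vectors are toy values.

```python
import math
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def cosine_similarity(u: Vector, v: Vector) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def cosine_similarity_loss(pairs: List[Tuple[Vector, Vector]], labels: List[float]) -> float:
    """Mean squared error between predicted cosine similarity and the float label."""
    errors = [(cosine_similarity(u, v) - label) ** 2 for (u, v), label in zip(pairs, labels)]
    return sum(errors) / len(errors)


# First pair is identical (similarity 1), second pair is orthogonal (similarity 0).
pairs = [([1.0, 0.0], [1.0, 0.0]), ([1.0, 0.0], [0.0, 1.0])]
print(cosine_similarity_loss(pairs, [1.0, 0.0]))  # 0.0, labels agree with the embeddings
print(cosine_similarity_loss(pairs, [0.0, 1.0]))  # 1.0, labels contradict the embeddings
```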

Case 2: If you only have two similar sentences (two positives) with no labels, then you can use the MultipleNegativesRankingLoss function. The MegaBatchMarginLoss can also be used, and it would convert your examples to triplets (anchor_i, positive_i, positive_j) where positive_j serves as the negative.
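The intuition behind a multiple-negatives ranking loss can be sketched as follows: within a batch, each anchor should score its own positive higher than the positives of all other anchors, which act as negatives. The code below is a simplified pure-Python illustration, not the library's implementation; the cross-entropy formulation over scaled cosine similarities and the `scale=20.0` value are assumptions chosen for the example.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def cosine_similarity(u: Vector, v: Vector) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def multiple_negatives_ranking_loss(
    anchors: List[Vector], positives: List[Vector], scale: float = 20.0
) -> float:
    """Cross-entropy where positives[i] is the correct 'class' for anchors[i]."""
    losses = []
    for i, anchor in enumerate(anchors):
        scores = [scale * cosine_similarity(anchor, p) for p in positives]
        # Numerically stable log-sum-exp of the scores.
        max_score = max(scores)
        log_sum_exp = max_score + math.log(sum(math.exp(s - max_score) for s in scores))
        losses.append(log_sum_exp - scores[i])
    return sum(losses) / len(losses)


anchors = [[1.0, 0.0], [0.0, 1.0]]
aligned = multiple_negatives_ranking_loss(anchors, [[1.0, 0.0], [0.0, 1.0]])
swapped = multiple_negatives_ranking_loss(anchors, [[0.0, 1.0], [1.0, 0.0]])
print(aligned < swapped)  # True: matching positives give a much lower loss
```

Because the other positives in the batch serve as negatives, only positive pairs need to be collected.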

Case 3: When your samples are triplets of the form [anchor, positive, negative] and you have an integer label for each, a loss function optimizes the model so that the anchor and positive sentences are closer together in vector space than the anchor and negative sentences. You can use BatchHardTripletLoss, which requires the data to be labeled with integers (e.g., labels 1, 2, 3) assuming that samples with the same label are similar. Therefore, anchors and positives must have the same label, while negatives must have a different one. Alternatively, you can use BatchAllTripletLoss, BatchHardSoftMarginTripletLoss, or BatchSemiHardTripletLoss. The differences between them are beyond the scope of this tutorial, but can be reviewed in the Sentence Transformers documentation.
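To show how integer labels can be turned into triplets, here is a rough sketch of batch-hard mining: for every anchor, pick the farthest sample with the same label as the positive and the closest sample with a different label as the negative. This is an illustration only; the Euclidean distance, the `margin=1.0` value, and the choice to skip anchors without a valid positive or negative are assumptions, not the library's exact behavior.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def euclidean(u: Vector, v: Vector) -> float:
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def batch_hard_triplet_loss(embeddings: List[Vector], labels: List[int], margin: float = 1.0) -> float:
    """Average hinge loss over anchors, using the hardest positive and negative in the batch."""
    losses = []
    for i, (anchor, label) in enumerate(zip(embeddings, labels)):
        positive_distances = [
            euclidean(anchor, other)
            for j, (other, other_label) in enumerate(zip(embeddings, labels))
            if other_label == label and j != i
        ]
        negative_distances = [
            euclidean(anchor, other)
            for other, other_label in zip(embeddings, labels)
            if other_label != label
        ]
        if not positive_distances or not negative_distances:
            continue  # no valid triplet for this anchor
        hardest_positive = max(positive_distances)
        hardest_negative = min(negative_distances)
        losses.append(max(hardest_positive - hardest_negative + margin, 0.0))
    return sum(losses) / len(losses) if losses else 0.0


labels = [1, 1, 2, 2]
separated = batch_hard_triplet_loss([[0.0, 0.0], [0.0, 1.0], [5.0, 0.0], [5.0, 1.0]], labels)
mixed = batch_hard_triplet_loss([[0.0, 0.0], [4.0, 0.0], [1.0, 0.0], [5.0, 0.0]], labels)
print(separated, mixed)  # 0.0 4.0
```

When the classes are well separated the loss is zero; when samples of different classes sit closer together than samples of the same class, the loss grows.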

Case 4: If you don’t have a label for each sentence in the triplets, you should use TripletLoss. This loss minimizes the distance between the anchor and the positive sentences while maximizing the distance between the anchor and the negative sentences.
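The idea behind a triplet loss can be written in a few lines: the loss is zero once the negative is farther from the anchor than the positive by at least a margin. The sketch below uses Euclidean distance and `margin=1.0` as illustrative assumptions; it is not the library's implementation.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def euclidean(u: Vector, v: Vector) -> float:
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_loss(anchor: Vector, positive: Vector, negative: Vector, margin: float = 1.0) -> float:
    """max(d(anchor, positive) - d(anchor, negative) + margin, 0)"""
    return max(euclidean(anchor, positive) - euclidean(anchor, negative) + margin, 0.0)


anchor = [0.0, 0.0]
near = [0.0, 1.0]  # distance 1 from the anchor
far = [3.0, 4.0]   # distance 5 from the anchor

print(triplet_loss(anchor, positive=near, negative=far))  # 0.0, already well separated
print(triplet_loss(anchor, positive=far, negative=near))  # 5.0, the positive is farther than the negative
```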

This figure summarizes the different types of dataset formats, example datasets in the Hub, and their adequate loss functions.

The hardest part is choosing a suitable loss function conceptually. In the code, there are only two lines:

from sentence_transformers import losses

train_loss = losses.TripletLoss(model=model)

Once the dataset is in the desired format and a suitable loss function is in place, fitting and training a Sentence Transformers model is simple.

How to train or fine-tune a Sentence Transformer model

“SentenceTransformers was designed so that fine-tuning your own sentence/text embeddings models is easy. It provides most of the building blocks you can stick together to tune embeddings for your specific task.” – Sentence Transformers Documentation.

This is what the training or fine-tuning looks like:

model.fit(train_objectives=[(train_dataloader, train_loss)], epochs=10)

Remember that if you are fine-tuning an existing Sentence Transformers model (see Notebook Companion), you can directly call the fit method from it. If this is a new Sentence Transformers model, you must first define it as you did in the “How Sentence Transformers models work” section.

That’s it; you have a new or improved Sentence Transformers model! Do you want to share it to the Hugging Face Hub?

First, log in to the Hugging Face Hub. You’ll need to create a write token in your Account Settings. Then there are two options to log in:

- Type huggingface-cli login in your terminal and enter your token.

- If in a Python notebook, you can use notebook_login.

from huggingface_hub import notebook_login

notebook_login()

Then, you can share your models by calling the save_to_hub method from the trained model. By default, the model will be uploaded to your account. Still, you can upload to an organization by passing it in the organization parameter. save_to_hub automatically generates a model card, an inference widget, example code snippets, and more details. You can automatically add to the Hub’s model card a list of datasets you used to train the model with the argument train_datasets:

model.save_to_hub(

    "distilroberta-base-sentence-transformer",

    organization="your-username-or-organization",  # placeholder: replace with your own

    train_datasets=["embedding-data/QQP_triplets"],

)

In the Notebook Companion I fine-tuned this same model using the embedding-data/sentence-compression dataset and the MultipleNegativesRankingLoss loss.

What are the limits of Sentence Transformers?

Sentence Transformers models work much better than the simple Transformers models for semantic search. However, where do the Sentence Transformers models not work well? If your task is classification, then using sentence embeddings is the wrong approach. In that case, the 🤗 Transformers library would be a better choice.


Thanks for reading! Happy embedding making.