Transformer-based models in language, vision and speech are getting larger to support complex multi-modal use cases for the end customer. Increasing model sizes directly impact the resources needed to train these models and to scale them as the size increases. Hugging Face and Microsoft’s ONNX Runtime teams are working together to build advancements in finetuning large Language, Speech and Vision models. Hugging Face’s Optimum library, through its integration with ONNX Runtime for training, provides an open solution to improve training times by 35% or more for many popular Hugging Face models. We present details of both Hugging Face Optimum and the ONNX Runtime Training ecosystem, with performance numbers highlighting the benefits of using the Optimum library.

Performance results

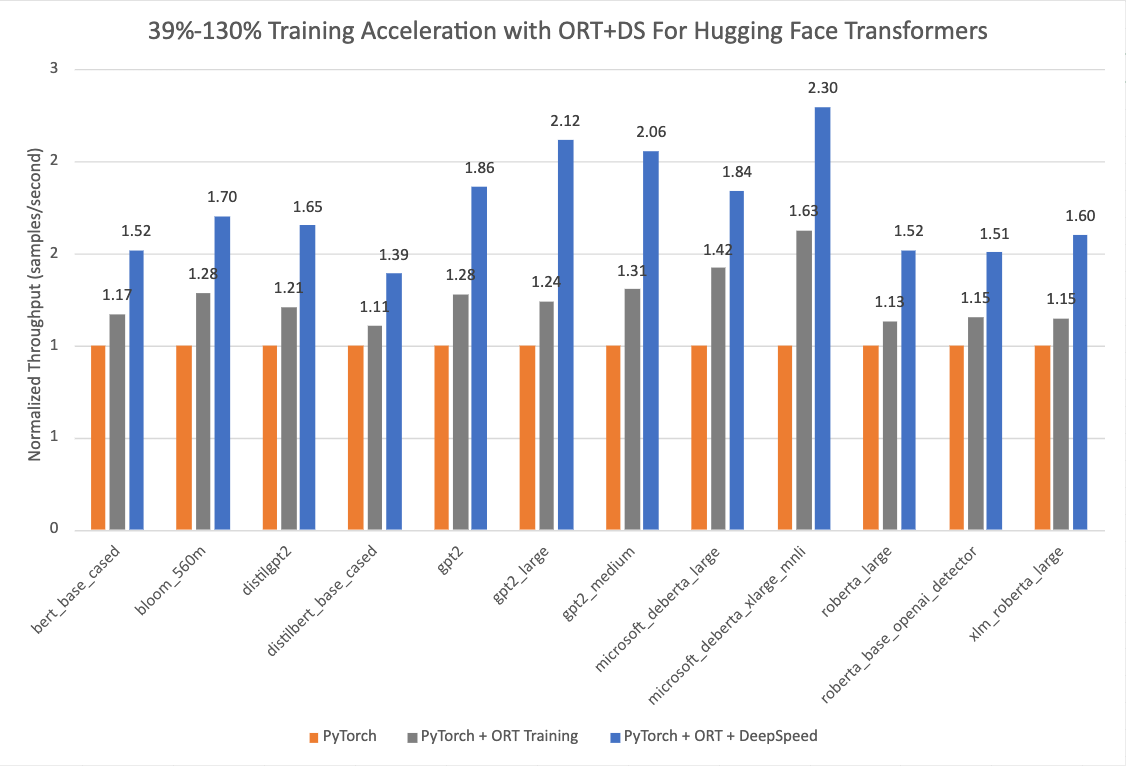

The chart shows impressive acceleration from 39% to 130% for Hugging Face models with Optimum when using ONNX Runtime and DeepSpeed ZeRO Stage 1 for training. The performance measurements were done on selected Hugging Face models with PyTorch as the baseline run, ONNX Runtime alone for training as the second run, and ONNX Runtime + DeepSpeed ZeRO Stage 1 as the final run, which shows the maximum gains. The baseline PyTorch runs use the AdamW optimizer, and the ORT Training runs use the Fused Adam optimizer. The runs were performed on a single NVIDIA A100 node with 8 GPUs.

Additional details on the configuration settings to turn on Optimum for training acceleration can be found here. The version information used for these runs is as follows:

PyTorch: 1.14.0.dev20221103+cu116; ORT: 1.14.0.dev20221103001+cu116; DeepSpeed: 0.6.6; HuggingFace: 4.24.0.dev0; Optimum: 1.4.1.dev0; Cuda: 11.6.2

Optimum Library

Hugging Face is a fast-growing open community and platform aiming to democratize good machine learning. Following the success of the Transformers library, we extended modalities from NLP to audio and vision, and now cover use cases across Machine Learning to meet our community’s needs. The Hugging Face Hub now hosts more than 120K free and accessible model checkpoints for various machine learning tasks, 18K datasets, and 20K ML demo apps. However, scaling transformer models into production is still a challenge for the industry. Despite their high accuracy, training and inference of transformer-based models can be time-consuming and expensive.

To address these needs, Hugging Face built two open-sourced libraries: Accelerate and Optimum. While 🤗 Accelerate focuses on out-of-the-box distributed training, 🤗 Optimum, as an extension of Transformers, accelerates model training and inference by leveraging the maximum efficiency of users’ targeted hardware. Optimum integrates machine learning accelerators like ONNX Runtime and specialized hardware like Intel’s Habana Gaudi, so users can benefit from considerable speedup in both training and inference. Optimum also integrates seamlessly with other Hugging Face tools while inheriting the same ease of use as Transformers. Developers can easily adapt their work to achieve lower latency with less computing power.

ONNX Runtime Training

ONNX Runtime accelerates large model training, speeding up throughput by up to 40% standalone and by up to 130% when composed with DeepSpeed, for popular Hugging Face transformer-based models. ONNX Runtime is already integrated as part of Optimum and enables faster training through Hugging Face’s Optimum training framework.

ONNX Runtime Training achieves such throughput improvements via several memory and compute optimizations. The memory optimizations enable ONNX Runtime to maximize the batch size and use the available memory efficiently, whereas the compute optimizations speed up the training time. These optimizations include, but are not limited to, efficient memory planning, kernel optimizations, multi tensor apply for the Adam optimizer (which batches the elementwise updates applied to all of the model’s parameters into one or a few kernel launches), an FP16 optimizer (which eliminates many device-to-host memory copies), mixed-precision training, and graph optimizations like node fusions and node eliminations. ONNX Runtime Training supports both NVIDIA and AMD GPUs, and offers extensibility with custom operators.
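To make the multi tensor apply idea concrete, here is a minimal pure-Python sketch. It is an illustration only and not ONNX Runtime’s implementation: the `launch` function is a hypothetical stand-in for a single GPU kernel launch, flattening the tensors stands in for handing a list of tensors to one kernel, and the parameter values are made up.

```python
import math

stats = {"launches": 0}  # counts simulated kernel launches


def adam_update(p, g, m, v, lr=1e-3, b1=0.9, b2=0.999, eps=1e-8, step=1):
    """Adam update for a single element; returns (param, m, v)."""
    m = b1 * m + (1 - b1) * g
    v = b2 * v + (1 - b2) * g * g
    m_hat = m / (1 - b1 ** step)
    v_hat = v / (1 - b2 ** step)
    return p - lr * m_hat / (math.sqrt(v_hat) + eps), m, v


def launch(params, grads, ms, vs):
    """Hypothetical stand-in for one kernel launch over a flat buffer."""
    stats["launches"] += 1
    return [adam_update(p, g, m, v) for p, g, m, v in zip(params, grads, ms, vs)]


def per_tensor_step(tensors, grads, ms, vs):
    """Naive optimizer step: one launch per parameter tensor."""
    return [launch(t, g, m, v) for t, g, m, v in zip(tensors, grads, ms, vs)]


def multi_tensor_step(tensors, grads, ms, vs):
    """Batched step: gather every tensor, launch once, split the result back."""
    def flatten(xs):
        return [x for t in xs for x in t]

    out = launch(flatten(tensors), flatten(grads), flatten(ms), flatten(vs))
    result, start = [], 0
    for t in tensors:
        result.append(out[start:start + len(t)])
        start += len(t)
    return result


# Three made-up "parameter tensors" of different sizes.
tensors = [[0.5, -0.2], [1.0], [0.1, 0.3, -0.7]]
grads = [[0.1, 0.2], [-0.3], [0.05, -0.1, 0.4]]
zeros = [[0.0] * len(t) for t in tensors]

stats["launches"] = 0
naive = per_tensor_step(tensors, grads, zeros, zeros)
naive_launches = stats["launches"]

stats["launches"] = 0
batched = multi_tensor_step(tensors, grads, zeros, zeros)
batched_launches = stats["launches"]

print(naive_launches, batched_launches, naive == batched)
```

Both paths compute identical updates; the only difference is the number of simulated launches, which grows with the number of parameter tensors in the naive path and stays constant in the batched one.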

In short, it empowers AI developers to take full advantage of the ecosystem they are familiar with, like PyTorch and Hugging Face, and to use acceleration from ONNX Runtime on the target device of their choice to save both time and resources.

ONNX Runtime Training in Optimum

Optimum provides an ORTTrainer API that extends the Trainer in Transformers to use ONNX Runtime as the backend for acceleration. ORTTrainer is an easy-to-use API containing a feature-complete training loop and evaluation loop. It supports features like hyperparameter search, mixed-precision training and distributed training with multiple GPUs. ORTTrainer enables AI developers to compose ONNX Runtime and other third-party acceleration techniques when training Transformers models, which helps speed up the training further and gets the best out of the hardware. For example, developers can combine ONNX Runtime Training with distributed data parallel and mixed-precision training integrated in Transformers’ Trainer. In addition, ORTTrainer makes it easy to compose ONNX Runtime Training with DeepSpeed ZeRO-1, which saves memory by partitioning the optimizer states. After the pre-training or the fine-tuning is done, developers can either save the trained PyTorch model or convert it to the ONNX format with APIs that Optimum implemented for ONNX Runtime to ease the deployment for inference. And just like Trainer, ORTTrainer has full integration with the Hugging Face Hub: after the training, users can upload their model checkpoints to their Hugging Face Hub account.
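The memory saving from partitioning optimizer states can be estimated with simple arithmetic. The sketch below assumes the accounting commonly used for mixed-precision Adam in the ZeRO paper: 2 bytes per parameter for FP16 weights, 2 for FP16 gradients, and 12 for the FP32 optimizer states. These byte counts and the 1.5B-parameter model size are assumptions for illustration, not measurements from the runs in this post, and activations and temporary buffers are ignored.

```python
def model_state_bytes_per_gpu(num_params, num_gpus, zero_stage_1):
    """Estimate per-GPU memory for model states under mixed-precision Adam."""
    fp16_weights = 2 * num_params
    fp16_grads = 2 * num_params
    # FP32 master weights + Adam momentum + Adam variance: 4 + 4 + 4 bytes.
    optimizer_states = 12 * num_params
    if zero_stage_1:
        # ZeRO-1 shards only the optimizer states across data-parallel GPUs.
        optimizer_states = optimizer_states / num_gpus
    return fp16_weights + fp16_grads + optimizer_states


GB = 1e9
num_params = 1_500_000_000  # hypothetical model size
num_gpus = 8                # one node with 8 GPUs

baseline_gb = model_state_bytes_per_gpu(num_params, num_gpus, False) / GB
zero1_gb = model_state_bytes_per_gpu(num_params, num_gpus, True) / GB
print(f"without ZeRO-1: {baseline_gb:.2f} GB, with ZeRO-1: {zero1_gb:.2f} GB")
```

Under these assumptions the model states shrink from 24 GB to 8.25 GB per GPU, which leaves more room for larger batch sizes.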

So concretely, what should users do with Optimum to take advantage of the ONNX Runtime acceleration for training? If you are already using Trainer, you only need to adapt a few lines of code to benefit from all the improvements mentioned above. There are mainly two replacements to apply. First, replace Trainer with ORTTrainer; then replace TrainingArguments with ORTTrainingArguments, which contains all the hyperparameters the trainer will use for training and evaluation. ORTTrainingArguments extends TrainingArguments with some extra arguments enabled by ONNX Runtime. For example, users can apply the Fused Adam optimizer for an extra performance gain. Here is an example:

-from transformers import Trainer, TrainingArguments
+from optimum.onnxruntime import ORTTrainer, ORTTrainingArguments

# Step 1: Define training arguments
-training_args = TrainingArguments(
+training_args = ORTTrainingArguments(
    output_dir="path/to/save/folder/",
-    optim = "adamw_hf",
+    optim = "adamw_ort_fused",
    ...
)

# Step 2: Create your ONNX Runtime Trainer
-trainer = Trainer(
+trainer = ORTTrainer(
    model=model,
    args=training_args,
    train_dataset=train_dataset,
+    feature="sequence-classification",
    ...
)

# Step 3: Use ONNX Runtime for training!🤗
trainer.train()
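Composing ONNX Runtime Training with DeepSpeed ZeRO-1, as described earlier, additionally requires a DeepSpeed configuration. The following is a minimal sketch of such a configuration. It assumes that the `deepspeed` argument accepted by `TrainingArguments` is also honored by `ORTTrainingArguments`, since the latter extends the former. The file name `ds_config_zero1.json` is a hypothetical choice, and the settings are illustrative rather than the ones used for the benchmark runs.

```python
import json

# Minimal DeepSpeed config enabling ZeRO Stage 1 (optimizer state partitioning).
# "auto" lets the Hugging Face Trainer integration fill in values from the
# training arguments instead of duplicating them here.
ds_config = {
    "zero_optimization": {"stage": 1},
    "fp16": {"enabled": "auto"},
    "train_micro_batch_size_per_gpu": "auto",
    "gradient_accumulation_steps": "auto",
}

config_path = "ds_config_zero1.json"  # hypothetical file name
with open(config_path, "w") as f:
    json.dump(ds_config, f, indent=2)

# Assumed usage, relying on the argument inherited from TrainingArguments:
# training_args = ORTTrainingArguments(
#     output_dir="path/to/save/folder/",
#     optim="adamw_ort_fused",
#     deepspeed=config_path,
# )
```

Keeping the batch size and precision settings as "auto" avoids mismatches between the DeepSpeed file and the training arguments.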

Looking Forward

The Hugging Face team is working on open sourcing more large models and lowering the barrier for users to benefit from them with acceleration tools for both training and inference. We are collaborating with the ONNX Runtime training team to bring more training optimizations to newer and larger model architectures, including Whisper and Stable Diffusion. Microsoft has also packaged its state-of-the-art training acceleration technologies in the Azure Container for PyTorch. This is a lightweight curated environment including DeepSpeed and ONNX Runtime to improve productivity for AI developers training with PyTorch. In addition to large model training, the ONNX Runtime training team is also building new solutions for learning on the edge: training on devices that are constrained on memory and power.

Getting Started

We invite you to check out the links below to learn more about, and get started with, Optimum ONNX Runtime Training for your Hugging Face models.

🏎 Thanks for reading! If you have any questions, feel free to reach us through GitHub or on the forum. You can also connect with me on Twitter or LinkedIn.