BigCode is releasing StarCoder2, the next generation of transparently trained open code LLMs. All StarCoder2 variants were trained on The Stack v2, a new large, high-quality code dataset. We release all models and datasets, as well as the processing and training code. Take a look at the paper for details.

What’s StarCoder2?

StarCoder2 is a family of open LLMs for code, available in three sizes: 3B, 7B, and 15B parameters. The flagship StarCoder2-15B model is trained on over 4 trillion tokens and 600+ programming languages from The Stack v2. All models use Grouped Query Attention and a context window of 16,384 tokens with sliding window attention of 4,096 tokens, and all were trained using the Fill-in-the-Middle objective.
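To make the context numbers and the Fill-in-the-Middle objective more concrete, here is a minimal sketch in plain Python. It rests on two assumptions that are not stated in this post: that the sliding window is causal and counts the current token (so each token sees itself plus the 4,095 tokens before it), and that the sentinel strings `<fim_prefix>`, `<fim_suffix>`, and `<fim_middle>` match the tokenizer's special tokens. The function names `visible_span` and `build_fim_prompt` are hypothetical helpers, not part of any library; check the model card before relying on the exact token strings.

```python
from typing import Tuple

CONTEXT_WINDOW = 16_384  # maximum sequence length, as stated in the post
SLIDING_WINDOW = 4_096   # sliding window attention size, as stated in the post


def visible_span(position: int, window: int = SLIDING_WINDOW) -> Tuple[int, int]:
    """Return the inclusive (first, last) token indices visible from `position`.

    Assumption: attention is causal and the window includes the current
    token, so a token attends to itself and the `window - 1` tokens before it.
    """
    if not 0 <= position < CONTEXT_WINDOW:
        raise ValueError(f"position must be in [0, {CONTEXT_WINDOW})")
    first = max(0, position - window + 1)
    return first, position


def build_fim_prompt(
    prefix: str,
    suffix: str,
    prefix_token: str = "<fim_prefix>",   # assumed sentinel string
    suffix_token: str = "<fim_suffix>",   # assumed sentinel string
    middle_token: str = "<fim_middle>",   # assumed sentinel string
) -> str:
    """Arrange the code before and after a gap in prefix-suffix-middle order.

    A model trained with a Fill-in-the-Middle objective is then expected to
    generate the missing middle after the final sentinel.
    """
    return f"{prefix_token}{prefix}{suffix_token}{suffix}{middle_token}"


if __name__ == "__main__":
    # The last token of a full-length sequence sees only the final 4,096 tokens.
    print(visible_span(CONTEXT_WINDOW - 1))
    # Ask for the body of a function whose signature and return are known.
    print(build_fim_prompt("def add(a, b):\n    ", "\n    return result\n"))
```

Under these assumptions, a token can still be influenced by text outside its window indirectly, because information propagates through stacked attention layers; the sketch only shows direct visibility within a single layer.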

StarCoder2 offers three model sizes: a 3 billion-parameter model trained by ServiceNow, a 7 billion-parameter model trained by Hugging Face, and a 15 billion-parameter model trained by NVIDIA using NVIDIA NeMo on NVIDIA accelerated infrastructure:

- StarCoder2-3B was trained on 17 programming languages from The Stack v2 on 3+ trillion tokens.

- StarCoder2-7B was trained on 17 programming languages from The Stack v2 on 3.5+ trillion tokens.

- StarCoder2-15B was trained on 600+ programming languages from The Stack v2 on 4+ trillion tokens.

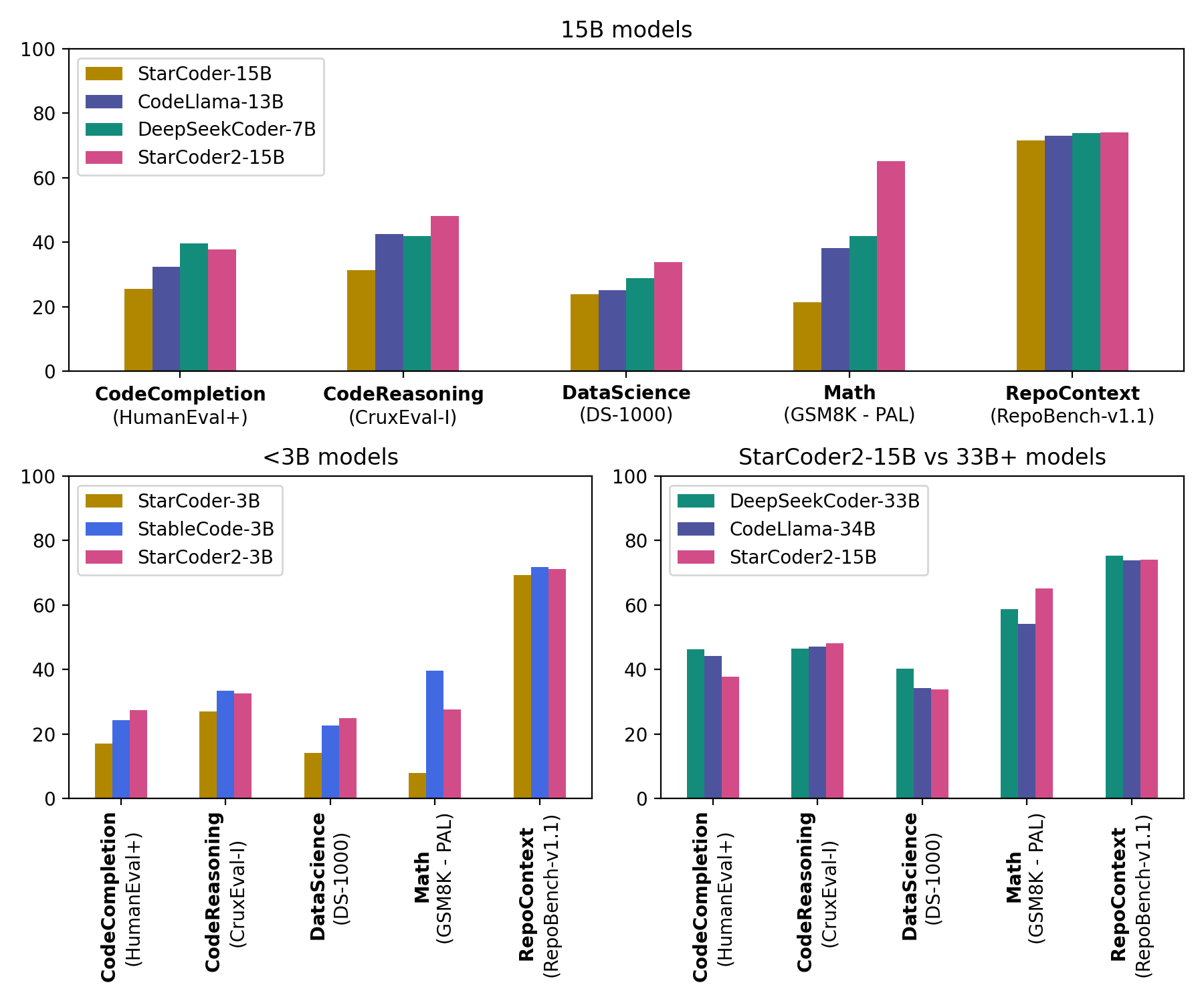

StarCoder2-15B is the best in its size class and matches 33B+ models on many evaluations. StarCoder2-3B matches the performance of StarCoder1-15B.

What’s The Stack v2?

The Stack v2 is the largest open code dataset suitable for LLM pretraining. The Stack v2 is larger than The Stack v1, follows an improved language and license detection procedure, and uses better filtering heuristics. In addition, the training dataset is grouped by repositories, which makes it possible to train models with repository context.
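As a rough illustration of what grouping by repository can mean for training data, the sketch below collects file records per repository and joins them into a single sample so that files from the same project share one context. Everything in it is an assumption made for illustration: the record fields (`repo`, `path`, `content`), the `<file_sep>` separator string, the path-based ordering, and the helper names `group_by_repo` and `build_repo_sample`. It is not the actual processing pipeline used for The Stack v2.

```python
from collections import defaultdict
from typing import Dict, Iterable, List

FILE_SEP = "<file_sep>"  # hypothetical separator between files in a sample

FileRecord = Dict[str, str]  # assumed keys: "repo", "path", "content"


def group_by_repo(files: Iterable[FileRecord]) -> Dict[str, List[FileRecord]]:
    """Bucket file records by repository, sorting each bucket by path."""
    grouped: Dict[str, List[FileRecord]] = defaultdict(list)
    for record in files:
        grouped[record["repo"]].append(record)
    # Sort by path so the resulting sample is deterministic.
    return {
        repo: sorted(records, key=lambda r: r["path"])
        for repo, records in grouped.items()
    }


def build_repo_sample(repo: str, records: List[FileRecord]) -> str:
    """Concatenate all files of one repository into a single training sample."""
    parts = [f"{FILE_SEP}{r['path']}\n{r['content']}" for r in records]
    return repo + "".join(parts)


if __name__ == "__main__":
    files = [
        {"repo": "acme/tools", "path": "utils.py", "content": "def helper(): ..."},
        {"repo": "acme/tools", "path": "main.py", "content": "from utils import helper"},
        {"repo": "other/lib", "path": "lib.py", "content": "VALUE = 1"},
    ]
    for repo, records in group_by_repo(files).items():
        print(build_repo_sample(repo, records))
        print("---")
```

The point of the design is that a model trained on such samples can see `utils.py` while predicting code in `main.py`, which is not possible when every file is treated as an isolated document.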

This dataset is derived from the Software Heritage archive, the largest public archive of software source code and accompanying development history. Software Heritage, launched by Inria in partnership with UNESCO, is an open, non-profit initiative to collect, preserve, and share the source code of all publicly available software. We are grateful to Software Heritage for providing access to this invaluable resource. For more details, visit the Software Heritage website.

The Stack v2 can be accessed through the Hugging Face Hub.

About BigCode

BigCode is an open scientific collaboration led jointly by Hugging Face and ServiceNow that works on the responsible development of large language models for code.

Links

Models

Data & Governance

Others

You’ll find all of the resources and links at huggingface.co/bigcode!